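FlinkKafkaProducer extends TwoPhaseCommitSinkFunction, which implements the two-phase commit protocol. For the theory behind two-phase commit, see "An Overview of End-to-End Exactly-Once Processing in Apache Flink"; this article does not repeat it. Instead, it walks through the FlinkKafkaProducer source code to show how two-phase commit is implemented and how, combined with Kafka, it achieves end-to-end exactly-once semantics.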

Analysis of TwoPhaseCommitSinkFunction

    public abstract class TwoPhaseCommitSinkFunction<IN, TXN, CONTEXT>
            extends RichSinkFunction<IN>
            implements CheckpointedFunction, CheckpointListener

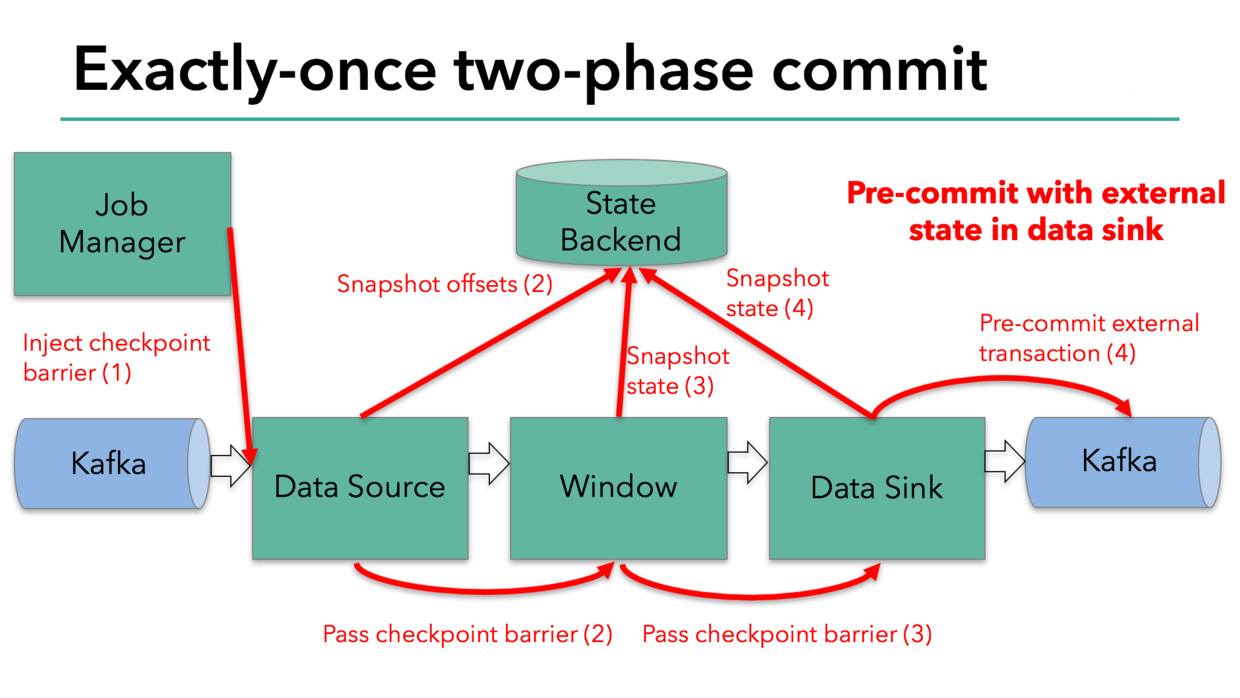

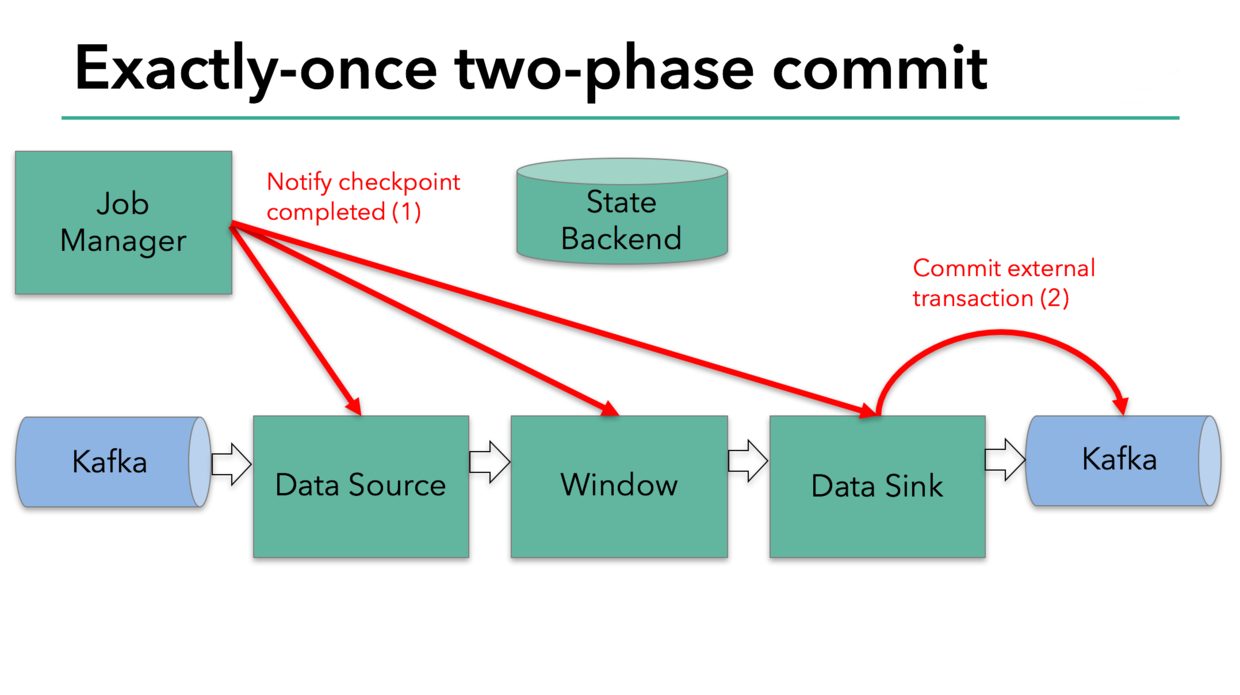

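TwoPhaseCommitSinkFunction implements the CheckpointedFunction and CheckpointListener interfaces. The first transaction is opened in initializeState. For a Flink sink, the first phase of the two-phase commit is CheckpointedFunction#snapshotState. Once the checkpoint has completed on all tasks, each task runs CheckpointListener#notifyCheckpointComplete, which is the second phase.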
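The order of these hooks can be illustrated with a small in-memory sketch. It is not Flink code: the class TwoPhaseSketch, its Txn type, and the visible list are hypothetical stand-ins, and for brevity it commits only the transaction of the exact checkpoint that was notified.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical in-memory stand-in for a transactional sink; not Flink code. */
final class TwoPhaseSketch {

    /** A toy transaction that only buffers records. */
    static final class Txn {
        final List<String> records = new ArrayList<>();
    }

    private Txn current;                                        // transaction receiving new records
    private final Map<Long, Txn> pending = new LinkedHashMap<>(); // pre-committed, keyed by checkpoint id
    final List<String> visible = new ArrayList<>();             // what a committed-only reader would see

    /** Opens the first transaction, mirroring what initializeState does. */
    void initializeState() {
        current = new Txn();
    }

    /** Writes a record into the currently open transaction. */
    void invoke(String record) {
        current.records.add(record);
    }

    /** Phase one: remember the current transaction and open a new one. */
    void snapshotState(long checkpointId) {
        pending.put(checkpointId, current);
        current = new Txn();
    }

    /** Phase two: commit the transaction remembered for this checkpoint. */
    void notifyCheckpointComplete(long checkpointId) {
        Txn txn = pending.remove(checkpointId);
        if (txn != null) {
            visible.addAll(txn.records);
        }
    }

    public static void main(String[] args) {
        TwoPhaseSketch sink = new TwoPhaseSketch();
        sink.initializeState();
        sink.invoke("a");
        sink.invoke("b");
        sink.snapshotState(1L);
        System.out.println(sink.visible); // [] because nothing is committed yet
        sink.notifyCheckpointComplete(1L);
        System.out.println(sink.visible); // [a, b]
    }
}
```

Records written after snapshotState go into the new transaction, so they stay invisible until a later checkpoint completes.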

Phase one in FlinkKafkaProducer

    @Override
    public void snapshotState(FunctionSnapshotContext context) throws Exception {
        // this is like the pre-commit of a 2-phase-commit transaction
        // we are ready to commit and remember the transaction

        checkState(currentTransactionHolder != null, "bug: no transaction object when performing state snapshot");

        long checkpointId = context.getCheckpointId();
        LOG.debug("{} - checkpoint {} triggered, flushing transaction '{}'", name(), context.getCheckpointId(), currentTransactionHolder);

        preCommit(currentTransactionHolder.handle);
        pendingCommitTransactions.put(checkpointId, currentTransactionHolder);
        LOG.debug("{} - stored pending transactions {}", name(), pendingCommitTransactions);

        currentTransactionHolder = beginTransactionInternal();
        LOG.debug("{} - started new transaction '{}'", name(), currentTransactionHolder);

        state.clear();
        state.add(new State<>(
            this.currentTransactionHolder,
            new ArrayList<>(pendingCommitTransactions.values()),
            userContext));
    }

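The key steps in this code are as follows.

First, preCommit is called. In EXACTLY_ONCE mode it calls flush, which immediately sends the buffered data to the target topic. A consumer reading that topic at this point must set isolation.level to read_committed so that the consuming application cannot see messages that belong to uncommitted transactions.

    @Override
    protected void preCommit(FlinkKafkaProducer.KafkaTransactionState transaction) throws FlinkKafkaException {
        switch (semantic) {
            case EXACTLY_ONCE:
            case AT_LEAST_ONCE:
                flush(transaction);
                break;
            case NONE:
                break;
            default:
                throw new UnsupportedOperationException("Not implemented semantic");
        }
        checkErroneous();
    }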
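On the consuming side, the read_committed setting mentioned above is an ordinary Kafka consumer property. The following is a minimal sketch using only java.util.Properties; the class name ReadCommittedConfig, the broker address, and the group id are placeholders chosen for illustration.

```java
import java.util.Properties;

/** Builds consumer properties that hide records from uncommitted transactions. */
final class ReadCommittedConfig {

    static Properties consumerProperties(String bootstrapServers, String groupId) {
        Properties props = new Properties();
        props.setProperty("bootstrap.servers", bootstrapServers);
        props.setProperty("group.id", groupId);
        // Kafka's default is read_uncommitted, which would also expose
        // records that were flushed in phase one but never committed.
        props.setProperty("isolation.level", "read_committed");
        return props;
    }

    public static void main(String[] args) {
        // "localhost:9092" and "demo-group" are placeholder values.
        Properties props = consumerProperties("localhost:9092", "demo-group");
        System.out.println(props.getProperty("isolation.level")); // read_committed
    }
}
```

These properties would then be passed to whichever Kafka consumer reads the sink topic.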

Note that the first send and flush calls run inside the transaction that was opened in initializeState:

    transaction.producer.send(record, callback);

    transaction.producer.flush();

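Second, pendingCommitTransactions stores the transaction that belongs to each checkpoint, and a new producer transaction is created for the next checkpoint via currentTransactionHolder = beginTransactionInternal();. Subsequent send and flush calls run inside this new transaction. In other words, in phase one every checkpoint gets its own transaction, and that transaction is kept in pendingCommitTransactions.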

Phase two in FlinkKafkaProducer

Once the checkpoint has completed on all tasks, the second phase, the commit, begins:

    @Override
    public final void notifyCheckpointComplete(long checkpointId) throws Exception {
        // the following scenarios are possible here
        //
        //  (1) there is exactly one transaction from the latest checkpoint that
        //      was triggered and completed. That should be the common case.
        //      Simply commit that transaction in that case.
        //
        //  (2) there are multiple pending transactions because one previous
        //      checkpoint was skipped. That is a rare case, but can happen
        //      for example when:
        //
        //        - the master cannot persist the metadata of the last
        //          checkpoint (temporary outage in the storage system) but
        //          could persist a successive checkpoint (the one notified here)
        //
        //        - other tasks could not persist their status during
        //          the previous checkpoint, but did not trigger a failure because they
        //          could hold onto their state and could successfully persist it in
        //          a successive checkpoint (the one notified here)
        //
        //      In both cases, the prior checkpoint never reach a committed state, but
        //      this checkpoint is always expected to subsume the prior one and cover all
        //      changes since the last successful one. As a consequence, we need to commit
        //      all pending transactions.
        //
        //  (3) Multiple transactions are pending, but the checkpoint complete notification
        //      relates not to the latest. That is possible, because notification messages
        //      can be delayed (in an extreme case till arrive after a succeeding checkpoint
        //      was triggered) and because there can be concurrent overlapping checkpoints
        //      (a new one is started before the previous fully finished).
        //
        // ==> There should never be a case where we have no pending transaction here
        //

        Iterator<Map.Entry<Long, TransactionHolder<TXN>>> pendingTransactionIterator =
            pendingCommitTransactions.entrySet().iterator();
        checkState(pendingTransactionIterator.hasNext(), "checkpoint completed, but no transaction pending");
        Throwable firstError = null;

        while (pendingTransactionIterator.hasNext()) {
            Map.Entry<Long, TransactionHolder<TXN>> entry = pendingTransactionIterator.next();
            Long pendingTransactionCheckpointId = entry.getKey();
            TransactionHolder<TXN> pendingTransaction = entry.getValue();
            if (pendingTransactionCheckpointId > checkpointId) {
                continue;
            }

            LOG.info("{} - checkpoint {} complete, committing transaction {} from checkpoint {}",
                name(), checkpointId, pendingTransaction, pendingTransactionCheckpointId);

            logWarningIfTimeoutAlmostReached(pendingTransaction);
            try {
                commit(pendingTransaction.handle);
            } catch (Throwable t) {
                if (firstError == null) {
                    firstError = t;
                }
            }

            LOG.debug("{} - committed checkpoint transaction {}", name(), pendingTransaction);

            pendingTransactionIterator.remove();
        }

        if (firstError != null) {
            throw new FlinkRuntimeException(
                "Committing one of transactions failed, logging first encountered failure",
                firstError);
        }
    }

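This phase commits every transaction in pendingCommitTransactions whose checkpoint ID is not greater than the ID of the checkpoint being notified: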

    @Override
    protected void commit(FlinkKafkaProducer.KafkaTransactionState transaction) {
        if (transaction.isTransactional()) {
            try {
                transaction.producer.commitTransaction();
            } finally {
                recycleTransactionalProducer(transaction.producer);
            }
        }
    }

At this point consumers using read_committed can see the data, and the producer-side flow is complete.
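The selection rule inside notifyCheckpointComplete can be isolated in a few lines of plain Java. This is a sketch, not the Flink implementation: PendingCommitSketch and commitUpTo are hypothetical names, transactions are represented by strings, and "committing" only means collecting them into a list.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Toy version of the pending-transaction loop; names are hypothetical. */
final class PendingCommitSketch {

    /**
     * Removes and returns every pending transaction whose checkpoint id is
     * less than or equal to the notified checkpoint id. Later ones stay pending.
     */
    static List<String> commitUpTo(Map<Long, String> pending, long checkpointId) {
        List<String> committed = new ArrayList<>();
        Iterator<Map.Entry<Long, String>> it = pending.entrySet().iterator();
        while (it.hasNext()) {
            Map.Entry<Long, String> entry = it.next();
            if (entry.getKey() > checkpointId) {
                continue; // belongs to a later checkpoint, keep it pending
            }
            committed.add(entry.getValue()); // stand-in for commit(handle)
            it.remove();
        }
        return committed;
    }

    public static void main(String[] args) {
        Map<Long, String> pending = new LinkedHashMap<>();
        pending.put(1L, "txn-1");
        pending.put(2L, "txn-2");
        pending.put(3L, "txn-3");

        // Checkpoint 1 was skipped, checkpoint 2 is notified as complete.
        System.out.println(commitUpTo(pending, 2L)); // [txn-1, txn-2]
        System.out.println(pending);                 // {3=txn-3}
    }
}
```

The example corresponds to scenarios (2) and (3) in the source comment: the notification for checkpoint 2 also commits the transaction of the skipped checkpoint 1, while the transaction of the still-running checkpoint 3 is left alone.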

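Exactly-once analysis

When both the source and the sink are Kafka, Flink can provide end-to-end exactly-once semantics mainly because, in phase one, FlinkKafkaConsumer saves its consumed offsets as part of the checkpoint, and only after the checkpoint has succeeded on all tasks does FlinkKafkaProducer commit its transaction in phase two, which ends the producer flow. This process relies heavily on Kafka's producer transaction mechanism; for background, see Kafka transactions.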
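To see why tying the offsets and the transaction to the same checkpoint avoids duplicates, consider a toy single-task pipeline. Everything in this sketch is hypothetical and heavily simplified: it treats "checkpoint completed" and "transaction committed" as one atomic step, and it models a failure only before the checkpoint completes, in which case the offset is rolled back and the uncommitted output is discarded.

```java
import java.util.ArrayList;
import java.util.List;

/** Toy single-task pipeline; nothing here is Flink or Kafka API. */
final class ExactlyOnceSketch {
    private final List<String> input;                    // stand-in for the source topic
    final List<String> committed = new ArrayList<>();    // what a read_committed consumer sees
    private List<String> openTxn = new ArrayList<>();    // records in the current transaction
    private List<String> preCommitted;                   // flushed, waiting for checkpoint completion
    private int offset;                                  // next input position to read
    private int pendingOffset;                           // offset captured by the in-flight checkpoint
    private int checkpointedOffset;                      // offset of the last completed checkpoint

    ExactlyOnceSketch(List<String> input) {
        this.input = input;
    }

    /** Reads up to count records and writes them into the open transaction. */
    void process(int count) {
        for (int i = 0; i < count && offset < input.size(); i++) {
            openTxn.add(input.get(offset++));
        }
    }

    /** Phase one: snapshot the offset and pre-commit the open transaction. */
    void snapshot() {
        pendingOffset = offset;
        preCommitted = openTxn;
        openTxn = new ArrayList<>();
    }

    /** Phase two: the checkpoint completed, so the pre-committed data is committed. */
    void checkpointComplete() {
        if (preCommitted == null) {
            throw new IllegalStateException("no pre-committed transaction");
        }
        checkpointedOffset = pendingOffset;
        committed.addAll(preCommitted);
        preCommitted = null;
    }

    /** Failure before completion: restore the offset and abort uncommitted output. */
    void failAndRecover() {
        offset = checkpointedOffset;
        preCommitted = null;
        openTxn = new ArrayList<>();
    }

    public static void main(String[] args) {
        ExactlyOnceSketch job = new ExactlyOnceSketch(List.of("a", "b", "c", "d"));
        job.process(2);
        job.snapshot();
        job.checkpointComplete();   // a, b committed
        job.process(2);
        job.snapshot();
        job.failAndRecover();       // c, d were flushed but never committed
        job.process(2);             // c, d are re-read from the restored offset
        job.snapshot();
        job.checkpointComplete();
        System.out.println(job.committed); // [a, b, c, d]
    }
}
```

Although c and d are processed twice, the first attempt is never committed, so a consumer that reads only committed data sees each record once.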

Summary

This article analyzed how Flink, combined with Kafka, achieves exactly-once semantics.

Note: this article is based on Flink 1.9.0 and Kafka 2.3.