System configuration

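Number of machines: 3

Operating system: CentOS 7.3 to 8.1

Set the hostnames of the three machines to master, node1 and node2 respectively.

Every machine must be able to resolve these names, for example through /etc/hosts:

    192.168.81.30 master
    192.168.81.31 node1
    192.168.81.32 node2

Disable the firewall at boot:

    # systemctl disable firewalld

This only keeps firewalld from starting at the next boot; run systemctl stop firewalld as well to stop it in the current session.

Disable SELinux permanently:

    # sed -i --follow-symlinks 's/^SELINUX=.*/SELINUX=disabled/' /etc/sysconfig/selinux

The pattern replaces whichever mode is currently set (enforcing or permissive). /etc/sysconfig/selinux is normally a symlink to /etc/selinux/config, hence --follow-symlinks. Afterwards the file should contain the line below; the change takes effect after a reboot.

    SELINUX=disabled

Turn off swap:

Kubernetes 1.8 and later require swap to be turned off. If it is left on, kubelet will not start with its default configuration. Edit /etc/fstab to comment out the automatic swap mount, run swapoff -a to turn swap off in the running system, and use free -m to confirm that swap is off.

    [root@master /]# sed -i 's/.*swap.*/#&/' /etc/fstab
    [root@master /]# swapoff -a
    [root@master /]# free -m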
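The sed pattern above comments out every line that mentions "swap", including lines that are already commented. A slightly stricter variant is sketched here, assuming a standard fstab layout where the third field is the filesystem type. The function names comment_swap_entries and swap_state are made up for this example.

```shell
# Comment out every active swap entry in an fstab-style file.
# Usage: comment_swap_entries FILE   (FILE is edited in place)
comment_swap_entries() {
    file=$1
    tmp=$(mktemp) || return 1
    # Match uncommented lines whose third field (filesystem type) is "swap";
    # lines that are already commented are left untouched.
    awk '!/^[ \t]*#/ && $3 == "swap" { print "#" $0; next } { print }' "$file" > "$tmp" &&
        cat "$tmp" > "$file"
    status=$?
    rm -f "$tmp"
    return $status
}

# Read /proc/swaps-style input on stdin and print "on" or "off".
swap_state() {
    # The first line is a header; any further non-empty line is an active swap area.
    awk 'NR > 1 && NF > 0 { found = 1 } END { print (found ? "on" : "off") }'
}

# Example (as root, on each machine):
#   comment_swap_entries /etc/fstab && swapoff -a
#   swap_state < /proc/swaps
```

Trying the function on a copy of /etc/fstab first is a cheap way to see what it would change.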

Base software installation

Install docker:

    # yum install -y yum-utils device-mapper-persistent-data lvm2 && \
      yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo && \
      yum makecache && \
      yum install -y docker-ce && \
      systemctl enable docker.service && \
      systemctl start docker

Install kubeadm and kubelet on all machines.

Configure the aliyun yum repository:

    # cat <<EOF > /etc/yum.repos.d/kubernetes.repo
    [kubernetes]
    name=Kubernetes
    baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
    enabled=1
    gpgcheck=1
    repo_gpgcheck=1
    gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
    EOF

Install the latest kubeadm:

    # yum makecache
    # yum install -y kubelet kubeadm kubectl ipvsadm

Because the official site does not offer a way to sync the repository, the GPG check of the mirror's index may fail. In that case use:

    # yum install -y --nogpgcheck kubelet kubeadm kubectl

Note: to install a specific version of kubeadm instead (v1.17.2 does not work with the version yum installs by default):

    # yum install kubelet-1.16.0-0.x86_64 kubeadm-1.16.0-0.x86_64 kubectl-1.16.0-0.x86_64

Configure the kernel parameters. The net.bridge.* keys only exist once the br_netfilter module is loaded, so load the module before applying the settings:

    # cat <<EOF > /etc/sysctl.d/k8s.conf
    net.bridge.bridge-nf-call-ip6tables = 1
    net.bridge.bridge-nf-call-iptables = 1
    vm.swappiness=0
    EOF
    # modprobe br_netfilter
    # sysctl --system
    # sysctl -p /etc/sysctl.d/k8s.conf

Load the ipvs kernel modules. They have to be loaded again after every reboot (the commands can be added to /etc/rc.local so that they run automatically at boot):

    # modprobe ip_vs
    # modprobe ip_vs_rr
    # modprobe ip_vs_wrr
    # modprobe ip_vs_sh
    # modprobe nf_conntrack_ipv4

Check that the modules are loaded:

    # lsmod | grep ip_vs
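As an alternative to /etc/rc.local, systemd can load modules at boot from files under /etc/modules-load.d. The sketch below is not part of the steps above: it prints such a file and reports which of the required modules are still missing from lsmod output. The file name k8s.conf and the function names are assumptions, and on kernels where nf_conntrack_ipv4 has been merged into nf_conntrack (upstream 4.19 and later) the list needs adjusting.

```shell
# Kernel modules loaded by hand in the text above.
# Assumption: nf_conntrack_ipv4 still exists on the target kernel;
# where it does not, use nf_conntrack instead.
REQUIRED_MODULES="br_netfilter ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack_ipv4"

# Print the contents of a modules-load.d file, one module per line.
modules_load_conf() {
    for mod in $REQUIRED_MODULES; do
        printf '%s\n' "$mod"
    done
}

# Read `lsmod` output on stdin and print each required module that is absent.
missing_modules() {
    # Skip the header line and keep only the module-name column.
    loaded=$(awk 'NR > 1 { print $1 }')
    for mod in $REQUIRED_MODULES; do
        printf '%s\n' "$loaded" | grep -qxF "$mod" || printf '%s\n' "$mod"
    done
}

# Example (as root):
#   modules_load_conf > /etc/modules-load.d/k8s.conf
#   lsmod | missing_modules
```

An empty result from missing_modules means everything in the list is loaded.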

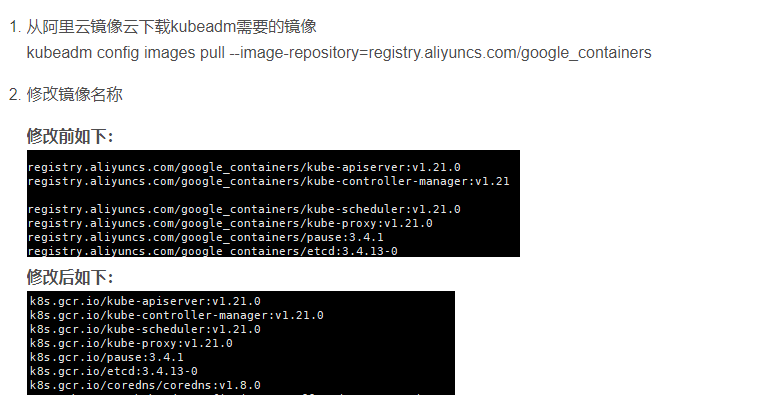

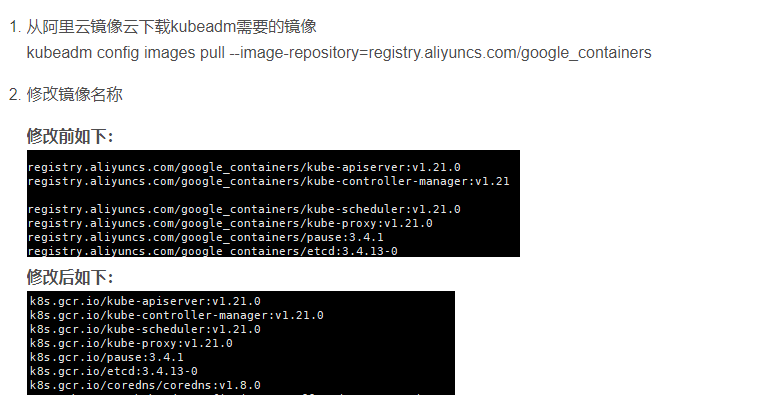

Pulling the images

All three nodes need to pull the images.

- When pulling, change the version numbers to the current official release. Even the latest release may not match what kubeadm expects; in that case pull the versions named in the error message.
- Versions change with every deployment. If the initialization fails, the error output states the versions it requires.
- The kubeadm version and the image versions must correspond to each other.

List the k8s image versions that the installed kubeadm expects:

    [root@master ~]# kubeadm config images list
    k8s.gcr.io/kube-apiserver:v1.17.2
    k8s.gcr.io/kube-controller-manager:v1.17.2
    k8s.gcr.io/kube-scheduler:v1.17.2
    k8s.gcr.io/kube-proxy:v1.17.2
    k8s.gcr.io/pause:3.1
    k8s.gcr.io/etcd:3.4.3-0
    k8s.gcr.io/coredns:1.6.5

Pull each image from the mirror and tag it with the k8s.gcr.io name:

    docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.17.2
    docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.17.2 k8s.gcr.io/kube-apiserver:v1.17.2
    docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.17.2
    docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.17.2 k8s.gcr.io/kube-controller-manager:v1.17.2
    docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.17.2
    docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.17.2 k8s.gcr.io/kube-scheduler:v1.17.2
    docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.17.2
    docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.17.2 k8s.gcr.io/kube-proxy:v1.17.2
    docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.1
    docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.1 k8s.gcr.io/pause:3.1
    docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.4.3-0
    docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.4.3-0 k8s.gcr.io/etcd:3.4.3-0
    docker pull coredns/coredns:1.6.5
    docker tag coredns/coredns:1.6.5 k8s.gcr.io/coredns:1.6.5
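Because the versions change with every release, the pull and tag pairs above can be generated from the image list instead of typed by hand. A minimal sketch, assuming the list uses the k8s.gcr.io/ prefix shown above and that the aliyun mirror carries the same image names and tags. mirror_commands is a name invented for this example.

```shell
# Mirror prefix taken from the commands above.
MIRROR="registry.cn-hangzhou.aliyuncs.com/google_containers"

# Read k8s.gcr.io image names on stdin (the format printed by
# `kubeadm config images list`) and print the matching pull and tag commands.
mirror_commands() {
    while IFS= read -r image; do
        case $image in
            k8s.gcr.io/coredns:*)
                # As in the text above, CoreDNS is pulled from Docker Hub.
                src="coredns/coredns:${image##*:}" ;;
            k8s.gcr.io/*)
                src="$MIRROR/${image#k8s.gcr.io/}" ;;
            *)
                continue ;;   # skip blank or unexpected lines
        esac
        printf 'docker pull %s\n' "$src"
        printf 'docker tag %s %s\n' "$src" "$image"
    done
}

# Example: print the commands for review first, then run them.
#   kubeadm config images list | mirror_commands
#   kubeadm config images list | mirror_commands | sh
```

The function only prints commands, so nothing is pulled until the output is piped to a shell.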

Configure and start kubelet on all nodes

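Configure kubelet to use the pause image pulled from the domestic mirror.

Get the cgroup driver that docker uses:

    # DOCKER_CGROUPS=$(docker info | grep 'Cgroup' | cut -d' ' -f4)
    # echo $DOCKER_CGROUPS
    cgroupfs 1

If the output is "cgroupfs 1" as above (newer Docker releases also print a Cgroup Version line, which the grep matches too), use this command instead:

    # DOCKER_CGROUPS=$(docker info |grep cgro|awk -F':| ' '{print $NF}')
    # echo $DOCKER_CGROUPS
    cgroupfs

Configure the cgroup driver for kubelet:

    [root@master ~]# cat >/etc/sysconfig/kubelet<<EOF
    KUBELET_EXTRA_ARGS="--cgroup-driver=$DOCKER_CGROUPS --pod-infra-container-image=k8s.gcr.io/pause:3.1"
    EOF

Start kubelet:

    # systemctl daemon-reload
    # systemctl enable kubelet && systemctl start kubelet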
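Both grep pipelines above depend on the exact layout of the docker info output. A sketch of a parser that matches the "Cgroup Driver" key by name is given here, assuming docker info prints the driver as a "Cgroup Driver: NAME" line. parse_cgroup_driver and kubelet_extra_args are hypothetical helper names.

```shell
# Read `docker info` output on stdin and print only the cgroup driver name.
parse_cgroup_driver() {
    # Split each line on ": " and require the key to be exactly "Cgroup Driver",
    # so a "Cgroup Version" line does not end up in the result.
    awk -F': *' '$1 ~ /^[ \t]*Cgroup Driver$/ { print $2; exit }'
}

# Print the KUBELET_EXTRA_ARGS line for /etc/sysconfig/kubelet.
kubelet_extra_args() {
    driver=$1
    printf 'KUBELET_EXTRA_ARGS="--cgroup-driver=%s --pod-infra-container-image=k8s.gcr.io/pause:3.1"\n' "$driver"
}

# Example (as root):
#   driver=$(docker info 2>/dev/null | parse_cgroup_driver)
#   kubelet_extra_args "$driver" > /etc/sysconfig/kubelet
```

docker info --format '{{.CgroupDriver}}' prints the same value without any text parsing, where that option is available.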

Note

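After the steps above kubelet is not actually running yet, and that is normal. Put simply, kubelet keeps restarting until kubeadm init has been run, because its configuration file /var/lib/kubelet/config.yaml is only generated during initialization.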

Initializing the cluster

Run the initialization on the master node.

If kubeadm was installed from yum without pinning a version, the initialization reports this error:

    this version of kubeadm only supports deploying clusters with the control plane version >= 1.24.0. Current version: v1.17.2

During initialization, pull and tag any additional image versions that the output asks for, for example:

    docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.3.15-0
    docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.3.15-0 k8s.gcr.io/etcd:3.3.15-0
    docker pull coredns/coredns:1.6.2
    docker tag coredns/coredns:1.6.2 k8s.gcr.io/coredns:1.6.2

Important: once the initialization completes, record the commands printed at the end of its output, as shown here:

    To start using your cluster, you need to run the following as a regular user:

      mkdir -p $HOME/.kube
      sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
      sudo chown $(id -u):$(id -g) $HOME/.kube/config

    Then you can join any number of worker nodes by running the following on each as root:

    kubeadm join 192.168.81.30:6443 --token dcg8kk.t42rp6f32p2szdda \
        --discovery-token-ca-cert-hash sha256:7b21de9e4be342bd5d971fbc9859169c7606c29d735f057a767ffcdd439bce10

The output contains these key stages:

- [kubelet-start] generates the kubelet configuration file "/var/lib/kubelet/config.yaml"
- [certs] generates the various certificates
- [kubeconfig] generates the kubeconfig files
- [bootstrap-token] generates the token; record it, because kubeadm join needs it later when adding nodes to the cluster

Configure kubectl on the master node:

    [root@master ~]# mkdir -p $HOME/.kube
    [root@master ~]# cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
    [root@master ~]# chown $(id -u):$(id -g) $HOME/.kube/config

List the nodes. The master stays NotReady until a pod network plugin has been deployed:

    [root@master ~]# kubectl get nodes
    NAME     STATUS     ROLES    AGE     VERSION
    master   NotReady   master   6m31s   v1.16.0
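The version error above comes from a mismatch between the installed kubeadm and the requested Kubernetes version. A small pre-check can catch this before running kubeadm init. This is a sketch that only compares the major.minor part and assumes nothing about which skews kubeadm actually tolerates. major_minor and same_release are names made up for this example.

```shell
# Print the "major.minor" part of a version such as v1.17.2 or 1.16.0-0.
major_minor() {
    printf '%s\n' "${1#v}" | cut -d. -f1,2
}

# Succeed only when both versions share the same major.minor.
same_release() {
    [ "$(major_minor "$1")" = "$(major_minor "$2")" ]
}

# Example: compare the installed kubeadm with the version passed to kubeadm init.
#   installed=$(kubeadm version -o short)
#   same_release "$installed" v1.17.2 ||
#       echo "kubeadm $installed does not match v1.17.2" >&2
```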

Initialization process

    [root@master ~]# kubeadm init --kubernetes-version=v1.17.2 --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=192.168.81.30 --ignore-preflight-errors=Swap
    [init] Using Kubernetes version: v1.17.2
    [preflight] Running pre-flight checks
            [WARNING KubernetesVersion]: Kubernetes version is greater than kubeadm version. Please consider to upgrade kubeadm. Kubernetes version: 1.17.2. Kubeadm version: 1.16.x
            [WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/
            [WARNING SystemVerification]: this Docker version is not on the list of validated versions: 20.10.17. Latest validated version: 18.09
    [preflight] Pulling images required for setting up a Kubernetes cluster
    [preflight] This might take a minute or two, depending on the speed of your internet connection
    [preflight] You can also perform this action in beforehand using 'kubeadm config images pull'
    [kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
    [kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
    [kubelet-start] Activating the kubelet service
    [certs] Using certificateDir folder "/etc/kubernetes/pki"
    [certs] Generating "ca" certificate and key
    [certs] Generating "apiserver" certificate and key
    [certs] apiserver serving cert is signed for DNS names [master kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.81.30]
    [certs] Generating "apiserver-kubelet-client" certificate and key
    [certs] Generating "front-proxy-ca" certificate and key
    [certs] Generating "front-proxy-client" certificate and key
    [certs] Generating "etcd/ca" certificate and key
    [certs] Generating "etcd/server" certificate and key
    [certs] etcd/server serving cert is signed for DNS names [master localhost] and IPs [192.168.81.30 127.0.0.1 ::1]
    [certs] Generating "etcd/peer" certificate and key
    [certs] etcd/peer serving cert is signed for DNS names [master localhost] and IPs [192.168.81.30 127.0.0.1 ::1]
    [certs] Generating "etcd/healthcheck-client" certificate and key
    [certs] Generating "apiserver-etcd-client" certificate and key
    [certs] Generating "sa" key and public key
    [kubeconfig] Using kubeconfig folder "/etc/kubernetes"
    [kubeconfig] Writing "admin.conf" kubeconfig file
    [kubeconfig] Writing "kubelet.conf" kubeconfig file
    [kubeconfig] Writing "controller-manager.conf" kubeconfig file
    [kubeconfig] Writing "scheduler.conf" kubeconfig file
    [control-plane] Using manifest folder "/etc/kubernetes/manifests"
    [control-plane] Creating static Pod manifest for "kube-apiserver"
    [control-plane] Creating static Pod manifest for "kube-controller-manager"
    [control-plane] Creating static Pod manifest for "kube-scheduler"
    [etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"
    [wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s
    [apiclient] All control plane components are healthy after 16.502507 seconds
    [upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace
    [kubelet] Creating a ConfigMap "kubelet-config-1.17" in namespace kube-system with the configuration for the kubelets in the cluster
    [upload-certs] Skipping phase. Please see --upload-certs
    [mark-control-plane] Marking the node master as control-plane by adding the label "node-role.kubernetes.io/master=''"
    [mark-control-plane] Marking the node master as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]
    [bootstrap-token] Using token: dcg8kk.t42rp6f32p2szdda
    [bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles
    [bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials
    [bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token
    [bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster
    [bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace
    [addons] Applied essential addon: CoreDNS
    [addons] Applied essential addon: kube-proxy

    Your Kubernetes control-plane has initialized successfully!

    To start using your cluster, you need to run the following as a regular user:

      mkdir -p $HOME/.kube
      sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
      sudo chown $(id -u):$(id -g) $HOME/.kube/config

    You should now deploy a pod network to the cluster.
    Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
      https://kubernetes.io/docs/concepts/cluster-administration/addons/

    Then you can join any number of worker nodes by running the following on each as root:

    kubeadm join 192.168.81.30:6443 --token dcg8kk.t42rp6f32p2szdda \
        --discovery-token-ca-cert-hash sha256:7b21de9e4be342bd5d971fbc9859169c7606c29d735f057a767ffcdd439bce10
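Since the join command at the end of this output has to be kept for later, it can help to save the output to a file and pull the command out of it. A minimal sketch, assuming the log was saved with something like kubeadm init ... | tee kubeadm-init.log. The file name and the function name extract_join_command are assumptions.

```shell
# Read a saved `kubeadm init` log on stdin and print the join command on one line.
extract_join_command() {
    awk '
        /^[ \t]*kubeadm join / { collecting = 1 }
        collecting {
            line = $0
            more = (line ~ /\\[ \t]*$/)         # a trailing backslash means the command continues
            sub(/[ \t]*\\[ \t]*$/, "", line)    # drop the continuation backslash
            sub(/^[ \t]+/, "", line)            # drop leading indentation
            cmd = (cmd == "" ? line : cmd " " line)
            if (!more) { print cmd; exit }
        }
    '
}

# Example:
#   extract_join_command < kubeadm-init.log > join-command.txt
```

If the output was not saved, kubeadm token create --print-join-command on the master prints a fresh join command with a new token.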

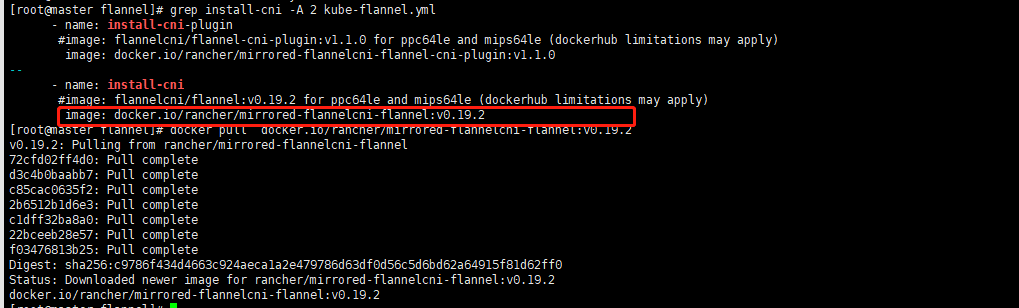

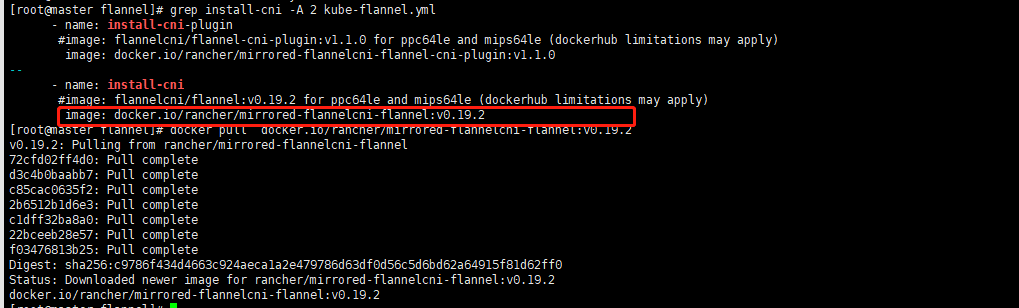

Configuring the network plugin

Download the yaml configuration file on the master node.

Important: the file is updated frequently. If the configuration fails, download the latest yaml file manually from https://raw.githubusercontent.com/coreos/flannel/master/Documentation/

    [root@master ~]# cd ~ && mkdir flannel && cd flannel
    [root@master flannel]# curl -O https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

Edit the configuration file kube-flannel.yml. First check which images it references and pull them; to avoid problems later, pull every image named in kube-flannel.yml:

    [root@master flannel]# grep install-cni -A 2 kube-flannel.yml
    [root@master flannel]# docker pull docker.io/rancher/mirrored-flannelcni-flannel:v0.19.2
    [root@master flannel]# docker pull docker.io/rancher/mirrored-flannelcni-flannel-cni-plugin:v1.1.0

Note: the image names and tags depend on the version of kube-flannel.yml that was downloaded. In the version this example is based on, the default image is quay.io/coreos/flannel:v0.10.0-amd64. If you can pull it, there is no need to change the image address. Otherwise, change the image address in the yml file to the aliyun mirror. The file contains several flannel image entries, and all of them have to be changed:

    image: registry.cn-shanghai.aliyuncs.com/gcr-k8s/flannel:v0.10.0-amd64

Specify the network interface flanneld should use. Keep a copy of the original file, then add --iface=<iface-name> to the flanneld arguments:

    [root@master flannel]# cp kube-flannel.yml kube-flannel.yml_init

    containers:
    - name: kube-flannel
      image: registry.cn-shanghai.aliyuncs.com/gcr-k8s/flannel:v0.10.0-amd64
      command:
      - /opt/bin/flanneld
      args:
      - --ip-masq
      - --kube-subnet-mgr
      - --iface=ens33    # add this line; the value is the interface name
      - --iface=eth0

Warning: the value of --iface=ens33 must be the name of the network interface on your machine. Several --iface arguments can be given to cover machines with different interface names.

    [root@master flannel]# kubectl apply -f ~/flannel/kube-flannel.yml
    namespace/kube-flannel created
    clusterrole.rbac.authorization.k8s.io/flannel created
    clusterrolebinding.rbac.authorization.k8s.io/flannel created
    serviceaccount/flannel created
    configmap/kube-flannel-cfg created
    daemonset.apps/kube-flannel-ds created

Check the result:

    [root@master flannel]# kubectl get pods --namespace kube-system
    NAME                             READY   STATUS    RESTARTS   AGE
    coredns-5644d7b6d9-bs4gj         1/1     Running   0          31m
    coredns-5644d7b6d9-xf25l         1/1     Running   0          31m
    etcd-master                      1/1     Running   0          30m
    kube-apiserver-master            1/1     Running   0          30m
    kube-controller-manager-master   1/1     Running   0          30m
    kube-proxy-mp8tf                 1/1     Running   0          31m
    kube-scheduler-master            1/1     Running   0          30m

    [root@master flannel]# kubectl get service
    NAME         TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)   AGE
    kubernetes   ClusterIP   10.96.0.1    <none>        443/TCP   32m

    [root@master flannel]# kubectl get svc --namespace kube-system
    NAME       TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)                  AGE
    kube-dns   ClusterIP   10.96.0.10   <none>        53/UDP,53/TCP,9153/TCP   32m
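The --iface edit described above can also be scripted rather than made by hand. This sketch assumes the flanneld arguments contain a "- --kube-subnet-mgr" line, as in the snippet above, and adds the new argument directly after it with the same indentation. add_flannel_iface is a name invented for this example.

```shell
# Read kube-flannel.yml on stdin and print it with "- --iface=NAME" added after
# every "- --kube-subnet-mgr" argument, reusing that line's indentation.
# Not idempotent: running it twice adds the argument twice.
add_flannel_iface() {
    awk -v iface="$1" '
        { print }
        /^[ \t]*- --kube-subnet-mgr[ \t]*$/ {
            indent = $0
            sub(/-.*/, "", indent)      # keep only the leading whitespace
            print indent "- --iface=" iface
        }
    '
}

# Example: patch from the untouched backup copy, then review the difference.
#   add_flannel_iface ens33 < kube-flannel.yml_init > kube-flannel.yml
#   diff kube-flannel.yml_init kube-flannel.yml
```

Reading from the kube-flannel.yml_init backup keeps the original file intact and makes the change easy to repeat with a different interface name.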

Joining all worker nodes to the cluster

Run the following on every worker node. This is the command that the master printed after it was initialized successfully:

    kubeadm join 192.168.81.30:6443 --token dcg8kk.t42rp6f32p2szdda \
        --discovery-token-ca-cert-hash sha256:7b21de9e4be342bd5d971fbc9859169c7606c29d735f057a767ffcdd439bce10

Then check the nodes on the master:

    [root@master flannel]# kubectl get nodes
    NAME     STATUS   ROLES    AGE     VERSION
    master   Ready    master   42m     v1.16.0
    node1    Ready    <none>   5m34s   v1.16.0
    node2    Ready    <none>   5m30s   v1.16.0
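Freshly joined nodes can take a little while to become Ready. The check above can be turned into a small filter that lists only the nodes still waiting. This is a sketch that assumes the default column layout of kubectl get nodes, with the status in the second column. not_ready_nodes is a hypothetical helper name.

```shell
# Read `kubectl get nodes` output on stdin and print the name of every node
# whose STATUS column is not exactly "Ready". A cordoned node, shown as
# "Ready,SchedulingDisabled", is therefore reported as well.
not_ready_nodes() {
    awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Example: poll until every node is Ready.
#   while [ -n "$(kubectl get nodes | not_ready_nodes)" ]; do sleep 5; done
```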