Introduction

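- Choose a function model (Model)

- Define a loss function (Loss function)

- Solve for the best function (Find the best function)

- Use the function to predict the class of unseen data

- Logistic regression builds on the probability-distribution-based (generative) approach to classification, but fits the resulting model directly as a regression model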

I. Definition of the Function Model and the Error Function

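- Take the simplest binary classification problem as an example. Let the two classes be $C_1$ and $C_2$, and let $x$ be the random variable to be classified. Then the probability that $x$ belongs to class $C_1$ is: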

$$P(C_1|x)=\frac{P(x|C_1)P(C_1)}{P(x|C_1)P(C_1)+P(x|C_2)P(C_2)}$$

$$f(x)=\begin{cases} C_2 & P(C_1|x)<0.5\\ C_1 & P(C_1|x)>0.5 \end{cases}$$

- Rewriting this posterior from the probabilistic classification model gives:

$$\begin{aligned}
P(C_1|x)&=\frac{P(x|C_1)P(C_1)}{P(x|C_1)P(C_1)+P(x|C_2)P(C_2)}\\
&=\frac{1}{1+\frac{P(x|C_2)P(C_2)}{P(x|C_1)P(C_1)}}\\
&=\frac{1}{1+e^{-z}}\\
&=\sigma(z)
\end{aligned}$$

where

$$z=\ln\frac{P(C_1|x)}{P(C_2|x)}=\ln\frac{P(x|C_1)P(C_1)}{P(x|C_2)P(C_2)}$$
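As a quick numerical check of the identity above, the sketch below computes $P(C_1|x)$ once directly with Bayes' rule and once as $\sigma(z)$, then applies the decision rule $f(x)$. The two 1-D Gaussian class-conditional densities and the priors are hypothetical values chosen only for illustration.

```python
import math


def gaussian_pdf(x, mu, sigma):
    """Density of the 1-D normal distribution N(mu, sigma^2) at x."""
    return math.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * math.sqrt(2 * math.pi))


def sigmoid(z):
    """Logistic function sigma(z) = 1 / (1 + e^{-z})."""
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical class-conditional densities P(x|C1), P(x|C2) and priors.
def p_x_given_c1(x):
    return gaussian_pdf(x, mu=1.0, sigma=1.0)


def p_x_given_c2(x):
    return gaussian_pdf(x, mu=-1.0, sigma=2.0)


PRIOR_C1, PRIOR_C2 = 0.3, 0.7


def posterior_bayes(x):
    """P(C1|x) straight from Bayes' rule."""
    num = p_x_given_c1(x) * PRIOR_C1
    return num / (num + p_x_given_c2(x) * PRIOR_C2)


def posterior_sigmoid(x):
    """P(C1|x) as sigma(z), with z = ln[P(x|C1)P(C1) / (P(x|C2)P(C2))]."""
    z = math.log(p_x_given_c1(x) * PRIOR_C1) - math.log(p_x_given_c2(x) * PRIOR_C2)
    return sigmoid(z)


def classify(x):
    """Decision rule f(x): C1 if P(C1|x) > 0.5, else C2."""
    return "C1" if posterior_sigmoid(x) > 0.5 else "C2"


for x in [-2.0, 0.0, 1.0, 3.0]:
    print(f"x={x:+.1f}  Bayes={posterior_bayes(x):.6f}  "
          f"sigma(z)={posterior_sigmoid(x):.6f}  -> {classify(x)}")
```

Both columns agree to floating-point precision for every $x$, which is exactly the claim $P(C_1|x)=\sigma(z)$.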

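- Substituting the Gaussian densities $f_{\mu_1,\Sigma_1}$ and $f_{\mu_2,\Sigma_2}$ for $P(x|C_1)$ and $P(x|C_2)$ gives: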

$$\begin{aligned}
z&=\ln\frac{P(x|C_1)P(C_1)}{P(x|C_2)P(C_2)}\\
&=\ln\frac{f_{\mu_1,\Sigma_1}(x)}{f_{\mu_2,\Sigma_2}(x)}+\ln\frac{P(C_1)}{P(C_2)}\\
&=-\frac{1}{2}x^T(\Sigma_1^{-1}-\Sigma_2^{-1})x+x^T(\Sigma_1^{-1}\mu_1-\Sigma_2^{-1}\mu_2)-\frac{1}{2}\mu_1^T\Sigma_1^{-1}\mu_1+\frac{1}{2}\mu_2^T\Sigma_2^{-1}\mu_2+\frac{1}{2}\ln\frac{|\Sigma_2|}{|\Sigma_1|}+\ln\frac{P(C_1)}{P(C_2)}
\end{aligned}$$

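- When the two classes have the same variance, which for multivariate Gaussians means they share one covariance matrix ($\Sigma_1=\Sigma_2=\Sigma$), the quadratic term and the log-determinant term cancel, leaving: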

$$\begin{aligned}
z&=x^T\Sigma^{-1}(\mu_1-\mu_2)-\frac{1}{2}(\mu_1+\mu_2)^T\Sigma^{-1}(\mu_1-\mu_2)+\ln\frac{P(C_1)}{P(C_2)}\\
&=(\mu_1-\mu_2)^T\Sigma^{-1}x-\frac{1}{2}(\mu_1+\mu_2)^T\Sigma^{-1}(\mu_1-\mu_2)+\ln\frac{P(C_1)}{P(C_2)}\\
&=w^Tx+b
\end{aligned}$$

where

$$w^T=(\mu_1-\mu_2)^T\Sigma^{-1},\quad\text{i.e.}\quad w=\Sigma^{-1}(\mu_1-\mu_2)$$

and

$$b=-\frac{1}{2}(\mu_1+\mu_2)^T\Sigma^{-1}(\mu_1-\mu_2)+\ln\frac{P(C_1)}{P(C_2)}$$
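To see that the shared-covariance posterior really collapses to $\sigma(w^Tx+b)$, the sketch below builds $w$ and $b$ from the formulas above and compares them with the posterior computed directly from two 2-D Gaussian densities. The means, the covariance matrix and the priors are hypothetical; the 2x2 matrix helpers are written by hand to keep the example dependency-free.

```python
import math


def det2(m):
    """Determinant of a 2x2 matrix [[a, b], [c, d]]."""
    (a, b), (c, d) = m
    return a * d - b * c


def inv2(m):
    """Inverse of a 2x2 matrix [[a, b], [c, d]]."""
    (a, b), (c, d) = m
    det = det2(m)
    return [[d / det, -b / det], [-c / det, a / det]]


def matvec(m, v):
    return [m[0][0] * v[0] + m[0][1] * v[1], m[1][0] * v[0] + m[1][1] * v[1]]


def dot(u, v):
    return u[0] * v[0] + u[1] * v[1]


def gaussian2_pdf(x, mu, cov):
    """Density of the 2-D normal distribution N(mu, cov) at x."""
    diff = [x[0] - mu[0], x[1] - mu[1]]
    quad = dot(diff, matvec(inv2(cov), diff))
    return math.exp(-0.5 * quad) / (2 * math.pi * math.sqrt(det2(cov)))


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical parameters; both classes share the covariance matrix SIGMA.
MU1, MU2 = [1.0, 2.0], [-1.0, 0.5]
SIGMA = [[2.0, 0.3], [0.3, 1.0]]
PRIOR_C1, PRIOR_C2 = 0.4, 0.6

# w = Sigma^{-1} (mu1 - mu2)
W = matvec(inv2(SIGMA), [MU1[0] - MU2[0], MU1[1] - MU2[1]])
# b = -1/2 (mu1 + mu2)^T Sigma^{-1} (mu1 - mu2) + ln(P(C1)/P(C2)); note Sigma^{-1}(mu1 - mu2) = w
B = -0.5 * dot([MU1[0] + MU2[0], MU1[1] + MU2[1]], W) + math.log(PRIOR_C1 / PRIOR_C2)


def posterior_bayes(x):
    """P(C1|x) from the Gaussian densities and priors."""
    n1 = gaussian2_pdf(x, MU1, SIGMA) * PRIOR_C1
    n2 = gaussian2_pdf(x, MU2, SIGMA) * PRIOR_C2
    return n1 / (n1 + n2)


def posterior_linear(x):
    """P(C1|x) as sigma(w^T x + b)."""
    return sigmoid(dot(W, x) + B)


for x in [[0.0, 0.0], [1.5, -0.5], [-2.0, 3.0]]:
    print(x, round(posterior_bayes(x), 6), round(posterior_linear(x), 6))
```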

- Written in component (non-vector) form:

$$z=\sum_{i=1}^{n}w_i x_i+b$$

- Therefore:

$$\begin{aligned}
P(C_1|x)&=f(x)\\
&=\sigma(z)\\
&=\frac{1}{1+e^{-\left(\sum_{i=1}^{n}w_i x_i+b\right)}}
\end{aligned}$$

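- At this point we can treat $w$ and $b$ as the parameters and fit them by regression. Viewed as a network, this is one fully connected layer followed by a sigmoid activation that squashes the output into $(0,1)$.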

- Define the error function through the likelihood of the training set:

$$L(w,b)=\prod_{i=1}^{k}f(x^i)\cdot\prod_{i=k+1}^{n}\left(1-f(x^i)\right)$$

- where $x$ is the random variable being classified, $f(x)$ is $P(C_1|x)$, and $1-f(x)$ is $P(C_2|x)$

- Training samples $1$ to $k$ belong to class $C_1$, and samples $k+1$ to $n$ belong to class $C_2$

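- In effect, we look for the parameters $w, b$ (that is, the distribution) that give the highest probability of classifying the whole training set correctly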
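A minimal sketch of the likelihood $L(w,b)$ as a product over the two groups of training samples. The four samples and both parameter settings are hypothetical; the point is only that a boundary separating the classes yields a much larger likelihood than a flipped one.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def f(x, w, b):
    """f_{w,b}(x) = P(C1|x) = sigma(w . x + b)."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def likelihood(c1_samples, c2_samples, w, b):
    """L(w, b) = prod over C1 samples of f(x) * prod over C2 samples of (1 - f(x))."""
    result = 1.0
    for x in c1_samples:
        result *= f(x, w, b)
    for x in c2_samples:
        result *= 1.0 - f(x, w, b)
    return result


# Hypothetical training data: samples 1..k are C1, samples k+1..n are C2.
C1_SAMPLES = [[2.0, 1.0], [1.5, 2.0]]
C2_SAMPLES = [[-1.0, -0.5], [-2.0, 0.0]]

print(likelihood(C1_SAMPLES, C2_SAMPLES, [1.0, 1.0], 0.0))    # boundary separates the classes
print(likelihood(C1_SAMPLES, C2_SAMPLES, [-1.0, -1.0], 0.0))  # boundary flipped
```

With many samples this product of numbers below 1 quickly underflows in floating point, which is one practical reason to work with its logarithm.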

II. Solving for the Function Parameters

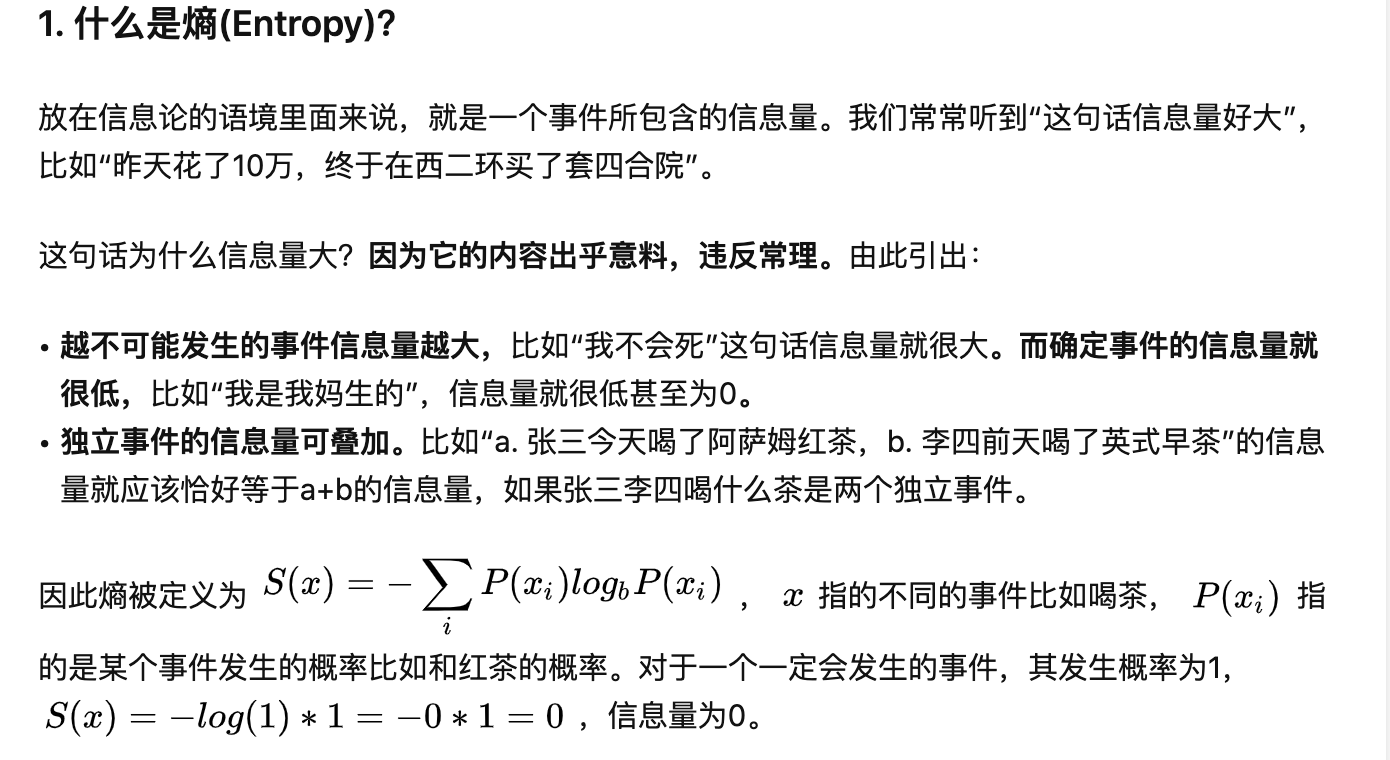

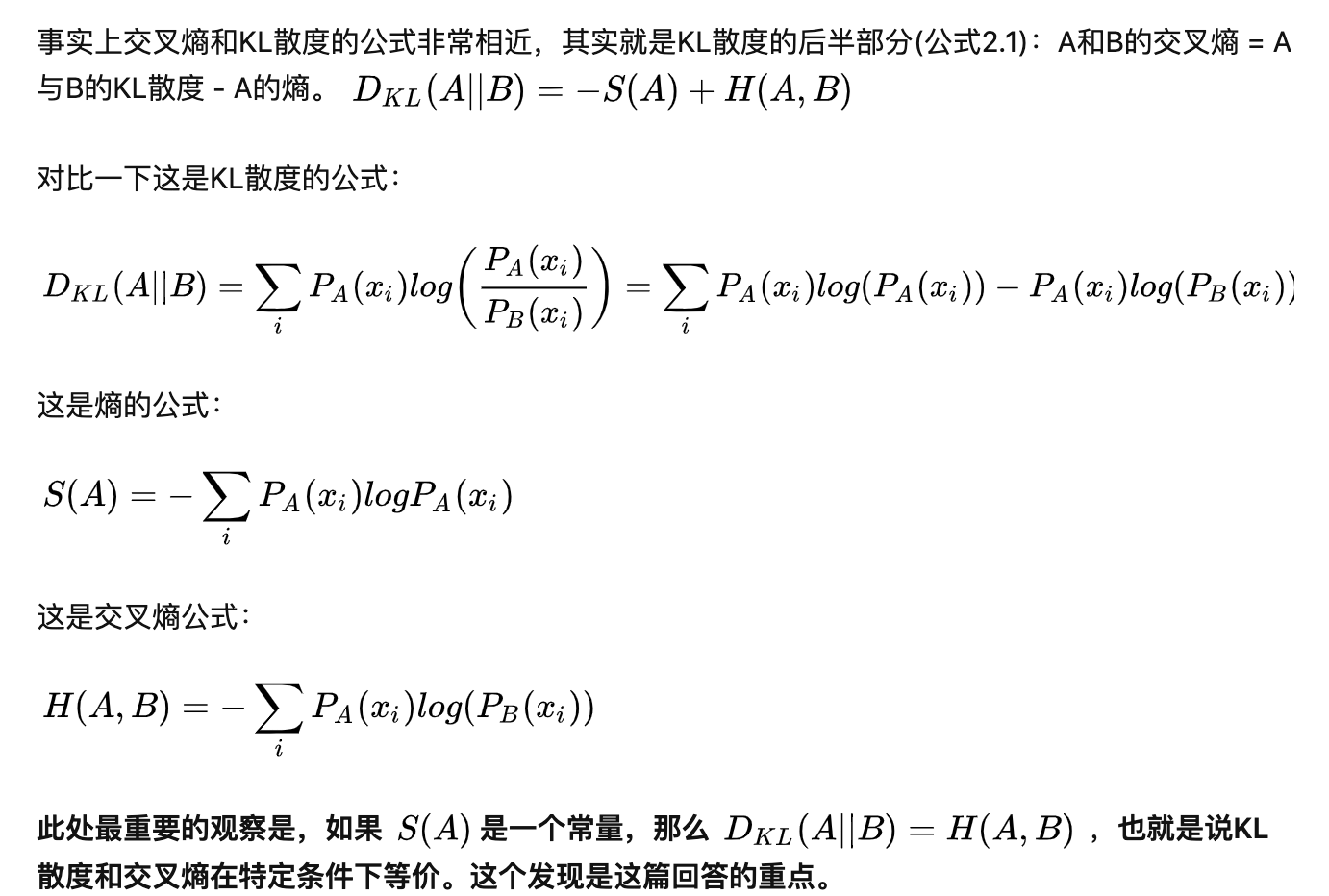

- Cross entropy:

All images are taken from Zhihu (https://www.zhihu.com/question/65288314/answer/244557337)

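- Let $\hat{y}^i=1$ if $x^i$ belongs to $C_1$, and $\hat{y}^i=0$ if $x^i$ belongs to $C_2$. Taking the negative logarithm of the likelihood from Section I then gives: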

$$-\ln L(w,b)=-\sum_{i=1}^{n}\left[\hat{y}^i\ln f(x^i)+(1-\hat{y}^i)\ln\left(1-f(x^i)\right)\right]$$

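- Each summand is the cross entropy between the Bernoulli distributions $(\hat{y}^i,\ 1-\hat{y}^i)$ and $(f(x^i),\ 1-f(x^i))$, so $-\ln L$ is a sum of cross entropies, and training means minimizing it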
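A small check, under hypothetical data and parameters, that summing the per-sample cross entropies gives the same number as $-\ln$ of the product likelihood. Here the labels $\hat{y}^i\in\{0,1\}$ select $f(x^i)$ or $1-f(x^i)$ in the product.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def f(x, w, b):
    """f_{w,b}(x) = sigma(w . x + b)."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def cross_entropy(y_hat, p):
    """Cross entropy between Bernoulli(y_hat) and Bernoulli(p)."""
    return -(y_hat * math.log(p) + (1 - y_hat) * math.log(1 - p))


def neg_log_likelihood(xs, ys, w, b):
    """-ln L(w, b) written as a sum of per-sample cross entropies."""
    return sum(cross_entropy(y, f(x, w, b)) for x, y in zip(xs, ys))


def likelihood(xs, ys, w, b):
    """L(w, b) as a product; y_hat = 1 picks f(x), y_hat = 0 picks 1 - f(x)."""
    result = 1.0
    for x, y in zip(xs, ys):
        p = f(x, w, b)
        result *= p if y == 1 else 1.0 - p
    return result


# Hypothetical data and parameters.
XS = [[2.0, 1.0], [1.5, 2.0], [-1.0, -0.5], [-2.0, 0.0]]
YS = [1, 1, 0, 0]
W, B = [0.5, 0.3], -0.1

print(neg_log_likelihood(XS, YS, W, B), -math.log(likelihood(XS, YS, W, B)))
```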

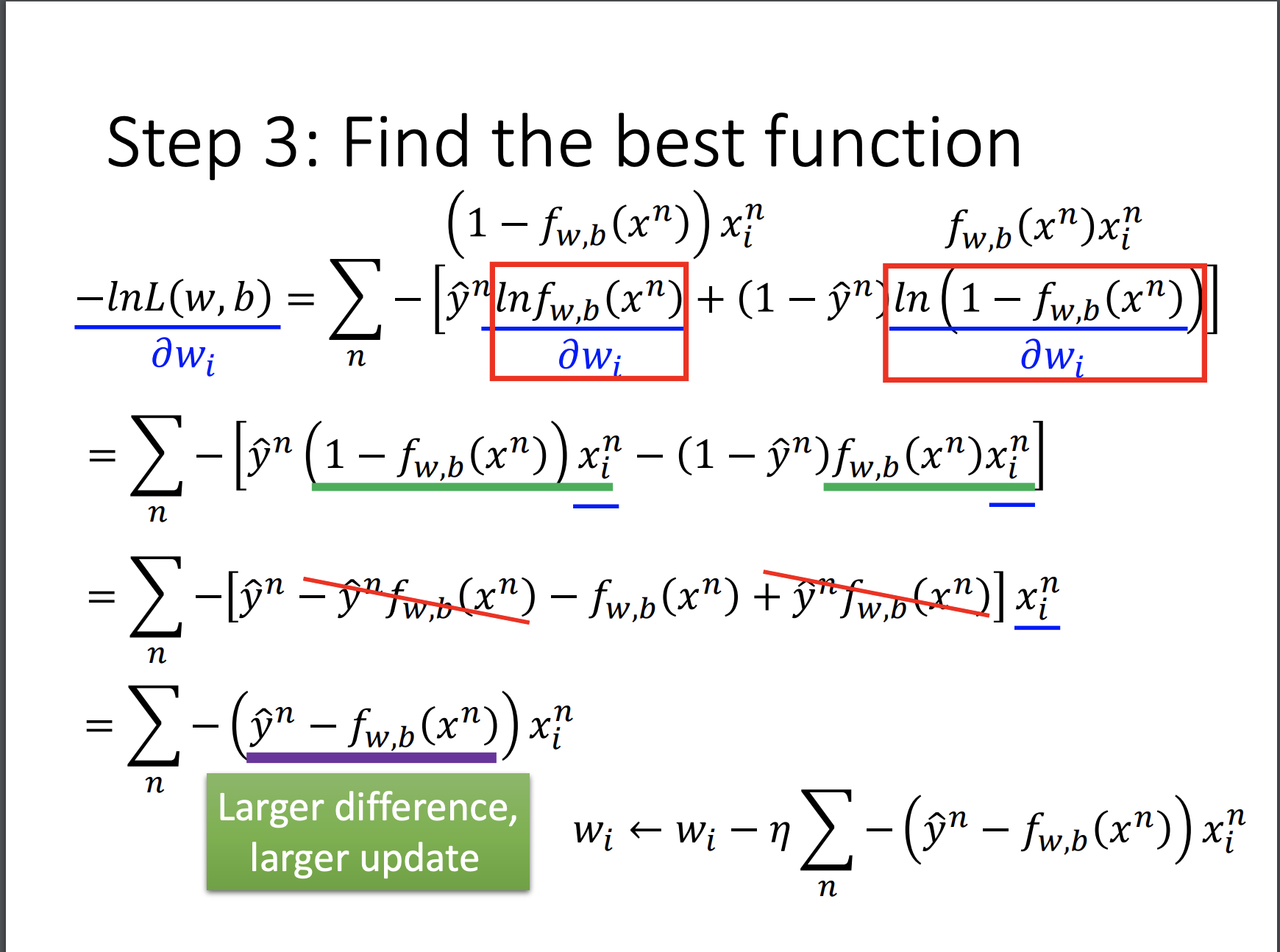

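- Gradient descent: differentiating gives $\frac{\partial(-\ln L)}{\partial w_j}=\sum_{i=1}^{n}-\left(\hat{y}^i-f(x^i)\right)x_j^i$, so each update is $w_j\leftarrow w_j-\eta\sum_{i=1}^{n}-\left(\hat{y}^i-f(x^i)\right)x_j^i$

- Compared with linear regression, the update rule has exactly the same form; the only differences are that $f$ passes through $\sigma$ and that $\hat{y}^i$ is 0 or 1 rather than an arbitrary real number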
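The update rule above can be sketched as plain batch gradient descent. The six training points, the learning rate `lr=0.1` and `epochs=200` are hypothetical choices; the data is linearly separable, so the loss should drop and every training point should end up on the correct side of the boundary.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict_proba(x, w, b):
    """f_{w,b}(x) = sigma(w . x + b)."""
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


def loss(xs, ys, w, b, eps=1e-12):
    """-ln L(w, b): summed cross entropy; p is clipped to avoid ln(0)."""
    total = 0.0
    for x, y in zip(xs, ys):
        p = min(max(predict_proba(x, w, b), eps), 1.0 - eps)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total


def train(xs, ys, lr=0.1, epochs=200):
    """Batch gradient descent on -ln L, starting from w = 0, b = 0."""
    n_features = len(xs[0])
    w, b = [0.0] * n_features, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_features, 0.0
        for x, y in zip(xs, ys):
            err = y - predict_proba(x, w, b)  # (y_hat - f(x))
            for j in range(n_features):
                grad_w[j] -= err * x[j]       # d(-ln L)/dw_j = sum of -(y_hat - f(x)) x_j
            grad_b -= err                     # d(-ln L)/db   = sum of -(y_hat - f(x))
        w = [wj - lr * gj for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


# Hypothetical, linearly separable training set.
XS = [[2.0, 2.0], [3.0, 1.0], [2.0, 3.0], [-2.0, -1.0], [-1.0, -3.0], [-3.0, -2.0]]
YS = [1, 1, 1, 0, 0, 0]

W, B = train(XS, YS)
print("w =", W, "b =", B)
print("loss before:", loss(XS, YS, [0.0, 0.0], 0.0), "after:", loss(XS, YS, W, B))
```

Replacing `sigmoid(...)` in `predict_proba` by the raw linear value (and letting `YS` be real numbers) would turn the same loop into gradient descent for linear regression with squared error, which is the sense in which the two updates look the same.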

III. Why Not Use the Squared (L2) Loss

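- With the squared loss $(f(x)-\hat{y})^2$, the gradient is $2\left(f(x)-\hat{y}\right)f(x)\left(1-f(x)\right)x_j$. It is close to 0 when the prediction is near the target, as expected, but it is also close to 0 when the prediction is far from the target (e.g. $\hat{y}=1$ while $f(x)\approx 0$), so training can stall. Cross entropy keeps a large gradient far from the target, which is why it is chosen as the loss function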

- The figure below visualizes this difference
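The gradient comparison can be sketched for a single sample with target $\hat{y}=1$ and one feature $x=1$ (both hypothetical choices), evaluating $\partial\ell/\partial w$ at several values of $z=wx+b$. At $z=-10$ the prediction is far from the target, yet the squared-loss gradient is nearly zero while the cross-entropy gradient stays close to $-1$.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def grad_square(z, y_hat, x=1.0):
    """d/dw of (sigma(z) - y_hat)^2, with z = w*x + b."""
    f = sigmoid(z)
    return 2.0 * (f - y_hat) * f * (1.0 - f) * x


def grad_cross_entropy(z, y_hat, x=1.0):
    """d/dw of -[y_hat ln f + (1 - y_hat) ln(1 - f)], with f = sigma(z), z = w*x + b."""
    f = sigmoid(z)
    return -(y_hat - f) * x


for z in [-10.0, -2.0, 0.0, 2.0, 10.0]:
    print(f"z={z:+5.1f}  f={sigmoid(z):.5f}  "
          f"square={grad_square(z, 1.0):+.6f}  cross_entropy={grad_cross_entropy(z, 1.0):+.6f}")
```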

IV. Discriminative vs. Generative

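- Both approaches use the same model, $P(C_1|x)=\sigma(w^Tx+b)$, but obtain the parameters differently: the generative approach estimates $\mu_1,\mu_2,\Sigma$ and the priors and then computes $w,b$ from them, while the discriminative approach (logistic regression) finds $w,b$ directly by minimizing cross entropy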

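- In practice, the generative model tends to perform better when training data is scarce, while the discriminative model tends to perform better when data is plentiful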
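A sketch of "same model, different way of finding the parameters" on hypothetical 1-D data. The generative side assumes Gaussian class-conditionals with one shared variance, pooled as a class-size-weighted average (one common choice), and plugs the estimates into $w=(\mu_1-\mu_2)/\sigma^2$ and $b=-\frac{1}{2}(\mu_1+\mu_2)(\mu_1-\mu_2)/\sigma^2+\ln\frac{N_1}{N_2}$. The discriminative side runs gradient descent on cross entropy with hypothetical `lr` and `epochs`. Both end up as $\sigma(wx+b)$, generally with different $w,b$.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def fit_generative(x1, x2):
    """Estimate mu1, mu2, a shared variance and priors, then compute w and b from them."""
    n1, n2 = len(x1), len(x2)
    mu1, mu2 = sum(x1) / n1, sum(x2) / n2
    var1 = sum((x - mu1) ** 2 for x in x1) / n1
    var2 = sum((x - mu2) ** 2 for x in x2) / n2
    var = (n1 * var1 + n2 * var2) / (n1 + n2)  # shared variance, weighted by class size
    w = (mu1 - mu2) / var
    b = -0.5 * (mu1 + mu2) * (mu1 - mu2) / var + math.log(n1 / n2)
    return w, b


def fit_discriminative(x1, x2, lr=0.05, epochs=2000):
    """Find w and b directly by gradient descent on the cross-entropy loss."""
    data = [(x, 1.0) for x in x1] + [(x, 0.0) for x in x2]
    w, b = 0.0, 0.0
    for _ in range(epochs):
        gw = gb = 0.0
        for x, y in data:
            err = y - sigmoid(w * x + b)
            gw -= err * x
            gb -= err
        w -= lr * gw
        b -= lr * gb
    return w, b


def accuracy(w, b, x1, x2):
    """Fraction of training points put on the correct side of sigma(w*x + b) = 0.5."""
    correct = sum(sigmoid(w * x + b) > 0.5 for x in x1) + sum(sigmoid(w * x + b) <= 0.5 for x in x2)
    return correct / (len(x1) + len(x2))


# Hypothetical 1-D training data for C1 and C2.
X1 = [2.0, 2.5, 3.0, 1.5, 4.0]
X2 = [-1.0, 0.0, 0.5, -2.0]

for name, (w, b) in [("generative", fit_generative(X1, X2)),
                     ("discriminative", fit_discriminative(X1, X2))]:
    print(f"{name:>14}: w={w:.4f} b={b:.4f} accuracy={accuracy(w, b, X1, X2):.2f}")
```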

V. Other Notes

- Multi-class classification can be solved with softmax

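- Logistic regression is limited on problems such as XOR, which are not linearly separable, so the features first need a transformation. This leads to neural networks, which can be seen as letting the machine learn that feature transformation itself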
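Both points above can be sketched briefly. The first part is softmax, $y_i=e^{z_i}/\sum_j e^{z_j}$, with a one-hot cross entropy. The second part searches a coarse grid of $(w_1,w_2,b)$ and finds no linear boundary on the raw XOR inputs, then shows one that works after a transformation. The transform (distances to $(0,0)$ and $(1,1)$), the grid and the boundary $w=(1,1)$, $b=-1.7$ are hypothetical, hand-picked choices; a neural network would learn such a transform instead. `math.dist` needs Python 3.8+.

```python
import itertools
import math


def softmax(zs):
    """y_i = e^{z_i} / sum_j e^{z_j}; subtracting max(zs) avoids overflow and leaves y unchanged."""
    m = max(zs)
    exps = [math.exp(z - m) for z in zs]
    total = sum(exps)
    return [e / total for e in exps]


def multiclass_cross_entropy(probs, target):
    """-sum_i y_hat_i ln y_i with a one-hot target y_hat (1 at index `target`)."""
    return -math.log(probs[target])


print(softmax([3.0, 1.0, -3.0]))

# XOR: label 1 when exactly one input is 1.
XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]


def separates(points, w, b):
    """True if z = w . x + b > 0 exactly for the points labelled 1."""
    return all((w[0] * x[0] + w[1] * x[1] + b > 0) == (label == 1) for x, label in points)


# Brute-force search over a grid of (w1, w2, b) on the raw features.
GRID = [i / 2 for i in range(-6, 7)]  # -3.0, -2.5, ..., 3.0
raw_separable = any(separates(XOR, (w1, w2), b) for w1, w2, b in itertools.product(GRID, repeat=3))


def transform(x):
    """Hypothetical hand-picked transform: distances from x to (0, 0) and to (1, 1)."""
    return (math.dist(x, (0, 0)), math.dist(x, (1, 1)))


TRANSFORMED = [(transform(x), label) for x, label in XOR]
transformed_separable = separates(TRANSFORMED, (1.0, 1.0), -1.7)

print("raw features, grid search found a separator:", raw_separable)
print("transformed features separated by w=(1, 1), b=-1.7:", transformed_separable)
```

The grid search only illustrates the raw-feature failure; the general fact is that no $(w_1,w_2,b)$ works, since $z(0,1)+z(1,0)$ and $z(0,0)+z(1,1)$ both equal $w_1+w_2+2b$ but would need opposite signs.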