- Unsupervised Representation Learning with Deep Convolutional Generative Adversarial Networks

- ABSTRACT

- 1 INTRODUCTION

- 2 RELATED WORK

- 3 APPROACH AND MODEL ARCHITECTURE

- 4 DETAILS OF ADVERSARIAL TRAINING

- 5 EMPIRICAL VALIDATION OF DCGANS CAPABILITIES

- 6 INVESTIGATING AND VISUALIZING THE INTERNALS OF THE NETWORKS

- 7 CONCLUSION AND FUTURE WORK

- ACKNOWLEDGMENTS

Unsupervised Representation Learning with Deep Convolutional Generative Adversarial Networks

Alec Radford, Luke Metz, Soumith Chintala

| Comments: | Under review as a conference paper at ICLR 2016 |
| --- | --- |
| Subjects: | Machine Learning (cs.LG); Computer Vision and Pattern Recognition (cs.CV) |
| Cite as: | arXiv:1511.06434 [cs.LG] |
| | (or arXiv:1511.06434v2 [cs.LG] for this version) |

Submission history

From: Alec Radford

[v1] Thu, 19 Nov 2015 22:50:32 UTC (9,027 KB)

[v2] Thu, 7 Jan 2016 23:09:39 UTC (9,028 KB)

ABSTRACT

In recent years, supervised learning with convolutional networks (CNNs) has seen huge adoption in computer vision applications. Comparatively, unsupervised learning with CNNs has received less attention. In this work we hope to help bridge the gap between the success of CNNs for supervised learning and unsupervised learning. We introduce a class of CNNs called deep convolutional generative adversarial networks (DCGANs), that have certain architectural constraints, and demonstrate that they are a strong candidate for unsupervised learning. Training on various image datasets, we show convincing evidence that our deep convolutional adversarial pair learns a hierarchy of representations from object parts to scenes in both the generator and discriminator. Additionally, we use the learned features for novel tasks - demonstrating their applicability as general image representations.

1 INTRODUCTION

Learning reusable feature representations from large unlabeled datasets has been an area of active research. In the context of computer vision, one can leverage the practically unlimited amount of unlabeled images and videos to learn good intermediate representations, which can then be used on a variety of supervised learning tasks such as image classification. We propose that one way to build good image representations is by training Generative Adversarial Networks (GANs) (Goodfellow et al., 2014), and later reusing parts of the generator and discriminator networks as feature extractors for supervised tasks. GANs provide an attractive alternative to maximum likelihood techniques. One can additionally argue that their learning process and the lack of a heuristic cost function (such as pixel-wise independent mean-square error) are attractive to representation learning. GANs have been known to be unstable to train, often resulting in generators that produce nonsensical outputs. There has been very limited published research in trying to understand and visualize what GANs learn, and the intermediate representations of multi-layer GANs.

In this paper, we make the following contributions:

• We propose and evaluate a set of constraints on the architectural topology of Convolutional GANs that make them stable to train in most settings. We name this class of architectures Deep Convolutional GANs (DCGAN).

• We use the trained discriminators for image classification tasks, showing competitive performance with other unsupervised algorithms.

• We visualize the filters learnt by GANs and empirically show that specific filters have learned to draw specific objects.

• We show that the generators have interesting vector arithmetic properties allowing for easy manipulation of many semantic qualities of generated samples.

2 RELATED WORK

2.1 REPRESENTATION LEARNING FROM UNLABELED DATA

Unsupervised representation learning is a fairly well studied problem in general computer vision research, as well as in the context of images. A classic approach to unsupervised representation learning is to do clustering on the data (for example using K-means), and leverage the clusters for improved classification scores. In the context of images, one can do hierarchical clustering of image patches (Coates & Ng, 2012) to learn powerful image representations. Another popular method is to train auto-encoders (convolutionally, stacked (Vincent et al., 2010), separating the what and where components of the code (Zhao et al., 2015), ladder structures (Rasmus et al., 2015)) that encode an image into a compact code, and decode the code to reconstruct the image as accurately as possible. These methods have also been shown to learn good feature representations from image pixels. Deep belief networks (Lee et al., 2009) have also been shown to work well in learning hierarchical representations.

2.2 GENERATING NATURAL IMAGES

Generative image models are well studied and fall into two categories: parametric and nonparametric.

The non-parametric models often do matching from a database of existing images, often matching patches of images, and have been used in texture synthesis (Efros et al., 1999), super-resolution (Freeman et al., 2002) and in-painting (Hays & Efros, 2007).

Parametric models for generating images have been explored extensively (for example on MNIST digits or for texture synthesis (Portilla & Simoncelli, 2000)). However, generating natural images of the real world had not had much success until recently. A variational sampling approach to generating images (Kingma & Welling, 2013) has had some success, but the samples often suffer from being blurry. Another approach generates images using an iterative forward diffusion process (Sohl-Dickstein et al., 2015). Generative Adversarial Networks (Goodfellow et al., 2014) generated images that suffered from being noisy and incomprehensible. A Laplacian pyramid extension to this approach (Denton et al., 2015) showed higher quality images, but they still suffered from objects looking wobbly because of noise introduced in chaining multiple models. A recurrent network approach (Gregor et al., 2015) and a deconvolution network approach (Dosovitskiy et al., 2014) have also recently had some success with generating natural images. However, they have not leveraged the generators for supervised tasks.

2.3 VISUALIZING THE INTERNALS OF CNNS

One constant criticism of using neural networks has been that they are black-box methods, with little understanding of what the networks do in the form of a simple human-consumable algorithm. In the context of CNNs, Zeiler & Fergus (2014) showed that by using deconvolutions and filtering the maximal activations, one can find the approximate purpose of each convolution filter in the network. Similarly, using gradient descent on the inputs lets us inspect the ideal image that activates certain subsets of filters (Mordvintsev et al.).

3 APPROACH AND MODEL ARCHITECTURE

Historical attempts to scale up GANs using CNNs to model images have been unsuccessful. This motivated the authors of LAPGAN (Denton et al., 2015) to develop an alternative approach to iteratively upscale low resolution generated images which can be modeled more reliably. We also encountered difficulties attempting to scale GANs using CNN architectures commonly used in the supervised literature. However, after extensive model exploration we identified a family of architectures that resulted in stable training across a range of datasets and allowed for training higher resolution and deeper generative models.

Core to our approach is adopting and modifying three recently demonstrated changes to CNN architectures.

The first is the all convolutional net (Springenberg et al., 2014), which replaces deterministic spatial pooling functions (such as maxpooling) with strided convolutions, allowing the network to learn its own spatial downsampling. We use this approach in both our discriminator and our generator, where it allows the generator to learn its own spatial upsampling.

Second is the trend towards eliminating fully connected layers on top of convolutional features. The strongest example of this is global average pooling which has been utilized in state of the art image classification models (Mordvintsev et al.). We found global average pooling increased model stability but hurt convergence speed. A middle ground of directly connecting the highest convolutional features to the input and output respectively of the generator and discriminator worked well. The first layer of the GAN, which takes a uniform noise distribution Z as input, could be called fully connected as it is just a matrix multiplication, but the result is reshaped into a 4-dimensional tensor and used as the start of the convolution stack. For the discriminator, the last convolution layer is flattened and then fed into a single sigmoid output. See Fig. 1 for a visualization of an example model architecture.

Third is Batch Normalization (Ioffe & Szegedy, 2015), which stabilizes learning by normalizing the input to each unit to have zero mean and unit variance. This helps deal with training problems that arise due to poor initialization and helps gradient flow in deeper models. This proved critical to get deep generators to begin learning, preventing the generator from collapsing all samples to a single point, which is a common failure mode observed in GANs. Directly applying batchnorm to all layers, however, resulted in sample oscillation and model instability. This was avoided by not applying batchnorm to the generator output layer and the discriminator input layer.
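
The normalization step described above can be sketched in a few lines. This is a minimal illustration for one unit over one mini-batch, not the authors' implementation: the learned scale and shift of full Batch Normalization are omitted, and the `eps` constant and the sample values are assumptions added for numerical safety and demonstration.

```python
import math

def batch_normalize(values, eps=1e-5):
    """Normalize a mini-batch of scalar activations to zero mean and unit variance."""
    n = len(values)
    mean = sum(values) / n
    # Biased (population) variance, the usual choice for batch statistics.
    var = sum((v - mean) ** 2 for v in values) / n
    # eps is an assumed small constant that guards against division by zero.
    return [(v - mean) / math.sqrt(var + eps) for v in values]

batch = [2.0, 4.0, 6.0, 8.0]  # hypothetical inputs to one unit across a mini-batch
normalized = batch_normalize(batch)
```

Following the text, such a step would be skipped at the generator output layer and the discriminator input layer.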

The ReLU activation (Nair & Hinton, 2010) is used in the generator with the exception of the output layer which uses the Tanh function. We observed that using a bounded activation allowed the model to learn more quickly to saturate and cover the color space of the training distribution. Within the discriminator we found the leaky rectified activation (Maas et al., 2013) (Xu et al., 2015) to work well, especially for higher resolution modeling. This is in contrast to the original GAN paper, which used the maxout activation (Goodfellow et al., 2013).

Architecture guidelines for stable Deep Convolutional GANs

• Replace any pooling layers with strided convolutions (discriminator) and fractional-strided convolutions (generator).

• Use batchnorm in both the generator and the discriminator.

• Remove fully connected hidden layers for deeper architectures.

• Use ReLU activation in generator for all layers except for the output, which uses Tanh.

• Use LeakyReLU activation in the discriminator for all layers.
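
To make the first guideline concrete, the sketch below traces how spatial size changes through a stack of strided and fractionally-strided convolutions, using the standard output-size formulas. The kernel size, stride, padding, number of layers, and the 4 and 64 pixel start sizes are all hypothetical choices for illustration; the text does not state them.

```python
def conv_out(size, kernel, stride, pad):
    """Output side length of a strided convolution (discriminator downsampling)."""
    return (size + 2 * pad - kernel) // stride + 1

def frac_strided_conv_out(size, kernel, stride, pad):
    """Output side length of a fractionally-strided (transposed) convolution."""
    return (size - 1) * stride - 2 * pad + kernel

# Hypothetical settings: kernel 4, stride 2, padding 1 (not stated in the text).
K, S, P = 4, 2, 1

# Generator: each layer doubles the spatial size, starting from an assumed 4x4 map.
gen_sizes = [4]
for _ in range(4):
    gen_sizes.append(frac_strided_conv_out(gen_sizes[-1], K, S, P))

# Discriminator: each layer halves the spatial size, starting from an assumed 64x64 image.
disc_sizes = [64]
for _ in range(4):
    disc_sizes.append(conv_out(disc_sizes[-1], K, S, P))

print(gen_sizes)   # [4, 8, 16, 32, 64]
print(disc_sizes)  # [64, 32, 16, 8, 4]
```

With these assumed settings no pooling layer is needed: the stride alone performs the learned upsampling or downsampling.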

4 DETAILS OF ADVERSARIAL TRAINING

We trained DCGANs on three datasets: Large-scale Scene Understanding (LSUN) (Yu et al., 2015), Imagenet-1k and a newly assembled Faces dataset. Details on the usage of each of these datasets are given below.

No pre-processing was applied to training images besides scaling to the range of the tanh activation function [-1, 1]. All models were trained with mini-batch stochastic gradient descent (SGD) with a mini-batch size of 128. All weights were initialized from a zero-centered Normal distribution with standard deviation 0.02. In the LeakyReLU, the slope of the leak was set to 0.2 in all models. While previous GAN work has used momentum to accelerate training, we used the Adam optimizer (Kingma & Ba, 2014) with tuned hyperparameters. We found the suggested learning rate of 0.001 to be too high, using 0.0002 instead. Additionally, we found that leaving the momentum term β1 at the suggested value of 0.9 resulted in training oscillation and instability, while reducing it to 0.5 helped stabilize training.
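
The settings above can be collected into a small sketch. The learning rate, β1, leak slope and initialization scale come from the text; `BETA2`, `EPS`, and the assumption that pixels arrive as 8-bit values in [0, 255] are not stated there and are labeled as such. The Adam update is the standard one from Kingma & Ba, written for a single scalar parameter.

```python
import math
import random

LEARNING_RATE = 0.0002   # from the text
BETA1 = 0.5              # from the text
BETA2 = 0.999            # assumption: Adam's usual default, not stated in the text
EPS = 1e-8               # assumption: Adam's usual default, not stated in the text
LEAK_SLOPE = 0.2         # from the text
INIT_STD = 0.02          # from the text

def scale_pixel(p):
    """Map an 8-bit pixel value in [0, 255] (assumed input format) to [-1, 1]."""
    return p / 127.5 - 1.0

def leaky_relu(x, slope=LEAK_SLOPE):
    """Leaky rectified activation with the leak slope used in all models."""
    return x if x > 0 else slope * x

def init_weight(rng):
    """Draw one weight from a zero-centered Normal with standard deviation 0.02."""
    return rng.gauss(0.0, INIT_STD)

def adam_step(theta, grad, m, v, t):
    """One Adam update for a single scalar parameter; t is the 1-based step count."""
    m = BETA1 * m + (1 - BETA1) * grad
    v = BETA2 * v + (1 - BETA2) * grad * grad
    m_hat = m / (1 - BETA1 ** t)   # bias-corrected first moment
    v_hat = v / (1 - BETA2 ** t)   # bias-corrected second moment
    theta = theta - LEARNING_RATE * m_hat / (math.sqrt(v_hat) + EPS)
    return theta, m, v

theta, m, v = adam_step(theta=1.0, grad=0.5, m=0.0, v=0.0, t=1)
```

On the first step the bias-corrected ratio is close to the sign of the gradient, so the parameter moves by roughly the learning rate.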

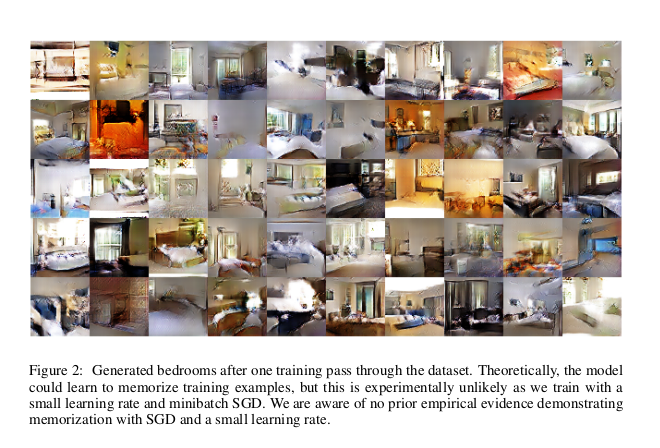

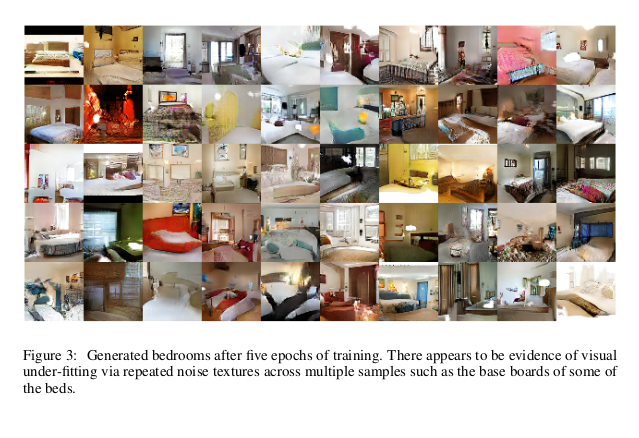

4.1 LSUN

As visual quality of samples from generative image models has improved, concerns of over-fitting and memorization of training samples have risen. To demonstrate how our model scales with more data and higher resolution generation, we train a model on the LSUN bedrooms dataset containing a little over 3 million training examples. Recent analysis has shown that there is a direct link between how fast models learn and their generalization performance (Hardt et al., 2015). We show samples from one epoch of training (Fig.2), mimicking online learning, in addition to samples after convergence (Fig.3), as an opportunity to demonstrate that our model is not producing high quality samples via simply overfitting/memorizing training examples. No data augmentation was applied to the images.

4.1.1 DEDUPLICATION

To further decrease the likelihood of the generator memorizing input examples (Fig.2) we perform a simple image de-duplication process. We fit a 3072-128-3072 de-noising dropout regularized ReLU autoencoder on 32x32 downsampled center-crops of training examples. The resulting code layer activations are then binarized via thresholding the ReLU activation, which has been shown to be an effective information preserving technique (Srivastava et al., 2014) and provides a convenient form of semantic hashing, allowing for linear time de-duplication. Visual inspection of hash collisions showed high precision with an estimated false positive rate of less than 1 in 100. Additionally, the technique detected and removed approximately 275,000 near duplicates, suggesting a high recall.
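
The hashing step can be illustrated as follows. This is a sketch of the general idea only: the autoencoder is not reproduced, the codes are toy 4-unit vectors standing in for the 128-unit code layer, and the threshold of 0.0 is a hypothetical choice. Each example is looked up once in a hash set, which is what makes the pass linear in the number of examples.

```python
def binarize(code, threshold=0.0):
    """Turn ReLU code-layer activations into a hashable tuple of bits."""
    return tuple(1 if a > threshold else 0 for a in code)

def deduplicate(codes, threshold=0.0):
    """Return indices of examples to keep, scanning the data once (linear time)."""
    seen = set()
    keep = []
    for idx, code in enumerate(codes):
        key = binarize(code, threshold)
        if key not in seen:      # the first example with this hash is kept
            seen.add(key)
            keep.append(idx)
    return keep

# Toy 4-unit codes standing in for the 128-unit code layer.
codes = [
    [0.0, 1.3, 0.0, 0.7],
    [0.0, 1.1, 0.0, 0.9],   # same bit pattern as the first: treated as a near duplicate
    [2.0, 0.0, 0.4, 0.0],
]
kept = deduplicate(codes)
print(kept)  # [0, 2]
```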

4.2 FACES

We scraped images containing human faces from random web image queries of people's names. The names were acquired from dbpedia, with the criterion that the people were born in the modern era. This dataset has 3M images from 10K people. We run an OpenCV face detector on these images, keeping the detections that are sufficiently high resolution, which gives us approximately 350,000 face boxes. We use these face boxes for training. No data augmentation was applied to the images.

4.3 IMAGENET-1K

We use Imagenet-1k (Deng et al., 2009) as a source of natural images for unsupervised training. We train on 32 × 32 min-resized center crops. No data augmentation was applied to the images.
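
One plausible reading of "min-resized center crops" is: resize so that the shorter side equals 32 pixels, then cut a 32 × 32 square from the center. The sketch below computes only the geometry under that assumption; the function name and the rounding choice are hypothetical, and no image library is involved.

```python
def min_resize_center_crop_box(width, height, target=32):
    """Return the resized dimensions and the (left, top, right, bottom) crop box."""
    # Scale so the shorter side becomes `target` pixels (assumed meaning of "min-resized").
    scale = target / min(width, height)
    new_w = max(target, round(width * scale))
    new_h = max(target, round(height * scale))
    # Center the square crop inside the resized image.
    left = (new_w - target) // 2
    top = (new_h - target) // 2
    return (new_w, new_h), (left, top, left + target, top + target)

resized, box = min_resize_center_crop_box(640, 480)
print(resized)  # (43, 32)
print(box)      # (5, 0, 37, 32)
```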

5 EMPIRICAL VALIDATION OF DCGANS CAPABILITIES

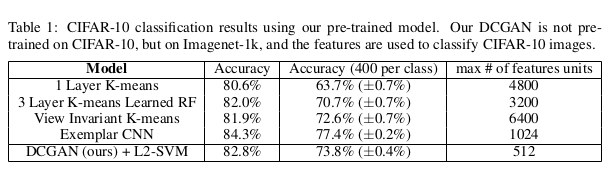

5.1 CLASSIFYING CIFAR-10 USING GANS AS A FEATURE EXTRACTOR

One common technique for evaluating the quality of unsupervised representation learning algorithms is to apply them as a feature extractor on supervised datasets and evaluate the performance of linear models fitted on top of these features. On the CIFAR-10 dataset, a very strong baseline performance has been demonstrated from a well tuned single layer feature extraction pipeline utilizing K-means as a feature learning algorithm. When using a very large number of feature maps (4800) this technique achieves 80.6% accuracy. An unsupervised multi-layered extension of the base algorithm reaches 82.0% accuracy (Coates & Ng, 2011). To evaluate the quality of the representations learned by DCGANs for supervised tasks, we train on Imagenet-1k and then use the discriminator's convolutional features from all layers, maxpooling each layer's representation to produce a 4 × 4 spatial grid. These features are then flattened and concatenated to form a 28672 dimensional vector and a regularized linear L2-SVM classifier is trained on top of them. This achieves 82.8% accuracy, outperforming all K-means based approaches. Notably, the discriminator has far fewer feature maps (512 in the highest layer) compared to K-means based techniques, but does result in a larger total feature vector size due to the many layers of 4 × 4 spatial locations. The performance of DCGANs is still less than that of Exemplar CNNs (Dosovitskiy et al., 2015), a technique which trains normal discriminative CNNs in an unsupervised fashion to differentiate between specifically chosen, aggressively augmented, exemplar samples from the source dataset. Further improvements could be made by finetuning the discriminator's representations, but we leave this for future work. Additionally, since our DCGAN was never trained on CIFAR-10, this experiment also demonstrates the domain robustness of the learned features.
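
The pooling and concatenation step can be sketched on toy data. The layer sizes and channel counts below are hypothetical and far smaller than the real discriminator; only the 4 × 4 grid comes from the text. Note that 28672 = 4 × 4 × 1792, so the reported vector corresponds to 1792 pooled feature maps in total; the per-layer split is not given in the text and is not assumed here.

```python
def max_pool_to_grid(fmap, grid=4):
    """Max-pool one square feature map (list of rows) down to a grid x grid map."""
    size = len(fmap)
    cell = size // grid  # assumes size is a multiple of grid
    pooled = []
    for gy in range(grid):
        for gx in range(grid):
            pooled.append(max(
                fmap[y][x]
                for y in range(gy * cell, (gy + 1) * cell)
                for x in range(gx * cell, (gx + 1) * cell)
            ))
    return pooled  # already flattened, length grid * grid

def extract_features(layers, grid=4):
    """Pool every feature map of every layer and concatenate into one vector."""
    features = []
    for layer in layers:
        for fmap in layer:
            features.extend(max_pool_to_grid(fmap, grid))
    return features

def constant_map(size, value):
    """Helper that builds a toy size x size feature map."""
    return [[value] * size for _ in range(size)]

# Toy discriminator activations: 2 maps of 16x16, 3 maps of 8x8, 5 maps of 4x4.
layers = [
    [constant_map(16, 0.1)] * 2,
    [constant_map(8, 0.2)] * 3,
    [constant_map(4, 0.3)] * 5,
]
features = extract_features(layers)
print(len(features))  # 160 = 4 * 4 * (2 + 3 + 5)
```

The resulting vector is what a linear classifier such as the L2-SVM mentioned above would be trained on.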

5.2 CLASSIFYING SVHN DIGITS USING GANS AS A FEATURE EXTRACTOR

On the StreetView House Numbers dataset (SVHN) (Netzer et al., 2011), we use the features of the discriminator of a DCGAN for supervised purposes when labeled data is scarce. Following similar dataset preparation rules as in the CIFAR-10 experiments, we split off a validation set of 10,000 examples from the non-extra set and use it for all hyperparameter and model selection. 1000 uniformly class distributed training examples are randomly selected and used to train a regularized linear L2-SVM classifier on top of the same feature extraction pipeline used for CIFAR-10. This achieves state of the art (for classification using 1000 labels) at 22.48% test error, improving upon another modification of CNNs designed to leverage unlabeled data (Zhao et al., 2015). Additionally, we validate that the CNN architecture used in DCGAN is not the key contributing factor of the model's performance by training a purely supervised CNN with the same architecture on the same data and optimizing this model via random search over 64 hyperparameter trials (Bergstra & Bengio, 2012). It achieves a significantly higher 28.87% validation error.
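
Selecting a small class-balanced training subset might look like the sketch below. It assumes that "uniformly class distributed" means an equal number of examples per class (100 for each of the 10 digits); the function name, the seed, and the synthetic labels are hypothetical stand-ins for the real SVHN labels.

```python
import random
from collections import defaultdict

def sample_class_balanced(labels, total=1000, seed=0):
    """Randomly pick `total` example indices with an equal count per class."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    per_class = total // len(by_class)
    rng = random.Random(seed)  # fixed seed so the selection is repeatable
    chosen = []
    for label in sorted(by_class):
        chosen.extend(rng.sample(by_class[label], per_class))
    return chosen

# Synthetic labels standing in for the SVHN training labels (10 digit classes).
labels = [i % 10 for i in range(5000)]
subset = sample_class_balanced(labels)
```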

6 INVESTIGATING AND VISUALIZING THE INTERNALS OF THE NETWORKS

We investigate the trained generators and discriminators in a variety of ways. We do not do any kind of nearest neighbor search on the training set. Nearest neighbors in pixel or feature space are trivially fooled (Theis et al., 2015) by small image transforms. We also do not use log-likelihood metrics to quantitatively assess the model, as it is a poor metric (Theis et al., 2015).

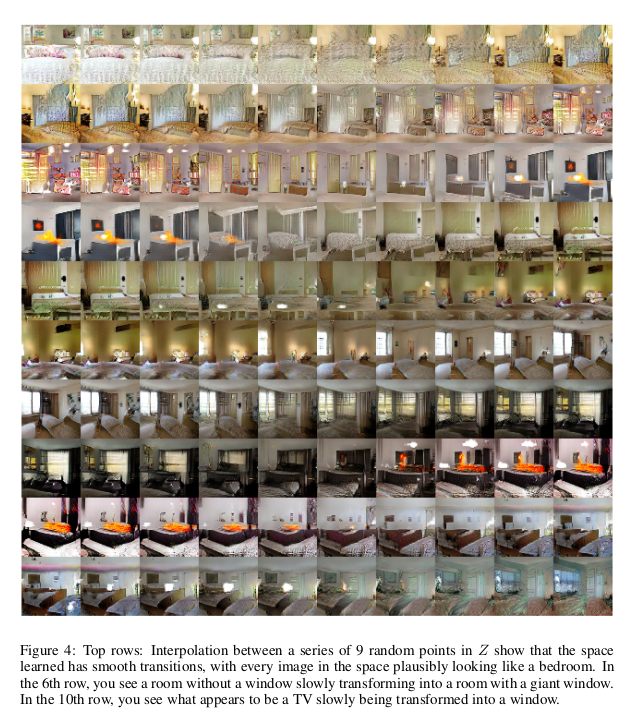

6.1 WALKING IN THE LATENT SPACE

The first experiment we did was to understand the landscape of the latent space. Walking on the manifold that is learnt can usually tell us about signs of memorization (if there are sharp transitions) and about the way in which the space is hierarchically collapsed. If walking in this latent space results in semantic changes to the image generations (such as objects being added and removed), we can reason that the model has learned relevant and interesting representations. The results are shown in Fig.4.
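
A walk through the latent space can be sketched as linear interpolation between two sampled Z points, with each intermediate point then passed to the generator. The interpolation scheme, the 100-dimensional Z, and the uniform range of [-1, 1] are assumptions made for illustration, and the generator itself is not included.

```python
import random

def interpolate(z_start, z_end, steps):
    """Return `steps` evenly spaced points on the line from z_start to z_end."""
    path = []
    for i in range(steps):
        t = i / (steps - 1)  # t runs from 0.0 to 1.0 inclusive
        path.append([(1 - t) * a + t * b for a, b in zip(z_start, z_end)])
    return path

rng = random.Random(0)
DIM = 100  # hypothetical latent dimensionality
z_a = [rng.uniform(-1, 1) for _ in range(DIM)]  # assumed uniform noise range
z_b = [rng.uniform(-1, 1) for _ in range(DIM)]

path = interpolate(z_a, z_b, steps=9)
```

Smooth changes in the images generated along `path` would be consistent with the behaviour described above, while abrupt jumps would hint at memorization.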

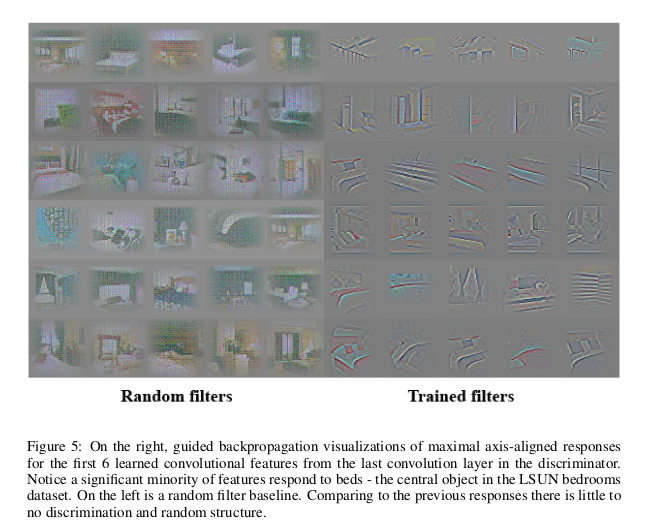

6.2 VISUALIZING THE DISCRIMINATOR FEATURES

Previous work has demonstrated that supervised training of CNNs on large image datasets results in very powerful learned features (Zeiler & Fergus, 2014). Additionally, supervised CNNs trained on scene classification learn object detectors (Oquab et al., 2014). We demonstrate that an unsupervised DCGAN trained on a large image dataset can also learn a hierarchy of features that are interesting. Using guided backpropagation as proposed by Springenberg et al. (2014), we show in Fig.5 that the features learnt by the discriminator activate on typical parts of a bedroom, like beds and windows. For comparison, in the same figure, we give a baseline for randomly initialized features that are not activated on anything that is semantically relevant or interesting.

6.3 MANIPULATING THE GENERATOR REPRESENTATION

6.3.1 FORGETTING TO DRAW CERTAIN OBJECTS

In addition to the representations learnt by a discriminator, there is the question of what representations the generator learns. The quality of samples suggests that the generator learns specific object representations for major scene components such as beds, windows, lamps, doors, and miscellaneous furniture. In order to explore the form that these representations take, we conducted an experiment to attempt to remove windows from the generator completely.

On 150 samples, 52 window bounding boxes were drawn manually. On the second highest convolution layer features, logistic regression was fit to predict whether a feature activation was on a window (or not), by using the criterion that activations inside the drawn bounding boxes are positives and random samples from the same images are negatives. Using this simple model, all feature maps with weights greater than zero (200 in total) were dropped from all spatial locations. Then, random new samples were generated with and without the feature map removal. The generated images with and without the window dropout are shown in Fig.6, and interestingly, the network mostly forgets to draw windows in the bedrooms, replacing them with other objects.
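
The dropout step of this experiment can be sketched as follows. The logistic regression fit is not reproduced: the weights are toy values, "dropped" is assumed to mean setting the feature map to zero at every spatial location, and the 2 × 2 maps stand in for the real layer's activations.

```python
def drop_feature_maps(activations, weights):
    """Zero every feature map whose logistic-regression weight is greater than zero."""
    dropped = []
    for fmap, w in zip(activations, weights):
        if w > 0:
            # Positive weight: the map is predictive of "window", so remove it everywhere.
            dropped.append([[0.0] * len(row) for row in fmap])
        else:
            dropped.append([row[:] for row in fmap])  # copy unchanged
    return dropped

# Toy layer with three 2x2 feature maps and one learned weight per map.
activations = [
    [[0.5, 0.9], [0.1, 0.4]],
    [[0.3, 0.2], [0.8, 0.6]],
    [[0.7, 0.7], [0.7, 0.7]],
]
weights = [0.8, -0.3, 0.0]  # hypothetical logistic-regression weights

edited = drop_feature_maps(activations, weights)
```

The edited activations would then be fed through the remaining generator layers to produce the samples without windows.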

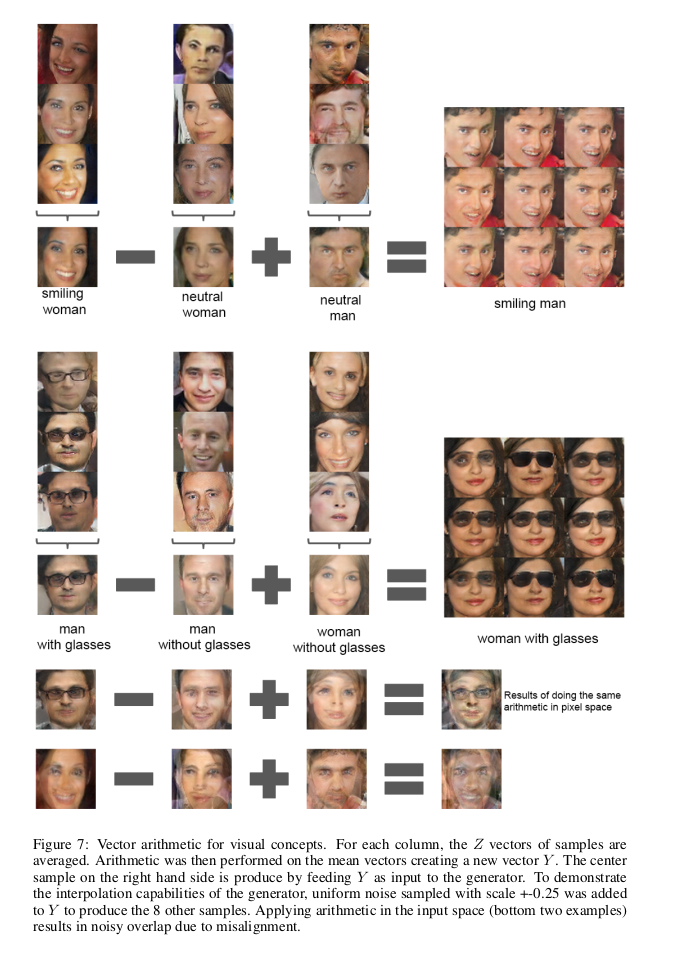

6.3.2 VECTOR ARITHMETIC ON FACE SAMPLES

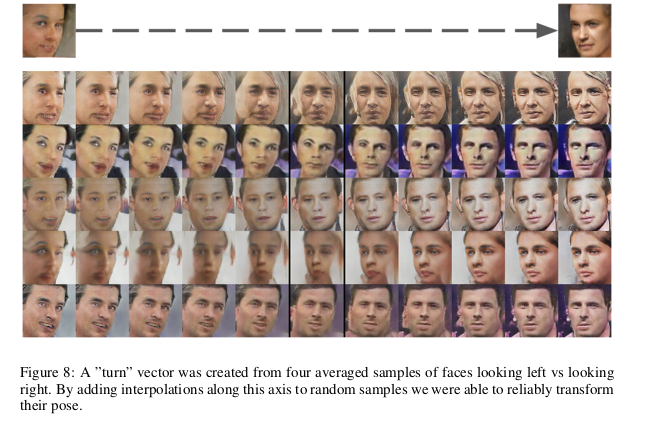

In the context of evaluating learned representations of words, Mikolov et al. (2013) demonstrated that simple arithmetic operations revealed rich linear structure in representation space. One canonical example demonstrated that vector("King") - vector("Man") + vector("Woman") resulted in a vector whose nearest neighbor was the vector for Queen. We investigated whether similar structure emerges in the Z representation of our generators. We performed similar arithmetic on the Z vectors of sets of exemplar samples for visual concepts. Experiments working on only single samples per concept were unstable, but averaging the Z vector for three exemplars showed consistent and stable generations that semantically obeyed the arithmetic. In addition to the object manipulation shown in Fig. 7, we demonstrate that face pose is also modeled linearly in Z space (Fig. 8).
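
The averaging and arithmetic on Z vectors can be written out directly. The concept sets below are toy 2-dimensional vectors with three exemplars each, named generically because the specific visual concepts appear only in the figures; the resulting vector would be passed to the generator, which is not included.

```python
def mean_vector(vectors):
    """Average a set of exemplar Z vectors element-wise."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]

def concept_arithmetic(set_a, set_b, set_c):
    """Compute mean(A) - mean(B) + mean(C) over three sets of exemplar Z vectors."""
    a, b, c = mean_vector(set_a), mean_vector(set_b), mean_vector(set_c)
    return [x - y + z for x, y, z in zip(a, b, c)]

# Three toy exemplars per concept (real Z vectors would be much longer).
concept_a = [[1.0, 0.0], [2.0, 0.0], [3.0, 0.0]]
concept_b = [[1.0, 1.0], [1.0, 1.0], [1.0, 1.0]]
concept_c = [[0.0, 2.0], [0.0, 4.0], [0.0, 6.0]]

z_new = concept_arithmetic(concept_a, concept_b, concept_c)
print(z_new)  # [1.0, 3.0]
```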

These demonstrations suggest interesting applications can be developed using Z representations learned by our models. It has been previously demonstrated that conditional generative models can learn to convincingly model object attributes like scale, rotation, and position (Dosovitskiy et al., 2014). This is to our knowledge the first demonstration of this occurring in purely unsupervised models. Further exploring and developing the above-mentioned vector arithmetic could dramatically reduce the amount of data needed for conditional generative modeling of complex image distributions.

7 CONCLUSION AND FUTURE WORK

We propose a more stable set of architectures for training generative adversarial networks and we give evidence that adversarial networks learn good representations of images for supervised learning and generative modeling. There are still some forms of model instability remaining: we noticed that as models are trained longer they sometimes collapse a subset of filters to a single oscillating mode. Extending this framework to other domains such as video (for frame prediction) and audio (pre-trained features for speech synthesis) should be very interesting. Further investigations into the properties of the learnt latent space would be interesting as well.

ACKNOWLEDGMENTS

We are fortunate and thankful for all the advice and guidance we have received during this work, especially that of Ian Goodfellow, Tobias Springenberg, Arthur Szlam and Durk Kingma. Additionally we’d like to thank all of the folks at indico for providing support, resources, and conversations, especially the two other members of the indico research team, Dan Kuster and Nathan Lintz. Finally, we’d like to thank Nvidia for donating a Titan-X GPU used in this work.
