Softmax regression: implementation from scratch

From regression to multi-class classification: squared loss, calibrated scale

The outputs should behave like probabilities (non-negative, summing to 1)
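
The "non-negative, summing to 1" requirement is what the softmax operation provides. As a reminder, the standard definition is written out below; the symbols $o_i$ (unnormalized output for class $i$) and $q$ (number of classes) are notation chosen for this sketch, not taken from the notes.

$$
\hat{y}_i = \operatorname{softmax}(\mathbf{o})_i = \frac{\exp(o_i)}{\sum_{k=1}^{q} \exp(o_k)}, \qquad \hat{y}_i > 0, \qquad \sum_{i=1}^{q} \hat{y}_i = 1
$$

Because $\exp$ is increasing, the class with the largest $o_i$ also gets the largest $\hat{y}_i$, so taking the argmax before or after softmax gives the same prediction.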

Cross-entropy can be used to measure the difference between two probability distributions
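
For reference, the usual definition of cross-entropy between a label distribution $\mathbf{y}$ and a prediction $\hat{\mathbf{y}}$ over $q$ classes is sketched below (the symbols are notation assumed here). With a one-hot label whose true class is $c$, only one term of the sum survives.

$$
H(\mathbf{y}, \hat{\mathbf{y}}) = -\sum_{i=1}^{q} y_i \log \hat{y}_i = -\log \hat{y}_c
$$

For example, if the model assigns probability $\hat{y}_c = 0.5$ to the true class, the loss is $-\log 0.5 = \log 2 \approx 0.693$; a perfect prediction $\hat{y}_c = 1$ gives a loss of 0.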

From regression to multi-class classification: calibrated scale

    %matplotlib inline
    import torch
    import torchvision
    from torch.utils import data
    from torchvision import transforms
    from d2l import torch as d2l

    # Download the dataset
    trans = transforms.ToTensor()
    mnist_train = torchvision.datasets.FashionMNIST(
        root='../data', train=True, transform=trans, download=True)
    mnist_test = torchvision.datasets.FashionMNIST(
        root='../data', train=False, transform=trans, download=True)

    len(mnist_train), len(mnist_test)

    mnist_train[0][0].shape

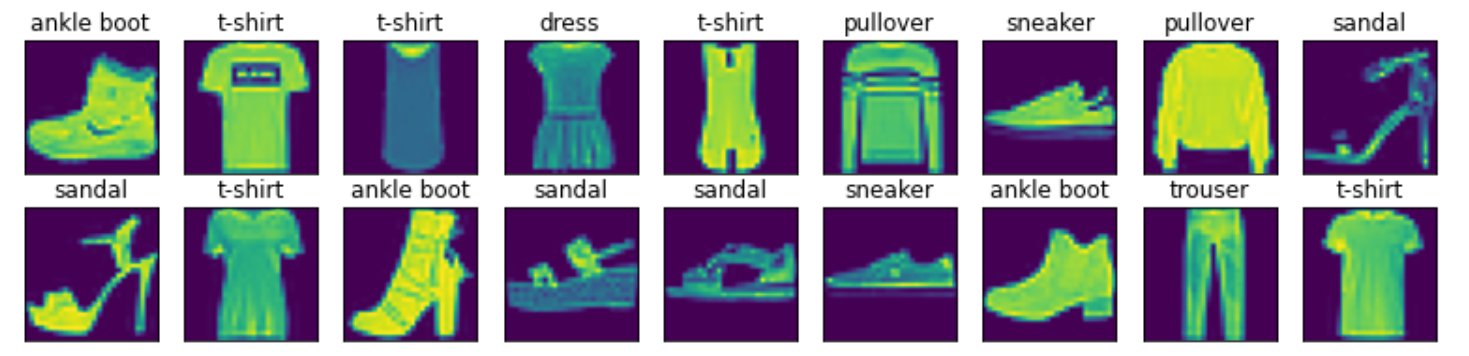

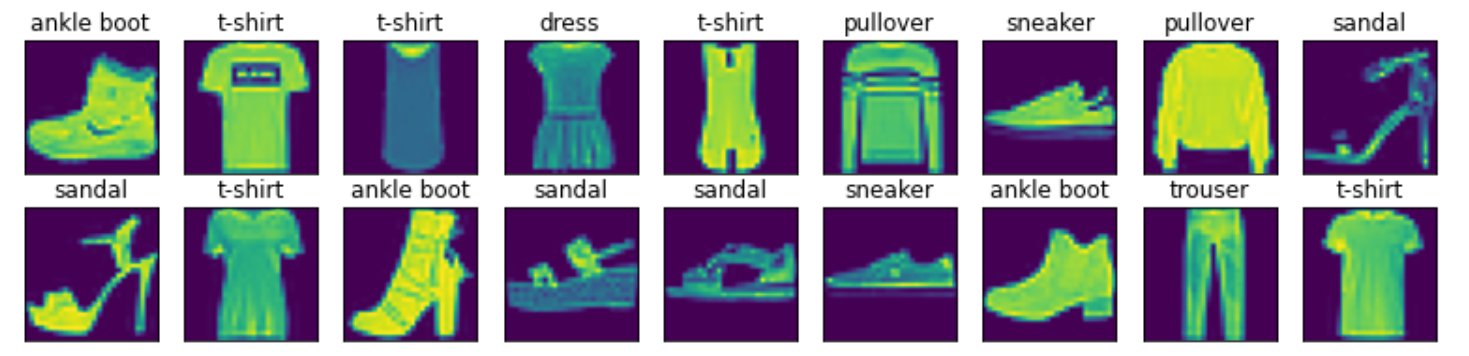

Visualizing the dataset

    def show_images(imgs, num_rows, num_cols, titles=None, scale=1.5):  #@save
        """Plot a list of images."""
        figsize = (num_cols * scale, num_rows * scale)
        _, axes = d2l.plt.subplots(num_rows, num_cols, figsize=figsize)
        axes = axes.flatten()
        for i, (ax, img) in enumerate(zip(axes, imgs)):
            if torch.is_tensor(img):
                # Image tensor
                ax.imshow(img.numpy())
            else:
                # PIL image
                ax.imshow(img)
            ax.axes.get_xaxis().set_visible(False)
            ax.axes.get_yaxis().set_visible(False)
            if titles:
                ax.set_title(titles[i])
        return axes

    X, y = next(iter(data.DataLoader(mnist_train, batch_size=18)))
    # get_fashion_mnist_labels is not defined in these notes, so the d2l version is used
    show_images(X.reshape(18, 28, 28), 2, 9,
                titles=d2l.get_fashion_mnist_labels(y));

    # Read the data one minibatch at a time, each of size batch_size
    batch_size = 256

    def get_dataloader_workers():
        """Use 4 worker processes to read the data."""
        return 4

    train_iter = data.DataLoader(mnist_train, batch_size, shuffle=True,
                                 num_workers=get_dataloader_workers())

    timer = d2l.Timer()  # time one full pass over the training data
    for X, y in train_iter:
        continue
    f'{timer.stop():.2f} sec'

    import torch
    from IPython import display
    from d2l import torch as d2l

    batch_size = 256
    train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

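    # Initialize the weights and biases.
    # Each 28x28 image is flattened and treated as a vector of length 784.
    # The dataset has 10 classes, so the network output dimension is 10.
    num_inputs = 784
    num_outputs = 10

    # Weights w: drawn from a normal distribution with mean 0 and standard
    # deviation 0.01, shape (784, 10), with gradient tracking enabled
    w = torch.normal(0, 0.01, size=(num_inputs, num_outputs), requires_grad=True)
    # One bias per output (10 in total), initialized to 0
    b = torch.zeros(num_outputs, requires_grad=True)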

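    # Given a matrix X, we can sum along either axis; this is just a small example.
    # keepdim=True keeps the summed axis with size 1, giving shapes (1, 3) and (2, 1).
    X = torch.tensor([[1, 2, 3], [4, 5, 6]])
    X.sum(0, keepdim=True), X.sum(1, keepdim=True)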

    # Implement softmax in PyTorch
    def softmax(X):
        X_exp = torch.exp(X)  # exponentiate every element
        partition = X_exp.sum(1, keepdim=True)  # sum along dimension 1, i.e. one total per row
        # Broadcasting divides every element of a row by that row's sum
        # (the same idea as sweep in R)
        return X_exp / partition

    # Every element becomes non-negative and, as a probability distribution
    # requires, every row sums to 1
    X = torch.normal(0, 1, (2, 5))
    X_prob = softmax(X)
    X, X_prob, X_prob.sum(1)
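
One practical caveat with the direct formula: exponentiating a large score overflows, and in floating point the resulting inf / inf is nan. A common remedy, sketched below in plain Python so it can be checked by hand, is to subtract the row maximum before exponentiating, which leaves the result unchanged mathematically. The name `stable_softmax` is hypothetical and is not part of the notes or of d2l.

```python
import math

def stable_softmax(row):
    """Softmax of one row of scores (hypothetical helper, plain Python)."""
    m = max(row)                              # largest score in the row
    exps = [math.exp(v - m) for v in row]     # every exponent is <= 0, so no overflow
    total = sum(exps)
    return [e / total for e in exps]

# math.exp(1000.0) alone would raise OverflowError; the shifted version is fine.
probs = stable_softmax([1000.0, 1001.0, 1002.0])
print(probs)
print(sum(probs))
```

Shifting all scores by the same constant does not change the softmax output, so `stable_softmax([0.0, 1.0, 2.0])` gives the same probabilities.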

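    # Implement the softmax regression model
    def net(X):
        # -1 lets PyTorch infer that dimension, which here is the batch size
        # (usually 256); w.shape[0] is 784, so X is reshaped to (256, 784)
        return softmax(torch.matmul(X.reshape((-1, w.shape[0])), w) + b)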

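    # Cross-entropy loss.
    # Create y_hat holding the predicted probabilities of 2 samples over 3 classes,
    # and use the labels y as indices into y_hat.
    y = torch.tensor([0, 2])
    y_hat = torch.tensor([[0.1, 0.3, 0.6], [0.3, 0.2, 0.5]])
    # Picks y_hat[0, 0] and y_hat[1, 2]: for sample 0 the true class is 0,
    # for sample 1 the true class is 2
    y_hat[[0, 1], y]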

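    # Define the cross-entropy loss function
    def cross_entrppy(y_hat, y):
        # Negative log of the probability assigned to each sample's true class
        return -torch.log(y_hat[range(len(y_hat)), y])

    cross_entrppy(y_hat, y)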
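
To check the indexing logic by hand, the same computation can be sketched without any framework. The helper name `cross_entropy_rows` is hypothetical; the numbers are the `y_hat` and `y` from the example above.

```python
import math

def cross_entropy_rows(y_hat, y):
    """Per-sample loss: minus the log of the probability given to the true class."""
    return [-math.log(row[label]) for row, label in zip(y_hat, y)]

y_hat = [[0.1, 0.3, 0.6], [0.3, 0.2, 0.5]]
y = [0, 2]
losses = cross_entropy_rows(y_hat, y)
print([round(v, 4) for v in losses])  # [2.3026, 0.6931]
```

The first sample is penalized heavily because the model gave its true class only probability 0.1, while the second sample, with probability 0.5 on the true class, costs log 2.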

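    # Compare the predicted classes with the true labels y
    def accuracy(y_hat, y):
        """Count the number of correct predictions."""
        # If y_hat is a 2-D matrix with more than one column (one score per class)
        if len(y_hat.shape) > 1 and y_hat.shape[1] > 1:
            # Replace each row by the index of its largest value, i.e. the predicted class
            y_hat = y_hat.argmax(axis=1)
        # Cast y_hat to the dtype of y so the two can be compared
        cmp = y_hat.type(y.dtype) == y
        # Cast the boolean result to the dtype of y and sum it
        return float(cmp.type(y.dtype).sum())

    accuracy(y_hat, y) / len(y)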
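
The argmax-then-compare step can be traced on the two-sample example with a framework-free sketch. `count_correct` is a hypothetical name used only here.

```python
def count_correct(y_hat, y):
    """Count rows whose highest-scoring class equals the label."""
    # Index of the largest entry in each row = predicted class
    predictions = [max(range(len(row)), key=row.__getitem__) for row in y_hat]
    return sum(int(p == t) for p, t in zip(predictions, y))

y_hat = [[0.1, 0.3, 0.6], [0.3, 0.2, 0.5]]
y = [0, 2]
correct = count_correct(y_hat, y)
print(correct, correct / len(y))  # 1 0.5
```

Both rows predict class 2; only the second label is 2, so one of the two predictions is correct.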

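    # Evaluate the accuracy of any model net
    def evaluate_accuracy(net, data_iter):
        """Compute the accuracy of the model on the given dataset."""
        if isinstance(net, torch.nn.Module):
            net.eval()  # switch to evaluation mode
        metric = Accumulator(2)  # (number of correct predictions, number of predictions)
        with torch.no_grad():  # gradients are not needed for evaluation
            for X, y in data_iter:
                # First slot: correctly classified samples; second slot: total samples
                metric.add(accuracy(net(X), y), y.numel())
        # Correct predictions divided by total samples, i.e. the accuracy
        return metric[0] / metric[1]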

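    # Implement Accumulator. In evaluate_accuracy an instance with 2 variables stores
    # the number of correct predictions and the total number of predictions.
    class Accumulator:
        """Accumulate sums over n variables."""
        def __init__(self, n):
            self.data = [0.0] * n  # one running total per variable

        def add(self, *args):
            self.data = [a + float(b) for a, b in zip(self.data, args)]

        def reset(self):
            self.data = [0.0] * len(self.data)

        def __getitem__(self, idx):
            return self.data[idx]

    evaluate_accuracy(net, test_iter)  # before training, about the same as random guessing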

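    def train_epoch_ch3(net, train_iter, loss, updater):
        """Train the model for one epoch."""
        if isinstance(net, torch.nn.Module):
            net.train()  # switch to training mode
        # Sum of training loss, number of correct predictions, number of samples
        metric = Accumulator(3)
        for X, y in train_iter:
            y_hat = net(X)
            l = loss(y_hat, y)
            if isinstance(updater, torch.optim.Optimizer):
                # Built-in PyTorch optimizer and loss (loss already averaged over the batch)
                updater.zero_grad()
                l.backward()
                updater.step()
                metric.add(float(l) * len(y), accuracy(y_hat, y), y.numel())
            else:
                # Custom updater and per-sample loss
                l.sum().backward()
                updater(X.shape[0])
                metric.add(float(l.sum()), accuracy(y_hat, y), y.numel())
        # Average training loss and training accuracy
        return metric[0] / metric[2], metric[1] / metric[2]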

    class Animator:
        """Plot data incrementally as an animation."""
        def __init__(self, xlabel=None, ylabel=None, legend=None, xlim=None,
                     ylim=None, xscale='linear', yscale='linear',
                     fmts=('-', 'm--', 'g-.', 'r:'), nrows=1, ncols=1,
                     figsize=(3.5, 2.5)):
            # Incrementally plot multiple lines
            if legend is None:
                legend = []
            d2l.use_svg_display()
            self.fig, self.axes = d2l.plt.subplots(nrows, ncols, figsize=figsize)
            if nrows * ncols == 1:
                self.axes = [self.axes, ]
            # Use a lambda to capture the arguments
            self.config_axes = lambda: d2l.set_axes(
                self.axes[0], xlabel, ylabel, xlim, ylim, xscale, yscale, legend)
            self.X, self.Y, self.fmts = None, None, fmts

        def add(self, x, y):
            # Add multiple data points to the figure
            if not hasattr(y, "__len__"):
                y = [y]
            n = len(y)
            if not hasattr(x, "__len__"):
                x = [x] * n
            if not self.X:
                self.X = [[] for _ in range(n)]
            if not self.Y:
                self.Y = [[] for _ in range(n)]
            for i, (a, b) in enumerate(zip(x, y)):
                if a is not None and b is not None:
                    self.X[i].append(a)
                    self.Y[i].append(b)
            self.axes[0].cla()
            for x, y, fmt in zip(self.X, self.Y, self.fmts):
                self.axes[0].plot(x, y, fmt)
            self.config_axes()
            display.display(self.fig)
            display.clear_output(wait=True)

    def train_ch3(net, train_iter, test_iter, loss, num_epochs, updater):
        """Train the model."""
        animator = Animator(xlabel='epoch', xlim=[1, num_epochs], ylim=[0.3, 0.9],
                            legend=['train loss', 'train acc', 'test acc'])
        for epoch in range(num_epochs):
            train_metrics = train_epoch_ch3(net, train_iter, loss, updater)
            test_acc = evaluate_accuracy(net, test_iter)
            animator.add(epoch + 1, train_metrics + (test_acc,))
        train_loss, train_acc = train_metrics

    # Minibatch stochastic gradient descent to optimize the model's loss function
    lr = 0.1

    def updater(batch_size):
        return d2l.sgd([w, b], lr, batch_size)
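
As a rough illustration of what one such update does, the sketch below applies the minibatch SGD rule to plain Python numbers. It assumes, as in the book's from-scratch version, that the gradient was accumulated from the summed batch loss and is therefore divided by the batch size; `sgd_step` and the example values are hypothetical.

```python
def sgd_step(params, grads, lr, batch_size):
    """One minibatch SGD update: param <- param - lr * grad / batch_size."""
    # grads are assumed to come from the loss summed over the batch,
    # so dividing by batch_size turns them into an average gradient
    return [p - lr * g / batch_size for p, g in zip(params, grads)]

params = [1.0, -2.0]
grads = [256.0, -512.0]   # made-up summed gradients for a batch of 256
new_params = sgd_step(params, grads, lr=0.1, batch_size=256)
print(new_params)
```

This is why the custom-updater branch of the training loop calls `l.sum().backward()` and then passes the batch size to the updater: the division by `batch_size` keeps the step size independent of how many samples are in the batch.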

    # Train the model for 10 epochs
    num_epochs = 10
    train_ch3(net, train_iter, test_iter, cross_entrppy, num_epochs, updater)

    def predict_ch3(net, test_iter, n=6):
        """Predict labels."""
        for X, y in test_iter:
            break  # take only the first batch
        trues = d2l.get_fashion_mnist_labels(y)
        preds = d2l.get_fashion_mnist_labels(net(X).argmax(axis=1))
        # Title of each image: true label on the first line, prediction on the second
        titles = [true + '\n' + pred for true, pred in zip(trues, preds)]
        d2l.show_images(X[0:n].reshape((n, 28, 28)), 1, n, titles=titles[0:n])

    predict_ch3(net, test_iter)

Concise implementation of softmax regression

    # Concise implementation of softmax regression,
    # using the high-level API of the deep learning framework
    import torch
    from torch import nn
    from d2l import torch as d2l

    batch_size = 256
    train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

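    # The output layer of softmax regression is a fully connected layer.
    # PyTorch does not implicitly reshape its inputs, so a flatten layer is placed
    # before the linear layer to reshape the network input.
    # nn.Flatten() keeps dimension 0 (the batch) and flattens the remaining
    # dimensions into a vector.
    net = nn.Sequential(nn.Flatten(), nn.Linear(784, 10))

    def init_weights(m):
        if type(m) == nn.Linear:
            # For a linear layer, draw the weights from a normal distribution
            # with mean 0 and standard deviation 0.01
            nn.init.normal_(m.weight, std=0.01)

    net.apply(init_weights)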

    # Pass the unnormalized predictions to the cross-entropy loss, which computes
    # softmax and its logarithm together
    loss = nn.CrossEntropyLoss()
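
Computing softmax and its logarithm in one step matters numerically. A minimal sketch of the underlying idea (the log-sum-exp trick), written in plain Python for one sample, is given below. It illustrates the technique only and is not the library's actual implementation; `cross_entropy_from_logits` is a hypothetical name.

```python
import math

def cross_entropy_from_logits(logits, label):
    """-log softmax(logits)[label], computed without forming the probabilities."""
    m = max(logits)
    # log(sum(exp(o_k))) = m + log(sum(exp(o_k - m))); the shifted exponents cannot overflow
    log_sum_exp = m + math.log(sum(math.exp(v - m) for v in logits))
    return log_sum_exp - logits[label]

print(cross_entropy_from_logits([1.0, 2.0, 3.0], 2))
# Extreme scores: a naive exp(1000.0) would overflow, but this stays finite
print(cross_entropy_from_logits([1000.0, 0.0, -1000.0], 0))
```

For moderate scores the result agrees with taking softmax first and then the negative log, which is what the from-scratch version does; the difference only shows up when scores are large in magnitude.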

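    # trainer was not defined in these notes; minibatch SGD with the same
    # learning rate (0.1) as the from-scratch version is used here
    trainer = torch.optim.SGD(net.parameters(), lr=0.1)

    num_epochs = 10
    d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, trainer)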

    # Reuse predict_ch3 as defined in the from-scratch section, now with the concise net
    predict_ch3(net, test_iter)