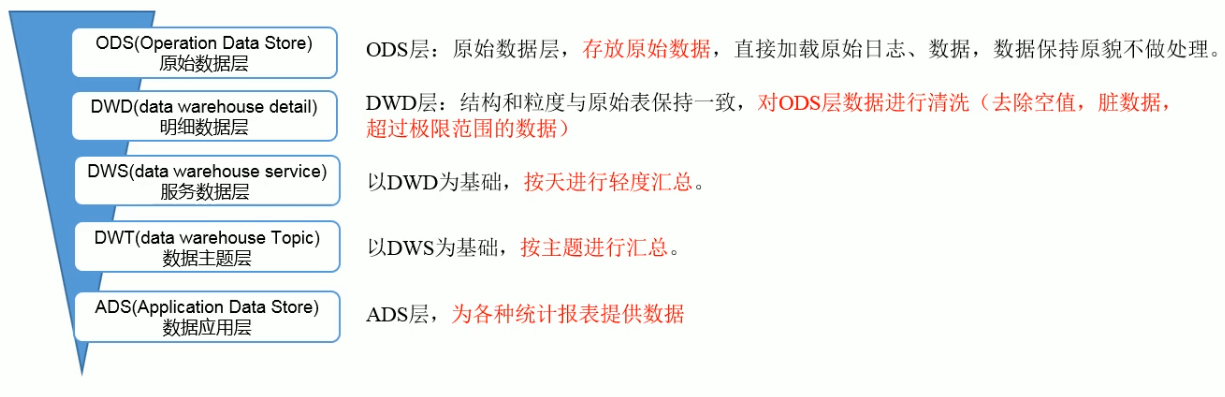

ods层

创建日志启动表

drop table if exists ods_start_log;create external table ods_start_log (`line` string) partitioned by (`dt` string) stored as inputformat 'com.hadoop.mapred.DeprecatedLzoTextInputFormat' outputformat 'org.apache.hadoop.hive.ql.io.HiveIgnoreKeyTextOutputFormat' location '/warehouse/gmall/ods/ods_start_log';

数据导入表中

load data inpath '/origin_data/gmall/log/topic_start/2020-11-04' into table gmall.ods_start_log partition(dt='2020-11-04');

查询数据

hive (gmall)> select * from ods_start_log limit 2;

为lzo文件创建索引

[root@hadoop102 hadoop-2.8.2]# hadoop jar /opt/module/hadoop-2.8.2/share/hadoop/common/hadoop-lzo-0.4.20.jar com.hadoop.compression.lzo.DistributedLzoIndexer /warehouse/gmall/ods/ods_start_log/dt=2020-11-04

日志事件表同上。

比较复杂的事情(例如反复导数据)是可以通过脚本来完成的,没有什么事情是一个脚本解决不了的。

#! /bin/bashhive=/opt/module/hive-2.3.7/bin/hiveapp=gmalldo_date=$1sql="load data inpath '/origin_data/${app}/log/topic_start/$do_date' into table ${app}.ods_start_log partition(dt='$do_date');load data inpath '/origin_data/${app}/log/topic_event/$do_date' into table ${app}.ods_event_log partition(dt='$do_date');"$hive -e "$sql"hadoop jar /opt/module/hadoop-2.8.2/share/hadoop/common/hadoop-lzo-0.4.20.jar com.hadoop.compression.lzo.DistributedLzoIndexer /warehouse/$app/ods/ods_start_log/dt=$do_datehadoop jar /opt/module/hadoop-2.8.2/share/hadoop/common/hadoop-lzo-0.4.20.jar com.hadoop.compression.lzo.DistributedLzoIndexer /warehouse/$app/ods/ods_event_log/dt=$do_date

dwd层

先针对启动日志的字段创建一个bean对象,即创建一个有对应字段的表。

drop table if exists dwd_start_log;CREATE EXTERNAL TABLE dwd_start_log( `mid_id` string, `user_id` string, `version_code` string, `version_name` string, `lang` string, `source` string, `os` string, `area` string, `model` string, `brand` string, `sdk_version` string, `gmail` string, `height_width` string, `app_time` string, `network` string, `lng` string, `lat` string, `entry` string, `open_ad_type` string, `action` string, `loading_time` string, `detail` string, `extend1` string )PARTITIONED BY (dt string) stored as parquet location '/warehouse/gmall/dwd/dwd_start_log/' TBLPROPERTIES('parquet.compression'='lzo');

导入数据到表

insert overwrite table dwd_start_log PARTITION (dt='2020-11-04') select get_json_object(line,'$.mid') mid_id, get_json_object(line,'$.uid') user_id, get_json_object(line,'$.vc') version_code, get_json_object(line,'$.vn') version_name, get_json_object(line,'$.l') lang, get_json_object(line,'$.sr') source, get_json_object(line,'$.os') os, get_json_object(line,'$.ar') area, get_json_object(line,'$.md') model, get_json_object(line,'$.ba') brand, get_json_object(line,'$.sv') sdk_version, get_json_object(line,'$.g') gmail, get_json_object(line,'$.hw') height_width, get_json_object(line,'$.t') app_time, get_json_object(line,'$.nw') network, get_json_object(line,'$.ln') lng, get_json_object(line,'$.la') lat, get_json_object(line,'$.entry') entry, get_json_object(line,'$.open_ad_type') open_ad_type, get_json_object(line,'$.action') action, get_json_object(line,'$.loading_time') loading_time, get_json_object(line,'$.detail') detail, get_json_object(line,'$.extend1') extend1 from ods_start_log where dt='2020-11-04';