环境

| 系统 | CentOS-7-x86_64-DVD-1810 |

|---|---|

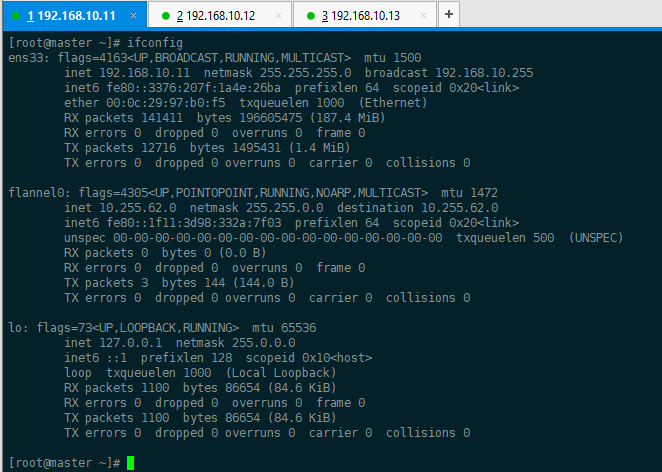

| master | 192.168.10.11 |

| node1 | 192.168.10.12 |

| node2 | 192.168.10.13 |

1 Configure the yum repositories

1.1 Upload the k8s package

[root@localhost ~]# ll

total 182352

-rw-------. 1 root root 1603 Jan 4 16:34 anaconda-ks.cfg

-rw-r--r--. 1 root root 186724113 Jan 4 16:44 k8s-package.tar.gz

1.2 Copy the package to the other two nodes as well

[root@localhost ~]# scp k8s-package.tar.gz 192.168.10.11:/root

The authenticity of host '192.168.10.11 (192.168.10.11)' can't be established.

ECDSA key fingerprint is SHA256:ykusvjfYzIAWlSvvb73Fuj3KOwx5P9T/0mjTzONV1dM.

ECDSA key fingerprint is MD5:54:60:5d:6e:88:3c:98:e9:68:a4:4d:78:52:7b:78:db.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added '192.168.10.11' (ECDSA) to the list of known hosts.

root@192.168.10.11's password:

k8s-package.tar.gz 100% 178MB 25.4MB/s 00:07

[root@localhost ~]# scp k8s-package.tar.gz 192.168.10.12:/root

The authenticity of host '192.168.10.12 (192.168.10.12)' can't be established.

ECDSA key fingerprint is SHA256:Dh3mM0sO1ctA1r6DEoluv3QYSlimGtFdTAtjwF9iSCQ.

ECDSA key fingerprint is MD5:2c:ef:f5:ba:0f:c5:be:9a:22:8a:ff:63:ce:f8:ac:5d.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added '192.168.10.12' (ECDSA) to the list of known hosts.

root@192.168.10.12's password:

k8s-package.tar.gz 100% 178MB 44.5MB/s 00:04

1.3 Extract the package

[root@localhost ~]# tar zxvf k8s-package.tar.gz

1.4 Move the default repo files out of the way and create new repos

[root@localhost ~]# mv /etc/yum.repos.d/CentOS-* /opt

[root@localhost ~]# mount /dev/cdrom /mnt

mount: /dev/sr0 is write-protected, mounting read-only

k8s package repo (local directory)

[root@localhost ~]# cat /etc/yum.repos.d/k8s-poackage.repo

[k8s-package]

name=k8s-package

baseurl=file:///root/k8s-package

enabled=1

gpgcheck=0

CentOS 7 repo (local DVD mounted at /mnt)

[root@localhost ~]# cat /etc/yum.repos.d/centos7.repo

[centos7]

name=CentOS7

baseurl=file:///mnt

enabled=1

gpgcheck=0

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-CentOS-7
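As a rough sketch, both repo files could also be generated by a small helper instead of being typed by hand. The function name `write_repo` and its argument order are made up for this illustration; the repo IDs and base URLs are the ones shown above.

```shell
# Hypothetical helper: write a minimal yum repo file with GPG checking disabled.
# $1: repo id, $2: baseurl, $3: output file
write_repo() {
  cat > "$3" <<EOF
[$1]
name=$1
baseurl=$2
enabled=1
gpgcheck=0
EOF
}

# Example calls (run as root on a real host):
# write_repo k8s-package file:///root/k8s-package /etc/yum.repos.d/k8s-package.repo
# write_repo centos7 file:///mnt /etc/yum.repos.d/centos7.repo
```

After writing the files, `yum clean all` followed by `yum repolist` is a quick way to confirm that yum sees both repos.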

1.5 Sync the repo configuration to all nodes

[root@localhost ~]# scp /etc/yum.repos.d/* 192.168.10.12:/etc/yum.repos.d/

root@192.168.10.12's password:

centos7.repo 100% 117 69.8KB/s 00:00

k8s-poackage.repo 100% 85 4.0KB/s 00:00

[root@localhost ~]# scp /etc/yum.repos.d/* 192.168.10.13:/etc/yum.repos.d/

The authenticity of host '192.168.10.13 (192.168.10.13)' can't be established.

ECDSA key fingerprint is SHA256:yuBGVPT+mEmYaD5mGSREQh9E11btflfZZKrYoDWLofs.

ECDSA key fingerprint is MD5:9e:ac:5a:7c:34:ab:11:9e:b3:1d:76:3a:77:74:3b:5c.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added '192.168.10.13' (ECDSA) to the list of known hosts.

root@192.168.10.13's password:

centos7.repo 100% 117 40.1KB/s 00:00

k8s-poackage.repo 100% 85 60.1KB/s 00:00

[root@localhost ~]#
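The two scp calls follow the same pattern, so they can be wrapped in a loop. This is only a sketch: `NODES`, `DRY_RUN`, and `sync_repos` are names invented here, and with the default `DRY_RUN=1` the function just prints the commands it would run.

```shell
# Hypothetical helper: copy the repo files to each node.
# With DRY_RUN=1 (the default here) it only prints the scp commands.
NODES="192.168.10.12 192.168.10.13"
DRY_RUN=${DRY_RUN:-1}

sync_repos() {
  for node in $NODES; do
    if [ "$DRY_RUN" = 1 ]; then
      echo "scp /etc/yum.repos.d/*.repo $node:/etc/yum.repos.d/"
    else
      scp /etc/yum.repos.d/*.repo "$node:/etc/yum.repos.d/"
    fi
  done
}

sync_repos
```

Setting `DRY_RUN=0` before the call performs the real copy; each scp still prompts for the root password unless SSH keys have been set up.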

2 Install the k8s components on every node

yum install -y kubernetes etcd flannel ntp

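Notes:

- flannel: provides Docker with a configurable virtual overlay network so that containers on different physical hosts can reach each other directly. Flannel runs an agent, flanneld, on every host; the agent leases a subnet to that host from a preconfigured address space. Flannel stores its network configuration in etcd.

- ntp: synchronizes the clocks of all nodes in the container cloud platform. The node times must stay consistent.

- kubernetes: contains the server-side and client-side packages.

- etcd: the package for the etcd service.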

3 Configure etcd and the master node

3.1 Configure the hostname

[root@localhost ~]# echo master> /etc/hostname

[root@localhost ~]# echo etcd>/etc/hostname

[root@localhost ~]# echo node1 > /etc/hostname

[root@localhost ~]# hostnamectl

[root@localhost ~]# echo node2 > /etc/hostname

[root@localhost ~]# hostnamectl

Run exit to close the SSH session, then log in again so that the new hostname takes effect.

3.2 Configure the hosts file

[root@master ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.10.11 master

192.168.10.11 etcd

192.168.10.12 node1

192.168.10.13 node2

[root@master ~]# scp /etc/hosts 192.168.10.12:/etc/hosts

root@192.168.10.12's password:

hosts 100% 242 120.5KB/s 00:00

[root@master ~]# scp /etc/hosts 192.168.10.13:/etc/hosts

root@192.168.10.13's password:

hosts 100% 242 30.3KB/s 00:00

[root@master ~]#
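Instead of editing the hosts file by hand, the entries can be appended with a small idempotent helper. This is a minimal sketch; `add_host` is a made-up name, and it only checks whether the host name already appears as a name on some line.

```shell
# Hypothetical helper: append "IP NAME" to a hosts file unless NAME is already listed.
# $1: IP address, $2: host name, $3: hosts file (defaults to /etc/hosts)
add_host() {
  file=${3:-/etc/hosts}
  # Scan every field after the address column for an exact match of the name.
  if awk -v name="$2" '{ for (i = 2; i <= NF; i++) if ($i == name) found = 1 } END { exit !found }' "$file"; then
    return 0
  fi
  printf '%s %s\n' "$1" "$2" >> "$file"
}

# Example calls (run as root on a real host):
# add_host 192.168.10.11 master
# add_host 192.168.10.11 etcd
# add_host 192.168.10.12 node1
# add_host 192.168.10.13 node2
```

Running the same call twice leaves the file unchanged, which makes the helper safe to reuse on every node.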

3.3 Configure the etcd node

3.3.1 Edit the configuration file

[root@master ~]# vim /etc/etcd/etcd.conf

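The settings in /etc/etcd/etcd.conf mean the following:

- ETCD_NAME="etcd": the etcd node name. If the cluster has only one etcd member, this line can stay commented out and the name defaults to default. The name is used again later.

- ETCD_DATA_DIR="/var/lib/etcd/default.etcd": the directory where etcd stores its data.

- ETCD_LISTEN_CLIENT_URLS="http://localhost:2379,http://192.168.10.11:2379": the addresses on which etcd listens for client requests, normally on port 2379. Setting the host to 0.0.0.0 makes etcd listen on all interfaces.

- ETCD_ARGS="": extra arguments to pass to etcd. Run etcd -h to list all available options.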
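For this cluster, the relevant lines of /etc/etcd/etcd.conf would look roughly like the sketch below. The listen addresses match what etcd reports serving on in the status output of section 3.3.2. ETCD_ADVERTISE_CLIENT_URLS is not discussed in this article; it is inferred from the ClientURLs value that etcd logs when it publishes itself, so treat it as an assumption.

```shell
# /etc/etcd/etcd.conf (sketch; only the lines relevant to this setup)
ETCD_NAME="etcd"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_CLIENT_URLS="http://localhost:2379,http://192.168.10.11:2379"
# Assumption: inferred from the ClientURLs value in the etcd startup log.
ETCD_ADVERTISE_CLIENT_URLS="http://192.168.10.11:2379"
```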

3.3.2 Start the service

[root@master ~]# systemctl enable etcd && systemctl start etcd && systemctl status etcd

Created symlink from /etc/systemd/system/multi-user.target.wants/etcd.service to /usr/lib/systemd/system/etcd.service.

● etcd.service - Etcd Server

Loaded: loaded (/usr/lib/systemd/system/etcd.service; enabled; vendor preset: disabled)

Active: active (running) since Sun 2020-01-05 02:06:43 CST; 12ms ago

Main PID: 36436 (etcd)

Memory: 4.4M

CGroup: /system.slice/etcd.service

└─36436 /usr/bin/etcd --name=etcd --data-dir=/var/lib/etcd/default.etcd --listen-client-urls=http://localhost:2379,http://192.168.10.11:2...

Jan 05 02:06:43 etcd etcd[36436]: 8e9e05c52164694d became leader at term 2

Jan 05 02:06:43 etcd etcd[36436]: raft.node: 8e9e05c52164694d elected leader 8e9e05c52164694d at term 2

Jan 05 02:06:43 etcd etcd[36436]: setting up the initial cluster version to 3.2

Jan 05 02:06:43 etcd etcd[36436]: set the initial cluster version to 3.2

Jan 05 02:06:43 etcd etcd[36436]: enabled capabilities for version 3.2

Jan 05 02:06:43 etcd etcd[36436]: published {Name:etcd ClientURLs:[http://192.168.10.11:2379]} to cluster cdf818194e3a8c32

Jan 05 02:06:43 etcd etcd[36436]: ready to serve client requests

Jan 05 02:06:43 etcd etcd[36436]: serving insecure client requests on 192.168.10.11:2379, this is strongly discouraged!

Jan 05 02:06:43 etcd etcd[36436]: ready to serve client requests

Jan 05 02:06:43 etcd etcd[36436]: serving insecure client requests on 127.0.0.1:2379, this is strongly discouraged!

[root@master ~]#

3.3.3 Check the etcd cluster member list

There is only one member here.

[root@master ~]# etcdctl member list

8e9e05c52164694d: name=etcd peerURLs=http://localhost:2380 clientURLs=http://192.168.10.11:2379 isLeader=true
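When this check is scripted, the leader's name can be pulled out of the member list with awk. The sketch below assumes the output format shown above (space-separated key=value fields); `leader_name` is a made-up helper name.

```shell
# Hypothetical helper: read `etcdctl member list` output on stdin
# and print the name of the member marked isLeader=true.
leader_name() {
  awk '/isLeader=true/ {
    for (i = 1; i <= NF; i++)
      if ($i ~ /^name=/) { sub(/^name=/, "", $i); print $i }
  }'
}

# Example call on a real host:
# etcdctl member list | leader_name
```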

3.4 Configure the master node

3.4.1 Edit the Kubernetes configuration file

[root@master ~]# vim /etc/kubernetes/config

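Note: the settings in /etc/kubernetes/config mean the following:

- KUBE_LOGTOSTDERR="--logtostderr=true": whether logs are written to files or to standard error (stderr).

- KUBE_LOG_LEVEL="--v=0": the log level.

- KUBE_ALLOW_PRIV="--allow-privileged=false": whether privileged containers are allowed to run; false disallows them.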

3.4.2 Edit the apiserver configuration file

[root@master ~]# vim /etc/kubernetes/apiserver

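Note: the settings in /etc/kubernetes/apiserver mean the following:

- KUBE_API_ADDRESS="--insecure-bind-address=0.0.0.0": 127.0.0.1 would listen on localhost only; 0.0.0.0 listens on all network interfaces, which is what is used here.

- KUBE_ETCD_SERVERS="--etcd-servers=http://192.168.10.11:2379": the address of the etcd service, which was started earlier.

- KUBE_SERVICE_ADDRESSES="--service-cluster-ip-range=10.254.0.0/16": the range from which Kubernetes allocates cluster IPs to Services. Pod IPs do not come from this range; they come from the flannel network configured in section 3.5.4.

- KUBE_ADMISSION_CONTROL="--admission-control=AlwaysAdmit": no restrictions; every request to the apiserver is admitted.

Admission control overview: an admission controller is essentially a piece of code. A request to the Kubernetes API is first authenticated and authorized, then passes through admission control, and only then is the operation applied to the target object.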
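Put together, the lines described above would look roughly like this in /etc/kubernetes/apiserver. This is a sketch that assumes the etcd address is this cluster's etcd host, 192.168.10.11 (see the hosts file in section 3.2); other lines of the file are left at their defaults.

```shell
# /etc/kubernetes/apiserver (sketch of the values described above)
KUBE_API_ADDRESS="--insecure-bind-address=0.0.0.0"
KUBE_ETCD_SERVERS="--etcd-servers=http://192.168.10.11:2379"
KUBE_SERVICE_ADDRESSES="--service-cluster-ip-range=10.254.0.0/16"
KUBE_ADMISSION_CONTROL="--admission-control=AlwaysAdmit"
```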

3.4.3 Edit the kube-controller-manager configuration file

[root@master ~]# vim /etc/kubernetes/controller-manager #the defaults are used here

3.4.4 Edit the kube-scheduler configuration file

scheduler /ˈskedʒ.uː.lɚ/

[root@master ~]# vim /etc/kubernetes/scheduler

[root@master ~]# cat /etc/kubernetes/scheduler

###

# kubernetes scheduler config

# default config should be adequate

# Add your own!

KUBE_SCHEDULER_ARGS="--address=0.0.0.0" #change the listen address to 0.0.0.0; the default is 127.0.0.1

[root@master ~]#

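3.5 Configure etcd and specify the Docker IP range for the container cloud

3.5.1 Background: using the etcdctl command

etcdctl reference: http://shouce.jb51.net/docker_practice/etcd/etcdctl.html

etcdctl is a command-line client for the etcd non-relational database. It provides concise commands that let users work with the etcd database directly.

etcdctl commands fall broadly into two groups: database operations and non-database operations.

Database operations cover the full CRUD lifecycle of keys and directories: Create, Read, Update, Delete.

3.5.2 Common database operations

etcd organizes keys in a hierarchical namespace, similar to directories in a file system. A key can be a bare name such as testkey, in which case it is stored under the root directory /, or it can include a directory path such as cluster1/node2/testkey, in which case the corresponding directories are created.

Examples of common operations:

set: set the value of a key

get: get the value of a key

ls: list the keys and subdirectories in a directory; by default the contents of subdirectories are not listed

update: update the value of a key that already exists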

[root@master ~]# etcdctl set mk "shen"

shen

[root@master ~]# etcdctl get mk

shen

[root@master ~]# etcdctl set /testdir/testkey "nello world"

nello world

[root@master ~]# etcdctl get /testdir/testkey

nello world

[root@master ~]# etcdctl ls

/mk

/testdir

[root@master ~]# etcdctl ls /testdir

/testdir/testkey

[root@master ~]# etcdctl update /testdir/testkey aaaa

aaaa

[root@master ~]# etcdctl get /testdir/testkey

aaaa

[root@master ~]#

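rm: delete a key

mk: create a new key only if it does not exist yet; if the key already exists, an error is reported

set: write the value whether or not the key already exists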

[root@master ~]# etcdctl rm mk

PrevNode.Value: shen

[root@master ~]#

[root@master ~]# etcdctl mk /testdir/testkey "bbbb"

Error: 105: Key already exists (/testdir/testkey) [7]

[root@master ~]# etcdctl set /testdir/testkey "mkzhennb"

mkzhennb

[root@master ~]# etcdctl get /testdir/testkey

mkzhennb

[root@master ~]#
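The difference between mk and set is handy for "create only if absent" logic in scripts. The following is a sketch only: `ensure_key` is a made-up helper, and it assumes that `etcdctl mk` exits with a non-zero status when the key already exists, as the error above suggests.

```shell
# Hypothetical helper: create KEY with VALUE only when it does not exist yet.
# Assumption: `etcdctl mk` exits non-zero when the key already exists.
# Note: any other etcdctl failure (for example etcd being down) also ends up
# in the "kept existing" branch, so this is not a full error check.
ensure_key() {
  if etcdctl mk "$1" "$2" >/dev/null 2>&1; then
    echo "created $1"
  else
    echo "kept existing $1"
  fi
}

# Example call on a real host:
# ensure_key /testdir/testkey "bbbb"
```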

mkdir: create a directory

[root@master ~]# etcdctl mkdir data

[root@master ~]# etcdctl ls /

/data

/testdir

[root@master ~]#

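3.5.3 Non-database operations

etcdctl member takes the subcommands list, add, and remove, which list, add, and remove members of the etcd cluster.

For example, after starting a local etcd instance, the members can be listed like this: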

[root@master ~]# etcdctl member list

8e9e05c52164694d: name=etcd peerURLs=http://localhost:2380 clientURLs=http://192.168.10.11:2379 isLeader=true

[root@master ~]#

3.5.4 Store the flannel network information in etcd

[root@master ~]# etcdctl mkdir /k8s/network #create the directory /k8s/network to hold the flannel network information

[root@master ~]# etcdctl set /k8s/network/config '{"Network": "10.255.0.0/16"}' #assign a value to /k8s/network/config

{"Network": "10.255.0.0/16"}

[root@master ~]# etcdctl get /k8s/network/config #check the stored value

{"Network": "10.255.0.0/16"}

[root@master ~]#

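Note: before flannel starts, a network configuration record must be added to etcd. flannel uses it to assign a virtual IP range to Docker on each minion. Because flannel overrides the address on docker0, the flannel service must start before the docker service. If docker is already running, stop docker first, then start flannel, then start docker again.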
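The flannel network (10.255.0.0/16) is a different range from the Service range given to the apiserver (10.254.0.0/16), and the two should not overlap. A minimal sketch of such a check in plain shell arithmetic follows; the helper names `ip_to_int`, `cidr_range`, and `cidr_overlap` are invented for this example.

```shell
# Convert a dotted-quad address a.b.c.d to a 32-bit integer.
ip_to_int() {
  old_ifs=$IFS; IFS=.
  set -- $1
  IFS=$old_ifs
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Print "first last": the integer bounds of CIDR block $1 (e.g. 10.254.0.0/16).
cidr_range() {
  base=$(ip_to_int "${1%/*}")
  bits=${1#*/}
  size=$(( 1 << (32 - bits) ))
  first=$(( base / size * size ))
  echo "$first $(( first + size - 1 ))"
}

# Succeed (exit 0) when the two CIDR blocks share at least one address.
cidr_overlap() {
  set -- $(cidr_range "$1") $(cidr_range "$2")
  [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
}

if cidr_overlap 10.254.0.0/16 10.255.0.0/16; then
  echo "ranges overlap"
else
  echo "ranges are disjoint"
fi
```

The same function can confirm that a leased subnet such as 10.255.62.0/24 falls inside the flannel network, since a contained block always overlaps its parent.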

3.5.5 What flannel does at startup:

- Reads the value of /k8s/network/config from etcd

- Allocates a subnet and registers it in etcd

- Writes the subnet information to /run/flannel/subnet.env

3.5.6 Configure the flannel service

[root@master ~]# vim /etc/sysconfig/flanneld

The file uses the same settings that are listed for node1 in section 4.1 (etcd endpoint http://192.168.10.11:2379, prefix /k8s/network, option --iface=ens33), as the flanneld command line in the status output below confirms.

```bash
[root@master ~]# systemctl start flanneld.service
[root@master ~]# systemctl status flanneld.service
● flanneld.service - Flanneld overlay address etcd agent
   Loaded: loaded (/usr/lib/systemd/system/flanneld.service; disabled; vendor preset: disabled)
   Active: active (running) since Sun 2020-01-05 03:03:43 CST; 6s ago
  Process: 36824 ExecStartPost=/usr/libexec/flannel/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/docker (code=exited, status=0/SUCCESS)
 Main PID: 36813 (flanneld)
   Memory: 6.5M
   CGroup: /system.slice/flanneld.service
           └─36813 /usr/bin/flanneld -etcd-endpoints=http://192.168.10.11:2379 -etcd-prefix=/k8s/network --iface=ens33

Jan 05 03:03:43 etcd systemd[1]: Starting Flanneld overlay address etcd agent…
Jan 05 03:03:43 etcd flanneld-start[36813]: I0105 03:03:43.610117 36813 main.go:132] Installing signal handlers
Jan 05 03:03:43 etcd flanneld-start[36813]: I0105 03:03:43.612647 36813 manager.go:149] Using interface with name ens33 and address 192.168.10.11
Jan 05 03:03:43 etcd flanneld-start[36813]: I0105 03:03:43.612696 36813 manager.go:166] Defaulting external address to interface address (…8.10.11)
Jan 05 03:03:43 etcd flanneld-start[36813]: I0105 03:03:43.641246 36813 local_manager.go:179] Picking subnet in range 10.255.1.0 … 10.255.255.0
Jan 05 03:03:43 etcd flanneld-start[36813]: I0105 03:03:43.767706 36813 manager.go:250] Lease acquired: 10.255.62.0/24
Jan 05 03:03:43 etcd flanneld-start[36813]: I0105 03:03:43.795171 36813 network.go:98] Watching for new subnet leases
Jan 05 03:03:43 etcd systemd[1]: Started Flanneld overlay address etcd agent.
Hint: Some lines were ellipsized, use -l to show in full.
[root@master ~]#
```

flannel log path: /var/log/messages

3.5.7 View the subnet information

The subnet information is in /run/flannel/subnet.env:

```bash
[root@master ~]# cat /run/flannel/subnet.env
FLANNEL_NETWORK=10.255.0.0/16
FLANNEL_SUBNET=10.255.62.1/24
FLANNEL_MTU=1472
FLANNEL_IPMASQ=false
[root@master ~]# cat /run/flannel/docker
DOCKER_OPT_BIP="--bip=10.255.62.1/24"
DOCKER_OPT_IPMASQ="--ip-masq=true"
DOCKER_OPT_MTU="--mtu=1472"
DOCKER_NETWORK_OPTIONS=" --bip=10.255.62.1/24 --ip-masq=true --mtu=1472"
[root@master ~]#
```
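Both files are plain KEY=value lists, so a script can source them to confirm that the Docker bridge address was derived from the flannel subnet. The helper below is a sketch; `check_bip` is a made-up name, and it assumes the two variable names shown in the output above.

```shell
# Hypothetical helper: succeed when the docker --bip option matches the flannel subnet.
# $1: path to subnet.env, $2: path to the docker options file
check_bip() {
  (
    # Source both files in a subshell so the variables do not leak out.
    . "$1"
    . "$2"
    [ "$DOCKER_OPT_BIP" = "--bip=$FLANNEL_SUBNET" ]
  )
}

# Example call on a real host:
# check_bip /run/flannel/subnet.env /run/flannel/docker && echo "bip matches"
```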

3.5.8 Start the four services on the master

[root@master ~]# systemctl restart kube-apiserver kube-controller-manager kube-scheduler flanneld

[root@master ~]# systemctl status kube-apiserver kube-controller-manager kube-scheduler flanneld

[root@master ~]# systemctl enable kube-apiserver kube-controller-manager kube-scheduler flanneld

Or use a script:

[root@master ~]# cat 11.sh

for SERVICES in kube-apiserver kube-controller-manager kube-scheduler flanneld

do

systemctl restart ${SERVICES}

systemctl status ${SERVICES}

systemctl enable ${SERVICES}

done

[root@master ~]#
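Reading four status pages by eye is easy to get wrong, so a follow-up check can report only the services that are not running. This sketch relies on `systemctl is-active --quiet`; the function name `inactive_services` is invented here.

```shell
# Hypothetical helper: print every listed service that is not active.
# Returns non-zero if at least one service is down.
inactive_services() {
  rc=0
  for svc in "$@"; do
    if ! systemctl is-active --quiet "$svc"; then
      echo "$svc"
      rc=1
    fi
  done
  return $rc
}

# Example call on the master:
# inactive_services kube-apiserver kube-controller-manager kube-scheduler flanneld
```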

4 Configure the minion1 node

4.1 Configure the node1 network

flannel is used.

[root@node1 ~]# vim /etc/sysconfig/flanneld

[root@node1 ~]# cat /etc/sysconfig/flanneld

# Flanneld configuration options

# etcd url location. Point this to the server where etcd runs

FLANNEL_ETCD_ENDPOINTS="http://192.168.10.11:2379"

# etcd config key. This is the configuration key that flannel queries

# For address range assignment

FLANNEL_ETCD_PREFIX="/k8s/network"

# Any additional options that you want to pass

FLANNEL_OPTIONS="--iface=ens33"

[root@node1 ~]#

4.2 Configure the master address and kube-proxy on node1

4.2.1 master

In /etc/kubernetes/config, set KUBE_MASTER to KUBE_MASTER="--master=http://192.168.10.11:8080"
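The same change can be made without an editor. The helper below is a minimal sketch: `set_conf_var` is a made-up name, it stores the value in double quotes, and it assumes the value contains neither `|` nor `&`, which sed would treat specially.

```shell
# Hypothetical helper: set VAR="VALUE" in a KEY=value style config file.
# $1: file, $2: variable name, $3: new value
set_conf_var() {
  if grep -q "^$2=" "$1"; then
    # Replace the existing line; write to a temp file, then move it into place.
    sed "s|^$2=.*|$2=\"$3\"|" "$1" > "$1.tmp" && mv "$1.tmp" "$1"
  else
    printf '%s="%s"\n' "$2" "$3" >> "$1"
  fi
}

# Example call on node1 (as root):
# set_conf_var /etc/kubernetes/config KUBE_MASTER "--master=http://192.168.10.11:8080"
```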

4.2.2 kube-proxy

kube-proxy is mainly responsible for implementing Services. Specifically, it handles access from pods inside the cluster to Services.

/etc/kubernetes/proxy needs no changes.

The log path is /var/log/messages

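4.3 Configure the kubelet on node1

The kubelet runs on the minion nodes.

The kubelet manages Pods, the containers in each Pod, and the containers' images and volumes.

[root@node1 ~]# vim /etc/kubernetes/kubelet

KUBELET_POD_INFRA_CONTAINER="--pod-infra-container-image=registry.access.redhat.com/rhel7/pod-infrastructure:latest"

Note: INFRA is short for infrastructure [ˈɪnfrəstrʌktʃə(r)].

KUBELET_POD_INFRA_CONTAINER specifies the image of the Pod infrastructure container. Every Pod starts one such base container. If the image is not available locally, the kubelet downloads it from the Internet. The image was originally hosted on Google's registry, which cannot be reached from mainland China because of the GFW, so Pods failed to start. Current Kubernetes packages point this setting at Red Hat's registry instead. The image can also be pushed to a private registry in advance, with this parameter pointed at that copy.

Note: https://access.redhat.com/containers/ is Red Hat's container download site.

4.4 Start the services on node1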

[root@node1 ~]# systemctl restart flanneld kube-proxy kubelet docker

[root@node1 ~]# systemctl enable flanneld kube-proxy kubelet docker

[root@node1 ~]# systemctl status flanneld kube-proxy kubelet docker

5 Configure the minion2 node

All settings are the same as on the minion1 node.

[root@node2 ~]# scp 192.168.10.12:/etc/sysconfig/flanneld /etc/sysconfig/flanneld

root@192.168.10.12's password:

flanneld 100% 370 305.1KB/s 00:00

[root@node2 ~]# scp node1:/etc/kubernetes/config /etc/kubernetes/config

The authenticity of host 'node1 (192.168.10.12)' can't be established.

ECDSA key fingerprint is SHA256:Dh3mM0sO1ctA1r6DEoluv3QYSlimGtFdTAtjwF9iSCQ.

ECDSA key fingerprint is MD5:2c:ef:f5:ba:0f:c5:be:9a:22:8a:ff:63:ce:f8:ac:5d.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'node1' (ECDSA) to the list of known hosts.

root@node1's password:

config 100% 659 76.1KB/s 00:00

[root@node2 ~]# scp node1:/etc/kubernetes/kubelet /etc/kubernetes/kubelet

root@node1's password:

kubelet 100% 613 36.1KB/s 00:00

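[root@node2 ~]# vim /etc/kubernetes/kubelet #note: KUBELET_HOSTNAME must be changed here to "--hostname-override=node2"

Start all services. The log below only shows them being enabled; as on node1, they also need to be started with systemctl restart flanneld kube-proxy kubelet docker.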

[root@node2 ~]# systemctl enable flanneld kube-proxy kubelet docker

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-proxy.service to /usr/lib/systemd/system/kube-proxy.service.

Created symlink from /etc/systemd/system/multi-user.target.wants/kubelet.service to /usr/lib/systemd/system/kubelet.service.

Created symlink from /etc/systemd/system/multi-user.target.wants/docker.service to /usr/lib/systemd/system/docker.service.

[root@node2 ~]# systemctl status flanneld kube-proxy kubelet docker

6 Summary

In this lab the four Kubernetes nodes (etcd, master, node1, node2) need 13 services in total, using 6 port numbers.

kube-proxy listens on port 10249.

kube-proxy communicates with port 8080 on the master.

| Node | Service | Port |
|---|---|---|
| etcd | etcd | 2379 |
| master | kube-apiserver | 8080 (communication port) |
| | kube-controller-manager | |
| | kube-scheduler | |
| | flanneld | |
| node1-minion | kube-proxy | 10249 |
| | kubelet | 10248 |
| | docker | |
| | flanneld | |
| node2-minion | kube-proxy | 10249 |
| | kubelet | 10248 |
| | docker | |
| | flanneld | |
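The ports in the table can be checked against what a host is actually listening on. The sketch below parses the output of `ss -lnt` and assumes its usual layout, with one header line and the local address in the fourth column; `missing_ports` is a made-up helper name.

```shell
# Hypothetical helper: read `ss -lnt` output on stdin and print each
# expected port (given as arguments) that has no listening socket.
missing_ports() {
  # The port is whatever follows the last ":" in the local address column.
  listening=$(awk 'NR > 1 { n = split($4, a, ":"); print a[n] }')
  for port in "$@"; do
    printf '%s\n' "$listening" | grep -qx "$port" || echo "$port"
  done
}

# Example calls on real hosts:
# ss -lnt | missing_ports 2379 8080      # on the master
# ss -lnt | missing_ports 10248 10249    # on node1 or node2
```

An empty result means every expected port is listening.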