Environment preparation

yum install vim wget bash-completion lrzsz nmap nc tree htop iftop net-tools ipvsadm -y

Set the hostname:

hostnamectl set-hostname master
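The kubeadm preflight check warns when a node's hostname cannot be resolved. One common way to avoid that is to map each node name to its address in /etc/hosts on every node. The following is a minimal sketch; `add_hosts_entry` is a hypothetical helper, not part of any tool used here, and the addresses you pass in are your own.

```shell
# Hypothetical helper: append "IP NAME" to a hosts file unless NAME is
# already mapped on a non-comment line.
# Usage: add_hosts_entry IP NAME [FILE]   (FILE defaults to /etc/hosts)
add_hosts_entry() (
    ip="$1"; name="$2"; file="${3:-/etc/hosts}"
    # awk exits 0 only when NAME appears as a hostname field (column 2 onward).
    if ! awk -v n="$name" '
            $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }' "$file" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
)
```

For example, `add_hosts_entry 192.168.31.90 master` run as root adds the mapping once; running it again leaves the file unchanged.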

systemctl disable firewalld.service

systemctl stop firewalld.service

setenforce 0

Disable swap

swapoff -a

sed -i 's/.*swap.*/#&/' /etc/fstab
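Before editing /etc/fstab in place it can help to preview the result. The sketch below prints the file with active swap entries commented out and skips lines that are already comments, so repeated runs do not stack `#` characters. `comment_swap_entries` is a hypothetical helper name, and the pattern assumes the word `swap` appears as a whitespace-delimited field.

```shell
# Hypothetical helper: print FILE with every active swap entry commented out.
# Lines that are already comments are left untouched.
# Usage: comment_swap_entries [FILE]   (FILE defaults to /etc/fstab)
comment_swap_entries() {
    sed '/^[[:space:]]*#/!s/^.*[[:space:]]swap[[:space:]].*$/#&/' "${1:-/etc/fstab}"
}
```

Running `comment_swap_entries | diff /etc/fstab -` shows exactly which lines would change.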

Disable SELinux

sed -i "s/^SELINUX=enforcing/SELINUX=disabled/g" /etc/sysconfig/selinux

sed -i "s/^SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config

sed -i "s/^SELINUX=permissive/SELINUX=disabled/g" /etc/sysconfig/selinux

sed -i "s/^SELINUX=permissive/SELINUX=disabled/g" /etc/selinux/config
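The four substitutions above can be folded into one pattern. The sketch below is one way to do that; `set_selinux_disabled` is a hypothetical helper. It writes the result back with `cat >` rather than `sed -i`, on the assumption that /etc/sysconfig/selinux may be a symlink to /etc/selinux/config (as it typically is on CentOS 7), which in-place editing would replace with a regular file.

```shell
# Hypothetical helper: set SELINUX=disabled in FILE, whether it was
# enforcing or permissive before. The body runs in a subshell, so "exit"
# only leaves the function.
# Usage: set_selinux_disabled [FILE]   (FILE defaults to /etc/selinux/config)
set_selinux_disabled() (
    file="${1:-/etc/selinux/config}"
    tmp="$(mktemp)" || exit 1
    # Write through the existing path so a symlink stays a symlink.
    sed -E 's/^SELINUX=(enforcing|permissive)[[:space:]]*$/SELINUX=disabled/' "$file" > "$tmp" &&
        cat "$tmp" > "$file"
    rc=$?
    rm -f "$tmp"
    exit "$rc"
)
```

The change in the file only takes effect after a reboot; `setenforce 0` covers the running system until then.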

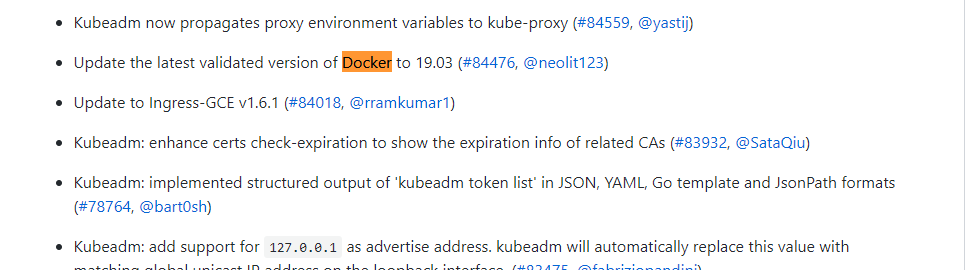

Docker version requirements:

https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG-1.17.md

Docker requirement for v1.17:

Docker versions docker-ce-18.06.3.ce

Requirement for v1.16:

Drop the support for docker 1.9.x. Docker versions 1.10.3, 1.11.2, 1.12.6 have been validated.

List the available Docker versions:

yum list docker-ce --showduplicates | sort -r

Install Docker:

yum install yum-utils device-mapper-persistent-data lvm2 -y

Add the Docker repository

yum-config-manager \
    --add-repo \
    https://download.docker.com/linux/centos/docker-ce.repo

Update the system packages and install the pinned Docker version

yum update && yum install docker-ce-18.06.3.ce -y

Configure the Docker engine as Kubernetes requires:

sudo mkdir -p /etc/docker

cat << EOF > /etc/docker/daemon.json

{

  "exec-opts": ["native.cgroupdriver=systemd"],

  "registry-mirrors": ["https://0bb06s1q.mirror.aliyuncs.com"],

  "log-driver": "json-file",

  "log-opts": {

    "max-size": "100m"

  },

  "storage-driver": "overlay2",

  "storage-opts": ["overlay2.override_kernel_check=true"]

}

EOF

systemctl daemon-reload && systemctl restart docker && systemctl enable docker.service
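After the restart it is worth confirming that Docker picked up the systemd cgroup driver, since a mismatch between Docker and the kubelet is a common cause of kubelet start failures. The sketch below checks the file and asks the daemon; both function names are hypothetical, and `docker info --format '{{.CgroupDriver}}'` is assumed to be available in the installed Docker version.

```shell
# Hypothetical helper: succeed when FILE asks Docker for the systemd cgroup driver.
# Usage: daemon_json_requests_systemd [FILE]   (defaults to /etc/docker/daemon.json)
daemon_json_requests_systemd() {
    grep -q '"native.cgroupdriver=systemd"' "${1:-/etc/docker/daemon.json}"
}

# Hypothetical helper: print the cgroup driver the running daemon reports,
# or "unknown" when the docker CLI is missing or the daemon is unreachable.
docker_cgroup_driver() (
    driver=""
    if command -v docker >/dev/null 2>&1; then
        driver="$(docker info --format '{{.CgroupDriver}}' 2>/dev/null)" || driver=""
    fi
    echo "${driver:-unknown}"
)
```

On a correctly configured node, `daemon_json_requests_systemd && docker_cgroup_driver` would be expected to print `systemd`.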

Install and configure containerd

yum install containerd.io -y

mkdir -p /etc/containerd

containerd config default > /etc/containerd/config.toml

systemctl restart containerd

Add the Kubernetes repository

Install Kubernetes:

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

Install the latest version or a specific version

yum install -y kubelet kubeadm kubectl --disableexcludes=kubernetes

systemctl enable --now kubelet
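The command above installs whatever release is newest in the repository. To pin a specific release instead, yum accepts `name-version` package specs. The sketch below builds that list from one version string; `k8s_packages` and `install_k8s_version` are hypothetical helper names, and 1.17.2 in the usage note is taken from the versions that appear later in this walkthrough.

```shell
# Hypothetical helper: expand a Kubernetes version into yum package specs.
# Usage: k8s_packages VERSION
k8s_packages() {
    echo "kubelet-$1 kubeadm-$1 kubectl-$1"
}

# Hypothetical wrapper (run as root on a node): install one pinned release.
# Usage: install_k8s_version VERSION
install_k8s_version() {
    # Word splitting of the package list is intentional here.
    # shellcheck disable=SC2046
    yum install -y $(k8s_packages "$1") --disableexcludes=kubernetes
}
```

For example, `install_k8s_version 1.17.2` installs matching kubelet, kubeadm and kubectl packages, which keeps all nodes on the same release.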

Enable kernel IP forwarding

cat <<EOF > /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

EOF

sysctl --system
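To confirm the settings took effect, each key can be compared with the value the kernel currently exposes under /proc/sys. The sketch below does that for any `key = value` file; `check_sysctl_conf` is a hypothetical helper. Note that the two `net.bridge.*` keys only exist once the `br_netfilter` module is loaded (`modprobe br_netfilter`), so a MISSING line for them usually points to that module.

```shell
# Hypothetical helper: compare "key = value" lines in CONF with the live
# values under ROOT and report OK / MISMATCH / MISSING per key.
# Returns non-zero if any key is missing or differs.
# Usage: check_sysctl_conf CONF [ROOT]   (ROOT defaults to /proc/sys)
check_sysctl_conf() (
    conf="$1"; root="${2:-/proc/sys}"; rc=0
    while IFS='=' read -r key want || [ -n "$key" ]; do
        key="$(printf '%s' "$key" | tr -d '[:space:]')"
        want="$(printf '%s' "$want" | tr -d '[:space:]')"
        case "$key" in ''|'#'*) continue ;; esac
        # net.ipv4.ip_forward -> ROOT/net/ipv4/ip_forward
        path="$root/$(printf '%s' "$key" | tr . /)"
        if [ ! -r "$path" ]; then
            echo "MISSING  $key"; rc=1
        elif [ "$(cat "$path")" != "$want" ]; then
            echo "MISMATCH $key=$(cat "$path") (want $want)"; rc=1
        else
            echo "OK       $key=$want"
        fi
    done < "$conf"
    exit "$rc"
)
```

A typical call is `check_sysctl_conf /etc/sysctl.d/k8s.conf`, assuming that is where the settings were written.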

Check and load the IPVS kernel modules

Check:

lsmod | grep ip_vs

Load:

modprobe ip_vs
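kube-proxy in IPVS mode generally needs the scheduler modules and connection tracking in addition to `ip_vs` itself. The sketch below lists which of them are not loaded yet. The module list is an assumption for a CentOS 7 kernel (kernels 4.19 and later use `nf_conntrack` instead of `nf_conntrack_ipv4`), and `missing_ipvs_modules` is a hypothetical helper.

```shell
# Assumed module list for kube-proxy IPVS mode on a CentOS 7 kernel.
IPVS_MODULES="ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack_ipv4"

# Hypothetical helper: print each module from IPVS_MODULES that is absent
# from FILE, a /proc/modules style listing (module name in the first field).
# Usage: missing_ipvs_modules [FILE]   (FILE defaults to /proc/modules)
missing_ipvs_modules() {
    for mod in $IPVS_MODULES; do
        awk -v m="$mod" '$1 == m { found = 1 } END { exit !found }' \
            "${1:-/proc/modules}" || echo "$mod"
    done
}
```

As root, `for m in $(missing_ipvs_modules); do modprobe "$m"; done` loads whatever is missing.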

Master node deployment

Export the kubeadm cluster configuration file for customization

kubeadm config print init-defaults > init.default.yaml  # export the default configuration

[root@master ~]# cat init.default.yaml

apiVersion: kubeadm.k8s.io/v1beta2

bootstrapTokens:

- groups:

  - system:bootstrappers:kubeadm:default-node-token

  token: abcdef.0123456789abcdef

  ttl: 24h0m0s

  usages:

  - signing

  - authentication

kind: InitConfiguration

localAPIEndpoint:

  # Set the master node IP address

  advertiseAddress: 192.168.31.147

  bindPort: 6443

nodeRegistration:

  criSocket: /var/run/dockershim.sock

  name: master

  taints:

  - effect: NoSchedule

    key: node-role.kubernetes.io/master

---

apiServer:

  timeoutForControlPlane: 4m0s

apiVersion: kubeadm.k8s.io/v1beta2

certificatesDir: /etc/kubernetes/pki

clusterName: kubernetes

controllerManager: {}

dns:

  type: CoreDNS

etcd:

  local:

    dataDir: /var/lib/etcd

# Pull container images from a registry mirror in China

imageRepository: registry.aliyuncs.com/google_containers

kind: ClusterConfiguration

kubernetesVersion: v1.17.0

networking:

  dnsDomain: cluster.local

  # Custom pod IP address range

  podSubnet: "192.168.0.0/16"

  serviceSubnet: 10.96.0.0/12

scheduler: {}

# Enable IPVS mode

---

apiVersion: kubeproxy.config.k8s.io/v1alpha1

kind: KubeProxyConfiguration

featureGates:

  SupportIPVSProxyMode: true

mode: ipvs

Edit the configuration file and set the master node IP in advertiseAddress:

Point imageRepository: at the Aliyun registry mirror

Set a custom pod address range in podSubnet: "192.168.0.0/16"
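The three edits can also be scripted so that every rebuild of the cluster starts from the same file. The sketch below is one possible approach with awk; `customize_kubeadm_config` is a hypothetical helper, and it assumes the layout shown above, in particular that `podSubnet` belongs directly after `dnsDomain` under `networking` with two-space indentation.

```shell
# Hypothetical helper: set advertiseAddress, imageRepository and podSubnet
# in a kubeadm config FILE. The body runs in a subshell.
# Usage: customize_kubeadm_config FILE MASTER_IP IMAGE_REPO POD_SUBNET
customize_kubeadm_config() (
    file="$1"; ip="$2"; repo="$3"; subnet="$4"
    tmp="$(mktemp)" || exit 1
    awk -v ip="$ip" -v repo="$repo" -v subnet="$subnet" '
        /^[ \t]*advertiseAddress:/ { sub(/advertiseAddress:.*/, "advertiseAddress: " ip) }
        /^imageRepository:/        { $0 = "imageRepository: " repo }
        /^[ \t]*podSubnet:/        { next }   # drop an old value; it is re-added below
        { print }
        /^[ \t]*dnsDomain:/        { print "  podSubnet: \"" subnet "\"" }
    ' "$file" > "$tmp" && cat "$tmp" > "$file"
    rc=$?
    rm -f "$tmp"
    exit "$rc"
)
```

With the values used in this walkthrough the call would be `customize_kubeadm_config init.default.yaml 192.168.31.147 registry.aliyuncs.com/google_containers 192.168.0.0/16`. Because an existing `podSubnet` line is dropped before the new one is written, running it twice does not duplicate the key.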

kubeadm config images list --config init.default.yaml  # list the images that need to be pulled

kubeadm config images pull --config init.default.yaml  # pull the Kubernetes container images from the Aliyun registry

[root@master ~]# kubeadm config images list --config init.default.yaml

W0204 00:37:10.879146 30608 validation.go:28] Cannot validate kubelet config - no validator is available

W0204 00:37:10.879175 30608 validation.go:28] Cannot validate kube-proxy config - no validator is available

registry.aliyuncs.com/google_containers/kube-apiserver:v1.17.2

registry.aliyuncs.com/google_containers/kube-controller-manager:v1.17.2

registry.aliyuncs.com/google_containers/kube-scheduler:v1.17.2

registry.aliyuncs.com/google_containers/kube-proxy:v1.17.2

registry.aliyuncs.com/google_containers/pause:3.1

registry.aliyuncs.com/google_containers/etcd:3.4.3-0

registry.aliyuncs.com/google_containers/coredns:1.6.5

[root@master ~]# kubeadm config images pull --config init.default.yaml

W0204 00:37:25.590147 30636 validation.go:28] Cannot validate kube-proxy config - no validator is available

W0204 00:37:25.590179 30636 validation.go:28] Cannot validate kubelet config - no validator is available

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-apiserver:v1.17.2

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-controller-manager:v1.17.2

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-scheduler:v1.17.2

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-proxy:v1.17.2

[config/images] Pulled registry.aliyuncs.com/google_containers/pause:3.1

[config/images] Pulled registry.aliyuncs.com/google_containers/etcd:3.4.3-0

[config/images] Pulled registry.aliyuncs.com/google_containers/coredns:1.6.5

[root@master ~]#

# Start deploying the cluster

kubeadm init --config=init.default.yaml

[root@master ~]# kubeadm init --config=init.default.yaml | tee kubeadm-init.log

W0204 00:39:48.825538 30989 validation.go:28] Cannot validate kube-proxy config - no validator is available

W0204 00:39:48.825592 30989 validation.go:28] Cannot validate kubelet config - no validator is available

[init] Using Kubernetes version: v1.17.2

[preflight] Running pre-flight checks

[WARNING Hostname]: hostname "master" could not be reached

[WARNING Hostname]: hostname "master": lookup master on 114.114.114.114:53: no such host

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [master kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.31.90]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [master localhost] and IPs [192.168.31.90 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [master localhost] and IPs [192.168.31.90 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

W0204 00:39:51.810550 30989 manifests.go:214] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

W0204 00:39:51.811109 30989 manifests.go:214] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 35.006692 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.17" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node master as control-plane by adding the label "node-role.kubernetes.io/master=''"

[mark-control-plane] Marking the node master as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: abcdef.0123456789abcdef

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.31.90:6443 --token abcdef.0123456789abcdef \

--discovery-token-ca-cert-hash sha256:09959f846dba6a855fbbd090e99b4ba1df4e643ec1a1578c28eaf9a9d3ea6a03

[root@master ~]#
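Since the init output was saved with `tee kubeadm-init.log`, the join command can be recovered from that file later instead of scrolling back through the terminal. The sketch below pulls it out as a single line; `extract_join_command` is a hypothetical helper and assumes the log layout shown above. The bootstrap token in this configuration has a 24h TTL, so after it expires a fresh command can be generated on the master with `kubeadm token create --print-join-command`.

```shell
# Hypothetical helper: print the "kubeadm join ..." command from a saved
# kubeadm init log as one line, without the continuation backslash.
# Usage: extract_join_command [LOGFILE]   (defaults to kubeadm-init.log)
extract_join_command() {
    awk '
        /^[ \t]*kubeadm join / { grab = 1 }
        grab {
            line = $0
            sub(/\\[ \t]*$/, "", line)          # drop a trailing continuation backslash
            gsub(/^[ \t]+|[ \t]+$/, "", line)   # trim surrounding whitespace
            if (line == "") next
            cmd = (cmd == "" ? line : cmd " " line)
            if (line ~ /--discovery-token-ca-cert-hash/) { print cmd; exit }
        }
    ' "${1:-kubeadm-init.log}"
}
```

The printed line can be pasted directly on each worker node as root.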

[root@master ~]# mkdir -p $HOME/.kube

[root@master ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@master ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

[root@master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master NotReady master 2m34s v1.17.2

[root@master ~]#

[root@master ~]# kubectl get cs

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-0 Healthy {"health":"true"}

kubectl command auto-completion

source <(kubectl completion bash)

echo "source <(kubectl completion bash)" >> ~/.bashrc

Kubernetes network deployment:

Calico

https://www.projectcalico.org/

https://docs.projectcalico.org/getting-started/kubernetes/

Deploy the Calico network:

kubectl apply -f https://docs.projectcalico.org/manifests/calico.yaml

[root@master ~]# kubectl apply -f https://docs.projectcalico.org/manifests/calico.yaml

configmap/calico-config created

customresourcedefinition.apiextensions.k8s.io/felixconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamblocks.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/blockaffinities.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamhandles.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamconfigs.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/bgppeers.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/bgpconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ippools.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/hostendpoints.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/clusterinformations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/globalnetworkpolicies.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/globalnetworksets.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/networkpolicies.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/networksets.crd.projectcalico.org created

clusterrole.rbac.authorization.k8s.io/calico-kube-controllers created

clusterrolebinding.rbac.authorization.k8s.io/calico-kube-controllers created

clusterrole.rbac.authorization.k8s.io/calico-node created

clusterrolebinding.rbac.authorization.k8s.io/calico-node created

daemonset.apps/calico-node created

serviceaccount/calico-node created

deployment.apps/calico-kube-controllers created

serviceaccount/calico-kube-controllers created

[root@master ~]#

[root@master ~]# kubectl get pod -n kube-system

NAME READY STATUS RESTARTS AGE

calico-kube-controllers-77c4b7448-hfqws 1/1 Running 0 32s

calico-node-59p6f 1/1 Running 0 32s

coredns-9d85f5447-6wgkd 1/1 Running 0 2m5s

coredns-9d85f5447-bkjj8 1/1 Running 0 2m5s

etcd-master 1/1 Running 0 2m2s

kube-apiserver-master 1/1 Running 0 2m2s

kube-controller-manager-master 1/1 Running 0 2m2s

kube-proxy-lwww6 1/1 Running 0 2m5s

kube-scheduler-master 1/1 Running 0 2m2s
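One thing that may be worth checking in this setup: the pod subnet 192.168.0.0/16 contains the 192.168.31.x addresses the nodes themselves use, and pod networks are generally expected not to overlap with the host network. The sketch below tests whether an address falls inside a CIDR block using plain shell arithmetic; `ip_to_int` and `ip_in_cidr` are hypothetical helpers, IPv4 only, with no input validation.

```shell
# Hypothetical helper: convert a dotted IPv4 address to a 32-bit integer.
ip_to_int() (
    IFS=.
    # Split the address into its four octets.
    set -- $1
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
)

# Hypothetical helper: succeed when IP lies inside CIDR.
# Usage: ip_in_cidr IP NETWORK/PREFIX
ip_in_cidr() (
    ip="$(ip_to_int "$1")"
    net="$(ip_to_int "${2%/*}")"
    bits="${2#*/}"
    mask=$(( (0xFFFFFFFF << (32 - $bits)) & 0xFFFFFFFF ))
    [ $(( ip & mask )) -eq $(( net & mask )) ]
)
```

Here `ip_in_cidr 192.168.31.147 192.168.0.0/16` succeeds, which indicates the overlap. If that turns out to be a problem, a different `podSubnet` (with a matching Calico IP pool) would need to be chosen before `kubeadm init`.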

Worker node deployment

[root@node02 ~]# kubeadm join 192.168.31.90:6443 --token abcdef.0123456789abcdef \

> --discovery-token-ca-cert-hash sha256:09959f846dba6a855fbbd090e99b4ba1df4e643ec1a1578c28eaf9a9d3ea6a03

W0204 00:46:53.878006 30928 join.go:346] [preflight] WARNING: JoinControlPane.controlPlane settings will be ignored when control-plane flag is not set.

[preflight] Running pre-flight checks

[WARNING Hostname]: hostname "node02" could not be reached

[WARNING Hostname]: hostname "node02": lookup node02 on 114.114.114.114:53: no such host

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'

[kubelet-start] Downloading configuration for the kubelet from the "kubelet-config-1.17" ConfigMap in the kube-system namespace

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

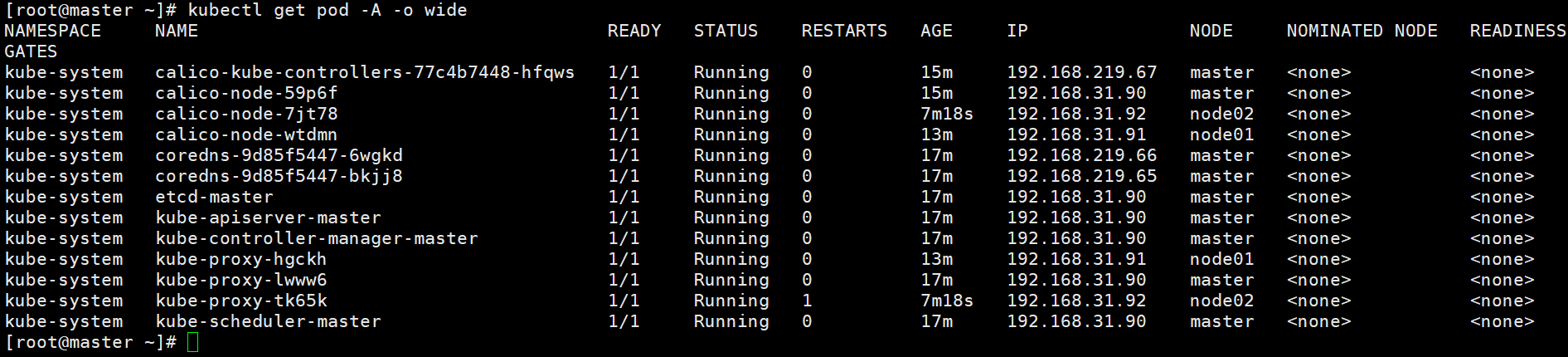

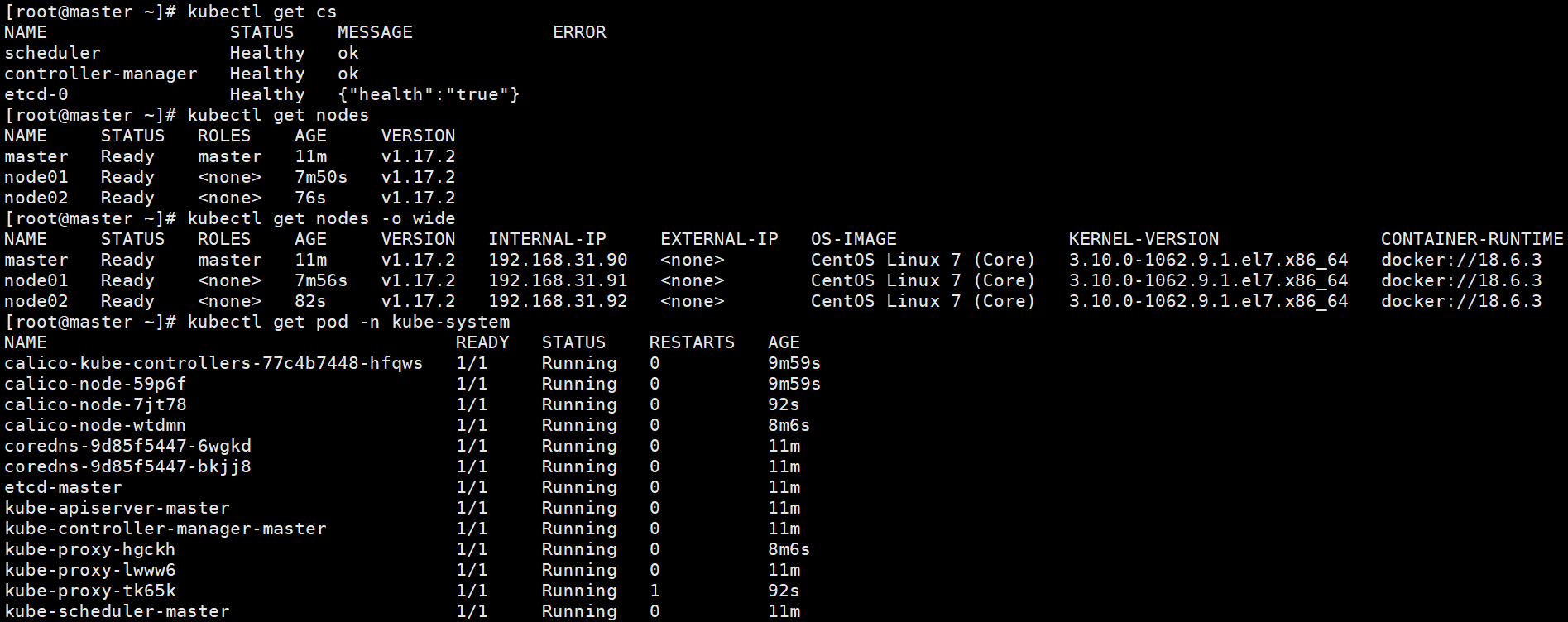

Cluster check:

[root@master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master Ready master 19m v1.17.2

node01 Ready

node02 Ready

[root@master ~]# kubectl get cs

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-0 Healthy {"health":"true"}

[root@master ~]# kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system calico-kube-controllers-77c4b7448-zd6dt 0/1 Error 1 119s

kube-system calico-node-2fcbs 1/1 Running 0 119s

kube-system calico-node-56f95 1/1 Running 0 119s

kube-system calico-node-svlg9 1/1 Running 0 119s

kube-system coredns-9d85f5447-4f4hq 0/1 Running 0 19m

kube-system coredns-9d85f5447-n68wd 0/1 Running 0 19m

kube-system etcd-master 1/1 Running 0 19m

kube-system kube-apiserver-master 1/1 Running 0 19m

kube-system kube-controller-manager-master 1/1 Running 0 19m

kube-system kube-proxy-ch4vl 1/1 Running 1 15m

kube-system kube-proxy-fjl5c 1/1 Running 1 19m

kube-system kube-proxy-hhsqc 1/1 Running 1 13m

kube-system kube-scheduler-master 1/1 Running 0 19m

[root@master ~]#
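In the listing above, calico-kube-controllers is in Error and both coredns pods show 0/1 ready, so the cluster is not fully healthy yet even though every node reports Ready. A small filter makes such pods easier to spot in longer listings. This is a sketch; `unready_pods` is a hypothetical helper that assumes the default column order of `kubectl get pod -A --no-headers`.

```shell
# Hypothetical helper: read "kubectl get pod -A --no-headers" output on stdin
# and print every pod that is not fully ready or not Running.
# Pods in Completed state are skipped, since 0/1 is normal for them.
unready_pods() {
    awk '
        $4 == "Completed" { next }
        {
            split($3, ready, "/")   # READY column, e.g. "0/1"
            if (ready[1] != ready[2] || $4 != "Running")
                print $1 "/" $2 " " $3 " " $4
        }
    '
}
```

Usage on the master would be `kubectl get pod -A --no-headers | unready_pods`, followed by `kubectl -n kube-system describe pod` or `kubectl -n kube-system logs` on whatever it prints.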