K8S Monitoring in Practice: Collecting In-Cluster Application Logs with ELK

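Contents

- 1 Log collection approach for K8S
- 1.1 Drawbacks of the traditional ELK model
- 1.2 Log collection model for K8S containers
- 2 Build the Tomcat base image
- 2.1 Prepare the Tomcat base package
- 2.1.1 Download Tomcat 8
- 2.1.2 Basic Tomcat configuration
- 2.2 Prepare the Docker image
- 2.2.1 Create the Dockerfile
- 2.2.2 Prepare the files the Dockerfile needs
- 2.2.3 Build the image
- 3 Deploy ElasticSearch
- 3.1 Install ElasticSearch
- 3.1.1 Download the binary package
- 3.1.2 Configure elasticsearch.yml
- 3.2 Tune other settings
- 3.2.1 Set JVM options
- 3.2.2 Create an unprivileged user
- 3.2.3 Adjust file descriptor limits
- 3.2.4 Adjust kernel parameters
- 3.3 Start ES
- 3.3.1 Start the ES service
- 3.3.2 Adjust the ES index template
- 4 Deploy Kafka and kafka-manager
- 4.1 Install single-node Kafka
- 4.1.1 Download the package
- 4.1.2 Edit the configuration
- 4.1.3 Start Kafka
- 4.2 Obtain the kafka-manager Docker image
- 4.2.1 Method 1: build from a Dockerfile
- 4.2.2 Method 2: pull a prebuilt Docker image
- 4.3 Deploy kafka-manager
- 4.3.1 Prepare the Deployment manifest
- 4.3.2 Prepare the Service manifest
- 4.3.3 Prepare the Ingress manifest
- 4.3.4 Apply the manifests
- 4.3.5 Add the DNS record
- 4.3.6 Access from a browser
- 5 Deploy filebeat
- 5.1 Build the Docker image
- 5.1.1 Prepare the Dockerfile
- 5.1.2 Prepare the filebeat configuration file
- 5.1.3 Prepare the startup script
- 5.1.4 Build the image
- 5.2 Run the Pod in sidecar mode
- 5.2.1 Prepare the manifest
- 5.2.2 Apply the manifest
- 5.2.3 Verify
- 6 Deploy Logstash
- 6.1 Prepare the Docker image
- 6.1.1 Pull the official image
- 6.1.2 Prepare the configuration files
- 6.2 Start Logstash
- 6.2.1 Start Logstash for the test environment
- 6.2.2 Check that ES receives data
- 6.2.3 Start Logstash for the production environment
- 7 Deploy Kibana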

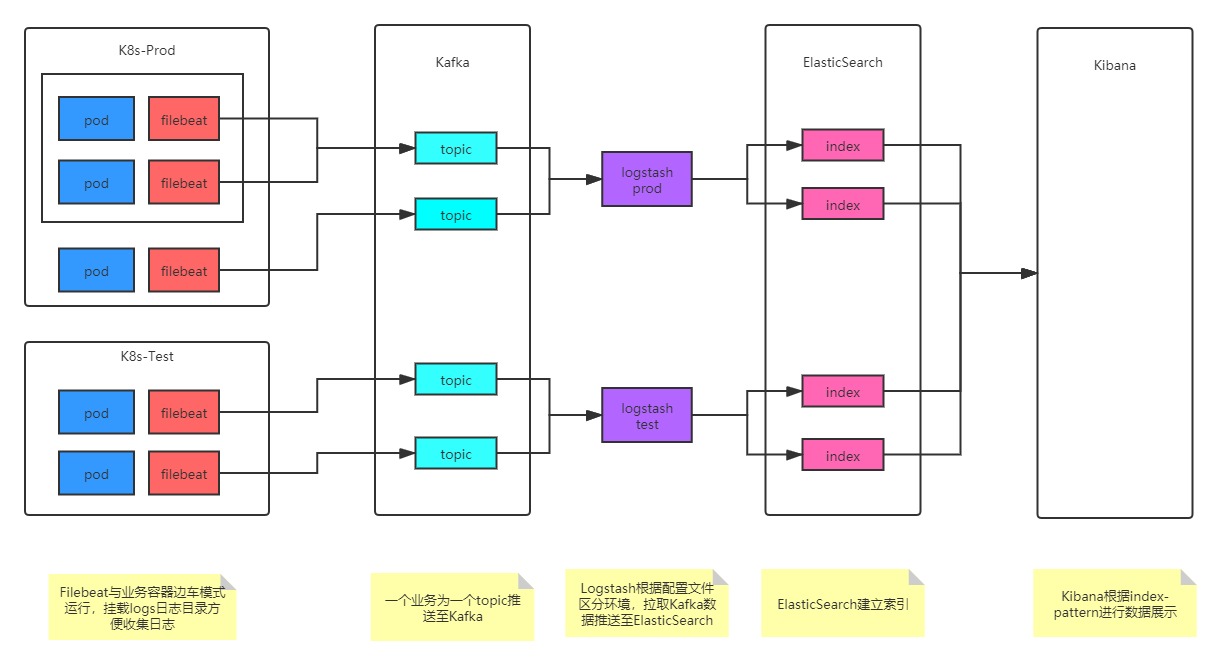

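1 Log collection approach for K8S

- Collection: able to gather log data from many sources (a streaming log collector)

- Transport: able to ship log data reliably to a central system (a message queue)

- Storage: able to store logs as structured data (a search engine)

- Analysis: convenient analysis and search, ideally with a GUI for management (a web front end)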

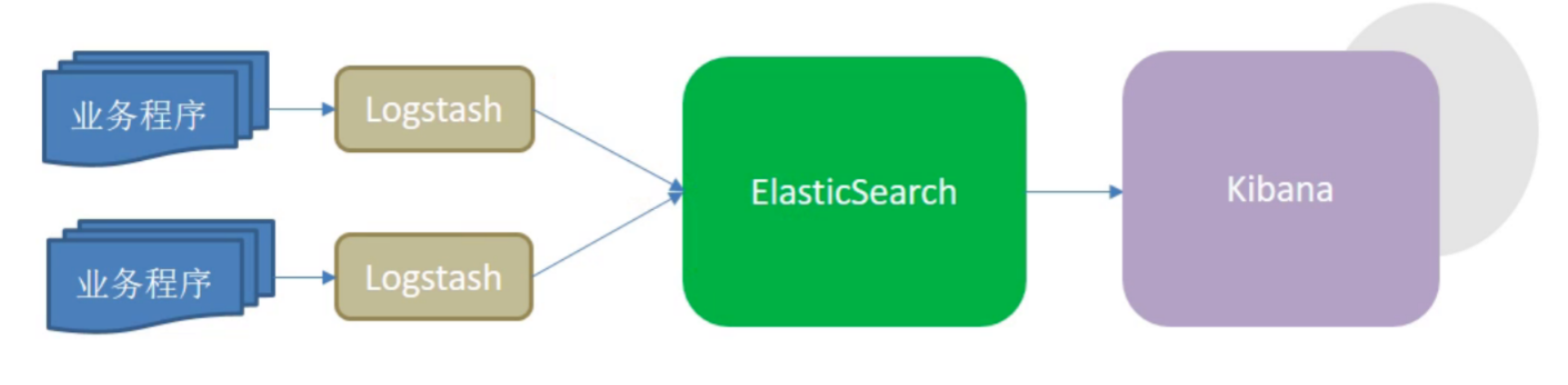

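1.1 Drawbacks of the traditional ELK model:

- Logstash is written in JRuby and is resource-hungry; deploying it at scale is very expensive

- Applications are too loosely coupled to Logstash, which makes migrating services harder

- Log collection is too tightly coupled to ES, so Logstash can easily overwhelm ES and lose data

- In a container cloud environment, the traditional ELK model struggles to do the job

1.2 Log collection model for K8S containers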

2 Build the Tomcat base image

2.1 Prepare the Tomcat base package

2.1.1 Download Tomcat 8

    cd /opt/src/
    wget http://mirror.bit.edu.cn/apache/tomcat/tomcat-8/v8.5.50/bin/apache-tomcat-8.5.50.tar.gz
    mkdir -p /data/dockerfile/tomcat
    tar xf apache-tomcat-8.5.50.tar.gz -C /data/dockerfile/tomcat
    cd /data/dockerfile/tomcat

2.1.2 Basic Tomcat configuration

Remove the bundled web applications:

    rm -rf apache-tomcat-8.5.50/webapps/*

Disable the AJP port by commenting out its connector:

    tomcat]# vim apache-tomcat-8.5.50/conf/server.xml
    <!-- <Connector port="8009" protocol="AJP/1.3" redirectPort="8443" /> -->

Adjust the logging setup. First remove the 3manager and 4host-manager handlers:

    tomcat]# vim apache-tomcat-8.5.50/conf/logging.properties
    handlers = 1catalina.org.apache.juli.AsyncFileHandler, 2localhost.org.apache.juli.AsyncFileHandler, java.util.logging.ConsoleHandler

Set the log level to INFO:

    1catalina.org.apache.juli.AsyncFileHandler.level = INFO
    2localhost.org.apache.juli.AsyncFileHandler.level = INFO
    java.util.logging.ConsoleHandler.level = INFO

Comment out every setting related to the 3manager and 4host-manager logs:

    #3manager.org.apache.juli.AsyncFileHandler.level = FINE
    #3manager.org.apache.juli.AsyncFileHandler.directory = ${catalina.base}/logs
    #3manager.org.apache.juli.AsyncFileHandler.prefix = manager.
    #3manager.org.apache.juli.AsyncFileHandler.encoding = UTF-8
    #4host-manager.org.apache.juli.AsyncFileHandler.level = FINE
    #4host-manager.org.apache.juli.AsyncFileHandler.directory = ${catalina.base}/logs
    #4host-manager.org.apache.juli.AsyncFileHandler.prefix = host-manager.
    #4host-manager.org.apache.juli.AsyncFileHandler.encoding = UTF-8

2.2 Prepare the Docker image

2.2.1 Create the Dockerfile

    cat >Dockerfile <<'EOF'
    FROM harbor.zq.com/public/jre:8u112
    RUN /bin/cp /usr/share/zoneinfo/Asia/Shanghai /etc/localtime &&\
        echo 'Asia/Shanghai' >/etc/timezone
    ENV CATALINA_HOME /opt/tomcat
    ENV LANG zh_CN.UTF-8
    ADD apache-tomcat-8.5.50/ /opt/tomcat
    ADD config.yml /opt/prom/config.yml
    ADD jmx_javaagent-0.3.1.jar /opt/prom/jmx_javaagent-0.3.1.jar
    WORKDIR /opt/tomcat
    ADD entrypoint.sh /entrypoint.sh
    CMD ["/bin/bash","/entrypoint.sh"]
    EOF

2.2.2 Prepare the files the Dockerfile needs

The jar required for JVM monitoring:

    wget -O jmx_javaagent-0.3.1.jar https://repo1.maven.org/maven2/io/prometheus/jmx/jmx_prometheus_javaagent/0.3.1/jmx_prometheus_javaagent-0.3.1.jar

The configuration file read by the JMX agent:

    cat >config.yml <<'EOF'
    ---
    rules:
    - pattern: '.*'
    EOF

The container startup script:

    cat >entrypoint.sh <<'EOF'
    #!/bin/bash
    # Pod IP, port and rule file handed to the JVM monitoring agent
    M_OPTS="-Duser.timezone=Asia/Shanghai -javaagent:/opt/prom/jmx_javaagent-0.3.1.jar=$(hostname -i):${M_PORT:-"12346"}:/opt/prom/config.yml"
    C_OPTS=${C_OPTS}                # extra startup options
    MIN_HEAP=${MIN_HEAP:-"128m"}    # initial JVM heap size (-Xms)
    MAX_HEAP=${MAX_HEAP:-"128m"}    # maximum JVM heap size (-Xmx)
    # young generation and GC settings
    JAVA_OPTS=${JAVA_OPTS:-"-Xmn384m -Xss256k -Duser.timezone=GMT+08 -XX:+DisableExplicitGC -XX:+UseConcMarkSweepGC -XX:+UseParNewGC -XX:+CMSParallelRemarkEnabled -XX:+UseCMSCompactAtFullCollection -XX:CMSFullGCsBeforeCompaction=0 -XX:+CMSClassUnloadingEnabled -XX:LargePageSizeInBytes=128m -XX:+UseFastAccessorMethods -XX:+UseCMSInitiatingOccupancyOnly -XX:CMSInitiatingOccupancyFraction=80 -XX:SoftRefLRUPolicyMSPerMB=0 -XX:+PrintClassHistogram -Dfile.encoding=UTF8 -Dsun.jnu.encoding=UTF8"}
    CATALINA_OPTS="${CATALINA_OPTS}"
    JAVA_OPTS="${M_OPTS} ${C_OPTS} -Xms${MIN_HEAP} -Xmx${MAX_HEAP} ${JAVA_OPTS}"
    sed -i -e "1a\JAVA_OPTS=\"$JAVA_OPTS\"" -e "1a\CATALINA_OPTS=\"$CATALINA_OPTS\"" /opt/tomcat/bin/catalina.sh
    # log file
    cd /opt/tomcat && /opt/tomcat/bin/catalina.sh run 2>&1 >> /opt/tomcat/logs/stdout.log
    EOF
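
The last line of entrypoint.sh depends on the order in which the shell applies redirections, which decides what ends up in stdout.log. The following is a minimal sketch of that behaviour, assuming a hypothetical `emit` function in place of `catalina.sh run` and a scratch file in place of the real log; it does not claim which order the author intended.

```shell
#!/bin/bash
# Hypothetical stand-in for catalina.sh: one line on stdout, one on stderr.
emit() {
  echo "to-stdout"
  echo "to-stderr" >&2
}

log=$(mktemp)

# Order A (as in entrypoint.sh): stderr is pointed at the current stdout
# first, then stdout alone is redirected to the file.
emit 2>&1 >> "$log"        # "to-stderr" still reaches the console
first=$(cat "$log")

: > "$log"                 # empty the scratch file

# Order B: stdout goes to the file first, then stderr follows it there.
emit >> "$log" 2>&1
second=$(cat "$log")

printf 'order A captured:\n%s\n' "$first"
printf 'order B captured:\n%s\n' "$second"
rm -f "$log"
```

With order A only the stdout line lands in the file and the stderr line stays on the container's console output; with order B both lines are written to the file.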

2.2.3 Build the image

    docker build . -t harbor.zq.com/base/tomcat:v8.5.50
    docker push harbor.zq.com/base/tomcat:v8.5.50

3 Deploy ElasticSearch

References: official website, official GitHub repository, download page.

Deploy on HDSS7-12.host.com:

3.1 Install ElasticSearch

3.1.1 Download the binary package

    cd /opt/src
    wget https://artifacts.elastic.co/downloads/elasticsearch/elasticsearch-6.8.6.tar.gz
    tar xf elasticsearch-6.8.6.tar.gz -C /opt/
    ln -s /opt/elasticsearch-6.8.6/ /opt/elasticsearch
    cd /opt/elasticsearch

3.1.2 Configure elasticsearch.yml

    mkdir -p /data/elasticsearch/{data,logs}
    cat >config/elasticsearch.yml <<'EOF'
    cluster.name: es.zq.com
    node.name: hdss7-12.host.com
    path.data: /data/elasticsearch/data
    path.logs: /data/elasticsearch/logs
    bootstrap.memory_lock: true
    network.host: 10.4.7.12
    http.port: 9200
    EOF

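3.2 Tune other settings

3.2.1 Set JVM options

    elasticsearch]# vi config/jvm.options
    # Set according to your environment. Give -Xms and -Xmx the same value;
    # about half of the machine's memory is recommended.
    -Xms512m
    -Xmx512m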
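
To turn the "about half of the machine's memory" guideline into concrete numbers, the value can be derived from `/proc/meminfo`. This is a minimal sketch for Linux; the helper name `half_mem_mb` is made up for illustration, and the result is only a starting point that should still be adjusted to the environment.

```shell
#!/bin/bash
# half_mem_mb: read /proc/meminfo-style text on stdin and print
# half of MemTotal in MiB (MemTotal is reported in kB).
half_mem_mb() {
  awk '/^MemTotal:/ { printf "%d\n", $2 / 1024 / 2 }'
}

# Print the two jvm.options lines for the current machine (Linux only).
if [ -r /proc/meminfo ]; then
  heap=$(half_mem_mb < /proc/meminfo)
  printf -- '-Xms%sm\n-Xmx%sm\n' "$heap" "$heap"
fi
```

On a host with 8 GiB of RAM this prints `-Xms4096m` and `-Xmx4096m`.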

3.2.2 Create an unprivileged user

    useradd -s /bin/bash -M es
    chown -R es:es /opt/elasticsearch-6.8.6
    chown -R es:es /data/elasticsearch/

3.2.3 Adjust file descriptor limits

    vim /etc/security/limits.d/es.conf
    es hard nofile 65536
    es soft fsize unlimited
    es hard memlock unlimited
    es soft memlock unlimited

3.2.4 Adjust kernel parameters

Append the setting to /etc/sysctl.conf (using `>>` so existing entries are kept):

    sysctl -w vm.max_map_count=262144
    echo "vm.max_map_count=262144" >> /etc/sysctl.conf
    sysctl -p
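
If this step may be run more than once, the setting can be appended only when it is missing, so the file never accumulates duplicates or loses other entries. A minimal sketch, assuming a hypothetical helper `ensure_line` and a scratch file standing in for /etc/sysctl.conf:

```shell
#!/bin/bash
# ensure_line FILE LINE: append LINE to FILE only if that exact line is absent.
ensure_line() {
  local file=$1 line=$2
  grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Demo on a scratch file instead of the real /etc/sysctl.conf.
conf=$(mktemp)
echo "net.ipv4.ip_forward = 1" > "$conf"
ensure_line "$conf" "vm.max_map_count=262144"
ensure_line "$conf" "vm.max_map_count=262144"   # second call changes nothing
cat "$conf"
rm -f "$conf"
```

On the real host the call would be `ensure_line /etc/sysctl.conf "vm.max_map_count=262144"` followed by `sysctl -p`.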

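3.3 Start ES

3.3.1 Start the ES service

    ]# su -c "/opt/elasticsearch/bin/elasticsearch -d" es
    ]# netstat -luntp|grep 9200
    tcp6 0 0 10.4.7.12:9200 :::* LISTEN 16784/java

3.3.2 Adjust the ES index template

This ES is a single node and cannot hold replicas, so number_of_replicas is 0; production uses 3 replicas. ES 6.x requires the Content-Type header on requests with a body, and JSON does not allow comments, so the note is kept out of the request body.

    curl -XPUT http://10.4.7.12:9200/_template/k8s -H 'Content-Type: application/json' -d '{
      "template" : "k8s*",
      "index_patterns": ["k8s*"],
      "settings": {
        "number_of_shards": 5,
        "number_of_replicas": 0
      }
    }'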

4 Deploy Kafka and kafka-manager

References: official website, official GitHub repository, download page.

On HDSS7-11.host.com:

4.1 Install single-node Kafka

4.1.1 Download the package

    cd /opt/src
    wget https://archive.apache.org/dist/kafka/2.2.0/kafka_2.12-2.2.0.tgz
    tar xf kafka_2.12-2.2.0.tgz -C /opt/
    ln -s /opt/kafka_2.12-2.2.0/ /opt/kafka
    cd /opt/kafka

4.1.2 Edit the configuration

Properties files do not support trailing comments on a value line, so the comment sits on its own line:

    mkdir /data/kafka/logs -p
    cat >config/server.properties <<'EOF'
    log.dirs=/data/kafka/logs
    # ZooKeeper address
    zookeeper.connect=localhost:2181
    log.flush.interval.messages=10000
    log.flush.interval.ms=1000
    delete.topic.enable=true
    host.name=hdss7-11.host.com
    EOF

4.1.3 Start Kafka

    bin/kafka-server-start.sh -daemon config/server.properties
    ]# netstat -luntp|grep 9092
    tcp6 0 0 10.4.7.11:9092 :::* LISTEN 34240/java

4.2 Obtain the kafka-manager Docker image

References: official GitHub repository, source download page.

On the ops host HDSS7-200.host.com:

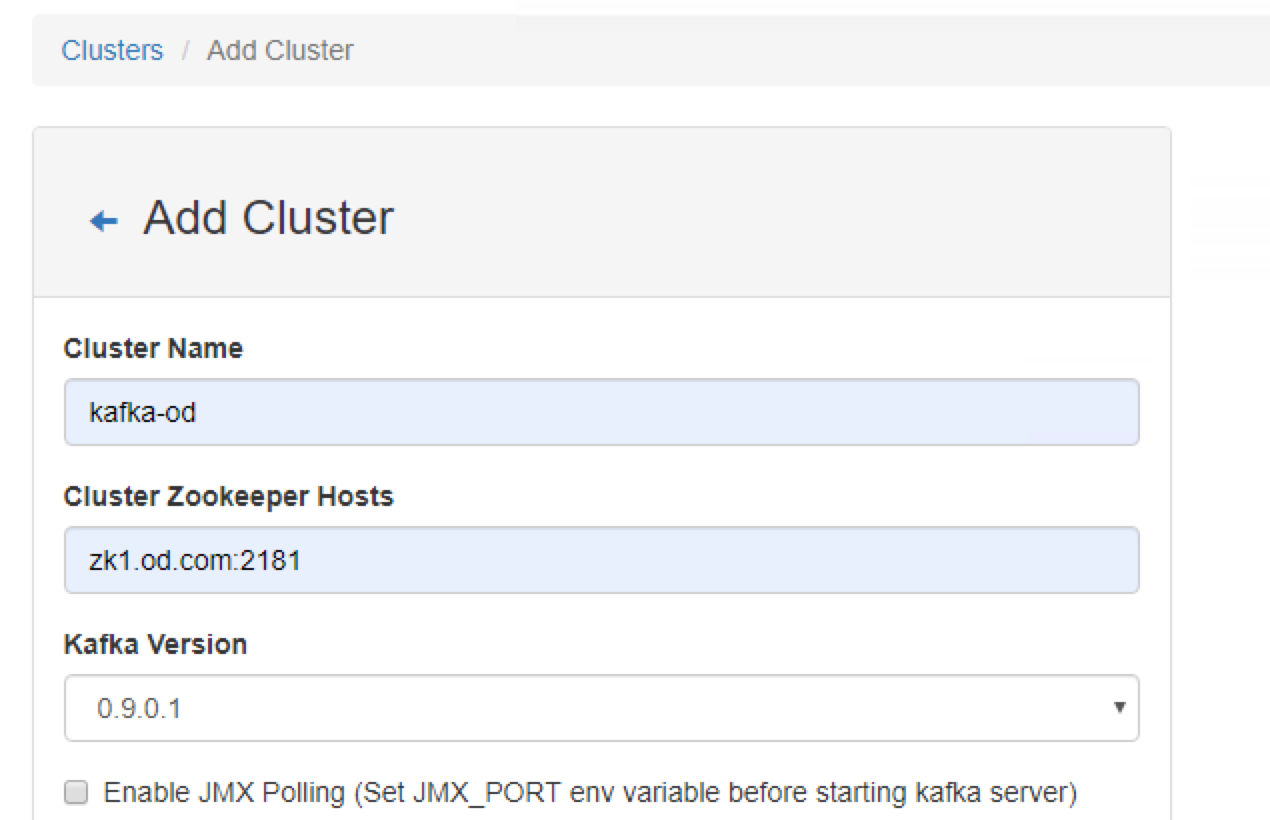

kafka-manager is a web management UI for Kafka; it is optional.

4.2.1 Method 1: build from a Dockerfile

1 Prepare the Dockerfile

    mkdir -p /data/dockerfile/kafka-manager
    cat >/data/dockerfile/kafka-manager/Dockerfile <<'EOF'
    FROM hseeberger/scala-sbt
    ENV ZK_HOSTS=10.4.7.11:2181 \
        KM_VERSION=2.0.0.2
    RUN mkdir -p /tmp && \
        cd /tmp && \
        wget https://github.com/yahoo/kafka-manager/archive/${KM_VERSION}.tar.gz && \
        tar xf ${KM_VERSION}.tar.gz && \
        cd /tmp/kafka-manager-${KM_VERSION} && \
        sbt clean dist && \
        unzip -d / ./target/universal/kafka-manager-${KM_VERSION}.zip && \
        rm -fr /tmp/${KM_VERSION} /tmp/kafka-manager-${KM_VERSION}
    WORKDIR /kafka-manager-${KM_VERSION}
    EXPOSE 9000
    ENTRYPOINT ["./bin/kafka-manager","-Dconfig.file=conf/application.conf"]
    EOF

2 Build the Docker image

    cd /data/dockerfile/kafka-manager
    docker build . -t harbor.zq.com/infra/kafka-manager:v2.0.0.2    # takes a long time
    docker push harbor.zq.com/infra/kafka-manager:v2.0.0.2

The build is extremely slow and very likely to fail, so you can pull a prebuilt image with the second method instead. The prebuilt image has a hard-coded ZooKeeper address, so remember to override it by passing in the ZK_HOSTS variable.

4.2.2 Method 2: pull a prebuilt Docker image

    docker pull sheepkiller/kafka-manager:latest
    docker images|grep kafka-manager
    docker tag 4e4a8c5dabab harbor.zq.com/infra/kafka-manager:latest
    docker push harbor.zq.com/infra/kafka-manager:latest

4.3 Deploy kafka-manager

    mkdir /data/k8s-yaml/kafka-manager
    cd /data/k8s-yaml/kafka-manager

4.3.1 Prepare the Deployment manifest

    cat >deployment.yaml <<'EOF'
    kind: Deployment
    apiVersion: extensions/v1beta1
    metadata:
      name: kafka-manager
      namespace: infra
      labels:
        name: kafka-manager
    spec:
      replicas: 1
      selector:
        matchLabels:
          name: kafka-manager
      template:
        metadata:
          labels:
            app: kafka-manager
            name: kafka-manager
        spec:
          containers:
          - name: kafka-manager
            image: harbor.zq.com/infra/kafka-manager:latest
            ports:
            - containerPort: 9000
              protocol: TCP
            env:
            - name: ZK_HOSTS
              value: zk1.zq.com:2181
            - name: APPLICATION_SECRET
              value: letmein
            imagePullPolicy: IfNotPresent
          imagePullSecrets:
          - name: harbor
          restartPolicy: Always
          terminationGracePeriodSeconds: 30
          securityContext:
            runAsUser: 0
          schedulerName: default-scheduler
      strategy:
        type: RollingUpdate
        rollingUpdate:
          maxUnavailable: 1
          maxSurge: 1
      revisionHistoryLimit: 7
      progressDeadlineSeconds: 600
    EOF

4.3.2 Prepare the Service manifest

    cat >service.yaml <<'EOF'
    kind: Service
    apiVersion: v1
    metadata:
      name: kafka-manager
      namespace: infra
    spec:
      ports:
      - protocol: TCP
        port: 9000
        targetPort: 9000
      selector:
        app: kafka-manager
    EOF

4.3.3 Prepare the Ingress manifest

    cat >ingress.yaml <<'EOF'
    kind: Ingress
    apiVersion: extensions/v1beta1
    metadata:
      name: kafka-manager
      namespace: infra
    spec:
      rules:
      - host: km.zq.com
        http:
          paths:
          - path: /
            backend:
              serviceName: kafka-manager
              servicePort: 9000
    EOF

4.3.4 Apply the manifests

On any compute node:

    kubectl apply -f http://k8s-yaml.zq.com/kafka-manager/deployment.yaml
    kubectl apply -f http://k8s-yaml.zq.com/kafka-manager/service.yaml
    kubectl apply -f http://k8s-yaml.zq.com/kafka-manager/ingress.yaml

4.3.5 Add the DNS record

On HDSS7-11.host.com:

    ~]# vim /var/named/zq.com.zone
    km    A    10.4.7.10
    ~]# systemctl restart named
    ~]# dig -t A km.zq.com @10.4.7.11 +short
    10.4.7.10

4.3.6 Access from a browser

http://km.zq.com

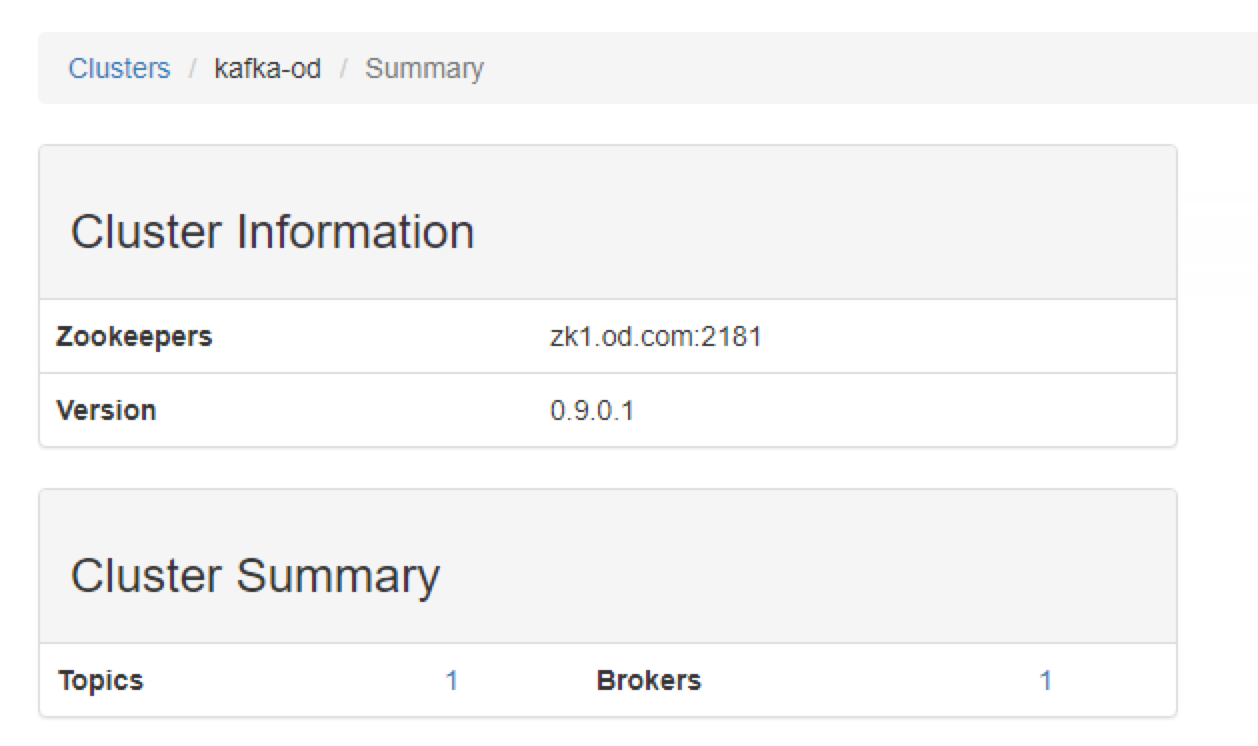

Add a cluster

View the cluster information

5 Deploy filebeat

Reference: official download page.

On the ops host HDSS7-200.host.com:

5.1 Build the Docker image

    mkdir /data/dockerfile/filebeat
    cd /data/dockerfile/filebeat

5.1.1 Prepare the Dockerfile

The checksum line passed to `sha512sum -c` needs two spaces between the hash and the file name.

    cat >Dockerfile <<'EOF'
    FROM debian:jessie
    # If you change the version, download the SHA-512 of the matching LINUX 64-BIT
    # package from the official site and replace FILEBEAT_SHA1 with it
    ENV FILEBEAT_VERSION=7.5.1 \
        FILEBEAT_SHA1=daf1a5e905c415daf68a8192a069f913a1d48e2c79e270da118385ba12a93aaa91bda4953c3402a6f0abf1c177f7bcc916a70bcac41977f69a6566565a8fae9c
    RUN set -x && \
        apt-get update && \
        apt-get install -y wget && \
        wget https://artifacts.elastic.co/downloads/beats/filebeat/filebeat-${FILEBEAT_VERSION}-linux-x86_64.tar.gz -O /opt/filebeat.tar.gz && \
        cd /opt && \
        echo "${FILEBEAT_SHA1}  filebeat.tar.gz" | sha512sum -c - && \
        tar xzvf filebeat.tar.gz && \
        cd filebeat-* && \
        cp filebeat /bin && \
        cd /opt && \
        rm -rf filebeat* && \
        apt-get purge -y wget && \
        apt-get autoremove -y && \
        apt-get clean && rm -rf /var/lib/apt/lists/* /tmp/* /var/tmp/*
    COPY filebeat.yaml /etc/
    COPY docker-entrypoint.sh /
    ENTRYPOINT ["/bin/bash","/docker-entrypoint.sh"]
    EOF

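5.1.2 Prepare the filebeat configuration file

The file is written into the build directory, because the Dockerfile copies filebeat.yaml from the build context. PROJ_NAME, MULTILINE and ENV are placeholders that the startup script replaces.

    cat >filebeat.yaml <<'EOF'
    filebeat.inputs:
    - type: log
      fields_under_root: true
      fields:
        topic: logm-PROJ_NAME
      paths:
        - /logm/*.log
        - /logm/*/*.log
        - /logm/*/*/*.log
        - /logm/*/*/*/*.log
        - /logm/*/*/*/*/*.log
      scan_frequency: 120s
      max_bytes: 10485760
      multiline.pattern: 'MULTILINE'
      multiline.negate: true
      multiline.match: after
      multiline.max_lines: 100
    - type: log
      fields_under_root: true
      fields:
        topic: logu-PROJ_NAME
      paths:
        - /logu/*.log
        - /logu/*/*.log
        - /logu/*/*/*.log
        - /logu/*/*/*/*.log
        - /logu/*/*/*/*/*.log
        - /logu/*/*/*/*/*/*.log
    output.kafka:
      hosts: ["10.4.7.11:9092"]
      topic: k8s-fb-ENV-%{[topic]}
      version: 2.0.0      # for Kafka versions above 2.0, use 2.0.0
      required_acks: 0
      max_message_bytes: 10485760
    EOF

5.1.3 Prepare the startup script

The sed expressions use double quotes so that the shell expands the variables, and backslashes in MULTILINE are doubled so that sed writes them out literally.

    cat >docker-entrypoint.sh <<'EOF'
    #!/bin/bash
    ENV=${ENV:-"test"}                     # environment whose logs are collected
    PROJ_NAME=${PROJ_NAME:-"no-define"}    # project name
    # Multiline pattern: a line starting with two digits begins a new event;
    # any other line is appended to the previous event
    MULTILINE=${MULTILINE:-"^\d{2}"}
    # Substitute the placeholders in the configuration file
    sed -i "s#PROJ_NAME#${PROJ_NAME}#g" /etc/filebeat.yaml
    sed -i "s#MULTILINE#${MULTILINE//\\/\\\\}#g" /etc/filebeat.yaml
    sed -i "s#ENV#${ENV}#g" /etc/filebeat.yaml
    if [[ "$1" == "" ]]; then
        exec filebeat -c /etc/filebeat.yaml
    else
        exec "$@"
    fi
    EOF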
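
Whether the placeholders in filebeat.yaml are actually replaced depends on how the sed expression is quoted. A minimal sketch on a scratch file (the one-line template and the project name are illustrative values, not the real configuration):

```shell
#!/bin/bash
PROJ_NAME="dubbo-demo-web"

tmpl=$(mktemp)
echo 'topic: logm-PROJ_NAME' > "$tmpl"

# Single quotes: the shell passes ${PROJ_NAME} to sed as literal text.
single=$(sed 's#PROJ_NAME#${PROJ_NAME}#g' "$tmpl")

# Double quotes: the shell expands ${PROJ_NAME} before sed runs.
double=$(sed "s#PROJ_NAME#${PROJ_NAME}#g" "$tmpl")

echo "$single"
echo "$double"
rm -f "$tmpl"
```

The first result still contains the text `${PROJ_NAME}`, while the second contains `topic: logm-dubbo-demo-web`, which is the form filebeat needs.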

5.1.4 Build the image

    docker build . -t harbor.zq.com/infra/filebeat:v7.5.1
    docker push harbor.zq.com/infra/filebeat:v7.5.1

5.2 Run the Pod in sidecar mode

5.2.1 Prepare the manifest

Use the dubbo-demo-consumer image and run filebeat next to it as a sidecar:

    ]# vim /data/k8s-yaml/test/dubbo-demo-consumer/deployment.yaml
    kind: Deployment
    apiVersion: extensions/v1beta1
    metadata:
      name: dubbo-demo-consumer
      namespace: test
      labels:
        name: dubbo-demo-consumer
    spec:
      replicas: 1
      selector:
        matchLabels:
          name: dubbo-demo-consumer
      template:
        metadata:
          labels:
            app: dubbo-demo-consumer
            name: dubbo-demo-consumer
          annotations:
            blackbox_path: "/hello?name=health"
            blackbox_port: "8080"
            blackbox_scheme: "http"
            prometheus_io_scrape: "true"
            prometheus_io_port: "12346"
            prometheus_io_path: "/"
        spec:
          containers:
          - name: dubbo-demo-consumer
            image: harbor.zq.com/app/dubbo-tomcat-web:apollo_200513_1808
            ports:
            - containerPort: 8080
              protocol: TCP
            - containerPort: 20880
              protocol: TCP
            env:
            - name: JAR_BALL
              value: dubbo-client.jar
            - name: C_OPTS
              value: -Denv=fat -Dapollo.meta=http://config-test.zq.com
            imagePullPolicy: IfNotPresent
            #-------- added content starts --------
            volumeMounts:
            - mountPath: /opt/tomcat/logs
              name: logm
          - name: filebeat
            image: harbor.zq.com/infra/filebeat:v7.5.1
            imagePullPolicy: IfNotPresent
            env:
            - name: ENV
              value: test               # test environment
            - name: PROJ_NAME
              value: dubbo-demo-web     # project name
            volumeMounts:
            - mountPath: /logm
              name: logm
          volumes:
          - emptyDir: {}    # created in a directory on the node; removed when the Pod is deleted
            name: logm
          #-------- added content ends --------
          imagePullSecrets:
          - name: harbor
          restartPolicy: Always
          terminationGracePeriodSeconds: 30
          securityContext:
            runAsUser: 0
          schedulerName: default-scheduler
      strategy:
        type: RollingUpdate
        rollingUpdate:
          maxUnavailable: 1
          maxSurge: 1
      revisionHistoryLimit: 7
      progressDeadlineSeconds: 600

5.2.2 Apply the manifest

On any node:

    kubectl apply -f http://k8s-yaml.zq.com/test/dubbo-demo-consumer/deployment.yaml

5.2.3 Verify

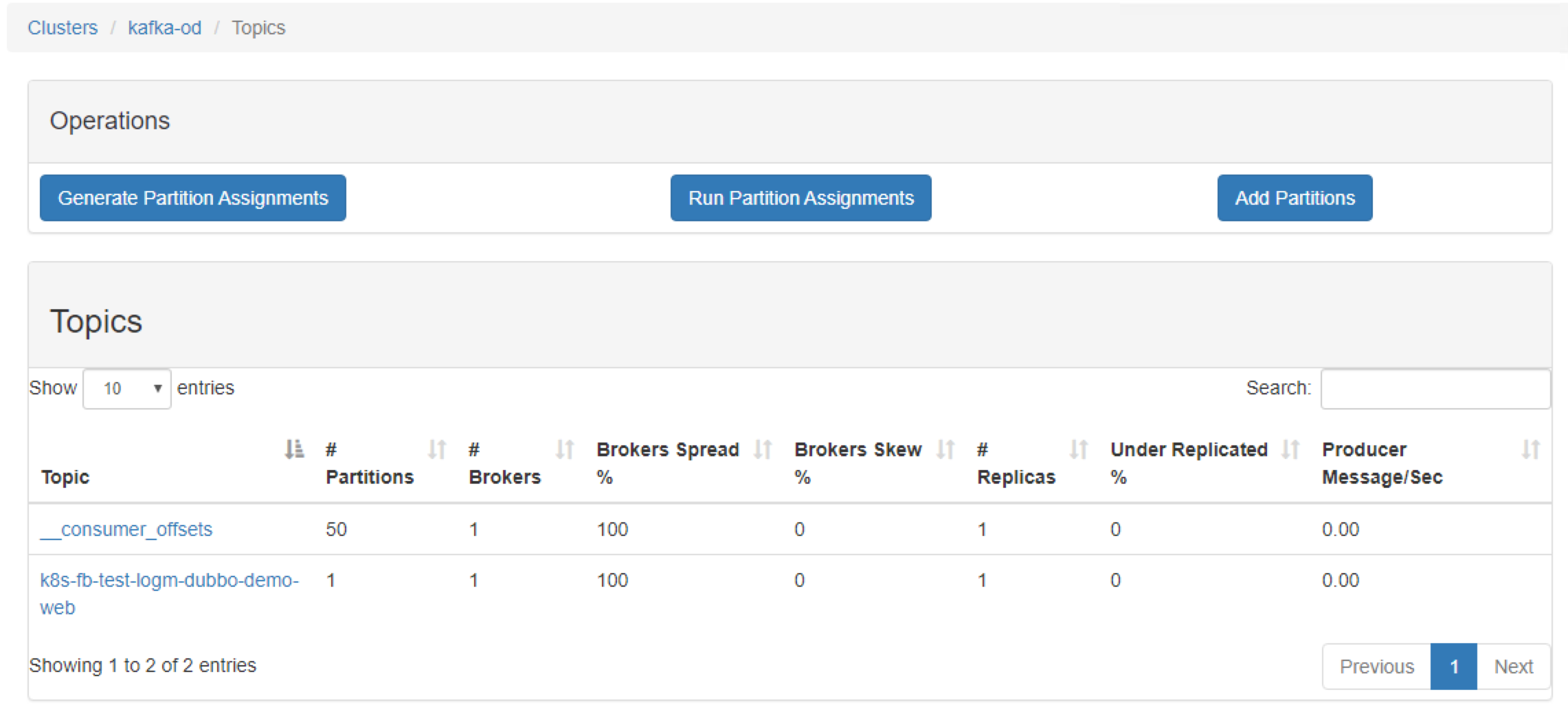

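Open http://km.zq.com in a browser. If the topic shows up in kafka-manager, the setup works.

Enter the filebeat container of the dubbo-demo-consumer Pod and check that the logm directory contains logs:

    kubectl -n test exec -it dubbo...... -c filebeat -- /bin/bash
    ls /logm
    # -c selects the filebeat container in the Pod
    # /logm is the directory mounted into the filebeat container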
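
The topic to look for in kafka-manager follows from the filebeat settings: `output.kafka.topic` is `k8s-fb-ENV-%{[topic]}` and the `topic` field is `logm-PROJ_NAME` or `logu-PROJ_NAME`. A minimal sketch of that naming, with hypothetical helper names `topic_name` and `matches_env`; the match mirrors the `k8s-fb-<env>-.*` form of the Logstash `topics_pattern` used in section 6.1.2:

```shell
#!/bin/bash
# topic_name ENV PREFIX PROJ_NAME: compose the Kafka topic filebeat writes to.
topic_name() {
  printf 'k8s-fb-%s-%s-%s\n' "$1" "$2" "$3"
}

# matches_env TOPIC ENV: succeed if TOPIC matches the regex ^k8s-fb-ENV-.*
matches_env() {
  [[ $1 =~ ^k8s-fb-$2-.* ]]
}

t=$(topic_name test logm dubbo-demo-web)
echo "$t"
matches_env "$t" test && echo "test pipeline would consume it"
matches_env "$t" prod || echo "prod pipeline would ignore it"
```

For the manifest above (ENV=test, PROJ_NAME=dubbo-demo-web, logs under /logm) the expected topic is `k8s-fb-test-logm-dubbo-demo-web`.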

6 Deploy Logstash

6.1 Prepare the Docker image

6.1.1 Pull the official image

    docker pull logstash:6.8.6
    docker tag d0a2dac51fcb harbor.zq.com/infra/logstash:v6.8.6
    docker push harbor.zq.com/infra/logstash:v6.8.6

6.1.2 Prepare the configuration files

Prepare the directory:

    mkdir /etc/logstash/

Create logstash-test.conf. The index date pattern uses `dd` (day of month); `DD` would be the day of the year.

    cat >/etc/logstash/logstash-test.conf <<'EOF'
    input {
      kafka {
        bootstrap_servers => "10.4.7.11:9092"
        client_id => "10.4.7.200"
        consumer_threads => 4
        group_id => "k8s_test"                # consumer group for test
        topics_pattern => "k8s-fb-test-.*"    # only consume topics starting with k8s-fb-test
      }
    }
    filter {
      json {
        source => "message"
      }
    }
    output {
      elasticsearch {
        hosts => ["10.4.7.12:9200"]
        index => "k8s-test-%{+YYYY.MM.dd}"
      }
    }
    EOF

Create logstash-prod.conf:

    cat >/etc/logstash/logstash-prod.conf <<'EOF'
    input {
      kafka {
        bootstrap_servers => "10.4.7.11:9092"
        client_id => "10.4.7.200"
        consumer_threads => 4
        group_id => "k8s_prod"
        topics_pattern => "k8s-fb-prod-.*"
      }
    }
    filter {
      json {
        source => "message"
      }
    }
    output {
      elasticsearch {
        hosts => ["10.4.7.12:9200"]
        index => "k8s-prod-%{+YYYY.MM.dd}"
      }
    }
    EOF
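
The date part of the index name is a Joda-Time pattern, in which `dd` is the day of the month and `DD` is the day of the year. The two give the same digits only in January. The sketch below illustrates the difference with GNU `date` as an analogy (`%d` for day of month, `%j` for day of year); it assumes GNU coreutils, the function names are made up, and `%j` pads to three digits where Joda's `DD` pads to two.

```shell
#!/bin/bash
# Analogy only: GNU date %d ~ Joda "dd", GNU date %j ~ Joda "DD".
index_by_day_of_month() { date -u -d "$1" +'k8s-test-%Y.%m.%d'; }
index_by_day_of_year()  { date -u -d "$1" +'k8s-test-%Y.%m.%j'; }

index_by_day_of_month 2020-01-07   # k8s-test-2020.01.07
index_by_day_of_year  2020-01-07   # k8s-test-2020.01.007
index_by_day_of_month 2020-02-07   # k8s-test-2020.02.07
index_by_day_of_year  2020-02-07   # k8s-test-2020.02.038
```

From February onwards a day-of-year pattern produces index names that no longer correspond to calendar dates, which makes time-based index cleanup and Kibana index patterns harder to reason about.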

6.2 Start Logstash

6.2.1 Start Logstash for the test environment

Options for Logstash itself, such as `-f`, must come after the image name; anything before it is parsed by `docker run`.

    docker run -d \
        --restart=always \
        --name logstash-test \
        -v /etc/logstash:/etc/logstash \
        harbor.zq.com/infra/logstash:v6.8.6 \
        -f /etc/logstash/logstash-test.conf
    ~]# docker ps -a|grep logstash

6.2.2 Check that ES receives data

    ~]# curl 'http://10.4.7.12:9200/_cat/indices?v'
    health status index               uuid                   pri rep docs.count docs.deleted store.size pri.store.size
    green  open   k8s-test-2020.01.07 mFEQUyKVTTal8c97VsmZHw   5   0         12            0     78.4kb         78.4kb
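
For a quick scripted check, the `_cat/indices?v` output can be filtered down to the k8s indices. A minimal sketch, assuming the column order shown in this section (health, status, index, uuid, pri, rep, docs.count, ...); the function name `k8s_indices` is made up and the sample input is the line from the output above.

```shell
#!/bin/bash
# k8s_indices: read `_cat/indices?v` output on stdin and print
# "<index> <health> <docs.count>" for every index starting with k8s-.
k8s_indices() {
  awk 'NR > 1 && $3 ~ /^k8s-/ { print $3, $1, $7 }'
}

# Against a live node one would pipe the curl output into the function:
#   curl -s 'http://10.4.7.12:9200/_cat/indices?v' | k8s_indices
k8s_indices <<'EOF'
health status index               uuid                   pri rep docs.count docs.deleted store.size pri.store.size
green  open   k8s-test-2020.01.07 mFEQUyKVTTal8c97VsmZHw   5   0         12            0     78.4kb         78.4kb
EOF
```

A non-zero document count for a `k8s-test-*` index indicates that the filebeat, Kafka, Logstash and ES chain is delivering data.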

6.2.3 Start Logstash for the production environment

    docker run -d \
        --restart=always \
        --name logstash-prod \
        -v /etc/logstash:/etc/logstash \
        harbor.zq.com/infra/logstash:v6.8.6 \
        -f /etc/logstash/logstash-prod.conf

7 Deploy Kibana

7.1 Prepare the resources

7.1.1 Prepare the Docker image

    docker pull kibana:6.8.6
    docker tag adfab5632ef4 harbor.zq.com/infra/kibana:v6.8.6
    docker push harbor.zq.com/infra/kibana:v6.8.6

7.1.2 Prepare the directory

    mkdir /data/k8s-yaml/kibana
    cd /data/k8s-yaml/kibana

7.1.3 Prepare the Deployment manifest

    cat >deployment.yaml <<'EOF'
    kind: Deployment
    apiVersion: extensions/v1beta1
    metadata:
      name: kibana
      namespace: infra
      labels:
        name: kibana
    spec:
      replicas: 1
      selector:
        matchLabels:
          name: kibana
      template:
        metadata:
          labels:
            app: kibana
            name: kibana
        spec:
          containers:
          - name: kibana
            image: harbor.zq.com/infra/kibana:v6.8.6
            imagePullPolicy: IfNotPresent
            ports:
            - containerPort: 5601
              protocol: TCP
            env:
            - name: ELASTICSEARCH_URL
              value: http://10.4.7.12:9200
          imagePullSecrets:
          - name: harbor
          securityContext:
            runAsUser: 0
      strategy:
        type: RollingUpdate
        rollingUpdate:
          maxUnavailable: 1
          maxSurge: 1
      revisionHistoryLimit: 7
      progressDeadlineSeconds: 600
    EOF

7.1.4 Prepare the Service manifest

    cat >service.yaml <<'EOF'
    kind: Service
    apiVersion: v1
    metadata:
      name: kibana
      namespace: infra
    spec:
      ports:
      - protocol: TCP
        port: 5601
        targetPort: 5601
      selector:
        app: kibana
    EOF

7.1.5 Prepare the Ingress manifest

    cat >ingress.yaml <<'EOF'
    kind: Ingress
    apiVersion: extensions/v1beta1
    metadata:
      name: kibana
      namespace: infra
    spec:
      rules:
      - host: kibana.zq.com
        http:
          paths:
          - path: /
            backend:
              serviceName: kibana
              servicePort: 5601
    EOF

7.2 Apply the resources

7.2.1 Apply the manifests

    kubectl apply -f http://k8s-yaml.zq.com/kibana/deployment.yaml
    kubectl apply -f http://k8s-yaml.zq.com/kibana/service.yaml
    kubectl apply -f http://k8s-yaml.zq.com/kibana/ingress.yaml

7.2.2 Add the DNS record

    ~]# vim /var/named/zq.com.zone
    kibana    A    10.4.7.10
    ~]# systemctl restart named
    ~]# dig -t A kibana.zq.com @10.4.7.11 +short
    10.4.7.10

7.2.3 Access from a browser

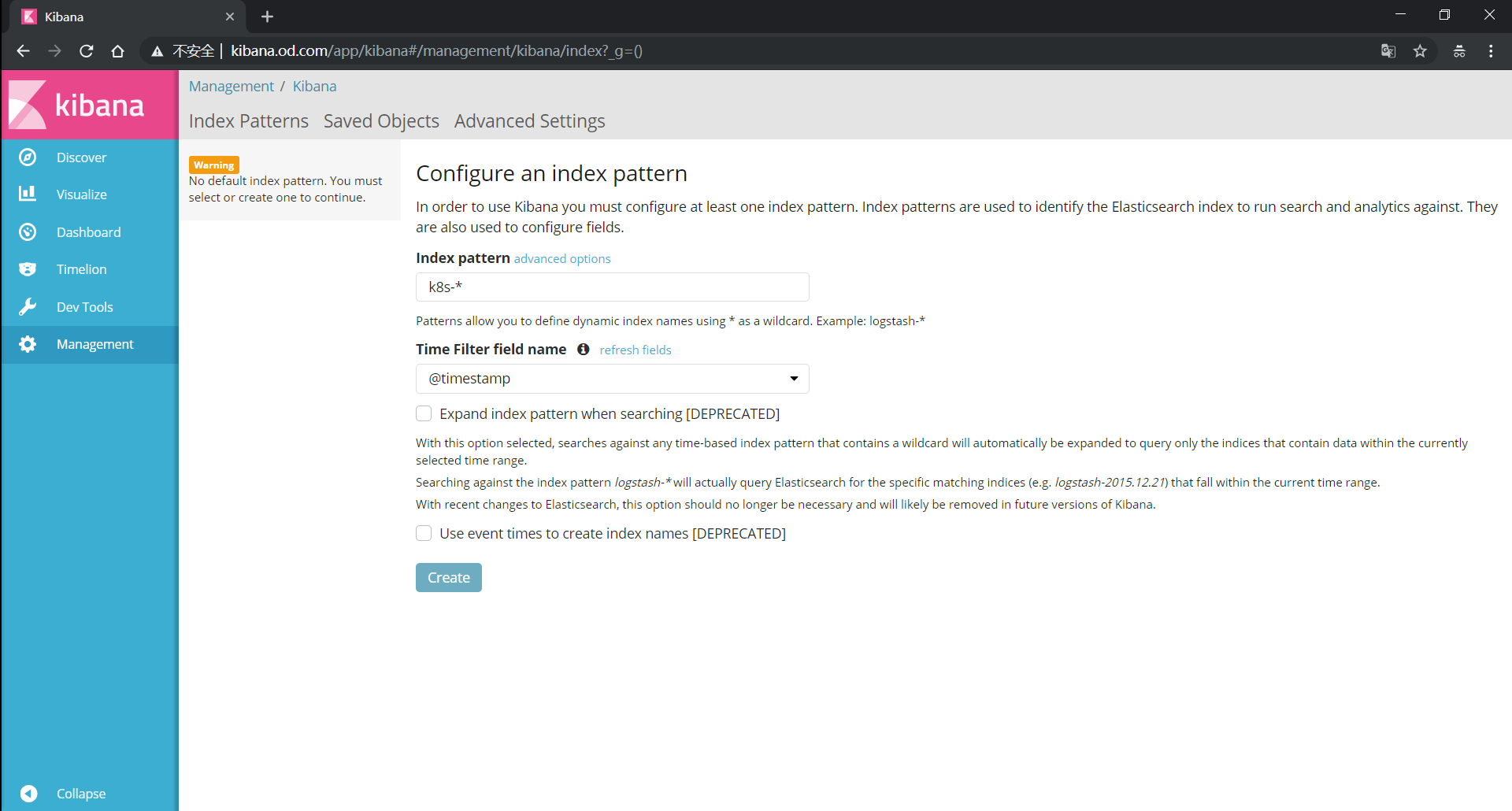

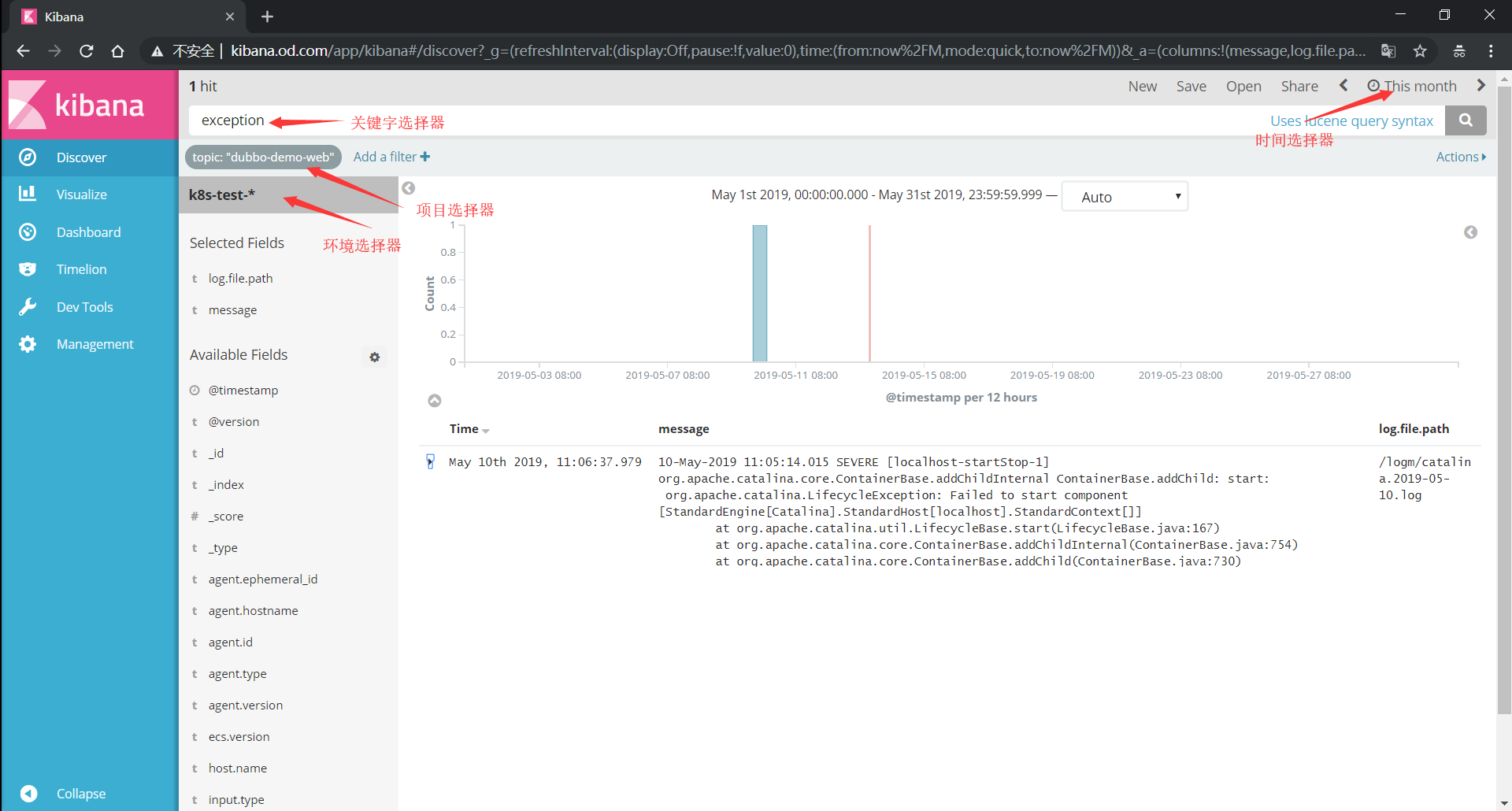

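7.3 Using Kibana

Field selection area

| Item | Purpose |
| ---- | ---- |
| @timestamp | Timestamp of the log entry |
| log.file.path | Name of the log file |
| message | Content of the log entry |

Time picker

Select the time range of the logs:

- Quick

- Absolute

- Relative

Environment selector

Select the logs of the corresponding environment:

- k8s-test-*

- k8s-prod-*

Project selector

- Corresponds to the PROJ_NAME value set for filebeat

- Add a filter

- topic is ${PROJ_NAME}, for example dubbo-demo-service or dubbo-demo-web

Keyword selector

- exception

- error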