Server information

| IP | Memory | Hostname |
|---|---|---|
| 192.168.41.136 | 8 GB | manager |
| 192.168.41.137 | 4 GB | worker1 |
| 192.168.41.138 | 4 GB | worker2 |

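```bash
# On the manager node (192.168.41.136):
hostnamectl --static set-hostname manager
# On each worker node, use its number from the table above:
hostnamectl --static set-hostname worker1   # on 192.168.41.137
hostnamectl --static set-hostname worker2   # on 192.168.41.138
```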
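The per-node commands above can also be folded into one helper that looks up the hostname in the server table, so the same snippet can be pasted on every node. This is a minimal sketch that assumes each node is addressed by exactly the IPs in the table. `hostname_for_ip` and `apply_hostname` are hypothetical helper names.

```bash
# Hypothetical helper: map a node IP from the server table to its hostname.
hostname_for_ip() {
  case "$1" in
    192.168.41.136) echo manager ;;
    192.168.41.137) echo worker1 ;;
    192.168.41.138) echo worker2 ;;
    *) return 1 ;;  # IP not listed in the table
  esac
}

# Hypothetical helper: set the static hostname for the node with the given IP
# (run as root on that node).
apply_hostname() {
  local name
  name="$(hostname_for_ip "$1")" || { echo "unknown node IP: $1" >&2; return 1; }
  hostnamectl --static set-hostname "$name"
}
```

For example, on worker1 you would run `apply_hostname 192.168.41.137`.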

Docker installation

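```bash
# Install Docker with the convenience script, using the Aliyun mirror
curl -fsSL https://get.docker.com -o get-docker.sh
sudo sh get-docker.sh --mirror Aliyun
# Start Docker
systemctl start docker
# Start Docker on boot
systemctl enable docker   # or, on older init systems: chkconfig docker on
```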
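After installation, you can confirm three things: the daemon is running, it will start on boot, and which version was installed. This is a small sketch that uses standard `systemctl` and `docker version` calls. The function name `check_docker` is hypothetical.

```bash
# Hypothetical helper: verify the Docker daemon after installation.
check_docker() {
  # Fail if the docker service is not currently running.
  systemctl is-active --quiet docker || { echo "docker is not running" >&2; return 1; }
  # Only warn if it is not enabled to start at boot.
  systemctl is-enabled --quiet docker || echo "warning: docker is not enabled at boot" >&2
  # Print the server (daemon) version.
  docker version --format '{{.Server.Version}}'
}
```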

Log in to the image registry:

```bash
docker login -u username -p password registry.cn-beijing.aliyuncs.com
```
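Passing the password with `-p` leaves it in the shell history and the process list, and Docker warns about this. `docker login` also accepts the password on standard input via `--password-stdin`. This sketch assumes the credentials are in the environment variables `REGISTRY_USER` and `REGISTRY_PASSWORD`; those names and the `registry_login` helper are hypothetical.

```bash
# Hypothetical helper: log in without putting the password on the command line.
# Assumes REGISTRY_USER and REGISTRY_PASSWORD are set in the environment.
registry_login() {
  local registry="${1:-registry.cn-beijing.aliyuncs.com}"
  printf '%s' "$REGISTRY_PASSWORD" |
    docker login -u "$REGISTRY_USER" --password-stdin "$registry"
}
```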

Docker Swarm setup

Open the ports used by Swarm

Cluster nodes must be able to reach each other on TCP 2377, TCP/UDP 7946 and UDP 4789:

- TCP 2377: cluster management traffic
- TCP and UDP 7946: communication between nodes
- UDP 4789: overlay network traffic

```bash
firewall-cmd --zone=public --add-port=2377/tcp --permanent
firewall-cmd --zone=public --add-port=7946/tcp --permanent
firewall-cmd --zone=public --add-port=7946/udp --permanent
firewall-cmd --zone=public --add-port=4789/udp --permanent
# 9000: Portainer web UI; 2375: Docker remote API (both used later)
firewall-cmd --add-port=9000/tcp --permanent
firewall-cmd --add-port=2375/tcp --permanent
firewall-cmd --reload
```
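You can also apply the same firewall rules in a loop, which keeps the port list in one place. This sketch assumes you are using firewalld's `public` zone. `SWARM_PORTS` and `open_ports` are hypothetical names.

```bash
# Ports Swarm needs between nodes (see the list above).
SWARM_PORTS="2377/tcp 7946/tcp 7946/udp 4789/udp"

# Hypothetical helper: permanently open each port/protocol pair, then reload firewalld.
open_ports() {
  local p
  for p in "$@"; do
    firewall-cmd --zone=public --add-port="$p" --permanent || return 1
  done
  firewall-cmd --reload
}
```

On every node, run `open_ports $SWARM_PORTS`. Leave the variable unquoted so the list splits into separate words. Append `9000/tcp 2375/tcp` on nodes that need Portainer or the remote API.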

List the opened ports:

```bash
firewall-cmd --list-ports
```
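To check that nothing was missed, compare the output of `firewall-cmd --list-ports` against the required ports. The output is a space-separated list such as `2377/tcp 7946/tcp`. This sketch queries firewalld's default zone and assumes that zone is `public`. `missing_ports` is a hypothetical name.

```bash
# Hypothetical helper: print each required port that is not open in the default zone.
missing_ports() {
  local open p
  # Pad with spaces so every entry is delimited on both sides.
  open=" $(firewall-cmd --list-ports) "
  for p in "$@"; do
    case "$open" in
      *" $p "*) ;;      # already open
      *) echo "$p" ;;   # not found
    esac
  done
}
```

For example, `missing_ports 2377/tcp 7946/tcp 7946/udp 4789/udp` prints nothing when all four ports are open.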

Initialize the manager node

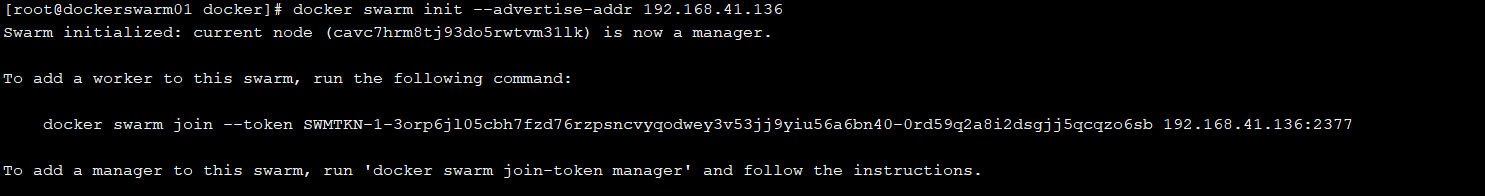

```bash
docker swarm init --advertise-addr <MANAGER-IP>
# e.g.
docker swarm init --advertise-addr 192.168.41.136
```
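Running `docker swarm init` on a node that is already part of a swarm fails with an error. When you script this step, first check the node's swarm state with `docker info`. `ensure_swarm` is a hypothetical name.

```bash
# Hypothetical helper: initialize a swarm only if this node is not already in one.
ensure_swarm() {
  local state
  # LocalNodeState is e.g. "inactive" before init and "active" afterwards.
  state="$(docker info --format '{{.Swarm.LocalNodeState}}')" || return 1
  if [ "$state" = "active" ]; then
    echo "swarm already active"
  else
    docker swarm init --advertise-addr "$1"
  fi
}
```

On the manager node, run `ensure_swarm 192.168.41.136`.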

Result:

The output includes guidance for the next steps and shows how to add a worker node to the swarm.

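View the join token before adding other nodes

To add other nodes to the cluster, first run the following command on the manager node. It prints the complete command, including the token, to run on the other nodes.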

Show how to add a worker node:

```bash
docker swarm join-token worker
```
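With `-q`, `docker swarm join-token` prints only the token, which makes it easy to build the join command in a script. This sketch assumes the manager address from the server table. `join_command` is a hypothetical name. Run the command it prints on the worker node.

```bash
# Hypothetical helper (run on the manager): build the join command for a role.
join_command() {
  local role="${1:-worker}" addr="${2:-192.168.41.136:2377}" token
  # -q prints only the token instead of the full instructions.
  token="$(docker swarm join-token -q "$role")" || return 1
  printf 'docker swarm join --token %s %s\n' "$token" "$addr"
}
```

For example, `join_command worker` prints the line to run on worker1 and worker2.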

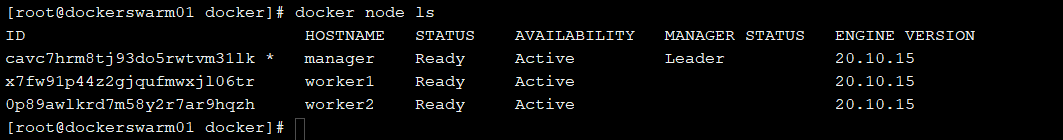

View the swarm cluster information:

```bash
docker info
docker node ls
```

In the `docker node ls` output, the AVAILABILITY column shows whether the swarm scheduler can assign tasks to a node. It has three possible values:

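- Active: the scheduler can assign tasks to the node.
- Pause: the scheduler does not assign new tasks to the node, but existing tasks keep running.
- Drain: the scheduler does not assign new tasks to the node. It stops the node's existing tasks and reschedules them on available nodes.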
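To change a node's availability, run `docker node update --availability` on a manager. A typical use is to drain a worker before maintenance and reactivate it afterwards. `set_availability` is a hypothetical wrapper that rejects any value other than the three listed above.

```bash
# Hypothetical wrapper (run on a manager): set a node's availability.
set_availability() {
  local node="$1" mode="$2"
  case "$mode" in
    active|pause|drain) docker node update --availability "$mode" "$node" ;;
    *) echo "availability must be active, pause or drain" >&2; return 1 ;;
  esac
}
```

For example, run `set_availability worker1 drain` before maintenance and `set_availability worker1 active` afterwards. Swarm does not automatically move the drained tasks back. The node only picks up new tasks as services are updated, scaled, or rescheduled.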

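To view a single node's details, run the following on a manager node. `self` refers to the node the command runs on. You can name other nodes by hostname or ID. `docker node` commands only work on managers.

```bash
docker node inspect self
```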

```json
[
  {
    "ID": "cavc7hrm8tj93do5rwtvm31lk",
    "Version": {"Index": 9},
    "CreatedAt": "2022-05-10T06:43:05.112178247Z",
    "UpdatedAt": "2022-05-10T06:43:05.723262101Z",
    "Spec": {"Labels": {}, "Role": "manager", "Availability": "active"},
    "Description": {
      "Hostname": "manager",
      "Platform": {"Architecture": "x86_64", "OS": "linux"},
      "Resources": {"NanoCPUs": 2000000000, "MemoryBytes": 8181821440},
      "Engine": {
        "EngineVersion": "20.10.15",
        "Plugins": [
          {"Type": "Log", "Name": "awslogs"},
          {"Type": "Log", "Name": "fluentd"},
          {"Type": "Log", "Name": "gcplogs"},
          {"Type": "Log", "Name": "gelf"},
          {"Type": "Log", "Name": "journald"},
          {"Type": "Log", "Name": "json-file"},
          {"Type": "Log", "Name": "local"},
          {"Type": "Log", "Name": "logentries"},
          {"Type": "Log", "Name": "splunk"},
          {"Type": "Log", "Name": "syslog"},
          {"Type": "Network", "Name": "bridge"},
          {"Type": "Network", "Name": "host"},
          {"Type": "Network", "Name": "ipvlan"},
          {"Type": "Network", "Name": "macvlan"},
          {"Type": "Network", "Name": "null"},
          {"Type": "Network", "Name": "overlay"},
          {"Type": "Volume", "Name": "local"}
        ]
      },
      "TLSInfo": {
        "TrustRoot": "-----BEGIN CERTIFICATE-----\nMIIBajCCARCgAwIBAgIUfLOj3Lu72NmJ8Ru26dSuXqHGDVswCgYIKoZIzj0EAwIw\nEzERMA8GA1UEAxMIc3dhcm0tY2EwHhcNMjIwNTEwMDYzODAwWhcNNDIwNTA1MDYz\nODAwWjATMREwDwYDVQQDEwhzd2FybS1jYTBZMBMGByqGSM49AgEGCCqGSM49AwEH\nA0IABAcfyEUnuOC/D0oWCzPkSJTWWKovxOih9zWcVdtPgIGzdUIvw0K8b3AnOEiU\nUsuwUJz/euc06MDzVEtQ8mHYOT+jQjBAMA4GA1UdDwEB/wQEAwIBBjAPBgNVHRMB\nAf8EBTADAQH/MB0GA1UdDgQWBBRVWdfH72g1VDgsE7vbg+l9VG2IgzAKBggqhkjO\nPQQDAgNIADBFAiBXOIuel39krzyWleTfl5fuDyZLuh+/OVVzEta4PgepDAIhAJ1n\nLN44fHJbBCIFxWXv1rP4O0yJzfMX/84xFB9LntMC\n-----END CERTIFICATE-----\n",
        "CertIssuerSubject": "MBMxETAPBgNVBAMTCHN3YXJtLWNh",
        "CertIssuerPublicKey": "MFkwEwYHKoZIzj0CAQYIKoZIzj0DAQcDQgAEBx/IRSe44L8PShYLM+RIlNZYqi/E6KH3NZxV20+AgbN1Qi/DQrxvcCc4SJRSy7BQnP965zTowPNUS1DyYdg5Pw=="
      }
    },
    "Status": {"State": "ready", "Addr": "192.168.41.136"},
    "ManagerStatus": {"Leader": true, "Reachability": "reachable", "Addr": "192.168.41.136:2377"}
  }
]
```
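Instead of reading the full JSON, you can pass `--format` a Go template to pick out individual fields. The field paths below come from the output above. `node_summary` is a hypothetical name.

```bash
# Hypothetical helper (run on a manager): one-line summary of a node.
# Defaults to "self", i.e. the node the command runs on.
node_summary() {
  docker node inspect "${1:-self}" \
    --format '{{.Description.Hostname}} {{.Spec.Role}} {{.Spec.Availability}} {{.Status.State}}'
}
```

For the node shown above, this would print `manager manager active ready`. For a human-readable view of all fields, `docker node inspect --pretty self` is an alternative.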

Show how to add another manager node:

```bash
docker swarm join-token manager
```
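Before adding managers, keep in mind that Swarm managers store the cluster state using the Raft consensus algorithm. Raft needs a majority of managers to be available. A swarm with N managers therefore tolerates the loss of at most (N-1)/2 managers, rounded down. This is why an odd number of managers is usually recommended. The arithmetic is shown below as a tiny helper; `manager_fault_tolerance` is a hypothetical name.

```bash
# Hypothetical helper: how many manager failures a swarm of N managers survives.
# Raft needs a majority (N/2 + 1, rounded down) of managers up, so up to (N-1)/2 may fail.
manager_fault_tolerance() {
  echo $(( ($1 - 1) / 2 ))
}
```

With 3 managers the swarm survives 1 failure. Adding a 4th manager still only covers 1 failure, while 5 managers cover 2.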

Create a cross-host network for applications

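Create an overlay network (run on the manager node):

```bash
docker network create --attachable --driver overlay my-network
```

Output: `8fvtabqh3uce2bg19zkk84ype` (the ID of the new network).

The `--attachable` flag lets standalone containers started with `docker run` join this network, in addition to swarm services. The network name (`my-network` here) is your choice.

Once you create the overlay network `my-network`, it is available on the manager nodes. Swarm extends it to a worker node when it schedules a task that uses the network on that node. When creating a service, simply specify the existing overlay network:

```bash
docker service create \
  --replicas 3 \
  --network my-network \
  --name myweb \
  nginx
```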
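To confirm that the replicas actually started, use `docker service ps`, which lists the service's tasks. With `--filter desired-state=running` and `--format '{{.CurrentState}}'`, each output line starts with the task's current state, for example `Running 2 minutes ago`. `all_tasks_running` is a hypothetical name.

```bash
# Hypothetical helper (run on a manager): succeed only if every task that should
# be running for the service is actually in the Running state.
all_tasks_running() {
  local states
  states="$(docker service ps "$1" --filter desired-state=running --format '{{.CurrentState}}')" || return 1
  [ -n "$states" ] || return 1   # no tasks at all
  # Fail if any line does not start with "Running".
  ! printf '%s\n' "$states" | grep -qv '^Running'
}
```

For example: `all_tasks_running myweb && echo "myweb is up"`.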

Create a UI management container

Manage the container cluster through a web UI (Portainer):

```bash
docker run -d -p 9000:9000 -v /var/run/docker.sock:/var/run/docker.sock portainer/portainer
```
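The command above does not mount a named volume for Portainer's data, so its settings are not carried over if the container is recreated. Below is a variant that adds a restart policy and a named volume for Portainer's `/data` directory. The container name `portainer` and the volume name `portainer_data` are assumptions. Newer Portainer releases are published as `portainer/portainer-ce`, so the image is a parameter.

```bash
# Hypothetical helper: run Portainer with a restart policy and a persistent data volume.
# The container name "portainer" and volume "portainer_data" are assumptions;
# Docker creates the named volume on first use.
run_portainer() {
  local image="${1:-portainer/portainer}"
  docker run -d --name portainer --restart=always \
    -p 9000:9000 \
    -v /var/run/docker.sock:/var/run/docker.sock \
    -v portainer_data:/data \
    "$image"
}
```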

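Modify the Docker service unit file so the daemon also listens on TCP port 2375. Portainer needs this to add a node as a remote endpoint.

```bash
vi /usr/lib/systemd/system/docker.service
```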

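Change the `ExecStart` line to:

```
ExecStart=/usr/bin/dockerd -H tcp://0.0.0.0:2375 -H unix:///var/run/docker.sock
# Alternative that keeps the default socket activation and containerd socket:
#ExecStart=/usr/bin/dockerd -H fd:// -H tcp://0.0.0.0:2375 --containerd=/run/containerd/containerd.sock
```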

Then reload the unit files and restart Docker:

```bash
systemctl daemon-reload
systemctl restart docker
```
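Upgrading the Docker package can overwrite edits to `/usr/lib/systemd/system/docker.service`. systemd also reads drop-in files from `/etc/systemd/system/docker.service.d/`, and these survive upgrades. In a drop-in, an empty `ExecStart=` line clears the packaged command before the new one is set. The sketch below writes such a file. The `override.conf` file name and the `write_dockerd_override` helper are assumptions. Afterwards, run `systemctl daemon-reload` and `systemctl restart docker` as above.

```bash
# Hypothetical helper: write a systemd drop-in that makes dockerd also listen on TCP 2375.
# Defaults to the standard drop-in directory; pass another directory to try it out.
write_dockerd_override() {
  local dir="${1:-/etc/systemd/system/docker.service.d}"
  mkdir -p "$dir" || return 1
  cat > "$dir/override.conf" <<'EOF'
[Service]
# An empty ExecStart= clears the command from the packaged unit first.
ExecStart=
ExecStart=/usr/bin/dockerd -H unix:///var/run/docker.sock -H tcp://0.0.0.0:2375
EOF
}
```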

Open the UI at `http://<IP>:9000`.

Choose Remote, then enter the server's IP and port (2375). Make sure port 2375 is open:

```bash
firewall-cmd --add-port=2375/tcp --permanent
firewall-cmd --reload
```

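This setup exposes the Docker remote API without any authentication. Anyone who can reach port 2375 has full control of the Docker daemon, so managing the cluster through this UI setup is not recommended in production.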

Configure the other nodes

On the other nodes, also open port 2375 and modify the Docker configuration in the same way.
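Once every node listens on port 2375, you can check each one from any machine before adding it in Portainer, by querying its daemon with the Docker CLI's `-H` option. This sketch assumes the IPs from the server table. `check_remote_api` is a hypothetical name.

```bash
# Hypothetical helper: query the remote Docker API of each node on port 2375.
check_remote_api() {
  local ip version rc=0
  for ip in "$@"; do
    if version="$(docker -H "tcp://$ip:2375" version --format '{{.Server.Version}}')"; then
      echo "$ip ok ($version)"
    else
      echo "$ip unreachable" >&2
      rc=1
    fi
  done
  return "$rc"
}
```

For example: `check_remote_api 192.168.41.136 192.168.41.137 192.168.41.138`.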