- 1. Ozone安装包下载地址

- 2. 解压安装包到指定目录下

- 3. 配置环境变量

- 在所有Ozone节点配置Ozone适配Hadoop环境的jar包,使hdfs命令可以识别Ozone的schema

- 分发安装包

scp -r /home/hadoop/core/ozone-1.2.1 hadoop@${ip}:/home/hadoop/core/

#配置发生变化,执行同步文件

rsync -av /home/hadoop/core/ozone-1.2.1/etc/ozone-site.xml hadoop@${ip}:/home/hadoop/core/ozone-1.2.1/etc/ozone-site.xml

#查看ozone角色节点

ozone admin om roles -id=om

ozone admin scm roles -id=scm

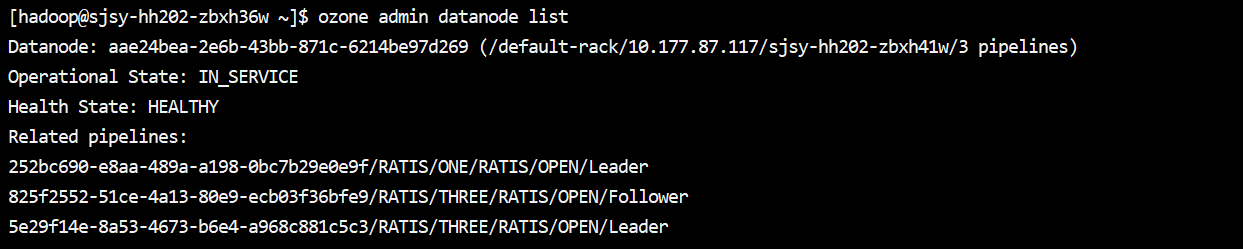

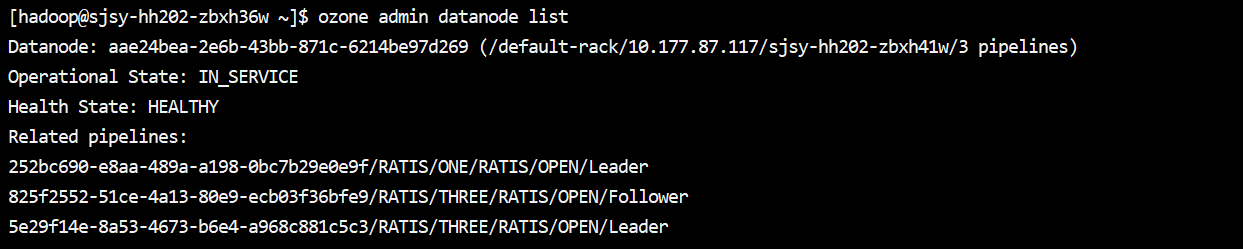

#列出所有的datanode

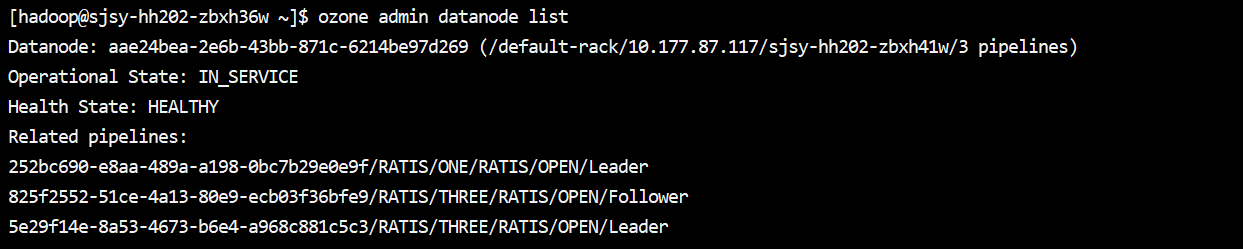

ozone admin datanode list

SCM和OM各自有独立的UI界面端口:

SCM:http://ip:9876/

OM:http:http://ip:9874/

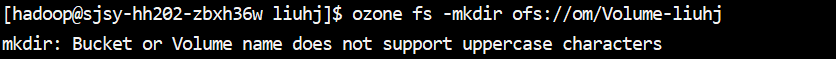

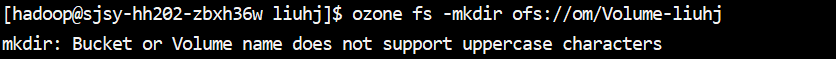

Recon:http://ip:9888/">创建volume、bucket,卷桶不能用大写字母、下划线和纯数字

创建方式一:

ozone sh bucket create /test_volume

ozone sh bucket create /test_volume/test_bucket

创建方式二:

ozone fs -mkdir ofs://om/test_volume

#查看ozone角色节点

ozone admin om roles -id=om

ozone admin scm roles -id=scm

#列出所有的datanode

ozone admin datanode list

SCM和OM各自有独立的UI界面端口:

SCM:http://ip:9876/

OM:http:http://ip:9874/

Recon:http://ip:9888/

1. Download the Ozone installation package

https://ozone.apache.org/downloads/

2. Extract the package into the target directory

tar -zxvf ozone-1.2.1.tar.gz -C /home/hadoop/core

3. Configure environment variables

Add the following environment variables:

export JAVA_HOME=/usr/java/jdk1.8.0_271-amd64

export OZONE_HOME=/home/hadoop/core/ozone-1.2.1

export PATH=$JAVA_HOME/bin:$OZONE_HOME/bin:$PATH

On every Ozone node, add the Ozone filesystem jar for Hadoop to HADOOP_CLASSPATH so that hdfs commands recognize the Ozone schemes:

export HADOOP_CLASSPATH=/home/hadoop/core/ozone-1.2.1/share/ozone/lib/ozone-filesystem-hadoop3-*.jar:$HADOOP_CLASSPATH

The concrete commands:

:::tips

sudo sed -i '80iexport OZONE_HOME=/home/hadoop/core/ozone-1.2.1' /etc/profile

sudo sed -i '81iexport JAVA_HOME=/usr/java/jdk1.8.0_271-amd64' /etc/profile

sudo sed -i 's#export PATH=\$ALLUXIO_HOME/bin:\$PATH#export PATH=\$JAVA_HOME/bin:\$ALLUXIO_HOME/bin:\$OZONE_HOME/bin:\$PATH#g' /etc/profile

source /etc/profile

:::

Note: JAVA_HOME must be set. Otherwise startup fails with an error saying that the Java environment cannot be found.

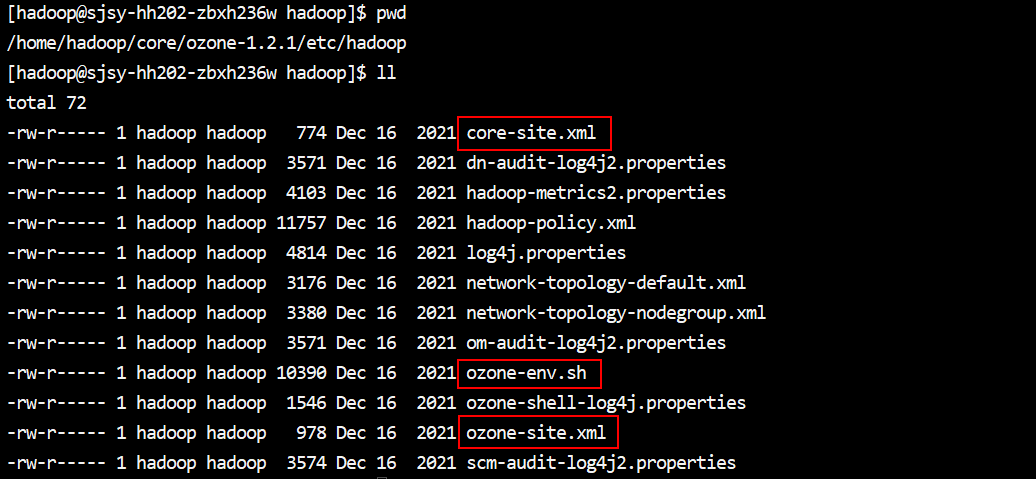
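A quick check before starting any daemon can catch the missing-variable case described in the note. The following is a minimal sketch in POSIX shell; missing_env is a hypothetical helper name, not an Ozone command, and it only tests that the variables are non-empty, not that they point at valid installations.

```shell
# Hypothetical helper: print the name of every listed variable that is
# unset or empty, and return non-zero if at least one is missing.
# Note: uses the global scratch variables rc, name and value.
missing_env() {
  rc=0
  for name in "$@"; do
    # Indirect lookup: read the value of the variable whose name is in $name.
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "$name"
      rc=1
    fi
  done
  return "$rc"
}

# Check the two variables configured in this step.
missing_env JAVA_HOME OZONE_HOME || echo "set the variables listed above before starting Ozone" >&2
```

Run it in a fresh login shell after source /etc/profile so that it sees the same environment the daemons will see.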

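4. Configuration files

Generate the configuration files: ozone genconf $OZONE_HOME/etc/hadoop

When the command finishes, check /home/hadoop/core/ozone-1.2.1/etc/hadoop to confirm that the configuration files were generated. The generated configuration is not complete; it contains only a few key settings, and further properties can be added as needed.

ozone-env.sh is configured as follows:

export OZONE_OPTS="-XX:ParallelGCThreads=8 -XX:+UseConcMarkSweepGC -XX:CMSInitiatingOccupancyFraction=75 -XX:+CMSParallelRemarkEnabled"

ozone-site.xml is configured as follows: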

<configuration>

<!-- SCM -->

<property>

<name>ozone.scm.ratis.enable</name>

<value>true</value>

</property>

<property>

<name>ozone.scm.service.ids</name>

<value>scm</value>

</property>

<property>

<name>ozone.scm.nodes.scm</name>

<value>scm1,scm2,scm3</value>

</property>

<property>

<name>ozone.scm.address.scm.scm1</name>

<value>sjsy-hh202-zbxh36w</value>

</property>

<property>

<name>ozone.scm.address.scm.scm2</name>

<value>sjsy-hh202-zbxh37w</value>

</property>

<property>

<name>ozone.scm.address.scm.scm3</name>

<value>sjsy-hh202-zbxh38w</value>

</property>

<property>

<name>ozone.scm.container.size</name>

<value>5GB</value>

</property>

<property>

<name>ozone.scm.db.dirs</name>

<value>/data/disk03/metadata/ozone/scm</value>

</property>

<property>

<name>ozone.scm.pipeline.owner.container.count</name>

<value>3</value>

</property>

<!-- OM -->

<property>

<name>ozone.om.ratis.enable</name>

<value>true</value>

</property>

<property>

<name>ozone.om.service.ids</name>

<value>om</value>

</property>

<property>

<name>ozone.om.nodes.om</name>

<value>om1,om2,om3</value>

</property>

<property>

<name>ozone.om.db.dirs</name>

<value>/data/disk03/metadata/ozone/om</value>

<description>

Directory where the OzoneManager stores its metadata. This should

be specified as a single directory. If the directory does not

exist then the OM will attempt to create it.

If undefined, then the OM will log a warning and fallback to

ozone.metadata.dirs. This fallback approach is not recommended for

production environments.

</description>

</property>

<property>

<name>ozone.om.address.om.om1</name>

<value>sjsy-hh202-zbxh36w</value>

</property>

<property>

<name>ozone.om.address.om.om2</name>

<value>sjsy-hh202-zbxh37w</value>

</property>

<property>

<name>ozone.om.address.om.om3</name>

<value>sjsy-hh202-zbxh38w</value>

</property>

<!-- datanode -->

<property>

<name>ozone.scm.datanode.address</name>

<value>sjsy-hh202-zbxh39w,sjsy-hh202-zbxh40w,sjsy-hh202-zbxh41w,sjsy-hh202-zbxh42w</value>

</property>

<property>

<name>ozone.scm.datanode.id.dir</name>

<value>/data/disk03/metadata/ozone/node</value>

</property>

<property>

<name>hdds.datanode.dir</name>

<value>/data/disk01/ozonedata,/data/disk02/ozonedata,/data/disk03/ozonedata,/data/disk04/ozonedata,/data/disk05/ozonedata,/data/disk06/ozonedata,/data/disk07/ozonedata,/data/disk08/ozonedata,/data/disk09/ozonedata,/data/disk10/ozonedata,/data/disk11/ozonedata,/data/disk12/ozonedata,/data/disk13/ozonedata,/data/disk14/ozonedata,/data/disk15/ozonedata,/data/disk16/ozonedata,/data/disk17/ozonedata,/data/disk18/ozonedata,/data/disk19/ozonedata,/data/disk20/ozonedata,/data/disk21/ozonedata,/data/disk22/ozonedata,/data/disk23/ozonedata,/data/disk24/ozonedata,/data/disk25/ozonedata,/data/disk26/ozonedata,/data/disk27/ozonedata,/data/disk28/ozonedata,/data/disk29/ozonedata,/data/disk30/ozonedata,/data/disk31/ozonedata,/data/disk32/ozonedata,/data/disk33/ozonedata,/data/disk34/ozonedata,/data/disk35/ozonedata,/data/disk36/ozonedata</value>

</property>

<property>

<name>dfs.container.ratis.datanode.storage.dir</name>

<value>/data/disk03/metadata/ozone/ratisdatanode</value>

</property>

<!-- recon -->

<property>

<name>ozone.recon.db.dir</name>

<value>/data/disk03/metadata/ozone/recon</value>

</property>

<property>

<name>ozone.recon.address</name>

<value>sjsy-hh202-zbxh37w:9891</value>

</property>

<property>

<name>recon.om.snapshot.task.interval.delay</name>

<value>1m</value>

</property>

<property>

<name>ozone.om.address</name>

<value>sjsy-hh202-zbxh36w,sjsy-hh202-zbxh37w,sjsy-hh202-zbxh38w</value>

<tag>OM, REQUIRED</tag>

<description>

The address of the Ozone OM service. This allows clients to discover

the address of the OM.

</description>

</property>

<property>

<name>ozone.metadata.dirs</name>

<value>/data/disk03/metadata/ozone/om/metadata</value>

<tag>OZONE, OM, SCM, CONTAINER, STORAGE, REQUIRED</tag>

<description>

This setting is the fallback location for SCM, OM, Recon and DataNodes

to store their metadata. This setting may be used only in test/PoC

clusters to simplify configuration.

For production clusters or any time you care about performance, it is

recommended that ozone.om.db.dirs, ozone.scm.db.dirs and

dfs.container.ratis.datanode.storage.dir be configured separately.

</description>

</property>

<property>

<name>ozone.scm.client.address</name>

<value>sjsy-hh202-zbxh36w,sjsy-hh202-zbxh37w,sjsy-hh202-zbxh38w</value>

<tag>OZONE, SCM, REQUIRED</tag>

<description>

The address of the Ozone SCM client service. This is a required setting.

It is a string in the host:port format. The port number is optional

and defaults to 9860.

</description>

</property>

<property>

<name>ozone.scm.names</name>

<value>sjsy-hh202-zbxh36w,sjsy-hh202-zbxh37w,sjsy-hh202-zbxh38w</value>

<tag>OZONE, REQUIRED</tag>

<description>

The value of this property is a set of DNS | DNS:PORT | IP

Address | IP:PORT. Written as a comma separated string. e.g. scm1,

scm2:8020, 7.7.7.7:7777.

This property allows datanodes to discover where SCM is, so that

datanodes can send heartbeat to SCM.

</description>

</property>

<!-- This setting defaults to org.apache.hadoop.ozone.security.acl.OzoneAccessAuthorizer -->

<!-- The authorizer setting selects which authorizer class performs ACL access control -->

<property>

<name>ozone.acl.authorizer.class</name>

<value>org.apache.hadoop.ozone.security.acl.OzoneNativeAuthorizer</value>

<tag>OZONE, SECURITY, ACL</tag>

<description>Acl authorizer for Ozone.

</description>

</property>

<!-- Defaults to false; must be changed to true -->

<property>

<name>ozone.acl.enabled</name>

<value>true</value>

<tag>OZONE, SECURITY, ACL</tag>

<description>Key to enable/disable ozone acls.</description>

</property>

<property>

<name>ozone.security.enabled</name>

<value>false</value>

<tag>OZONE, SECURITY, KERBEROS</tag>

<description>True if security is enabled for ozone. When this property is

true, hadoop.security.authentication should be Kerberos.

</description>

</property>

<!-- Required when mounting Ozone in Alluxio; otherwise Alluxio cannot read the mounted files -->

<property>

<name>scm.container.client.max.size</name>

<value>256</value>

</property>

<property>

<name>scm.container.client.idle.threshold</name>

<value>10s</value>

</property>

<property>

<name>hdds.ratis.raft.client.rpc.request.timeout</name>

<value>60s</value>

</property>

<property>

<name>hdds.ratis.raft.client.async.outstanding-requests.max</name>

<value>32</value>

</property>

<property>

<name>hdds.ratis.raft.client.rpc.watch.request.timeout</name>

<value>180s</value>

</property>

</configuration>

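Copy or symlink the HDFS core-site.xml into the ozone-1.2.1/etc/hadoop directory.

5. Grant permissions on the directories named in the configuration

In this test Ozone is started as the hadoop user, so that user needs read, write and execute permission on these directories.

For example: sudo setfacl -R -m user:hadoop:rwx /data

Grant the permissions according to the directories in your own configuration.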
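To see which directories need the ACL, the directory-valued properties can be pulled out of ozone-site.xml. The sketch below is a naive line-based parse, assuming each name and value tag sits on its own line as in the file shown in step 4. list_dir_values is a hypothetical helper, not part of Ozone, and it treats every property whose name ends in .dir or .dirs as a directory list.

```shell
# Hypothetical helper: print each directory configured in a property
# whose <name> ends in ".dir" or ".dirs". Comma-separated values are
# split into one path per line. Not a real XML parser.
list_dir_values() {
  awk '
    /<name>/  { keep = ($0 ~ /\.dirs?<\/name>/) }
    /<value>/ && keep {
      gsub(/.*<value>|<\/value>.*/, "")      # strip the surrounding tags
      n = split($0, parts, ",")
      for (i = 1; i <= n; i++) print parts[i]
      keep = 0
    }
  ' "$1"
}

# Example (path from step 4):
#   list_dir_values "$OZONE_HOME/etc/hadoop/ozone-site.xml"
```

Review the printed list before applying setfacl to it, since the parse is only as reliable as the file layout it assumes.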

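6. Apply the same configuration on the other nodes

:::tips

Distribute the installation package:

scp -r /home/hadoop/core/ozone-1.2.1 hadoop@${ip}:/home/hadoop/core/

When the configuration changes, sync the file:

rsync -av /home/hadoop/core/ozone-1.2.1/etc/hadoop/ozone-site.xml hadoop@${ip}:/home/hadoop/core/ozone-1.2.1/etc/hadoop/ozone-site.xml

:::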
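With more than a handful of nodes it is easier to generate the sync commands in a loop. The sketch below only prints the commands (a dry run). The host names in OZONE_HOSTS are placeholders, print_sync_cmds is a hypothetical helper, and the etc/hadoop path follows the directory used by ozone genconf in step 4.

```shell
# Placeholder host list; replace with the real Ozone nodes.
OZONE_HOSTS="node1 node2 node3"
OZONE_DIR=/home/hadoop/core/ozone-1.2.1

# Hypothetical helper: print one rsync command per host for a file
# given relative to OZONE_DIR. Nothing is executed here.
print_sync_cmds() {
  rel_path=$1
  for host in $OZONE_HOSTS; do
    echo "rsync -av $OZONE_DIR/$rel_path hadoop@$host:$OZONE_DIR/$rel_path"
  done
}

print_sync_cmds etc/hadoop/ozone-site.xml
```

Once the printed commands look right, they can be piped to sh to run them.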

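7. Startup and basic commands

SCM initialization: ozone scm --init. This command makes SCM create the cluster ID and initialize its state. Like the NameNode format command, init only needs to be run once; it prepares on disk the data structures that SCM needs to run normally.

OM initialization: ozone om --init. The OM init command fails if SCM is not running, and likewise SCM cannot start if its on-disk metadata is missing, so make sure that both scm --init and om --init have completed successfully. OM initialization should therefore be done after SCM has been initialized and started.

Where a stop command is listed below, it is for reference only and is not part of the startup sequence.

On the first SCM node, run:

ozone scm --init

ozone --daemon start scm

On the remaining SCM nodes, run:

ozone scm --bootstrap

ozone --daemon start scm

ozone --daemon stop scm

On every OM node, run:

ozone om --init

ozone --daemon start om

ozone --daemon stop om

On the Recon node:

ozone --daemon start recon

ozone --daemon stop recon

On the datanodes:

ozone --daemon start datanode

ozone --daemon stop datanode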
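The ordering constraint (SCM before OM, init or bootstrap before the first start) can be written down as a small lookup. This is a sketch only: startup_cmds and the role labels scm-first and scm-other are hypothetical names, and the function prints the commands from this step rather than running them.

```shell
# Hypothetical helper: print the first-time startup commands for a role.
# Roles: scm-first, scm-other, om, recon, datanode.
startup_cmds() {
  case "$1" in
    scm-first) echo "ozone scm --init";      echo "ozone --daemon start scm" ;;
    scm-other) echo "ozone scm --bootstrap"; echo "ozone --daemon start scm" ;;
    om)        echo "ozone om --init";       echo "ozone --daemon start om" ;;
    recon|datanode) echo "ozone --daemon start $1" ;;
    *) echo "unknown role: $1" >&2; return 1 ;;
  esac
}

# Print the whole sequence in the order used in this step.
for role in scm-first scm-other om recon datanode; do
  echo "# $role"
  startup_cmds "$role"
done
```

The init and bootstrap lines apply to the first start only; later restarts need just the start command.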

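Create a volume and a bucket. Volume and bucket names must not contain uppercase letters or underscores, and must not consist only of digits.

Method 1:

ozone sh volume create /test-volume

ozone sh bucket create /test-volume/test-bucket

Method 2:

ozone fs -mkdir ofs://om/test-volume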
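The three naming rules can be checked before calling ozone sh. The sketch below encodes only the rules stated in this step; Ozone may enforce further constraints (for example on length or other characters) that are not covered here. is_valid_name is a hypothetical helper.

```shell
# Hypothetical helper: return 0 if the name has no uppercase letters,
# no underscores, and is not made up of digits only; return 1 otherwise.
is_valid_name() {
  case "$1" in
    ""|*[[:upper:]]*|*_*) return 1 ;;   # empty, uppercase, or underscore
  esac
  case "$1" in
    *[!0-9]*) return 0 ;;               # contains at least one non-digit
    *)        return 1 ;;               # digits only
  esac
}

for candidate in test-volume test_volume TestVolume 12345; do
  if is_valid_name "$candidate"; then
    echo "$candidate: ok"
  else
    echo "$candidate: rejected"
  fi
done
```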

#查看ozone角色节点

ozone admin om roles -id=om

ozone admin scm roles -id=scm

#列出所有的datanode

ozone admin datanode list

SCM和OM各自有独立的UI界面端口:

SCM:http://ip:9876/

OM:http:http://ip:9874/

Recon:http://ip:9888/

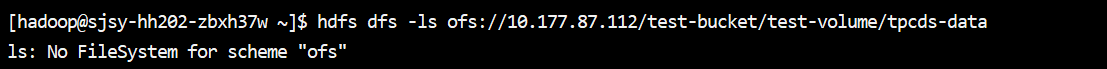

Set the following environment variable. Only then can hdfs commands operate on Ozone by addressing paths in the Ozone filesystem:

export HADOOP_CLASSPATH=/home/hadoop/core/ozone-1.2.1/share/ozone/lib/ozone-filesystem-hadoop3-*.jar:$HADOOP_CLASSPATH

hdfs dfs -ls ofs://xx.xx.xx.112/test-volume/test-bucket/tpcds-data

hdfs dfs -mkdir -p ofs://xx.xx.xx.112/test-volume/test-bucket/tpcds-data

hdfs dfs -ls o3fs://test-bucket.test-volume.xx.xx.xx.112/tpcds-data

If the ozone-filesystem-hadoop3-*.jar from step 3 is not on the classpath, these commands fail with an error.

Without that setting, the ozone fs command can be used directly:

ozone fs -ls o3fs://test-bucket.test-volume.om/tpcds-data

ozone fs -ls ofs://xx.xx.xx.112:9862/test-volume/test-bucket/tpcds-data

ozone fs -ls ofs://xx.xx.xx.112/test-volume/test-bucket/tpcds-data

ozone fs -ls ofs://om/test-volume/test-bucket/tpcds-data

Ozone supports two schemes, o3fs:// and ofs://. The main difference is that o3fs is confined to a single volume and bucket, whereas ofs can operate across all volumes and buckets and gives an overview of every volume and bucket. The URI formats are:

o3fs://bucket.volume.om/key

ofs://om/volume/bucket/key
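Because the two formats list volume and bucket in opposite orders, it is easy to swap them by hand. The two helpers below build both URIs from the same arguments. This is a sketch: ofs_uri and o3fs_uri are hypothetical names, and the argument order (OM address or service id, volume, bucket, key) is chosen here for illustration.

```shell
# Arguments for both helpers: <om> <volume> <bucket> <key>

# ofs://<om>/<volume>/<bucket>/<key>
ofs_uri() {
  echo "ofs://$1/$2/$3/$4"
}

# o3fs://<bucket>.<volume>.<om>/<key>
o3fs_uri() {
  echo "o3fs://$3.$2.$1/$4"
}

ofs_uri  om test-volume test-bucket tpcds-data
o3fs_uri om test-volume test-bucket tpcds-data
```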

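8. Troubleshooting

(1) ozone-env.sh sets ulimit -c unlimited so that the core file size is unlimited

The following error is printed during startup:

bash: ulimit: core file size: cannot modify limit: Operation not permitted

It does not prevent a normal startup.

Cause:

The user does not have permission to raise the limit.

Fix:

sudo vim /etc/security/limits.conf

Add the following lines:

hadoop hard core unlimited

hadoop soft core unlimited

(2) ozone fs -ls / fails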

[hadoop@sjsy-hh202-zbxh43w ~]$ ozone fs -ls /

2022-06-29 18:31:53,845 [main] WARN fs.FileSystem: Failed to initialize fileystem hdfs://Mycluster: java.lang.IllegalArgumentException: java.net.UnknownHostException: Mycluster

-ls: java.net.UnknownHostException: Mycluster

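Cause:

ozone fs -ls / reads the fs.defaultFS setting from core-site.xml. That setting belongs to the HDFS cluster, so it cannot be resolved here. Naming the filesystem explicitly works, for example ozone fs -ls ofs://xx.xx.xx.112/test-volume/test-bucket/tpcds-data.

Fix:

To operate on Ozone without naming the filesystem, as in ozone fs -ls /dir or hdfs dfs -ls /dir, change the relevant settings in core-site.xml:

o3fs:

ofs: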
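The settings for the two schemes are not reproduced in these notes. As a sketch based on the Ozone documentation rather than on the original configuration, the change amounts to pointing fs.defaultFS at an Ozone URI. default_fs_property is a hypothetical helper that prints the property; the URIs assume the OM service id om and the volume and bucket names from step 7.

```shell
# Hypothetical helper: print a fs.defaultFS property for core-site.xml.
# $1 is the default filesystem URI.
default_fs_property() {
  printf '<property>\n  <name>fs.defaultFS</name>\n  <value>%s</value>\n</property>\n' "$1"
}

# ofs: the root of the whole Ozone namespace (assumed service id "om").
default_fs_property "ofs://om"

# o3fs: a single bucket (assumed names test-bucket and test-volume).
default_fs_property "o3fs://test-bucket.test-volume.om"
```

Only one fs.defaultFS can be active in a given core-site.xml, and changing it also changes what hdfs dfs -ls / resolves to for every client that reads that file.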

(3) Errors when mounting Ozone in Alluxio

Command:

alluxio fs mount /alluxio_ozone_119_data/ o3fs://test-bucket.test-volume.om/test-data --option alluxio.underfs.hdfs.configuration=/home/hadoop/alluxio_ozone_119/ozone-site.xml:/home/hadoop/alluxio_ozone_119/core-site.xml

Error message 1:

2022-07-07 12:37:54,511 INFO RetryInvocationHandler - alluxio.shaded.hdfs.com.google.protobuf.ServiceException: java.net.UnknownHostException: Invalid host name: local host is: (unknown); destination host is: "om":9862; java.net.UnknownHostException; For more details see: http://wiki.apache.org/hadoop/UnknownHost, while invoking $Proxy103.submitRequest over nodeId=null,nodeAddress=om:9862 after 67 failover attempts. Trying to failover after sleeping for 136000ms.

2022-07-07 12:40:10,512 INFO RetryInvocationHandler - alluxio.shaded.hdfs.com.google.protobuf.ServiceException: java.net.UnknownHostException: Invalid host name: local host is: (unknown); destination host is: "om":9862; java.net.UnknownHostException; For more details see: http://wiki.apache.org/hadoop/UnknownHost, while invoking $Proxy103.submitRequest over nodeId=null,nodeAddress=om:9862 after 68 failover attempts. Trying to failover after sleeping for 138000ms.

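Analysis:

The mount command hangs. The master.log of Alluxio's active leader master shows that Alluxio cannot resolve om, because the core-site.xml named in the command is missing some properties.

Fix:

First, core-site.xml and ozone-site.xml must be present on every Ozone node. Second, add two properties to core-site.xml: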

<property>

<name>fs.AbstractFileSystem.o3fs.impl</name>

<value>org.apache.hadoop.fs.ozone.BasicOzFs</value>

</property>

<property>

<name>fs.o3fs.impl</name>

<value>org.apache.hadoop.fs.ozone.BasicOzoneFileSystem</value>

</property>

Error message 2:

2022-06-29 19:08:38,887 ERROR FileSystemMasterClientServiceHandler - Exit (Error): Mount: request=alluxioPath: "/alluxio_ozone_data"

ufsPath: "o3fs://test-volume.test-bucket.om/tpcds-data"

options {

readOnly: false

properties {

key: "alluxio.underfs.hdfs.configuration"

value: "/home/hadoop/alluxio_ozone/ozone-site.xml:/home/hadoop/alluxio_ozone/core-site.xml"

}

shared: false

commonOptions {

syncIntervalMs: -1

ttl: -1

ttlAction: DELETE

}

}

java.lang.IllegalArgumentException: Unable to create an UnderFileSystem instance for path: o3fs://test-volume.test-bucket.om/tpcds-data

at alluxio.underfs.UnderFileSystem$Factory.create(UnderFileSystem.java:121)

at alluxio.underfs.AbstractUfsManager.getOrAdd(AbstractUfsManager.java:132)

at alluxio.underfs.AbstractUfsManager.lambda$addMount$0(AbstractUfsManager.java:171)

at alluxio.underfs.UfsManager$UfsClient.acquireUfsResource(UfsManager.java:61)

at alluxio.master.file.DefaultFileSystemMaster.prepareForMount(DefaultFileSystemMaster.java:2914)

at alluxio.master.file.DefaultFileSystemMaster.mountInternal(DefaultFileSystemMaster.java:3079)

at alluxio.master.file.DefaultFileSystemMaster.mountInternal(DefaultFileSystemMaster.java:3040)

at alluxio.master.file.DefaultFileSystemMaster.mount(DefaultFileSystemMaster.java:3015)

at alluxio.master.file.FileSystemMasterClientServiceHandler.lambda$mount$11(FileSystemMasterClientServiceHandler.java:273)

at alluxio.RpcUtils.callAndReturn(RpcUtils.java:122)

at alluxio.RpcUtils.call(RpcUtils.java:83)

at alluxio.RpcUtils.call(RpcUtils.java:58)

at alluxio.master.file.FileSystemMasterClientServiceHandler.mount(FileSystemMasterClientServiceHandler.java:272)

at alluxio.grpc.FileSystemMasterClientServiceGrpc$MethodHandlers.invoke(FileSystemMasterClientServiceGrpc.java:2408)

at io.grpc.stub.ServerCalls$UnaryServerCallHandler$UnaryServerCallListener.onHalfClose(ServerCalls.java:182)

at io.grpc.PartialForwardingServerCallListener.onHalfClose(PartialForwardingServerCallListener.java:35)

at io.grpc.ForwardingServerCallListener.onHalfClose(ForwardingServerCallListener.java:23)

at io.grpc.ForwardingServerCallListener$SimpleForwardingServerCallListener.onHalfClose(ForwardingServerCallListener.java:40)

at alluxio.security.authentication.ClientIpAddressInjector$1.onHalfClose(ClientIpAddressInjector.java:57)

at io.grpc.ForwardingServerCallListener$SimpleForwardingServerCallListener.onHalfClose(ForwardingServerCallListener.java:40)

at alluxio.security.authentication.AuthenticatedUserInjector$1.onHalfClose(AuthenticatedUserInjector.java:67)

at io.grpc.internal.ServerCallImpl$ServerStreamListenerImpl.halfClosed(ServerCallImpl.java:331)

at io.grpc.internal.ServerImpl$JumpToApplicationThreadServerStreamListener$1HalfClosed.runInContext(ServerImpl.java:797)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

Suppressed: java.lang.ExceptionInInitializerError

at java.lang.Class.forName0(Native Method)

at java.lang.Class.forName(Class.java:264)

at alluxio.underfs.hdfs.HdfsUnderFileSystem.<init>(HdfsUnderFileSystem.java:176)

at alluxio.underfs.hdfs.HdfsUnderFileSystem.createInstance(HdfsUnderFileSystem.java:118)

at alluxio.underfs.hdfs.HdfsUnderFileSystemFactory.create(HdfsUnderFileSystemFactory.java:43)

at alluxio.underfs.UnderFileSystem$Factory.create(UnderFileSystem.java:106)

... 29 more

at javax.ws.rs.ext.RuntimeDelegate.findDelegate(RuntimeDelegate.java:122)

at javax.ws.rs.ext.RuntimeDelegate.getInstance(RuntimeDelegate.java:91)

at javax.ws.rs.core.MediaType.<clinit>(MediaType.java:44)

... 35 more

at javax.ws.rs.ext.FactoryFinder.newInstance(FactoryFinder.java:69)

... 37 more

Caused by: java.lang.ClassCastException: Cannot cast com.sun.jersey.core.impl.provider.header.LocaleProvider to com.sun.jersey.spi.HeaderDelegateProvider

at java.lang.Class.cast(Class.java:3369)

at com.sun.jersey.spi.service.ServiceFinder$LazyObjectIterator.hasNext(ServiceFinder.java:833)

at com.sun.jersey.core.spi.factory.AbstractRuntimeDelegate.<init>(AbstractRuntimeDelegate.java:76)

at com.sun.ws.rs.ext.RuntimeDelegateImpl.<init>(RuntimeDelegateImpl.java:56)

at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.lang.reflect.Constructor.newInstance(Constructor.java:423)

at java.lang.Class.newInstance(Class.java:442)

at javax.ws.rs.ext.FactoryFinder.newInstance(FactoryFinder.java:65)

... 39 more

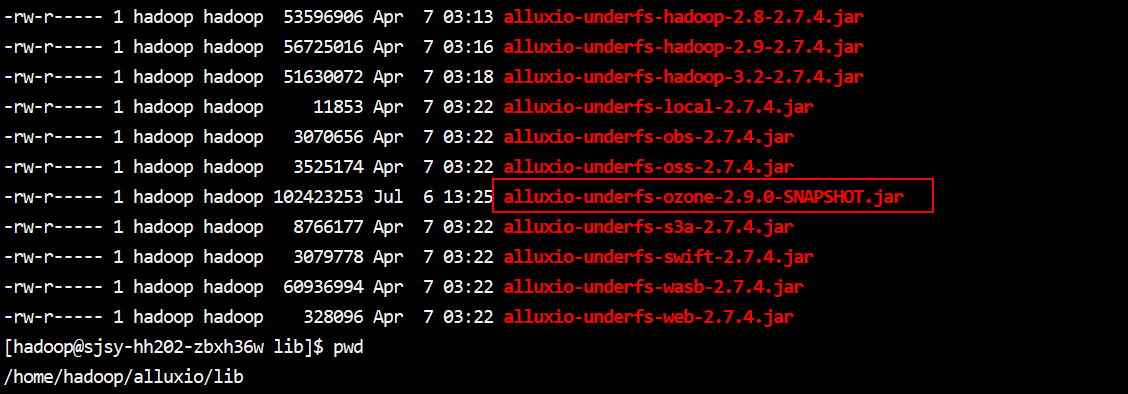

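After investigation, the likely culprit was the jar that adapts Alluxio to Ozone. The lib directory of the Alluxio installation contains alluxio-underfs-ozone-xx.jar, and the jar shipped with Alluxio 2.7.4 is faulty. After replacing it with the jar supplied by the vendor, the mount succeeded.

(4) Importing data from HDFS into Ozone

Command:

hdfs dfs -cp hdfs://mycluster/warehouse/tablespace/managed/hive/tpcds_bin_partitioned_orc_10.db ofs://xx.xx.xx.112/test-volume/test-bucket/tpcds-data

Message printed during execution:

cp: Allocated 0 blocks. Requested 1 blocks

Analysis:

No blocks could be allocated. Running ozone fs -ls ofs://xx.xx.xx.112/test-volume/test-bucket/tpcds-data showed that the directories had been created but the data files had not been imported.

Checking the Ozone processes showed that some SCM and OM instances had not started and could not be started. The SCM VERSION file contained:

nodeType=SCM

scmHA=true

clusterID=CID-ca3b26f3-2c36-47f3-bc52-69abe8234426

scmUuid=0bfa3ee7-a495-4f37-80c8-5e7bb3807d58

cTime=1656411754471

primaryScmNodeId=0bfa3ee7-a495-4f37-80c8-5e7bb3807d58

layoutVersion=2

The clusterID and primaryScmNodeId must be identical on all SCM nodes. On the SCM nodes that failed to start, these two values in VERSION differed from those on the other SCM nodes. After replacing them with the values from a healthy node, the nodes started successfully.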
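The comparison of clusterID and primaryScmNodeId across nodes can be scripted once the VERSION files have been copied to one machine. The sketch below assumes each file holds one key=value pair per line; version_value and same_value are hypothetical helpers, and the file names in the usage comment are placeholders.

```shell
# Hypothetical helper: print the value of a key from a VERSION-style
# file with one key=value pair per line. $1 = key, $2 = file.
version_value() {
  sed -n "s/^$1=//p" "$2"
}

# Hypothetical helper: return 0 if the key has the same value in every
# listed file, otherwise name the first file that differs and return 1.
# Usage: same_value <key> <file>...
same_value() {
  key=$1
  shift
  ref=$(version_value "$key" "$1")
  for f in "$@"; do
    if [ "$(version_value "$key" "$f")" != "$ref" ]; then
      echo "$key differs in $f" >&2
      return 1
    fi
  done
}

# Example with placeholder file names, one copy per SCM node:
#   same_value clusterID VERSION.scm1 VERSION.scm2 VERSION.scm3
#   same_value primaryScmNodeId VERSION.scm1 VERSION.scm2 VERSION.scm3
```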