Containers fall into two main families: Collection and Map. A Collection stores a group of objects, while a Map stores a table of key-value mappings (pairs of objects).

Collection

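The Collection interface is the parent of the List, Set, and Queue interfaces. The methods it defines can be used on Set collections as well as on List and Queue collections.

The JDK provides no direct implementation of this interface; instead it provides implementations of more specific subinterfaces such as Set and List.

Before Java 5, Java collections lost the data type of every object placed in a container and treated all of them as Object. Since generics were added in JDK 5.0, a collection can declare the type of the objects it holds.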

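Iterator

An Iterator object is called an iterator (an instance of a design pattern) and is mainly used to traverse the elements of a Collection.

The iterator pattern is defined as: provide a way to access the elements of a container object one by one without exposing the object's internal details. The pattern exists for the sake of containers.

The Collection interface extends java.lang.Iterable, which declares an iterator() method. Every collection class that implements Collection therefore has an iterator() method that returns an object implementing the Iterator interface.

An Iterator is only used to traverse a collection; it cannot hold objects itself. To create an Iterator there must be a collection to iterate over.

Each call to iterator() on a collection returns a brand-new iterator whose cursor starts before the first element.

Call iter.hasNext() before calling iter.next(). If the check is skipped and no element remains, iter.next() throws NoSuchElementException.

An Iterator can delete elements from the collection, but during traversal this must be done through the iterator's remove method, not the collection's remove method.

Calling remove before next() has been called, or calling it a second time after the last call to next, throws IllegalStateException.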
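The rules above can be exercised with a short sketch. The class and method names (IteratorDemo, removeEvens, and so on) are made up for illustration; only the java.util calls are real API.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Iterator;
import java.util.List;
import java.util.NoSuchElementException;

class IteratorDemo {
    // Deletes every even number through the iterator itself, which is the
    // supported way to remove elements while traversing.
    static List<Integer> removeEvens(List<Integer> source) {
        List<Integer> copy = new ArrayList<>(source);
        Iterator<Integer> it = copy.iterator();
        while (it.hasNext()) {          // check before every next()
            if (it.next() % 2 == 0) {
                it.remove();            // removes the element just returned
            }
        }
        return copy;
    }

    // Returns true if remove() before any next() raises IllegalStateException.
    static boolean removeBeforeNextFails() {
        Iterator<Integer> it = new ArrayList<>(Arrays.asList(1, 2)).iterator();
        try {
            it.remove();
            return false;
        } catch (IllegalStateException expected) {
            return true;
        }
    }

    // Returns true if next() on an exhausted iterator raises NoSuchElementException.
    static boolean nextPastEndFails() {
        Iterator<Integer> it = new ArrayList<Integer>().iterator();
        try {
            it.next();
            return false;
        } catch (NoSuchElementException expected) {
            return true;
        }
    }

    public static void main(String[] args) {
        System.out.println(removeEvens(Arrays.asList(1, 2, 3, 4, 5))); // [1, 3, 5]
        System.out.println(removeBeforeNextFails());                   // true
        System.out.println(nextPastEndFails());                        // true
    }
}
```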

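Traversing collection elements with a foreach loop

Java 5.0 introduced the foreach loop for iterating over a Collection or an array.

The traversal needs neither the length of the Collection or array nor index-based access to the elements.

For a collection, the loop is carried out underneath by an Iterator.

foreach also works on arrays; there the compiler generates an ordinary index-based loop rather than using an Iterator.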
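As a small illustration, the sketch below sums the same values three ways: with foreach over a List, with the explicit Iterator loop that foreach roughly corresponds to, and with foreach over an array. ForEachDemo and its method names are hypothetical.

```java
import java.util.Arrays;
import java.util.Iterator;
import java.util.List;

class ForEachDemo {
    // foreach over a Collection: no index and no explicit length.
    static int sumWithForEach(List<Integer> values) {
        int sum = 0;
        for (int v : values) {
            sum += v;
        }
        return sum;
    }

    // Roughly the loop the compiler produces for the method above.
    static int sumWithIterator(List<Integer> values) {
        int sum = 0;
        for (Iterator<Integer> it = values.iterator(); it.hasNext(); ) {
            int v = it.next();
            sum += v;
        }
        return sum;
    }

    // foreach over an array uses the same syntax.
    static int sumArray(int[] values) {
        int sum = 0;
        for (int v : values) {
            sum += v;
        }
        return sum;
    }

    public static void main(String[] args) {
        List<Integer> list = Arrays.asList(1, 2, 3);
        System.out.println(sumWithForEach(list));          // 6
        System.out.println(sumWithIterator(list));         // 6
        System.out.println(sumArray(new int[] {1, 2, 3})); // 6
    }
}
```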

List

ArrayList

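ArrayList is implemented on top of a dynamic array and supports fast random access. The RandomAccess marker interface indicates that the class supports fast random access.

The default capacity of the array is 10.

How does the ArrayList implementation differ before and after JDK 1.8?

JDK 1.7: ArrayList is eager; it directly creates an array with an initial capacity of 10.

JDK 1.8: ArrayList is lazy; it starts with an array of length 0 and creates an array with an initial capacity of 10 only when the first element is added.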

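Growth

When an element is added, ensureCapacityInternal() makes sure the capacity is sufficient. If it is not, grow() enlarges the array to oldCapacity + (oldCapacity >> 1), that is, oldCapacity + oldCapacity / 2. Because oldCapacity >> 1 rounds down, the new capacity is about 1.5 times the old one: exactly 1.5 times when oldCapacity is even, and 1.5 times minus 0.5 when it is odd.

Growth calls Arrays.copyOf() to copy the whole original array into the new one, which is expensive. It is therefore best to specify an approximate capacity when creating the ArrayList, to reduce the number of growth operations.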
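To make the arithmetic concrete, here is a minimal sketch of the growth formula only. It is not the JDK's grow() method: it leaves out the minimum-capacity and overflow handling, and GrowthDemo, nextCapacity, and growthSequence are names chosen for this example.

```java
import java.util.ArrayList;
import java.util.List;

class GrowthDemo {
    // The formula quoted above: old + old / 2, with the shift rounding down.
    static int nextCapacity(int oldCapacity) {
        return oldCapacity + (oldCapacity >> 1);
    }

    // Capacities reached by growing `steps` times, starting from `initial`.
    static List<Integer> growthSequence(int initial, int steps) {
        List<Integer> result = new ArrayList<>(steps + 1); // presized, so it never grows
        int capacity = initial;
        result.add(capacity);
        for (int i = 0; i < steps; i++) {
            capacity = nextCapacity(capacity);
            result.add(capacity);
        }
        return result;
    }

    public static void main(String[] args) {
        System.out.println(growthSequence(10, 4)); // [10, 15, 22, 33, 49]
    }
}
```

Starting from 10, the even case gives exactly 1.5 times (10 to 15) and the odd case gives 1.5 times minus 0.5 (15 to 22).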

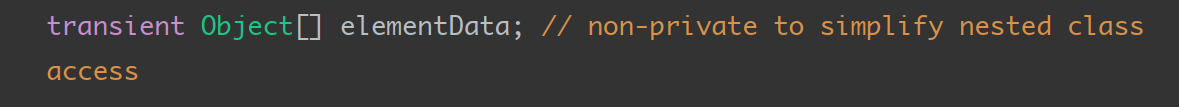

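Serialization

ArrayList is array-based and grows dynamically, so not every slot of the backing array is necessarily in use, and there is no need to serialize all of it.

The backing array elementData is marked transient, which declares that the array is skipped by default serialization.

ArrayList implements writeObject() and readObject() so that only the part of the array that actually holds elements is serialized.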
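The same technique can be shown on a toy class. CompactBuffer below is a hypothetical container written for this example, not the ArrayList source: it keeps its backing array transient and writes only the occupied slots in writeObject(), then rebuilds the array in readObject().

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.io.Serializable;
import java.util.Arrays;

class CompactBuffer implements Serializable {
    private static final long serialVersionUID = 1L;

    // transient: default serialization skips the (partly empty) array.
    private transient Object[] elements = new Object[10];
    private int size;

    void add(Object value) {
        if (size == elements.length) {
            // Grow by about 1.5x, but always by at least one slot.
            int newLength = Math.max(size + (size >> 1), size + 1);
            elements = Arrays.copyOf(elements, newLength);
        }
        elements[size++] = value;
    }

    Object get(int index) {
        if (index < 0 || index >= size) {
            throw new IndexOutOfBoundsException("index " + index + ", size " + size);
        }
        return elements[index];
    }

    int size() { return size; }

    int capacity() { return elements.length; }

    private void writeObject(ObjectOutputStream out) throws IOException {
        out.defaultWriteObject();              // writes the non-transient field: size
        for (int i = 0; i < size; i++) {
            out.writeObject(elements[i]);      // only the slots that hold elements
        }
    }

    private void readObject(ObjectInputStream in) throws IOException, ClassNotFoundException {
        in.defaultReadObject();                // restores size
        elements = new Object[Math.max(size, 1)];
        for (int i = 0; i < size; i++) {
            elements[i] = in.readObject();
        }
    }

    // Serializes to a byte array and back; checked exceptions are wrapped for brevity.
    static CompactBuffer roundTrip(CompactBuffer original) {
        try {
            ByteArrayOutputStream bytes = new ByteArrayOutputStream();
            try (ObjectOutputStream out = new ObjectOutputStream(bytes)) {
                out.writeObject(original);
            }
            try (ObjectInputStream in =
                     new ObjectInputStream(new ByteArrayInputStream(bytes.toByteArray()))) {
                return (CompactBuffer) in.readObject();
            }
        } catch (IOException | ClassNotFoundException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        CompactBuffer buffer = new CompactBuffer();
        buffer.add("a");
        buffer.add("b");
        CompactBuffer copy = roundTrip(buffer);
        System.out.println(buffer.capacity() + " -> " + copy.capacity()); // 10 -> 2
    }
}
```

The restored copy has a backing array sized to the element count, which shows that the unused slots never reached the stream.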

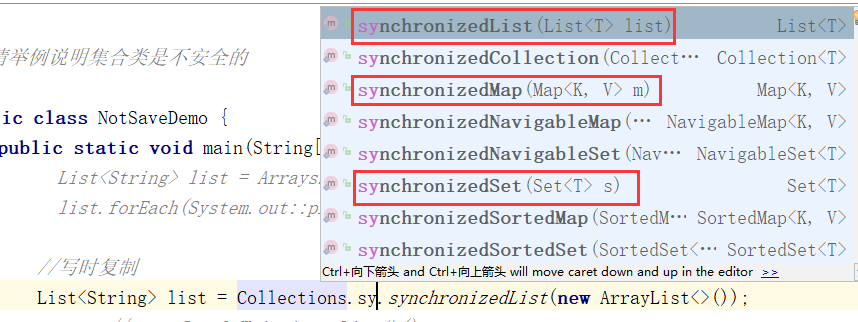

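ArrayList is not thread-safe. Collections provides synchronizedList to wrap a list in a synchronized, thread-safe view; HashMap and HashSet have corresponding wrappers (Collections.synchronizedMap and Collections.synchronizedSet).

Alternatively, use CopyOnWriteArrayList. A CopyOnWrite container copies on write: when an element is added, it is not added directly to the current Object[]. Instead, the current Object[] is copied into a new array Object[] newElements, the element is added to newElements, and the container's reference is then switched to the new array with setArray(newElements).

The benefit is that a CopyOnWrite container can be read concurrently without locking, because the array being read is never modified.

CopyOnWrite containers are therefore an instance of read-write separation: reads and writes operate on different arrays.

CopyOnWriteArrayList suits read-heavy, write-light workloads.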
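One visible consequence of copy-on-write is that an iterator works on the array snapshot that existed when it was created. The single-threaded sketch below (CopyOnWriteDemo and visitWhileAdding are illustrative names) adds to a list while looping over it: ArrayList fails fast, while CopyOnWriteArrayList finishes the loop over the old snapshot.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.ConcurrentModificationException;
import java.util.List;
import java.util.concurrent.CopyOnWriteArrayList;

class CopyOnWriteDemo {
    // Appends to `list` while iterating over it. Returns how many elements the
    // loop visited, or -1 if the iterator threw ConcurrentModificationException.
    static int visitWhileAdding(List<String> list) {
        int visited = 0;
        try {
            for (String s : list) {
                visited++;
                list.add(s + "!");
            }
        } catch (ConcurrentModificationException e) {
            return -1;
        }
        return visited;
    }

    public static void main(String[] args) {
        List<String> plain = new ArrayList<>(Arrays.asList("a", "b"));
        List<String> cow = new CopyOnWriteArrayList<>(Arrays.asList("a", "b"));
        System.out.println(visitWhileAdding(plain)); // -1
        System.out.println(visitWhileAdding(cow));   // 2
        System.out.println(cow);                     // [a, b, a!, b!]
    }
}
```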

Vector

Vector is similar to ArrayList but thread-safe. Its performance is poorer, and it is not recommended.

LinkedList

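LinkedList is implemented as a doubly linked list and uses Node objects to store the link information. It supports only sequential access, but once the position has been reached, elements can be inserted and removed quickly in the middle of the list. In addition, LinkedList can serve as a stack, a queue, or a double-ended queue (deque).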
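The three roles mentioned above map onto the Deque and Queue interfaces that LinkedList implements. A brief sketch, with LinkedListRolesDemo as a made-up class name:

```java
import java.util.Deque;
import java.util.LinkedList;
import java.util.Queue;

class LinkedListRolesDemo {
    // Stack: last in, first out.
    static String asStack() {
        Deque<String> stack = new LinkedList<>();
        stack.push("a");
        stack.push("b");
        stack.push("c");
        return stack.pop() + stack.pop() + stack.pop();
    }

    // Queue: first in, first out.
    static String asQueue() {
        Queue<String> queue = new LinkedList<>();
        queue.offer("a");
        queue.offer("b");
        queue.offer("c");
        return queue.poll() + queue.poll() + queue.poll();
    }

    // Deque: insert and remove at both ends.
    static String asDeque() {
        Deque<String> deque = new LinkedList<>();
        deque.offerFirst("b");
        deque.offerLast("c");
        deque.offerFirst("a");   // contents are now a, b, c
        return deque.pollFirst() + deque.pollLast() + deque.pollFirst();
    }

    public static void main(String[] args) {
        System.out.println(asStack()); // cba
        System.out.println(asQueue()); // abc
        System.out.println(asDeque()); // acb
    }
}
```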

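Comparing the three

ArrayList and Vector share the same structure, a dynamic array. The difference is that ArrayList is not thread-safe while Vector is; ArrayList performs better than Vector and is the most widely used collection.

ArrayList and LinkedList differ in their data structures: ArrayList is a dynamic array, LinkedList a doubly linked list. ArrayList is the usual choice when lookups are frequent and insertions or removals at specific positions are rare; LinkedList is the usual choice when insertions and removals at specific positions are frequent and lookups are rare. Most applications care more about lookup efficiency, so ArrayList is used more widely.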

Set

HashSet

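HashSet is the typical implementation of the Set interface and the class used most of the time when a Set is needed. HashSet stores its elements using a hash algorithm, so insertion, lookup, and removal all perform well. HashSet is backed by a HashMap, whose underlying storage is an array with an initial capacity of 16. Once the number of elements exceeds the capacity times the load factor of 0.75 (16 × 0.75 = 12), the capacity doubles (16 grows to 32, then 64, 128, and so on).

HashSet has the following characteristics:

It does not guarantee the order of its elements.

It is not thread-safe.

An element may be null.

HashSet considers two elements equal when their hashCode() values are equal and equals() returns true for them.

Classes whose objects are stored in a Set must override both equals(Object obj) and hashCode() to implement their equality rule, namely that "equal objects must have equal hash codes".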

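How an element is added to a HashSet:

When an element is stored in a HashSet, the HashSet calls the object's hashCode() method to obtain its hash code, then applies a hash function to that value to decide where the object goes in the underlying array. (The function combines the hash code with the array length to produce an index and tries to distribute elements evenly; the more evenly elements are spread, the better the function.)

If the slot already holds an element with an equal hashCode() value, equals is called next. If equals returns true, the add fails. If it returns false, the element is stored, and because the slot is already occupied it is chained to the existing element in a linked list.

If equals() returns true for two elements but their hashCode() values differ, HashSet treats them as different elements: they are stored at different positions and both adds succeed.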
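The last case is easy to reproduce. In the sketch below, Point follows the contract, while BrokenPoint deliberately returns a hash code unrelated to the fields that equals() compares. Both classes and the serial field are invented for this example.

```java
import java.util.HashSet;
import java.util.Objects;
import java.util.Set;

class HashContractDemo {
    // Consistent: equal points always share a hash code.
    static final class Point {
        final int x, y;
        Point(int x, int y) { this.x = x; this.y = y; }

        @Override public boolean equals(Object o) {
            if (this == o) return true;
            if (!(o instanceof Point)) return false;
            Point p = (Point) o;
            return x == p.x && y == p.y;
        }

        @Override public int hashCode() { return Objects.hash(x, y); }
    }

    // Broken on purpose: equals() compares coordinates, but hashCode()
    // returns a serial number that equals() ignores.
    static final class BrokenPoint {
        final int x, y, serial;
        BrokenPoint(int x, int y, int serial) { this.x = x; this.y = y; this.serial = serial; }

        @Override public boolean equals(Object o) {
            if (this == o) return true;
            if (!(o instanceof BrokenPoint)) return false;
            BrokenPoint p = (BrokenPoint) o;
            return x == p.x && y == p.y;
        }

        @Override public int hashCode() { return serial; }
    }

    // Two equal Points collapse into one element.
    static int consistentCount() {
        Set<Point> set = new HashSet<>();
        set.add(new Point(1, 2));
        set.add(new Point(1, 2));
        return set.size();
    }

    // Two "equal" BrokenPoints are both stored because their hash codes differ.
    static int brokenCount() {
        Set<BrokenPoint> set = new HashSet<>();
        set.add(new BrokenPoint(1, 2, 1));
        set.add(new BrokenPoint(1, 2, 2));
        return set.size();
    }

    public static void main(String[] args) {
        System.out.println(consistentCount()); // 1
        System.out.println(brokenCount());     // 2
    }
}
```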

LinkedHashSet

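LinkedHashSet is a subclass of HashSet.

LinkedHashSet uses an element's hashCode value to decide where it is stored, but it also maintains a doubly linked list that records the order of the elements, so iteration returns them in insertion order.

LinkedHashSet inserts slightly more slowly than HashSet, but performs well when iterating over all elements of the Set.

LinkedHashSet does not allow duplicate elements.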
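A short sketch of both properties, insertion order and no duplicates. LinkedHashSetDemo and insertionOrder are hypothetical names.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Set;

class LinkedHashSetDemo {
    // Returns the distinct values in the order they were first inserted.
    static List<String> insertionOrder(String... values) {
        Set<String> set = new LinkedHashSet<>(Arrays.asList(values));
        return new ArrayList<>(set);
    }

    public static void main(String[] args) {
        // The second "pear" is rejected and does not change the order.
        System.out.println(insertionOrder("pear", "apple", "pear", "fig")); // [pear, apple, fig]
    }
}
```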

TreeSet

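TreeSet is an implementation of the SortedSet interface and keeps its elements in sorted order.

TreeSet stores its data in a red-black tree.

TreeSet supports two kinds of ordering: natural ordering and custom ordering (with a Comparator). By default it uses natural ordering.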
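The two orderings can be compared side by side. In this sketch (TreeSetDemo is an illustrative name) the custom ordering compares strings by length only, so two strings of the same length count as the same element and the second one is rejected.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;
import java.util.TreeSet;

class TreeSetDemo {
    // Natural ordering: String implements Comparable, so no Comparator is needed.
    static List<String> naturalOrder() {
        TreeSet<String> set = new TreeSet<>(Arrays.asList("pear", "fig", "apple"));
        return new ArrayList<>(set);
    }

    // Custom ordering: the Comparator passed to the constructor decides both
    // the order and which elements are considered duplicates.
    static List<String> byLength() {
        TreeSet<String> set = new TreeSet<>(Comparator.comparingInt(String::length));
        set.add("pear");
        set.add("fig");
        set.add("apple");
        set.add("kiwi");   // same length as "pear", so it is not added
        return new ArrayList<>(set);
    }

    public static void main(String[] args) {
        System.out.println(naturalOrder()); // [apple, fig, pear]
        System.out.println(byLength());     // [fig, pear, apple]
    }
}
```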

Queue

LinkedList: can be used as a double-ended queue.

PriorityQueue: implemented on a heap; can be used as a priority queue.
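As a quick illustration of the priority-queue behaviour, the sketch below drains a PriorityQueue built from unsorted input; with natural ordering, poll() always returns the smallest remaining element. PriorityQueueDemo and drain are names chosen for this example.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collection;
import java.util.List;
import java.util.PriorityQueue;

class PriorityQueueDemo {
    // Polls every element; the result comes out in ascending order.
    static List<Integer> drain(Collection<Integer> values) {
        PriorityQueue<Integer> heap = new PriorityQueue<>(values);
        List<Integer> out = new ArrayList<>(values.size());
        while (!heap.isEmpty()) {
            out.add(heap.poll());
        }
        return out;
    }

    public static void main(String[] args) {
        System.out.println(drain(Arrays.asList(5, 1, 4, 2))); // [1, 2, 4, 5]
    }
}
```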

Map

HashMap
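Before reading the source listing in this section, it may help to try three of its small helpers in isolation. The sketch below copies the arithmetic of hash() and tableSizeFor() from that listing into a standalone class; HashSpreadDemo, spread, indexFor, and thresholdFor are names invented here, and the class is a study aid rather than part of the JDK.

```java
class HashSpreadDemo {
    static final int MAXIMUM_CAPACITY = 1 << 30;

    // Same arithmetic as HashMap.hash(): XOR the high 16 bits into the low 16
    // so that small tables still see the high bits of the hash code.
    static int spread(Object key) {
        int h;
        return (key == null) ? 0 : (h = key.hashCode()) ^ (h >>> 16);
    }

    // Same arithmetic as tableSizeFor() in the listing: the smallest power of
    // two that is greater than or equal to cap.
    static int tableSizeFor(int cap) {
        int n = cap - 1;
        n |= n >>> 1;
        n |= n >>> 2;
        n |= n >>> 4;
        n |= n >>> 8;
        n |= n >>> 16;
        return (n < 0) ? 1 : (n >= MAXIMUM_CAPACITY) ? MAXIMUM_CAPACITY : n + 1;
    }

    // Bucket index: because the length is a power of two, (length - 1) & hash
    // keeps the low bits of the hash.
    static int indexFor(int hash, int tableLength) {
        return (tableLength - 1) & hash;
    }

    // Resize threshold: capacity times load factor.
    static int thresholdFor(int capacity, float loadFactor) {
        return (int) (capacity * loadFactor);
    }

    public static void main(String[] args) {
        System.out.println(tableSizeFor(10));         // 16
        System.out.println(tableSizeFor(17));         // 32
        System.out.println(thresholdFor(16, 0.75f));  // 12
        // Integer 65536 has hash code 0x10000: its low four bits are all zero,
        // yet after spreading it lands in bucket 1 of a 16-slot table.
        System.out.println(indexFor(spread(65536), 16)); // 1
    }
}
```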

public class HashMap<K,V> extends AbstractMap<K,V> implements Map<K,V>, Cloneable, Serializable {

    private static final long serialVersionUID = 362498820763181265L;

    /** Default size of the hash bucket array: 16. */
    static final int DEFAULT_INITIAL_CAPACITY = 1 << 4; // aka 16

    /** Maximum size of the hash bucket array. */
    static final int MAXIMUM_CAPACITY = 1 << 30;

    /** Load factor. */
    static final float DEFAULT_LOAD_FACTOR = 0.75f;

    /** A linked list longer than 8 nodes is converted to a red-black tree. */
    static final int TREEIFY_THRESHOLD = 8;

    /** A red-black tree that shrinks to 6 or fewer nodes during a resize is converted back to a linked list. */
    static final int UNTREEIFY_THRESHOLD = 6;

    /**
     * A list is converted to a red-black tree only if the bucket array has at
     * least 64 slots. If it is smaller, the table is resized instead; once the
     * array is large enough, a list longer than 8 nodes is converted.
     */
    static final int MIN_TREEIFY_CAPACITY = 64;

    /**
     * Node is an inner class of HashMap that implements Map.Entry; it is
     * essentially one mapping (key-value pair). The hash array stores Node objects.
     */
    static class Node<K,V> implements Map.Entry<K,V> {
        final int hash;
        final K key;
        V value;
        Node<K,V> next;

        Node(int hash, K key, V value, Node<K,V> next) {...}
        public final K getKey() { ... }
        public final V getValue() { ... }
        public final String toString() { ... }
        public final int hashCode() {... }
        public final V setValue(V newValue) { ... }
        public final boolean equals(Object o) { ... }
    }

    /** Computes the hash value. */
    static final int hash(Object key) {
        int h;
        return (key == null) ? 0 : (h = key.hashCode()) ^ (h >>> 16);
    }

    /** Rounds the given value up to a power of two. */
    static final int tableSizeFor(int cap) {
        int n = cap - 1;
        n |= n >>> 1;
        n |= n >>> 2;
        n |= n >>> 4;
        n |= n >>> 8;
        n |= n >>> 16;
        return (n < 0) ? 1 : (n >= MAXIMUM_CAPACITY) ? MAXIMUM_CAPACITY : n + 1;
    }

    /** The array that stores the elements; its length is always a power of two. */
    transient Node<K,V>[] table;

    /** The set view of the stored entries. */
    transient Set<Map.Entry<K,V>> entrySet;

    /** Number of key-value pairs stored in the HashMap. */
    transient int size;

    /** Number of resizes and structural changes; mainly used for fail-fast iteration. */
    transient int modCount;

    /** Resize threshold, = capacity * load factor. */
    int threshold;

    /** Load factor. */
    final float loadFactor;

    /** Returns the value for the given key. */
    public V get(Object key) {
        Node<K,V> e;
        return (e = getNode(hash(key), key)) == null ?
            null : e.value;
    }

    final Node<K,V> getNode(int hash, Object key) {
        Node<K,V>[] tab; Node<K,V> first, e; int n; K k;
        if ((tab = table) != null && (n = tab.length) > 0 &&
            (first = tab[(n - 1) & hash]) != null) {
            if (first.hash == hash && // always check first node
                ((k = first.key) == key || (key != null && key.equals(k))))
                return first;
            if ((e = first.next) != null) {
                if (first instanceof TreeNode)
                    return ((TreeNode<K,V>)first).getTreeNode(hash, key);
                do {
                    if (e.hash == hash &&
                        ((k = e.key) == key || (key != null && key.equals(k))))
                        return e;
                } while ((e = e.next) != null);
            }
        }
        return null;
    }

    /** Adds a mapping. */
    public V put(K key, V value) {
        return putVal(hash(key), key, value, false, true);
    }

    final V putVal(int hash, K key, V value, boolean onlyIfAbsent,
                   boolean evict) {
        Node<K,V>[] tab; Node<K,V> p; int n, i;
        // Step 1: create the table if it is empty
        if ((tab = table) == null || (n = tab.length) == 0)
            n = (tab = resize()).length;
        // Step 2: compute the index; if the slot is null, insert directly
        if ((p = tab[i = (n - 1) & hash]) == null)
            tab[i] = newNode(hash, key, value, null);
        else {
            Node<K,V> e; K k;
            // Step 3: the key already exists at the head of the bin; overwrite its value
            if (p.hash == hash &&
                ((k = p.key) == key || (key != null && key.equals(k))))
                e = p;
            // Step 4: the bin is a red-black tree
            else if (p instanceof TreeNode)
                e = ((TreeNode<K,V>)p).putTreeVal(this, tab, hash, key, value);
            // Step 5: the bin is a linked list
            else {
                for (int binCount = 0; ; ++binCount) {
                    if ((e = p.next) == null) {
                        p.next = newNode(hash, key, value, null);
                        // list longer than 8: convert it to a red-black tree
                        if (binCount >= TREEIFY_THRESHOLD - 1) // -1 for 1st
                            treeifyBin(tab, hash);
                        break;
                    }
                    // the key already exists: overwrite its value
                    if (e.hash == hash &&
                        ((k = e.key) == key || (key != null && key.equals(k))))
                        break;
                    p = e;
                }
            }
            if (e != null) { // existing mapping for key
                V oldValue = e.value;
                if (!onlyIfAbsent || oldValue == null)
                    e.value = value;
                afterNodeAccess(e);
                return oldValue;
            }
        }
        ++modCount;
        // Step 6: resize once size exceeds the threshold
        if (++size > threshold)
            resize();
        afterNodeInsertion(evict);
        return null;
    }

    /** Resizes the table. */
    final Node<K,V>[] resize() {
        Node<K,V>[] oldTab = table;
        int oldCap = (oldTab == null) ?
            0 : oldTab.length;
        int oldThr = threshold;
        int newCap, newThr = 0;
        if (oldCap > 0) {
            // already at the maximum capacity: stop growing and accept collisions
            if (oldCap >= MAXIMUM_CAPACITY) {
                threshold = Integer.MAX_VALUE;
                return oldTab;
            }
            // below the maximum: double the capacity
            else if ((newCap = oldCap << 1) < MAXIMUM_CAPACITY &&
                     oldCap >= DEFAULT_INITIAL_CAPACITY)
                newThr = oldThr << 1; // double threshold
        }
        else if (oldThr > 0) // initial capacity was placed in threshold
            newCap = oldThr;
        else {               // zero initial threshold signifies using defaults
            newCap = DEFAULT_INITIAL_CAPACITY;
            newThr = (int)(DEFAULT_LOAD_FACTOR * DEFAULT_INITIAL_CAPACITY);
        }
        if (newThr == 0) {
            float ft = (float)newCap * loadFactor;
            newThr = (newCap < MAXIMUM_CAPACITY && ft < (float)MAXIMUM_CAPACITY ?
                      (int)ft : Integer.MAX_VALUE);
        }
        threshold = newThr;
        @SuppressWarnings({"rawtypes","unchecked"})
        Node<K,V>[] newTab = (Node<K,V>[])new Node[newCap];
        table = newTab;
        if (oldTab != null) {
            for (int j = 0; j < oldCap; ++j) {
                Node<K,V> e;
                if ((e = oldTab[j]) != null) {
                    oldTab[j] = null;
                    if (e.next == null)
                        newTab[e.hash & (newCap - 1)] = e;
                    else if (e instanceof TreeNode)
                        ((TreeNode<K,V>)e).split(this, newTab, j, oldCap);
                    else { // preserve order
                        Node<K,V> loHead = null, loTail = null;
                        Node<K,V> hiHead = null, hiTail = null;
                        Node<K,V> next;
                        do {
                            next = e.next;
                            if ((e.hash & oldCap) == 0) {
                                if (loTail == null)
                                    loHead = e;
                                else
                                    loTail.next = e;
                                loTail = e;
                            }
                            else {
                                if (hiTail == null)
                                    hiHead = e;
                                else
                                    hiTail.next = e;
                                hiTail = e;
                            }
                        } while ((e = next) != null);
                        if (loTail != null) {
                            loTail.next = null;
                            newTab[j] = loHead;
                        }
                        if (hiTail != null) {
                            hiTail.next = null;
                            newTab[j + oldCap] = hiHead;
                        }
                    }
                }
            }
        }
        return newTab;
    }

    /**
     * Replaces all linked nodes in bin at index for given hash unless
     * table is too small, in which case resizes instead.
     */
    final void treeifyBin(Node<K,V>[] tab, int hash) {
        int n, index; Node<K,V> e;
        if (tab == null || (n = tab.length) < MIN_TREEIFY_CAPACITY)
            resize();
        else if ((e = tab[index = (n - 1) & hash]) != null) {
            TreeNode<K,V> hd = null, tl = null;
            do {
                TreeNode<K,V> p = replacementTreeNode(e, null);
                if (tl == null)
                    hd =
                        p;
                else {
                    p.prev = tl;
                    tl.next = p;
                }
                tl = p;
            } while ((e = e.next) != null);
            if ((tab[index] = hd) != null)
                hd.treeify(tab);
        }
    }

    /** Adds all mappings of another Map to this HashMap. */
    public void putAll(Map<? extends K, ? extends V> m) {
        putMapEntries(m, true);
    }

    /** Removes the mapping for the given key. */
    public V remove(Object key) {
        Node<K,V> e;
        return (e = removeNode(hash(key), key, null, false, true)) == null ?
            null : e.value;
    }

    final Node<K,V> removeNode(int hash, Object key, Object value,
                               boolean matchValue, boolean movable) {
        Node<K,V>[] tab; Node<K,V> p; int n, index;
        if ((tab = table) != null && (n = tab.length) > 0 &&
            (p = tab[index = (n - 1) & hash]) != null) {
            Node<K,V> node = null, e; K k; V v;
            if (p.hash == hash &&
                ((k = p.key) == key || (key != null && key.equals(k))))
                node = p;
            else if ((e = p.next) != null) {
                if (p instanceof TreeNode)
                    node = ((TreeNode<K,V>)p).getTreeNode(hash, key);
                else {
                    do {
                        if (e.hash == hash &&
                            ((k = e.key) == key ||
                             (key != null && key.equals(k)))) {
                            node = e;
                            break;
                        }
                        p = e;
                    } while ((e = e.next) != null);
                }
            }
            if (node != null && (!matchValue || (v = node.value) == value ||
                                 (value != null && value.equals(v)))) {
                if (node instanceof TreeNode)
                    ((TreeNode<K,V>)node).removeTreeNode(this, tab, movable);
                else if (node == p)
                    tab[index] = node.next;
                else
                    p.next = node.next;
                ++modCount;
                --size;
                afterNodeRemoval(node);
                return node;
            }
        }
        return null;
    }

    /** Clears the Map. */
    public void clear() {
        Node<K,V>[] tab;
        modCount++;
        if ((tab = table) != null && size > 0) {
            size = 0;
            for (int i = 0; i < tab.length; ++i)
                tab[i] = null;
        }
    }

    /**
     * Returns <tt>true</tt> if this map maps one or more keys to the
     * specified value.
     *
     * @param value value whose presence in this map is to be tested
     * @return <tt>true</tt> if this map maps one or more keys to the
     *         specified value
     */
    public boolean containsValue(Object value) {
        Node<K,V>[] tab; V v;
        if ((tab = table) != null && size > 0) {
            for (int i = 0; i < tab.length; ++i) {
                for (Node<K,V> e = tab[i]; e != null; e = e.next) {
                    if ((v = e.value) == value ||
                        (value != null && value.equals(v)))
                        return true;
                }
            }
        }
        return false;
    }

    /**
     * Returns a {@link Set} view
of the keys contained in this map.* The set is backed by the map, so changes to the map are* reflected in the set, and vice-versa. If the map is modified* while an iteration over the set is in progress (except through* the iterator's own <tt>remove</tt> operation), the results of* the iteration are undefined. The set supports element removal,* which removes the corresponding mapping from the map, via the* <tt>Iterator.remove</tt>, <tt>Set.remove</tt>,* <tt>removeAll</tt>, <tt>retainAll</tt>, and <tt>clear</tt>* operations. It does not support the <tt>add</tt> or <tt>addAll</tt>* operations.** @return a set view of the keys contained in this map*/public Set<K> keySet() {Set<K> ks;return (ks = keySet) == null ? (keySet = new KeySet()) : ks;}final class KeySet extends AbstractSet<K> {public final int size() { return size; }public final void clear() { HashMap.this.clear(); }public final Iterator<K> iterator() { return new KeyIterator(); }public final boolean contains(Object o) { return containsKey(o); }public final boolean remove(Object key) {return removeNode(hash(key), key, null, false, true) != null;}public final Spliterator<K> spliterator() {return new KeySpliterator<>(HashMap.this, 0, -1, 0, 0);}public final void forEach(Consumer<? super K> action) {Node<K,V>[] tab;if (action == null)throw new NullPointerException();if (size > 0 && (tab = table) != null) {int mc = modCount;for (int i = 0; i < tab.length; ++i) {for (Node<K,V> e = tab[i]; e != null; e = e.next)action.accept(e.key);}if (modCount != mc)throw new ConcurrentModificationException();}}}/*** Returns a {@link Collection} view of the values contained in this map.* The collection is backed by the map, so changes to the map are* reflected in the collection, and vice-versa. If the map is* modified while an iteration over the collection is in progress* (except through the iterator's own <tt>remove</tt> operation),* the results of the iteration are undefined. 
The collection* supports element removal, which removes the corresponding* mapping from the map, via the <tt>Iterator.remove</tt>,* <tt>Collection.remove</tt>, <tt>removeAll</tt>,* <tt>retainAll</tt> and <tt>clear</tt> operations. It does not* support the <tt>add</tt> or <tt>addAll</tt> operations.** @return a view of the values contained in this map*/public Collection<V> values() {Collection<V> vs;return (vs = values) == null ? (values = new Values()) : vs;}final class Values extends AbstractCollection<V> {public final int size() { return size; }public final void clear() { HashMap.this.clear(); }public final Iterator<V> iterator() { return new ValueIterator(); }public final boolean contains(Object o) { return containsValue(o); }public final Spliterator<V> spliterator() {return new ValueSpliterator<>(HashMap.this, 0, -1, 0, 0);}public final void forEach(Consumer<? super V> action) {Node<K,V>[] tab;if (action == null)throw new NullPointerException();if (size > 0 && (tab = table) != null) {int mc = modCount;for (int i = 0; i < tab.length; ++i) {for (Node<K,V> e = tab[i]; e != null; e = e.next)action.accept(e.value);}if (modCount != mc)throw new ConcurrentModificationException();}}}/*** Returns a {@link Set} view of the mappings contained in this map.* The set is backed by the map, so changes to the map are* reflected in the set, and vice-versa. If the map is modified* while an iteration over the set is in progress (except through* the iterator's own <tt>remove</tt> operation, or through the* <tt>setValue</tt> operation on a map entry returned by the* iterator) the results of the iteration are undefined. The set* supports element removal, which removes the corresponding* mapping from the map, via the <tt>Iterator.remove</tt>,* <tt>Set.remove</tt>, <tt>removeAll</tt>, <tt>retainAll</tt> and* <tt>clear</tt> operations. 
It does not support the* <tt>add</tt> or <tt>addAll</tt> operations.** @return a set view of the mappings contained in this map*/public Set<Map.Entry<K,V>> entrySet() {Set<Map.Entry<K,V>> es;return (es = entrySet) == null ? (entrySet = new EntrySet()) : es;}final class EntrySet extends AbstractSet<Map.Entry<K,V>> {public final int size() { return size; }public final void clear() { HashMap.this.clear(); }public final Iterator<Map.Entry<K,V>> iterator() {return new EntryIterator();}public final boolean contains(Object o) {if (!(o instanceof Map.Entry))return false;Map.Entry<?,?> e = (Map.Entry<?,?>) o;Object key = e.getKey();Node<K,V> candidate = getNode(hash(key), key);return candidate != null && candidate.equals(e);}public final boolean remove(Object o) {if (o instanceof Map.Entry) {Map.Entry<?,?> e = (Map.Entry<?,?>) o;Object key = e.getKey();Object value = e.getValue();return removeNode(hash(key), key, value, true, true) != null;}return false;}public final Spliterator<Map.Entry<K,V>> spliterator() {return new EntrySpliterator<>(HashMap.this, 0, -1, 0, 0);}public final void forEach(Consumer<? super Map.Entry<K,V>> action) {Node<K,V>[] tab;if (action == null)throw new NullPointerException();if (size > 0 && (tab = table) != null) {int mc = modCount;for (int i = 0; i < tab.length; ++i) {for (Node<K,V> e = tab[i]; e != null; e = e.next)action.accept(e);}if (modCount != mc)throw new ConcurrentModificationException();}}}// Overrides of JDK8 Map extension methods@Overridepublic V getOrDefault(Object key, V defaultValue) {Node<K,V> e;return (e = getNode(hash(key), key)) == null ? 
defaultValue : e.value;}@Overridepublic V putIfAbsent(K key, V value) {return putVal(hash(key), key, value, true, true);}@Overridepublic boolean remove(Object key, Object value) {return removeNode(hash(key), key, value, true, true) != null;}@Overridepublic boolean replace(K key, V oldValue, V newValue) {Node<K,V> e; V v;if ((e = getNode(hash(key), key)) != null &&((v = e.value) == oldValue || (v != null && v.equals(oldValue)))) {e.value = newValue;afterNodeAccess(e);return true;}return false;}@Overridepublic V replace(K key, V value) {Node<K,V> e;if ((e = getNode(hash(key), key)) != null) {V oldValue = e.value;e.value = value;afterNodeAccess(e);return oldValue;}return null;}@Overridepublic V computeIfAbsent(K key,Function<? super K, ? extends V> mappingFunction) {if (mappingFunction == null)throw new NullPointerException();int hash = hash(key);Node<K,V>[] tab; Node<K,V> first; int n, i;int binCount = 0;TreeNode<K,V> t = null;Node<K,V> old = null;if (size > threshold || (tab = table) == null ||(n = tab.length) == 0)n = (tab = resize()).length;if ((first = tab[i = (n - 1) & hash]) != null) {if (first instanceof TreeNode)old = (t = (TreeNode<K,V>)first).getTreeNode(hash, key);else {Node<K,V> e = first; K k;do {if (e.hash == hash &&((k = e.key) == key || (key != null && key.equals(k)))) {old = e;break;}++binCount;} while ((e = e.next) != null);}V oldValue;if (old != null && (oldValue = old.value) != null) {afterNodeAccess(old);return oldValue;}}V v = mappingFunction.apply(key);if (v == null) {return null;} else if (old != null) {old.value = v;afterNodeAccess(old);return v;}else if (t != null)t.putTreeVal(this, tab, hash, key, v);else {tab[i] = newNode(hash, key, v, first);if (binCount >= TREEIFY_THRESHOLD - 1)treeifyBin(tab, hash);}++modCount;++size;afterNodeInsertion(true);return v;}public V computeIfPresent(K key,BiFunction<? super K, ? super V, ? 
extends V> remappingFunction) {if (remappingFunction == null)throw new NullPointerException();Node<K,V> e; V oldValue;int hash = hash(key);if ((e = getNode(hash, key)) != null &&(oldValue = e.value) != null) {V v = remappingFunction.apply(key, oldValue);if (v != null) {e.value = v;afterNodeAccess(e);return v;}elseremoveNode(hash, key, null, false, true);}return null;}@Overridepublic V compute(K key,BiFunction<? super K, ? super V, ? extends V> remappingFunction) {if (remappingFunction == null)throw new NullPointerException();int hash = hash(key);Node<K,V>[] tab; Node<K,V> first; int n, i;int binCount = 0;TreeNode<K,V> t = null;Node<K,V> old = null;if (size > threshold || (tab = table) == null ||(n = tab.length) == 0)n = (tab = resize()).length;if ((first = tab[i = (n - 1) & hash]) != null) {if (first instanceof TreeNode)old = (t = (TreeNode<K,V>)first).getTreeNode(hash, key);else {Node<K,V> e = first; K k;do {if (e.hash == hash &&((k = e.key) == key || (key != null && key.equals(k)))) {old = e;break;}++binCount;} while ((e = e.next) != null);}}V oldValue = (old == null) ? null : old.value;V v = remappingFunction.apply(key, oldValue);if (old != null) {if (v != null) {old.value = v;afterNodeAccess(old);}elseremoveNode(hash, key, null, false, true);}else if (v != null) {if (t != null)t.putTreeVal(this, tab, hash, key, v);else {tab[i] = newNode(hash, key, v, first);if (binCount >= TREEIFY_THRESHOLD - 1)treeifyBin(tab, hash);}++modCount;++size;afterNodeInsertion(true);}return v;}@Overridepublic V merge(K key, V value,BiFunction<? super V, ? super V, ? 
extends V> remappingFunction) {if (value == null)throw new NullPointerException();if (remappingFunction == null)throw new NullPointerException();int hash = hash(key);Node<K,V>[] tab; Node<K,V> first; int n, i;int binCount = 0;TreeNode<K,V> t = null;Node<K,V> old = null;if (size > threshold || (tab = table) == null ||(n = tab.length) == 0)n = (tab = resize()).length;if ((first = tab[i = (n - 1) & hash]) != null) {if (first instanceof TreeNode)old = (t = (TreeNode<K,V>)first).getTreeNode(hash, key);else {Node<K,V> e = first; K k;do {if (e.hash == hash &&((k = e.key) == key || (key != null && key.equals(k)))) {old = e;break;}++binCount;} while ((e = e.next) != null);}}if (old != null) {V v;if (old.value != null)v = remappingFunction.apply(old.value, value);elsev = value;if (v != null) {old.value = v;afterNodeAccess(old);}elseremoveNode(hash, key, null, false, true);return v;}if (value != null) {if (t != null)t.putTreeVal(this, tab, hash, key, value);else {tab[i] = newNode(hash, key, value, first);if (binCount >= TREEIFY_THRESHOLD - 1)treeifyBin(tab, hash);}++modCount;++size;afterNodeInsertion(true);}return value;}@Overridepublic void forEach(BiConsumer<? super K, ? super V> action) {Node<K,V>[] tab;if (action == null)throw new NullPointerException();if (size > 0 && (tab = table) != null) {int mc = modCount;for (int i = 0; i < tab.length; ++i) {for (Node<K,V> e = tab[i]; e != null; e = e.next)action.accept(e.key, e.value);}if (modCount != mc)throw new ConcurrentModificationException();}}@Overridepublic void replaceAll(BiFunction<? super K, ? super V, ? 
extends V> function) {Node<K,V>[] tab;if (function == null)throw new NullPointerException();if (size > 0 && (tab = table) != null) {int mc = modCount;for (int i = 0; i < tab.length; ++i) {for (Node<K,V> e = tab[i]; e != null; e = e.next) {e.value = function.apply(e.key, e.value);}}if (modCount != mc)throw new ConcurrentModificationException();}}/* ------------------------------------------------------------ */// Cloning and serialization/*** Returns a shallow copy of this <tt>HashMap</tt> instance: the keys and* values themselves are not cloned.** @return a shallow copy of this map*/@SuppressWarnings("unchecked")@Overridepublic Object clone() {HashMap<K,V> result;try {result = (HashMap<K,V>)super.clone();} catch (CloneNotSupportedException e) {// this shouldn't happen, since we are Cloneablethrow new InternalError(e);}result.reinitialize();result.putMapEntries(this, false);return result;}// These methods are also used when serializing HashSetsfinal float loadFactor() { return loadFactor; }final int capacity() {return (table != null) ? table.length :(threshold > 0) ? threshold :DEFAULT_INITIAL_CAPACITY;}/*** Save the state of the <tt>HashMap</tt> instance to a stream (i.e.,* serialize it).** @serialData The <i>capacity</i> of the HashMap (the length of the* bucket array) is emitted (int), followed by the* <i>size</i> (an int, the number of key-value* mappings), followed by the key (Object) and value (Object)* for each key-value mapping. 
The key-value mappings are* emitted in no particular order.*/private void writeObject(java.io.ObjectOutputStream s)throws IOException {int buckets = capacity();// Write out the threshold, loadfactor, and any hidden stuffs.defaultWriteObject();s.writeInt(buckets);s.writeInt(size);internalWriteEntries(s);}/*** Reconstitute the {@code HashMap} instance from a stream (i.e.,* deserialize it).*/private void readObject(java.io.ObjectInputStream s)throws IOException, ClassNotFoundException {// Read in the threshold (ignored), loadfactor, and any hidden stuffs.defaultReadObject();reinitialize();if (loadFactor <= 0 || Float.isNaN(loadFactor))throw new InvalidObjectException("Illegal load factor: " +loadFactor);s.readInt(); // Read and ignore number of bucketsint mappings = s.readInt(); // Read number of mappings (size)if (mappings < 0)throw new InvalidObjectException("Illegal mappings count: " +mappings);else if (mappings > 0) { // (if zero, use defaults)// Size the table using given load factor only if within// range of 0.25...4.0float lf = Math.min(Math.max(0.25f, loadFactor), 4.0f);float fc = (float)mappings / lf + 1.0f;int cap = ((fc < DEFAULT_INITIAL_CAPACITY) ?DEFAULT_INITIAL_CAPACITY :(fc >= MAXIMUM_CAPACITY) ?MAXIMUM_CAPACITY :tableSizeFor((int)fc));float ft = (float)cap * lf;threshold = ((cap < MAXIMUM_CAPACITY && ft < MAXIMUM_CAPACITY) ?(int)ft : Integer.MAX_VALUE);@SuppressWarnings({"rawtypes","unchecked"})Node<K,V>[] tab = (Node<K,V>[])new Node[cap];table = tab;// Read the keys and values, and put the mappings in the HashMapfor (int i = 0; i < mappings; i++) {@SuppressWarnings("unchecked")K key = (K) s.readObject();@SuppressWarnings("unchecked")V value = (V) s.readObject();putVal(hash(key), key, value, false, false);}}}/* ------------------------------------------------------------ */// iteratorsabstract class HashIterator {Node<K,V> next; // next entry to returnNode<K,V> current; // current entryint expectedModCount; // for fast-failint index; // current 
slotHashIterator() {expectedModCount = modCount;Node<K,V>[] t = table;current = next = null;index = 0;if (t != null && size > 0) { // advance to first entrydo {} while (index < t.length && (next = t[index++]) == null);}}public final boolean hasNext() {return next != null;}final Node<K,V> nextNode() {Node<K,V>[] t;Node<K,V> e = next;if (modCount != expectedModCount)throw new ConcurrentModificationException();if (e == null)throw new NoSuchElementException();if ((next = (current = e).next) == null && (t = table) != null) {do {} while (index < t.length && (next = t[index++]) == null);}return e;}public final void remove() {Node<K,V> p = current;if (p == null)throw new IllegalStateException();if (modCount != expectedModCount)throw new ConcurrentModificationException();current = null;K key = p.key;removeNode(hash(key), key, null, false, false);expectedModCount = modCount;}}final class KeyIterator extends HashIteratorimplements Iterator<K> {public final K next() { return nextNode().key; }}final class ValueIterator extends HashIteratorimplements Iterator<V> {public final V next() { return nextNode().value; }}final class EntryIterator extends HashIteratorimplements Iterator<Map.Entry<K,V>> {public final Map.Entry<K,V> next() { return nextNode(); }}/* ------------------------------------------------------------ */// spliteratorsstatic class HashMapSpliterator<K,V> {final HashMap<K,V> map;Node<K,V> current; // current nodeint index; // current index, modified on advance/splitint fence; // one past last indexint est; // size estimateint expectedModCount; // for comodification checksHashMapSpliterator(HashMap<K,V> m, int origin,int fence, int est,int expectedModCount) {this.map = m;this.index = origin;this.fence = fence;this.est = est;this.expectedModCount = expectedModCount;}final int getFence() { // initialize fence and size on first useint hi;if ((hi = fence) < 0) {HashMap<K,V> m = map;est = m.size;expectedModCount = m.modCount;Node<K,V>[] tab = m.table;hi = fence = (tab == 
null) ? 0 : tab.length;}return hi;}public final long estimateSize() {getFence(); // force initreturn (long) est;}}static final class KeySpliterator<K,V>extends HashMapSpliterator<K,V>implements Spliterator<K> {KeySpliterator(HashMap<K,V> m, int origin, int fence, int est,int expectedModCount) {super(m, origin, fence, est, expectedModCount);}public KeySpliterator<K,V> trySplit() {int hi = getFence(), lo = index, mid = (lo + hi) >>> 1;return (lo >= mid || current != null) ? null :new KeySpliterator<>(map, lo, index = mid, est >>>= 1,expectedModCount);}public void forEachRemaining(Consumer<? super K> action) {int i, hi, mc;if (action == null)throw new NullPointerException();HashMap<K,V> m = map;Node<K,V>[] tab = m.table;if ((hi = fence) < 0) {mc = expectedModCount = m.modCount;hi = fence = (tab == null) ? 0 : tab.length;}elsemc = expectedModCount;if (tab != null && tab.length >= hi &&(i = index) >= 0 && (i < (index = hi) || current != null)) {Node<K,V> p = current;current = null;do {if (p == null)p = tab[i++];else {action.accept(p.key);p = p.next;}} while (p != null || i < hi);if (m.modCount != mc)throw new ConcurrentModificationException();}}public boolean tryAdvance(Consumer<? super K> action) {int hi;if (action == null)throw new NullPointerException();Node<K,V>[] tab = map.table;if (tab != null && tab.length >= (hi = getFence()) && index >= 0) {while (current != null || index < hi) {if (current == null)current = tab[index++];else {K k = current.key;current = current.next;action.accept(k);if (map.modCount != expectedModCount)throw new ConcurrentModificationException();return true;}}}return false;}public int characteristics() {return (fence < 0 || est == map.size ? 
Spliterator.SIZED : 0) |Spliterator.DISTINCT;}}static final class ValueSpliterator<K,V>extends HashMapSpliterator<K,V>implements Spliterator<V> {ValueSpliterator(HashMap<K,V> m, int origin, int fence, int est,int expectedModCount) {super(m, origin, fence, est, expectedModCount);}public ValueSpliterator<K,V> trySplit() {int hi = getFence(), lo = index, mid = (lo + hi) >>> 1;return (lo >= mid || current != null) ? null :new ValueSpliterator<>(map, lo, index = mid, est >>>= 1,expectedModCount);}public void forEachRemaining(Consumer<? super V> action) {int i, hi, mc;if (action == null)throw new NullPointerException();HashMap<K,V> m = map;Node<K,V>[] tab = m.table;if ((hi = fence) < 0) {mc = expectedModCount = m.modCount;hi = fence = (tab == null) ? 0 : tab.length;}elsemc = expectedModCount;if (tab != null && tab.length >= hi &&(i = index) >= 0 && (i < (index = hi) || current != null)) {Node<K,V> p = current;current = null;do {if (p == null)p = tab[i++];else {action.accept(p.value);p = p.next;}} while (p != null || i < hi);if (m.modCount != mc)throw new ConcurrentModificationException();}}public boolean tryAdvance(Consumer<? super V> action) {int hi;if (action == null)throw new NullPointerException();Node<K,V>[] tab = map.table;if (tab != null && tab.length >= (hi = getFence()) && index >= 0) {while (current != null || index < hi) {if (current == null)current = tab[index++];else {V v = current.value;current = current.next;action.accept(v);if (map.modCount != expectedModCount)throw new ConcurrentModificationException();return true;}}}return false;}public int characteristics() {return (fence < 0 || est == map.size ? 
Spliterator.SIZED : 0);}}static final class EntrySpliterator<K,V>extends HashMapSpliterator<K,V>implements Spliterator<Map.Entry<K,V>> {EntrySpliterator(HashMap<K,V> m, int origin, int fence, int est,int expectedModCount) {super(m, origin, fence, est, expectedModCount);}public EntrySpliterator<K,V> trySplit() {int hi = getFence(), lo = index, mid = (lo + hi) >>> 1;return (lo >= mid || current != null) ? null :new EntrySpliterator<>(map, lo, index = mid, est >>>= 1,expectedModCount);}public void forEachRemaining(Consumer<? super Map.Entry<K,V>> action) {int i, hi, mc;if (action == null)throw new NullPointerException();HashMap<K,V> m = map;Node<K,V>[] tab = m.table;if ((hi = fence) < 0) {mc = expectedModCount = m.modCount;hi = fence = (tab == null) ? 0 : tab.length;}elsemc = expectedModCount;if (tab != null && tab.length >= hi &&(i = index) >= 0 && (i < (index = hi) || current != null)) {Node<K,V> p = current;current = null;do {if (p == null)p = tab[i++];else {action.accept(p);p = p.next;}} while (p != null || i < hi);if (m.modCount != mc)throw new ConcurrentModificationException();}}public boolean tryAdvance(Consumer<? super Map.Entry<K,V>> action) {int hi;if (action == null)throw new NullPointerException();Node<K,V>[] tab = map.table;if (tab != null && tab.length >= (hi = getFence()) && index >= 0) {while (current != null || index < hi) {if (current == null)current = tab[index++];else {Node<K,V> e = current;current = current.next;action.accept(e);if (map.modCount != expectedModCount)throw new ConcurrentModificationException();return true;}}}return false;}public int characteristics() {return (fence < 0 || est == map.size ? 
Spliterator.SIZED : 0) |Spliterator.DISTINCT;}}/* ------------------------------------------------------------ */// LinkedHashMap support/** The following package-protected methods are designed to be* overridden by LinkedHashMap, but not by any other subclass.* Nearly all other internal methods are also package-protected* but are declared final, so can be used by LinkedHashMap, view* classes, and HashSet.*/// Create a regular (non-tree) nodeNode<K,V> newNode(int hash, K key, V value, Node<K,V> next) {return new Node<>(hash, key, value, next);}// For conversion from TreeNodes to plain nodesNode<K,V> replacementNode(Node<K,V> p, Node<K,V> next) {return new Node<>(p.hash, p.key, p.value, next);}// Create a tree bin nodeTreeNode<K,V> newTreeNode(int hash, K key, V value, Node<K,V> next) {return new TreeNode<>(hash, key, value, next);}// For treeifyBinTreeNode<K,V> replacementTreeNode(Node<K,V> p, Node<K,V> next) {return new TreeNode<>(p.hash, p.key, p.value, next);}/*** Reset to initial default state. Called by clone and readObject.*/void reinitialize() {table = null;entrySet = null;keySet = null;values = null;modCount = 0;threshold = 0;size = 0;}// Callbacks to allow LinkedHashMap post-actionsvoid afterNodeAccess(Node<K,V> p) { }void afterNodeInsertion(boolean evict) { }void afterNodeRemoval(Node<K,V> p) { }// Called only from writeObject, to ensure compatible ordering.void internalWriteEntries(java.io.ObjectOutputStream s) throws IOException {Node<K,V>[] tab;if (size > 0 && (tab = table) != null) {for (int i = 0; i < tab.length; ++i) {for (Node<K,V> e = tab[i]; e != null; e = e.next) {s.writeObject(e.key);s.writeObject(e.value);}}}}/* ------------------------------------------------------------ */// Tree bins/*** Entry for Tree bins. 
Extends LinkedHashMap.Entry (which in turn* extends Node) so can be used as extension of either regular or* linked node.*/static final class TreeNode<K,V> extends LinkedHashMap.Entry<K,V> {TreeNode<K,V> parent; // red-black tree linksTreeNode<K,V> left;TreeNode<K,V> right;TreeNode<K,V> prev; // needed to unlink next upon deletionboolean red;TreeNode(int hash, K key, V val, Node<K,V> next) {super(hash, key, val, next);}/*** Returns root of tree containing this node.*/final TreeNode<K,V> root() {for (TreeNode<K,V> r = this, p;;) {if ((p = r.parent) == null)return r;r = p;}}/*** Ensures that the given root is the first node of its bin.*/static <K,V> void moveRootToFront(Node<K,V>[] tab, TreeNode<K,V> root) {int n;if (root != null && tab != null && (n = tab.length) > 0) {int index = (n - 1) & root.hash;TreeNode<K,V> first = (TreeNode<K,V>)tab[index];if (root != first) {Node<K,V> rn;tab[index] = root;TreeNode<K,V> rp = root.prev;if ((rn = root.next) != null)((TreeNode<K,V>)rn).prev = rp;if (rp != null)rp.next = rn;if (first != null)first.prev = root;root.next = first;root.prev = null;}assert checkInvariants(root);}}/*** Finds the node starting at root p with the given hash and key.* The kc argument caches comparableClassFor(key) upon first use* comparing keys.*/final TreeNode<K,V> find(int h, Object k, Class<?> kc) {TreeNode<K,V> p = this;do {int ph, dir; K pk;TreeNode<K,V> pl = p.left, pr = p.right, q;if ((ph = p.hash) > h)p = pl;else if (ph < h)p = pr;else if ((pk = p.key) == k || (k != null && k.equals(pk)))return p;else if (pl == null)p = pr;else if (pr == null)p = pl;else if ((kc != null ||(kc = comparableClassFor(k)) != null) &&(dir = compareComparables(kc, k, pk)) != 0)p = (dir < 0) ? pl : pr;else if ((q = pr.find(h, k, kc)) != null)return q;elsep = pl;} while (p != null);return null;}/*** Calls find for root node.*/final TreeNode<K,V> getTreeNode(int h, Object k) {return ((parent != null) ? 
root() : this).find(h, k, null);}/*** Tie-breaking utility for ordering insertions when equal* hashCodes and non-comparable. We don't require a total* order, just a consistent insertion rule to maintain* equivalence across rebalancings. Tie-breaking further than* necessary simplifies testing a bit.*/static int tieBreakOrder(Object a, Object b) {int d;if (a == null || b == null ||(d = a.getClass().getName().compareTo(b.getClass().getName())) == 0)d = (System.identityHashCode(a) <= System.identityHashCode(b) ?-1 : 1);return d;}/*** Forms tree of the nodes linked from this node.* @return root of tree*/final void treeify(Node<K,V>[] tab) {TreeNode<K,V> root = null;for (TreeNode<K,V> x = this, next; x != null; x = next) {next = (TreeNode<K,V>)x.next;x.left = x.right = null;if (root == null) {x.parent = null;x.red = false;root = x;}else {K k = x.key;int h = x.hash;Class<?> kc = null;for (TreeNode<K,V> p = root;;) {int dir, ph;K pk = p.key;if ((ph = p.hash) > h)dir = -1;else if (ph < h)dir = 1;else if ((kc == null &&(kc = comparableClassFor(k)) == null) ||(dir = compareComparables(kc, k, pk)) == 0)dir = tieBreakOrder(k, pk);TreeNode<K,V> xp = p;if ((p = (dir <= 0) ? p.left : p.right) == null) {x.parent = xp;if (dir <= 0)xp.left = x;elsexp.right = x;root = balanceInsertion(root, x);break;}}}}moveRootToFront(tab, root);}/*** Returns a list of non-TreeNodes replacing those linked from* this node.*/final Node<K,V> untreeify(HashMap<K,V> map) {Node<K,V> hd = null, tl = null;for (Node<K,V> q = this; q != null; q = q.next) {Node<K,V> p = map.replacementNode(q, null);if (tl == null)hd = p;elsetl.next = p;tl = p;}return hd;}/*** Tree version of putVal.*/final TreeNode<K,V> putTreeVal(HashMap<K,V> map, Node<K,V>[] tab,int h, K k, V v) {Class<?> kc = null;boolean searched = false;TreeNode<K,V> root = (parent != null) ? 
root() : this;for (TreeNode<K,V> p = root;;) {int dir, ph; K pk;if ((ph = p.hash) > h)dir = -1;else if (ph < h)dir = 1;else if ((pk = p.key) == k || (k != null && k.equals(pk)))return p;else if ((kc == null &&(kc = comparableClassFor(k)) == null) ||(dir = compareComparables(kc, k, pk)) == 0) {if (!searched) {TreeNode<K,V> q, ch;searched = true;if (((ch = p.left) != null &&(q = ch.find(h, k, kc)) != null) ||((ch = p.right) != null &&(q = ch.find(h, k, kc)) != null))return q;}dir = tieBreakOrder(k, pk);}TreeNode<K,V> xp = p;if ((p = (dir <= 0) ? p.left : p.right) == null) {Node<K,V> xpn = xp.next;TreeNode<K,V> x = map.newTreeNode(h, k, v, xpn);if (dir <= 0)xp.left = x;elsexp.right = x;xp.next = x;x.parent = x.prev = xp;if (xpn != null)((TreeNode<K,V>)xpn).prev = x;moveRootToFront(tab, balanceInsertion(root, x));return null;}}}/*** Removes the given node, that must be present before this call.* This is messier than typical red-black deletion code because we* cannot swap the contents of an interior node with a leaf* successor that is pinned by "next" pointers that are accessible* independently during traversal. So instead we swap the tree* linkages. If the current tree appears to have too few nodes,* the bin is converted back to a plain bin. 
(The test triggers* somewhere between 2 and 6 nodes, depending on tree structure).*/final void removeTreeNode(HashMap<K,V> map, Node<K,V>[] tab,boolean movable) {int n;if (tab == null || (n = tab.length) == 0)return;int index = (n - 1) & hash;TreeNode<K,V> first = (TreeNode<K,V>)tab[index], root = first, rl;TreeNode<K,V> succ = (TreeNode<K,V>)next, pred = prev;if (pred == null)tab[index] = first = succ;elsepred.next = succ;if (succ != null)succ.prev = pred;if (first == null)return;if (root.parent != null)root = root.root();if (root == null || root.right == null ||(rl = root.left) == null || rl.left == null) {tab[index] = first.untreeify(map); // too smallreturn;}TreeNode<K,V> p = this, pl = left, pr = right, replacement;if (pl != null && pr != null) {TreeNode<K,V> s = pr, sl;while ((sl = s.left) != null) // find successors = sl;boolean c = s.red; s.red = p.red; p.red = c; // swap colorsTreeNode<K,V> sr = s.right;TreeNode<K,V> pp = p.parent;if (s == pr) { // p was s's direct parentp.parent = s;s.right = p;}else {TreeNode<K,V> sp = s.parent;if ((p.parent = sp) != null) {if (s == sp.left)sp.left = p;elsesp.right = p;}if ((s.right = pr) != null)pr.parent = s;}p.left = null;if ((p.right = sr) != null)sr.parent = p;if ((s.left = pl) != null)pl.parent = s;if ((s.parent = pp) == null)root = s;else if (p == pp.left)pp.left = s;elsepp.right = s;if (sr != null)replacement = sr;elsereplacement = p;}else if (pl != null)replacement = pl;else if (pr != null)replacement = pr;elsereplacement = p;if (replacement != p) {TreeNode<K,V> pp = replacement.parent = p.parent;if (pp == null)root = replacement;else if (p == pp.left)pp.left = replacement;elsepp.right = replacement;p.left = p.right = p.parent = null;}TreeNode<K,V> r = p.red ? 
root : balanceDeletion(root, replacement);if (replacement == p) { // detachTreeNode<K,V> pp = p.parent;p.parent = null;if (pp != null) {if (p == pp.left)pp.left = null;else if (p == pp.right)pp.right = null;}}if (movable)moveRootToFront(tab, r);}/*** Splits nodes in a tree bin into lower and upper tree bins,* or untreeifies if now too small. Called only from resize;* see above discussion about split bits and indices.** @param map the map* @param tab the table for recording bin heads* @param index the index of the table being split* @param bit the bit of hash to split on*/final void split(HashMap<K,V> map, Node<K,V>[] tab, int index, int bit) {TreeNode<K,V> b = this;// Relink into lo and hi lists, preserving orderTreeNode<K,V> loHead = null, loTail = null;TreeNode<K,V> hiHead = null, hiTail = null;int lc = 0, hc = 0;for (TreeNode<K,V> e = b, next; e != null; e = next) {next = (TreeNode<K,V>)e.next;e.next = null;if ((e.hash & bit) == 0) {if ((e.prev = loTail) == null)loHead = e;elseloTail.next = e;loTail = e;++lc;}else {if ((e.prev = hiTail) == null)hiHead = e;elsehiTail.next = e;hiTail = e;++hc;}}if (loHead != null) {if (lc <= UNTREEIFY_THRESHOLD)tab[index] = loHead.untreeify(map);else {tab[index] = loHead;if (hiHead != null) // (else is already treeified)loHead.treeify(tab);}}if (hiHead != null) {if (hc <= UNTREEIFY_THRESHOLD)tab[index + bit] = hiHead.untreeify(map);else {tab[index + bit] = hiHead;if (loHead != null)hiHead.treeify(tab);}}}/* ------------------------------------------------------------ */// Red-black tree methods, all adapted from CLRstatic <K,V> TreeNode<K,V> rotateLeft(TreeNode<K,V> root,TreeNode<K,V> p) {TreeNode<K,V> r, pp, rl;if (p != null && (r = p.right) != null) {if ((rl = p.right = r.left) != null)rl.parent = p;if ((pp = r.parent = p.parent) == null)(root = r).red = false;else if (pp.left == p)pp.left = r;elsepp.right = r;r.left = p;p.parent = r;}return root;}static <K,V> TreeNode<K,V> rotateRight(TreeNode<K,V> root,TreeNode<K,V> p) 
{TreeNode<K,V> l, pp, lr;if (p != null && (l = p.left) != null) {if ((lr = p.left = l.right) != null)lr.parent = p;if ((pp = l.parent = p.parent) == null)(root = l).red = false;else if (pp.right == p)pp.right = l;elsepp.left = l;l.right = p;p.parent = l;}return root;}static <K,V> TreeNode<K,V> balanceInsertion(TreeNode<K,V> root,TreeNode<K,V> x) {x.red = true;for (TreeNode<K,V> xp, xpp, xppl, xppr;;) {if ((xp = x.parent) == null) {x.red = false;return x;}else if (!xp.red || (xpp = xp.parent) == null)return root;if (xp == (xppl = xpp.left)) {if ((xppr = xpp.right) != null && xppr.red) {xppr.red = false;xp.red = false;xpp.red = true;x = xpp;}else {if (x == xp.right) {root = rotateLeft(root, x = xp);xpp = (xp = x.parent) == null ? null : xp.parent;}if (xp != null) {xp.red = false;if (xpp != null) {xpp.red = true;root = rotateRight(root, xpp);}}}}else {if (xppl != null && xppl.red) {xppl.red = false;xp.red = false;xpp.red = true;x = xpp;}else {if (x == xp.left) {root = rotateRight(root, x = xp);xpp = (xp = x.parent) == null ? null : xp.parent;}if (xp != null) {xp.red = false;if (xpp != null) {xpp.red = true;root = rotateLeft(root, xpp);}}}}}}static <K,V> TreeNode<K,V> balanceDeletion(TreeNode<K,V> root,TreeNode<K,V> x) {for (TreeNode<K,V> xp, xpl, xpr;;) {if (x == null || x == root)return root;else if ((xp = x.parent) == null) {x.red = false;return x;}else if (x.red) {x.red = false;return root;}else if ((xpl = xp.left) == x) {if ((xpr = xp.right) != null && xpr.red) {xpr.red = false;xp.red = true;root = rotateLeft(root, xp);xpr = (xp = x.parent) == null ? null : xp.right;}if (xpr == null)x = xp;else {TreeNode<K,V> sl = xpr.left, sr = xpr.right;if ((sr == null || !sr.red) &&(sl == null || !sl.red)) {xpr.red = true;x = xp;}else {if (sr == null || !sr.red) {if (sl != null)sl.red = false;xpr.red = true;root = rotateRight(root, xpr);xpr = (xp = x.parent) == null ?null : xp.right;}if (xpr != null) {xpr.red = (xp == null) ? 
false : xp.red;if ((sr = xpr.right) != null)sr.red = false;}if (xp != null) {xp.red = false;root = rotateLeft(root, xp);}x = root;}}}else { // symmetricif (xpl != null && xpl.red) {xpl.red = false;xp.red = true;root = rotateRight(root, xp);xpl = (xp = x.parent) == null ? null : xp.left;}if (xpl == null)x = xp;else {TreeNode<K,V> sl = xpl.left, sr = xpl.right;if ((sl == null || !sl.red) &&(sr == null || !sr.red)) {xpl.red = true;x = xp;}else {if (sl == null || !sl.red) {if (sr != null)sr.red = false;xpl.red = true;root = rotateLeft(root, xpl);xpl = (xp = x.parent) == null ?null : xp.left;}if (xpl != null) {xpl.red = (xp == null) ? false : xp.red;if ((sl = xpl.left) != null)sl.red = false;}if (xp != null) {xp.red = false;root = rotateRight(root, xp);}x = root;}}}}}/*** Recursive invariant check*/static <K,V> boolean checkInvariants(TreeNode<K,V> t) {TreeNode<K,V> tp = t.parent, tl = t.left, tr = t.right,tb = t.prev, tn = (TreeNode<K,V>)t.next;if (tb != null && tb.next != t)return false;if (tn != null && tn.prev != t)return false;if (tp != null && t != tp.left && t != tp.right)return false;if (tl != null && (tl.parent != t || tl.hash > t.hash))return false;if (tr != null && (tr.parent != t || tr.hash < t.hash))return false;if (t.red && tl != null && tl.red && tr != null && tr.red)return false;if (tl != null && !checkInvariants(tl))return false;if (tr != null && !checkInvariants(tr))return false;return true;}}}

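HashMap is the most frequently used implementation of the Map interface.

It allows a null key and null values and, like HashSet, makes no guarantee about the order of its mappings.

All keys together form a Set: unordered and without duplicates. The key class therefore needs to override both equals() and hashCode().

All values together form a Collection: unordered, duplicates allowed. The value class therefore needs to override equals().

Each key-value pair forms one entry.

All entries together form a Set: unordered and without duplicates.

HashMap treats two keys as equal when their hashCode values are equal and equals() returns true.

HashMap treats two values as equal when equals() returns true.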
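The key-equality rule can be illustrated with a small sketch. The Point class and KeyEqualityDemo below are hypothetical names introduced only for this example; they show that a different but equal key instance finds (and overwrites) the same entry once equals() and hashCode() are both overridden.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Objects;

// Hypothetical key type, used only for illustration.
final class Point {
    private final int x;
    private final int y;

    Point(int x, int y) {
        this.x = x;
        this.y = y;
    }

    @Override
    public boolean equals(Object o) {
        if (this == o) return true;
        if (!(o instanceof Point)) return false;
        Point other = (Point) o;
        return x == other.x && y == other.y;
    }

    @Override
    public int hashCode() {
        // Equal points must produce equal hash codes.
        return Objects.hash(x, y);
    }
}

final class KeyEqualityDemo {
    // Looks the value up with a different instance that is equal to the stored key.
    static String lookupWithEqualKey() {
        Map<Point, String> map = new HashMap<>();
        map.put(new Point(1, 2), "first");
        map.put(new Point(1, 2), "second"); // same key by equals/hashCode: replaces "first"
        return map.get(new Point(1, 2)) + ":" + map.size();
    }
}
```

If hashCode() were left at its default, the three Point instances would most likely land in different buckets and the lookup would return null.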

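In JDK 7 and earlier, HashMap is an array plus linked lists (that is, separate chaining).

Since JDK 8, HashMap is implemented as an array plus linked lists plus red-black trees.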

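Important constants and fields in the HashMap source

DEFAULT_INITIAL_CAPACITY: the default capacity of a HashMap, 16

MAXIMUM_CAPACITY: the maximum capacity a HashMap supports, 2^30

DEFAULT_LOAD_FACTOR: the default load factor of a HashMap, 0.75

TREEIFY_THRESHOLD: when the linked list in a bucket grows longer than this value (8), it is converted into a red-black tree

UNTREEIFY_THRESHOLD: when the red-black tree in a bucket holds no more than this many Nodes (6) during a resize, it is converted back into a linked list

MIN_TREEIFY_CAPACITY: the minimum hash table capacity (64) at which the Nodes in a bucket may be treeified. When a bucket holds enough Nodes to become a red-black tree but the table capacity is below MIN_TREEIFY_CAPACITY, a resize is performed instead. The value of MIN_TREEIFY_CAPACITY should be at least 4 times TREEIFY_THRESHOLD.

table: the array that stores the elements; its length is always a power of two

entrySet: the set that holds the entries

size: the number of key-value pairs stored in the HashMap

modCount: the number of times the HashMap has been resized or structurally modified

threshold: the size at which the table is resized, = capacity * load factor

loadFactor: the load factor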
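The relationship threshold = capacity * load factor can be worked through with a short sketch. ThresholdSketch and its helper names are assumptions made for illustration, not part of the JDK; the sizing helper simply inverts the formula so that a map created for a known number of entries should not need to resize.

```java
import java.util.HashMap;
import java.util.Map;

final class ThresholdSketch {
    // threshold = capacity * loadFactor, truncated to an int.
    static int thresholdFor(int capacity, float loadFactor) {
        return (int) (capacity * loadFactor);
    }

    // Illustrative helper: the smallest requested capacity whose threshold
    // (with the default load factor 0.75) is at least expectedSize.
    static int initialCapacityFor(int expectedSize) {
        return (int) Math.ceil(expectedSize / 0.75);
    }

    // Creates a map sized up front for expectedSize entries.
    static Map<String, Integer> presized(int expectedSize) {
        return new HashMap<>(initialCapacityFor(expectedSize));
    }
}
```

With the defaults, thresholdFor(16, 0.75f) is 12, so the table grows when the 13th entry is added.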

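In HashMap, the length of the bucket array table must be a power of two (and therefore, from 4 upward, a composite number). This is an unconventional design; the conventional choice is a prime number of buckets. A power-of-two length lets the bucket index be computed with a bit mask, (n - 1) & hash, instead of a modulo operation.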
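Why a power-of-two length is convenient can be seen in a minimal sketch. BucketIndexSketch is a hypothetical class written for this note; indexFor uses the same (n - 1) & hash expression that appears in the HashMap source, and for a power-of-two length it gives the same result as a non-negative modulo.

```java
final class BucketIndexSketch {
    // True when n is a positive power of two.
    static boolean isPowerOfTwo(int n) {
        return n > 0 && (n & (n - 1)) == 0;
    }

    // Bucket index via bit mask; only valid when tableLength is a power of two.
    static int indexFor(int hash, int tableLength) {
        if (!isPowerOfTwo(tableLength)) {
            throw new IllegalArgumentException("tableLength must be a power of two");
        }
        return (tableLength - 1) & hash;
    }
}
```

The mask also handles negative hash values without an extra sign check, which a plain % would not.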

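When an element is added to a chain that already holds at least 8 nodes, HashMap first checks the capacity: if it is still below 64, HashMap resizes instead; if it has reached 64, the chain is converted into a tree and its nodes change from Node to TreeNode. Conversely, once mappings have been removed, if the next resize finds that a tree holds no more than 6 nodes, the tree is converted back into a linked list.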

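LinkedHashMap

LinkedHashMap is a subclass of HashMap.

On top of HashMap's storage structure, it uses a doubly linked list to record either the insertion order of the elements or their LRU (access) order.

Like LinkedHashSet, LinkedHashMap maintains the iteration order of the Map: by default the iteration order matches the order in which the key-value pairs were inserted.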
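Both orderings can be sketched briefly. LruCacheSketch is a hypothetical class for illustration; it relies on the LinkedHashMap constructor with accessOrder = true and on overriding removeEldestEntry(), both of which are part of the standard API.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical LRU cache built on LinkedHashMap's access-order mode.
final class LruCacheSketch<K, V> extends LinkedHashMap<K, V> {
    private final int maxEntries;

    LruCacheSketch(int maxEntries) {
        super(16, 0.75f, true); // true = iterate in access order, not insertion order
        this.maxEntries = maxEntries;
    }

    @Override
    protected boolean removeEldestEntry(Map.Entry<K, V> eldest) {
        // Called after each insertion; evict the least recently used entry when over capacity.
        return size() > maxEntries;
    }

    // Default mode: keys come back in insertion order.
    static List<String> insertionOrderKeys() {
        Map<String, Integer> map = new LinkedHashMap<>();
        map.put("z", 1);
        map.put("a", 2);
        map.put("m", 3);
        return new ArrayList<>(map.keySet());
    }

    // Access-order mode with eviction.
    static List<String> lruKeys() {
        LruCacheSketch<String, Integer> cache = new LruCacheSketch<>(2);
        cache.put("a", 1);
        cache.put("b", 2);
        cache.get("a");    // "a" becomes the most recently used entry
        cache.put("c", 3); // over capacity: the eldest entry "b" is evicted
        return new ArrayList<>(cache.keySet());
    }
}
```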

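TreeMap

When TreeMap stores key-value pairs, it sorts them by key.

TreeMap guarantees that all key-value pairs are kept in sorted order.

TreeMap stores its data in a red-black tree.

Ordering of TreeMap keys:

Natural ordering: every key of the TreeMap must implement the Comparable interface, and all keys should be objects of the same class; otherwise a ClassCastException is thrown.

Custom ordering: pass a Comparator object when creating the TreeMap; it is responsible for ordering all keys in the TreeMap. In this case the keys do not need to implement the Comparable interface.

TreeMap treats two keys as equal when compareTo() or compare() returns 0 for them.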
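A short sketch of the two ordering modes and of the "compare returns 0" equality rule follows. TreeMapOrderingSketch is a hypothetical class for illustration; String.CASE_INSENSITIVE_ORDER and Comparator.reverseOrder() are standard JDK comparators.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;
import java.util.TreeMap;

final class TreeMapOrderingSketch {
    // Natural ordering: String implements Comparable.
    static List<String> naturalOrderKeys() {
        TreeMap<String, Integer> map = new TreeMap<>();
        map.put("pear", 1);
        map.put("apple", 2);
        map.put("fig", 3);
        return new ArrayList<>(map.keySet());
    }

    // Custom ordering: the Comparator passed to the constructor decides the order.
    static List<String> reverseOrderKeys() {
        TreeMap<String, Integer> map = new TreeMap<>(Comparator.<String>reverseOrder());
        map.put("pear", 1);
        map.put("apple", 2);
        map.put("fig", 3);
        return new ArrayList<>(map.keySet());
    }

    // Keys for which compare() returns 0 count as the same key.
    static int caseInsensitiveSize() {
        TreeMap<String, Integer> map = new TreeMap<>(String.CASE_INSENSITIVE_ORDER);
        map.put("Key", 1);
        map.put("KEY", 2); // compare("Key", "KEY") == 0, so the value is replaced
        return map.size();
    }
}
```

Note that equals() is not consulted here: "Key" and "KEY" are different strings, yet the map holds a single entry.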

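Hashtable

Hashtable is a legacy Map implementation that has been available since JDK 1.0. Unlike HashMap, Hashtable is thread-safe.

Its performance is relatively poor, and it is not recommended for new code.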
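One further practical difference from HashMap, which allows a null key, is that Hashtable rejects null keys with a NullPointerException. The sketch below checks this; NullKeySketch and acceptsNullKey are hypothetical names used only here.

```java
import java.util.HashMap;
import java.util.Hashtable;
import java.util.Map;

final class NullKeySketch {
    // Returns true if the given map implementation accepts a null key.
    static boolean acceptsNullKey(Map<String, String> map) {
        try {
            map.put(null, "value");
            return true;
        } catch (NullPointerException e) {
            return false;
        }
    }

    static boolean hashMapAcceptsNull() {
        return acceptsNullKey(new HashMap<>());
    }

    static boolean hashtableAcceptsNull() {
        return acceptsNullKey(new Hashtable<>());
    }
}
```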

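ConcurrentHashMap

ConcurrentHashMap is similar to HashMap in its implementation. The main difference, in JDK 1.7, is that ConcurrentHashMap uses segment locks (Segment): each segment guards several buckets (HashEntry), and multiple threads can access buckets under different segments at the same time, which gives it higher concurrency (the concurrency level is the number of Segments).

Segment extends the reentrant lock ReentrantLock. The default concurrency level is 16, which means 16 Segments are created by default.

JDK 1.8 uses CAS operations to support higher concurrency and falls back to the built-in synchronized lock when a CAS operation fails. The JDK 1.8 implementation also converts a linked list into a red-black tree when the list grows too long.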
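From the caller's side, the benefit is that per-key updates are atomic without external locking. A minimal sketch, assuming a hypothetical ConcurrentCountSketch helper: several threads increment one counter through merge(), and no update is lost.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;

final class ConcurrentCountSketch {
    // Runs `threads` workers that each increment the same key `incrementsPerThread` times.
    static int countConcurrently(int threads, int incrementsPerThread) {
        ConcurrentHashMap<String, Integer> counts = new ConcurrentHashMap<>();
        ExecutorService pool = Executors.newFixedThreadPool(threads);
        for (int t = 0; t < threads; t++) {
            pool.execute(() -> {
                for (int i = 0; i < incrementsPerThread; i++) {
                    counts.merge("hits", 1, Integer::sum); // atomic read-modify-write per key
                }
            });
        }
        pool.shutdown();
        try {
            if (!pool.awaitTermination(30, TimeUnit.SECONDS)) {
                throw new IllegalStateException("workers did not finish in time");
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for workers", e);
        }
        return counts.getOrDefault("hits", 0);
    }
}
```

The same loop written against a plain HashMap with get() followed by put() could lose increments or corrupt the table under contention.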

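WeakHashMap

The Entry of a WeakHashMap extends WeakReference, with the key as the referent. An object that is reachable only through a WeakReference is reclaimed at the next garbage collection.

WeakHashMap is mainly used to implement caches: the cached objects are referenced through a WeakHashMap, and the JVM takes care of reclaiming that part of the cache.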
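A minimal sketch of the cache behaviour, using a hypothetical WeakCacheSketch class: an entry is guaranteed to stay only while its key is strongly reachable elsewhere. When exactly an unreachable key's entry disappears depends on the garbage collector, so the sketch only checks the guaranteed half.

```java
import java.util.Map;
import java.util.WeakHashMap;

final class WeakCacheSketch {
    // The entry must survive a GC hint while the key is still strongly referenced.
    static boolean entrySurvivesWhileKeyIsReachable() {
        Map<Object, String> cache = new WeakHashMap<>();
        Object key = new Object();
        cache.put(key, "cached value");
        System.gc(); // only a hint; the JVM may ignore it
        // `key` is still used below, so it is strongly reachable and the entry is kept.
        return "cached value".equals(cache.get(key));
    }
}
```

Once the last strong reference to the key is dropped, the entry becomes eligible for removal, which is what makes the map usable as a self-cleaning cache.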