Kubernetes Cloud Platform Deployment

Preparation

1. Node planning

| IP | Hostname | Role |
|---|---|---|
| 10.30.59.221 | master | master node |
| 10.30.59.222 | node | worker node |

Install CentOS 7.5.1804 on all nodes and make sure they have network connectivity.

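Implementation

1. Base environment configuration

(1) Configure the yum repository

On all nodes, upload the provided archive K8S.tar.gz to the /root directory and extract it.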

[root@master ~]# tar -zxvf K8S.tar.gz

Configure a local yum repository on all nodes.

[root@master ~]# vi /etc/yum.repos.d/local.repo

[kubernetes]

name=kubernetes

baseurl=file:///root/Kubernetes

gpgcheck=0

enabled=1
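
The same repository file can also be written without opening an editor. The following is a minimal sketch, assuming the archive was extracted to /root/Kubernetes as shown above; the function name write_local_repo and its optional path argument are illustrative and not part of the provided package.

```shell
# write_local_repo is a hypothetical helper. It writes the repository
# definition shown above to the given path
# (default: /etc/yum.repos.d/local.repo).
write_local_repo() {
    local repo_file="${1:-/etc/yum.repos.d/local.repo}"
    cat > "$repo_file" <<'EOF'
[kubernetes]
name=kubernetes
baseurl=file:///root/Kubernetes
gpgcheck=0
enabled=1
EOF
}

# Usage on each node (as root):
#   write_local_repo
#   yum clean all && yum repolist
```

Passing a different path as the first argument makes it possible to inspect the generated file before placing it under /etc/yum.repos.d.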

(2) Upgrade the system kernel

Upgrade the system kernel on all nodes.

[root@master ~]# yum upgrade -y

(3) Configure host name mapping

[root@master ~]# vi /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

10.30.59.221 master

10.30.59.222 node
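
When this step is scripted across several nodes, it helps to avoid appending the same mapping twice. A minimal sketch, assuming simple host names without regular-expression metacharacters; add_host_entry is a hypothetical helper name:

```shell
# add_host_entry is a hypothetical helper. It appends "IP NAME" to a hosts
# file only when no existing line already maps that name.
add_host_entry() {
    local ip="$1" name="$2" hosts_file="${3:-/etc/hosts}"
    # Match the name as a whole word after whitespace, up to whitespace or end of line.
    if ! grep -qE "[[:space:]]${name}([[:space:]]|\$)" "$hosts_file"; then
        printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
    fi
}

# Usage on each node (as root):
#   add_host_entry 10.30.59.221 master
#   add_host_entry 10.30.59.222 node
```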

(4) Configure the firewall and SELinux

[root@master ~]# systemctl stop firewalld && systemctl disable firewalld

[root@master ~]# iptables -F

[root@master ~]# iptables -X

[root@master ~]# iptables -Z

[root@master ~]# iptables-save

[root@master ~]# sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config

[root@master ~]# reboot

(5) Disable swap

[root@master ~]# swapoff -a

[root@master ~]# sed -i "s/\/dev\/mapper\/centos-swap/\#\/dev\/mapper\/centos-swap/g" /etc/fstab
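
The sed command above only matches a swap device named /dev/mapper/centos-swap. Where the swap device has a different name, a more general approach is to comment out every active fstab entry whose file system type is swap. A minimal sketch under that assumption; comment_out_swap is a hypothetical helper name:

```shell
# comment_out_swap is a hypothetical helper. It prefixes "#" to every
# non-comment line of an fstab file whose third field (fs type) is "swap".
comment_out_swap() {
    local fstab="${1:-/etc/fstab}" tmp
    tmp=$(mktemp) || return 1
    awk '!/^[[:space:]]*#/ && $3 == "swap" { print "#" $0; next } { print }' \
        "$fstab" > "$tmp" && cat "$tmp" > "$fstab"   # keep the original file's permissions
    rm -f "$tmp"
}

# Usage on each node (as root), after swapoff -a:
#   comment_out_swap
```

Lines that are already commented are left alone, so running the function twice does not add a second "#".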

(6) Configure time synchronization

On the master node:

[root@master ~]# yum install -y chrony

[root@master ~]# sed -i 's/^server/#&/' /etc/chrony.conf

[root@master ~]# cat >>/etc/chrony.conf<<EOF

> local stratum 10

> server master iburst

> allow all

> EOF

[root@master ~]# systemctl enable chronyd && systemctl restart chronyd

[root@master ~]# timedatectl set-ntp true

On the node:

[root@node ~]# yum install -y chrony

[root@node ~]# sed -i 's/^server/#&/' /etc/chrony.conf

[root@node ~]# echo server 10.30.59.221 iburst >> /etc/chrony.conf

[root@node ~]# systemctl enable chronyd && systemctl restart chronyd

[root@node ~]# chronyc sources

210 Number of sources = 1

MS Name/IP address Stratum Poll Reach LastRx Last sample

===============================================================================

^* master 11 6 17 13 -18us[ -21us] +/- 275ms

A line starting with ^* means the node is synchronized with that time source.
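
The check for the ^* marker can be automated. A minimal sketch that reads the output of chronyc sources on standard input; has_synced_source is a hypothetical helper name:

```shell
# has_synced_source is a hypothetical helper. It reads `chronyc sources`
# output on stdin and succeeds if at least one line starts with "^*",
# the marker for the currently selected, synchronized source.
has_synced_source() {
    grep -q '^\^\*'
}

# Usage on the node:
#   chronyc sources | has_synced_source && echo "time is synchronized"
```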

(7) Configure IP forwarding

On all nodes:

[root@master ~]# cat << EOF | tee /etc/sysctl.d/k8s.conf

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

[root@master ~]# modprobe br_netfilter

[root@master ~]# sysctl -p /etc/sysctl.d/k8s.conf

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1
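
Note that modprobe loads br_netfilter only for the current boot, and the two net.bridge settings depend on that module being loaded. A minimal sketch for loading it at every boot, assuming the systemd modules-load.d mechanism that CentOS 7 provides; persist_module is a hypothetical helper name:

```shell
# persist_module is a hypothetical helper. It writes a one-line file naming
# the module into a modules-load.d directory, which systemd-modules-load
# reads at boot (default: /etc/modules-load.d).
persist_module() {
    local module="$1" dir="${2:-/etc/modules-load.d}"
    mkdir -p "$dir" && printf '%s\n' "$module" > "$dir/$module.conf"
}

# Usage on each node (as root):
#   persist_module br_netfilter
```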

(8) Configure IPVS

On all nodes:

[root@master ~]# cat > /etc/sysconfig/modules/ipvs.modules <<EOF

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack_ipv4

EOF

[root@master ~]# chmod 755 /etc/sysconfig/modules/ipvs.modules

[root@master ~]# bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack_ipv4

Install the ipset and ipvsadm packages on all nodes.

[root@master ~]# yum install ipset ipvsadm -y
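
The lsmod | grep command above only prints what is loaded. To get an explicit list of anything that is missing, the output can be compared against the five modules named in ipvs.modules. A minimal sketch; missing_ipvs_modules is a hypothetical helper name:

```shell
# missing_ipvs_modules is a hypothetical helper. It reads `lsmod` output on
# stdin and prints each required module that does not appear in it.
missing_ipvs_modules() {
    local loaded module
    loaded=$(awk '{ print $1 }')     # first column of lsmod is the module name
    for module in ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack_ipv4; do
        printf '%s\n' "$loaded" | grep -qx "$module" || printf '%s\n' "$module"
    done
}

# Usage on each node:
#   lsmod | missing_ipvs_modules
# No output means all five modules are loaded.
```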

(9) Install Docker

On all nodes, install Docker, start the Docker engine, and enable it at boot.

[root@master ~]# yum install -y yum-utils device-mapper-persistent-data lvm2

[root@master ~]# yum install -y docker-ce-18.09.6 docker-ce-cli-18.09.6 containerd.io

[root@master ~]# mkdir -p /etc/docker

[root@master ~]# tee /etc/docker/daemon.json<<-'EOF'

{

"exec-opts": ["native.cgroupdriver=systemd"]

}

EOF

[root@master ~]# systemctl daemon-reload

[root@master ~]# systemctl restart docker

[root@master ~]# systemctl enable docker

[root@master ~]# docker info | grep Cgroup
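
The purpose of the last command is to confirm that Docker picked up the systemd cgroup driver set in daemon.json. A minimal sketch that turns this into a pass or fail check, assuming docker info prints a line of the form "Cgroup Driver: systemd"; cgroup_driver is a hypothetical helper name:

```shell
# cgroup_driver is a hypothetical helper. It reads `docker info` output on
# stdin and prints the value of the "Cgroup Driver" line.
cgroup_driver() {
    awk -F': *' '/Cgroup Driver/ { print $2; exit }'
}

# Usage on each node:
#   [ "$(docker info 2>/dev/null | cgroup_driver)" = "systemd" ] \
#       || echo "Docker is not using the systemd cgroup driver"
```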

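2. Install the Kubernetes cluster

(1) Install the tools

kubelet communicates with the rest of the cluster and manages the lifecycle of the pods and containers on its own node. kubeadm is the automated deployment tool for Kubernetes; it makes deployment easier and faster. kubectl is the command-line tool for managing a Kubernetes cluster.

On all nodes, install the Kubernetes tools and start kubelet.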

[root@master ~]# yum install -y kubelet-1.14.1 kubeadm-1.14.1 kubectl-1.14.1

[root@master ~]# systemctl enable kubelet && systemctl start kubelet

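// It is normal for kubelet to fail to start at this point; it starts successfully once the cluster has been initialized.

(2) Initialize the Kubernetes cluster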

[root@master ~]# kubeadm init --apiserver-advertise-address 10.30.59.221 --kubernetes-version="v1.14.1" --pod-network-cidr=10.16.0.0/16 --image-repository=registry.aliyuncs.com/google_containers

By default, kubectl looks for a config file in the .kube directory under the home directory of the user running it. Configure kubectl as follows.

[root@master ~]# mkdir -p $HOME/.kube

[root@master ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@master ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

Check the cluster status.

[root@master ~]# kubectl get cs

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-0 Healthy {"health":"true"}

(3) Configure the Kubernetes network

Log in to the master node and deploy the flannel network.

[root@master yaml]# kubectl apply -f kube-flannel.yaml

[root@master ~]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-8686dcc4fd-9lrf8 1/1 Running 0 39m

coredns-8686dcc4fd-j6rp8 1/1 Running 0 39m

etcd-master 1/1 Running 0 38m

kube-apiserver-master 1/1 Running 0 38m

kube-controller-manager-master 1/1 Running 0 38m

kube-flannel-ds-amd64-whptf 1/1 Running 0 98s

kube-proxy-8d82r 1/1 Running 0 39m

kube-scheduler-master 1/1 Running 0 38m

(4) Join the node to the cluster

Log in to the node and use the kubeadm join command to add it to the cluster.

[root@node ~]# kubeadm join 10.30.59.221:6443 --token kvbheb.bpw065esfk5r9btb --discovery-token-ca-cert-hash sha256:11db315c4dd80be10b59c0782f845c4a2d00fa56120bcf40fb1c8d9c7178e16e

Log in to the master node and check the status of each node.

[root@master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master Ready master 44m v1.14.1

node Ready <none> 47s v1.14.1
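
A newly joined node usually reports NotReady for a short time before it becomes Ready. A minimal sketch for listing the nodes that are not yet Ready, reading the kubectl get nodes output on standard input; not_ready_nodes is a hypothetical helper name:

```shell
# not_ready_nodes is a hypothetical helper. It reads `kubectl get nodes`
# output on stdin, skips the header line, and prints the name of every node
# whose STATUS column is not exactly "Ready". A cordoned node
# ("Ready,SchedulingDisabled") is therefore reported as well.
not_ready_nodes() {
    awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Usage on the master node:
#   kubectl get nodes | not_ready_nodes
# No output means every node is Ready.
```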

(5) Install the Dashboard

Use the kubectl create command to install the Dashboard.

[root@master ~]# kubectl create -f yaml/kubernetes-dashboard.yaml

Create the administrator account.

[root@master ~]# kubectl create -f yaml/dashboard-adminuser.yaml

Check the status of all pods.

[root@master ~]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-8686dcc4fd-9lrf8 1/1 Running 0 48m

coredns-8686dcc4fd-j6rp8 1/1 Running 0 48m

etcd-master 1/1 Running 0 47m

kube-apiserver-master 1/1 Running 0 47m

kube-controller-manager-master 1/1 Running 0 47m

kube-flannel-ds-amd64-w9prv 1/1 Running 0 4m36s

kube-flannel-ds-amd64-whptf 1/1 Running 0 10m

kube-proxy-4bsjx 1/1 Running 0 4m36s

kube-proxy-8d82r 1/1 Running 0 48m

kube-scheduler-master 1/1 Running 0 47m

kubernetes-dashboard-5f7b999d65-8pxkk 1/1 Running 0 115s

Look up the Dashboard port number.

[root@master ~]# kubectl get svc -n kube-system

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kube-dns ClusterIP 10.96.0.10 <none> 53/UDP,53/TCP,9153/TCP 49m

kubernetes-dashboard NodePort 10.108.102.164 <none> 443:30000/TCP 2m56s

The output shows that the kubernetes-dashboard service is exposed on port 30000. Open https://10.30.59.221:30000 in a browser to access it.
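
The exposed port is the number between the colon and the slash in the PORT(S) column. A minimal sketch that extracts it from the kubectl get svc output; node_port is a hypothetical helper name, and it only returns the first port mapping of the service:

```shell
# node_port is a hypothetical helper. It reads `kubectl get svc` output on
# stdin and prints the node port of the named service, taken from a
# PORT(S) value such as "443:30000/TCP".
node_port() {
    local service="$1"
    awk -v svc="$service" '$1 == svc { split($5, a, "[:/]"); print a[2]; exit }'
}

# Usage on the master node:
#   kubectl get svc -n kube-system | node_port kubernetes-dashboard
# kubectl can also print the value directly:
#   kubectl get svc kubernetes-dashboard -n kube-system \
#       -o jsonpath='{.spec.ports[0].nodePort}'
```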

Logging in to the Kubernetes Dashboard requires a token. Use the following command to obtain the authentication token.

[root@master ~]# kubectl -n kube-system describe secret $(kubectl -n kube-system get secret | grep kubernetes-dashboard-admin-token | awk '{print $1}')
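
The describe command prints the whole secret, including its name, type, and CA certificate size. To copy only the token value, the output can be filtered. A minimal sketch, assuming the token appears on a line beginning with "token:"; extract_token is a hypothetical helper name:

```shell
# extract_token is a hypothetical helper. It reads `kubectl describe secret`
# output on stdin and prints only the value that follows "token:".
extract_token() {
    awk '$1 == "token:" { print $2; exit }'
}

# Usage on the master node:
#   kubectl -n kube-system describe secret \
#       $(kubectl -n kube-system get secret | grep kubernetes-dashboard-admin-token | awk '{print $1}') \
#       | extract_token
```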

Enter the token in the browser; after authentication you are taken to the Kubernetes console.

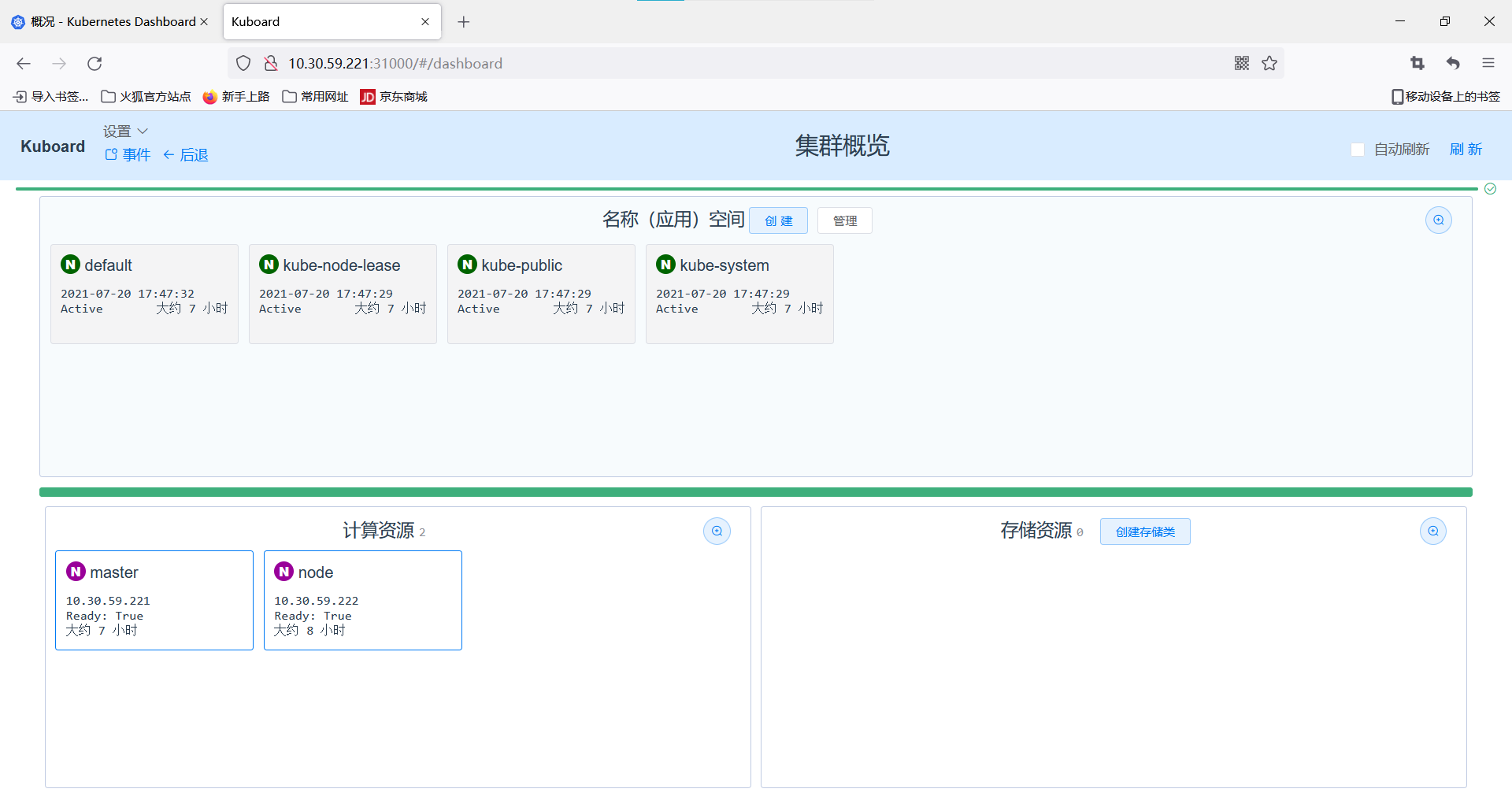

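(6) Configure Kuboard

Kuboard is a free graphical management tool for Kubernetes that aims to help users get microservices running on Kubernetes quickly.

Log in to the master node and deploy Kuboard using the kuboard.yaml file.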

[root@master ~]# kubectl create -f yaml/kuboard.yaml

deployment.apps/kuboard created

service/kuboard created

serviceaccount/kuboard-user created

clusterrolebinding.rbac.authorization.k8s.io/kuboard-user created

serviceaccount/kuboard-viewer created

clusterrolebinding.rbac.authorization.k8s.io/kuboard-viewer created

clusterrolebinding.rbac.authorization.k8s.io/kuboard-viewer-node created

clusterrolebinding.rbac.authorization.k8s.io/kuboard-viewer-pvp created

ingress.extensions/kuboard created

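Open http://10.30.59.221:31000 in a browser to reach the Kuboard login page.

The Kuboard console shows an overview of the cluster. At this point the Kubernetes container cloud platform is fully deployed.

3. Configure the Kubernetes cluster

(1) Enable IPVS

Log in to the master node and edit the config.conf entry in the kube-system/kube-proxy ConfigMap, setting mode to "ipvs".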

[root@master ~]# kubectl edit cm kube-proxy -n kube-system

ipvs:

excludeCIDRs: null

minSyncPeriod: 0s

scheduler: ""

syncPeriod: 30s

kind: KubeProxyConfiguration

metricsBindAddress: 127.0.0.1:10249

mode: "ipvs" # change this line

nodePortAddresses: null

oomScoreAdj: -999

portRange: ""

resourceContainer: /kube-proxy

udpIdleTimeout: 250ms
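
The same change can be made without an interactive editor by rewriting the mode line in the exported ConfigMap and applying the result. This is only a sketch: it assumes that kubectl get -o yaml renders config.conf as a multi-line block, so that the mode line appears on a line of its own. set_ipvs_mode is a hypothetical helper name.

```shell
# set_ipvs_mode is a hypothetical helper. It reads YAML on stdin and rewrites
# a line of the form `mode: "<value>"` to `mode: "ipvs"`, keeping the
# original indentation. All other lines pass through unchanged.
set_ipvs_mode() {
    sed 's/^\([[:space:]]*mode:\)[[:space:]]*"[a-z]*"/\1 "ipvs"/'
}

# Usage on the master node (review the output before applying it):
#   kubectl get cm kube-proxy -n kube-system -o yaml | set_ipvs_mode
#   kubectl get cm kube-proxy -n kube-system -o yaml | set_ipvs_mode | kubectl apply -f -
```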

(2) Restart kube-proxy

[root@master ~]# kubectl get pod -n kube-system | grep kube-proxy | awk '{system("kubectl delete pod "$1" -n kube-system")}'

Because the kube-proxy configuration was changed through the ConfigMap, nodes added later will use IPVS mode directly. Check the logs.
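
Besides the logs, the IPVS table itself can be inspected with the ipvsadm tool installed earlier. A minimal sketch, assuming that kube-proxy in IPVS mode creates one virtual service per Service port and that ipvsadm -Ln lists each of them on a line starting with TCP or UDP; ipvs_service_count is a hypothetical helper name:

```shell
# ipvs_service_count is a hypothetical helper. It reads `ipvsadm -Ln` output
# on stdin and prints the number of virtual services, that is, the lines
# that begin with a protocol name.
ipvs_service_count() {
    grep -cE '^(TCP|UDP)[[:space:]]'
}

# Usage on any node (as root):
#   ipvsadm -Ln | ipvs_service_count
# A count of 0 suggests kube-proxy is not running in IPVS mode on that node.
```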