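- Install kubebuilder

- Create the project

- Write the business logic

- Test

1. Install kubebuilder

kubebuilder and operator-sdk are two frameworks that wrap CRD-based development one level further. They are largely alike: both build their controller logic on the controller-runtime project, and both help developers create CRDs and generate controller scaffolding. They differ in how the CRD resources are created.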

Installation

Requirements:

Prerequisites

- go version v1.15+ (kubebuilder v3.0 < v3.1).

- go version v1.16+ (kubebuilder v3.1 < v3.3).

- go version v1.17+ (kubebuilder v3.3+).

- docker version 17.03+.

- kubectl version v1.11.3+.

- Access to a Kubernetes v1.11.3+ cluster.

Download and install

[root@master1 ~]# go env GOOS GOARCH

linux

amd64

[root@master1 ~]# wget https://github.com/kubernetes-sigs/kubebuilder/releases/download/v3.2.0/kubebuilder_linux_amd64

[root@master1 ~]# mv kubebuilder_linux_amd64 kubebuilder && chmod +x kubebuilder

[root@master1 ~]# mv kubebuilder /usr/local/bin/

[root@master1 ~]# kubebuilder version

Version: main.version{KubeBuilderVersion:"3.2.0", KubernetesVendor:"1.22.1", GitCommit:"b7a730c84495122a14a0faff95e9e9615fffbfc5", BuildDate:"2021-10-29T18:32:16Z", GoOs:"linux", GoArch:"amd64"}

2. Create the project

2.1 Target CRD resource

Suppose we want to create an etcd cluster from a custom resource like the following:

apiVersion: etcd.fuyu.io/v1alpha1

kind: EtcdCluster

metadata:

  name: demo

spec:

  size: 3 # number of replicas

  image: cnych/etcd:v3.4.13 # container image

2.2 Initialize the project

mkdir -p gitee.com/fym89/etcd-operator && cd gitee.com/fym89/etcd-operator

export GO111MODULE=on

export GOPROXY=https://goproxy.cn

kubebuilder init --domain fuyu.io --owner fuyu --repo gitee.com/fym89/etcd-operator

2.3 Add the API

kubebuilder create api --group etcd --version v1alpha1 --kind EtcdCluster --resource=true --controller=true

附:把linux服务器上的项目复制到本地windows的Goland编辑开发

参考附:Goland配置远程连接项目篇,地址:

https://www.yuque.com/docs/share/3416886a-96d0-4787-bb9d-9f04d32009f9?#

1、打开本地windows的Goland,新建项目,取名为etcdOperator;

2、工具 —> 部署 —> 配置,连接 —> 根路径(R)为远程服务器的项目目录,映射 —>部署路径(E)为 /。

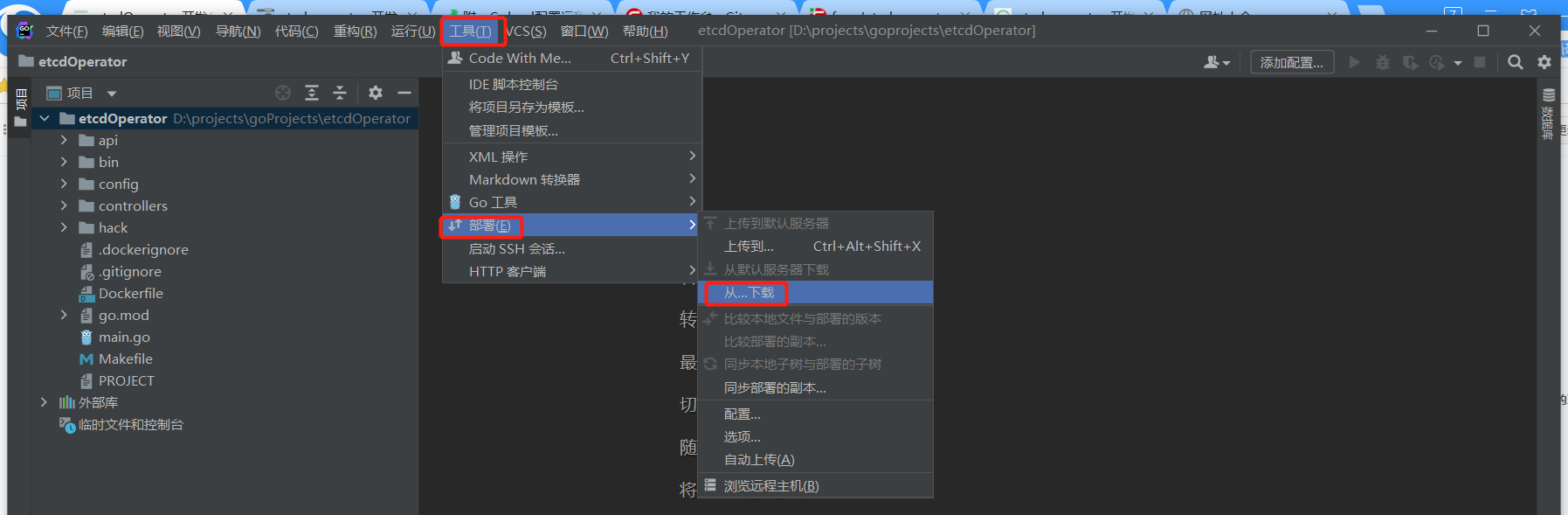

3、从虚拟机A下载项目

若跳出文件覆盖提示,直接选择 总是(A).

3. Write the business logic

3.1 Basic changes

3.1.1 Edit config/samples/etcd_v1alpha1_etcdcluster.yaml

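apiVersion: etcd.fuyu.io/v1alpha1

kind: EtcdCluster

metadata:

  name: etcdcluster-sample

spec:

  size: 3 # number of replicas (should be odd; a validation check can be added later)

  image: cnych/etcd:v3.4.13 # container image

This keeps the sample in the same format as the target CRD manifest from section 2.1.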
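The comment on size notes that the value should be odd and that a check could be added later. A minimal sketch of such a check follows, using only the standard library. The function name validateSize and its error messages are hypothetical and not part of the generated project; where to call it (for example at the start of Reconcile or in a validating webhook) is left open.

```go
package main

import (
	"errors"
	"fmt"
)

// validateSize is a hypothetical helper: it rejects non-positive sizes and
// even sizes, since an odd member count is the usual recommendation for etcd.
func validateSize(size int32) error {
	if size < 1 {
		return errors.New("size must be at least 1")
	}
	if size%2 == 0 {
		return fmt.Errorf("size %d is even; use an odd number of members", size)
	}
	return nil
}

func main() {
	// Try a valid size, an even size and an invalid size.
	for _, n := range []int32{3, 4, 0} {
		fmt.Printf("size=%d -> %v\n", n, validateSize(n))
	}
}
```

Returning an error instead of silently rounding keeps the decision with the user who wrote the manifest.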

3.1.2 Add Log initialization in main.go

if err = (&controllers.EtcdClusterReconciler{

Client: mgr.GetClient(),

Log: ctrl.Log.WithName("controllers").WithName("EtcdCluster"), // initialize the logger

Scheme: mgr.GetScheme(),

}).SetupWithManager(mgr); err != nil {

setupLog.Error(err, "unable to create controller", "controller", "EtcdCluster")

os.Exit(1)

}

3.1.3 Edit api/v1alpha1/etcdcluster_types.go

Change 1

Add the fields to the EtcdClusterSpec struct, with JSON tags that match the keys of the target CRD manifest.

type EtcdClusterSpec struct {

// INSERT ADDITIONAL SPEC FIELDS - desired state of cluster

// Important: Run "make" to regenerate code after modifying this file

// Size is the desired number of etcd members; Image is the etcd container image.

Size *int32 `json:"size"`

Image string `json:"image"`

}
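To see how the json tags tie the manifest keys to the struct fields, here is a small standalone sketch that decodes the JSON form of a spec block with the standard library. The decodeSpec helper is hypothetical and exists only for this illustration; in the real operator the decoding is done by the client libraries.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// EtcdClusterSpec repeats the two fields from the tutorial's types file.
type EtcdClusterSpec struct {
	Size  *int32 `json:"size"`
	Image string `json:"image"`
}

// decodeSpec (hypothetical helper) parses the JSON form of a spec block.
func decodeSpec(raw string) (EtcdClusterSpec, error) {
	var spec EtcdClusterSpec
	err := json.Unmarshal([]byte(raw), &spec)
	return spec, err
}

func main() {
	spec, err := decodeSpec(`{"size": 3, "image": "cnych/etcd:v3.4.13"}`)
	if err != nil {
		panic(err)
	}
	fmt.Println(*spec.Size, spec.Image)

	// When size is omitted, the pointer stays nil instead of becoming 0,
	// so code that dereferences Size should check for nil first.
	partial, err := decodeSpec(`{"image": "cnych/etcd:v3.4.13"}`)
	if err != nil {
		panic(err)
	}
	fmt.Println(partial.Size == nil)
}
```

Using *int32 rather than int32 lets the code tell "not set" apart from an explicit 0, and it matches the type of the StatefulSet Replicas field that the value is later assigned to.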

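Change 2

Add custom columns. As an example, the svc listing below has columns such as NAME, TYPE, CLUSTER-IP and AGE. How do we define a custom column of our own?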

[root@master1 ~]# kubectl get svc -n test3

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

nginx-svc NodePort 10.105.221.68 <none> 80:31450/TCP 31d

nginx-svc11 NodePort 10.99.41.47 <none> 8080:30886/TCP 30d

The values come from the object itself, as shown by kubectl get svc <name> -o yaml:

[root@master1 home]# kubectl get svc nginx-svc -n test3 -o yaml | grep clusterIP

clusterIP: 10.105.221.68

[root@master1 home]#

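Back to the point: add your own columns after //+kubebuilder:object:root=true.

Priority: the default priority is 0, and such columns are shown by a plain kubectl get. Columns with priority=1 are shown only when -o wide is added.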

type EtcdClusterStatus struct {

// INSERT ADDITIONAL STATUS FIELD - define observed state of cluster

// Important: Run "make" to regenerate code after modifying this file

}

//+kubebuilder:object:root=true

//+kubebuilder:subresource:status

//+kubebuilder:printcolumn:name="Image",type="string",JSONPath=".spec.image", description="The Docker Image of EtcdCluster"

//+kubebuilder:printcolumn:name="Size", type="integer",JSONPath=".spec.size"

//+kubebuilder:printcolumn:name="Age", type="date",priority=1,JSONPath=".metadata.creationTimestamp"

Example result:

[root@master1 home]# kubectl get EtcdCluster -n test1

NAME IMAGE SIZE

etcdcluster-sample cnych/etcd:v3.4.13 3

[root@master1 home]#

[root@master1 home]# kubectl get EtcdCluster -n test1 -o wide

NAME IMAGE SIZE AGE

etcdcluster-sample cnych/etcd:v3.4.13 3 8s

[root@master1 home]#

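The printcolumn marker has several options; we use only some of them here:

- name: the header of the new column, printed by kubectl in the header row;

- type: the data type of the value to print; valid types are integer, number, string, boolean and date;

- JSONPath: the path to the value to print. In our example, image is a property under spec, so we use .spec.image. Note that JSONPath refers to the generated JSON CRD, not to the local Go types.

- description: a human-readable string describing the column. It ends up as the description field of the column in the generated CRD and does not appear in the kubectl get table.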

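After editing this file, upload the project to the remote virtual machine and build it there, then download it again and continue editing locally.

1.1) In GoLand, select the project directory, then Tools -> Deployment -> Upload to…

1.2) On remote virtual machine A, go to the project root directory and run make;

1.3) Tools -> Deployment -> Download from…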

3.1.4 Edit controllers/etcdcluster_controller.go

Common business logic: the reconciliation part, that is, the Reconcile function

/*

Copyright 2022 fuyu.

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

*/

package controllers

import (

"context"

etcdv1alpha1 "gitee.com/fym89/etcd-operator/api/v1alpha1"

"github.com/go-logr/logr"

appsv1 "k8s.io/api/apps/v1"

corev1 "k8s.io/api/core/v1"

// v1 "k8s.io/apimachinery/pkg/apis/meta/v1"

"k8s.io/apimachinery/pkg/runtime"

ctrl "sigs.k8s.io/controller-runtime"

"sigs.k8s.io/controller-runtime/pkg/client"

"sigs.k8s.io/controller-runtime/pkg/controller/controllerutil"

)

// EtcdClusterReconciler reconciles a EtcdCluster object

type EtcdClusterReconciler struct {

client.Client

Log logr.Logger

Scheme *runtime.Scheme

}

//+kubebuilder:rbac:groups=apps,resources=statefulsets,verbs=get;list;watch;create;update;patch;delete

//+kubebuilder:rbac:groups=core,resources=services,verbs=get;list;watch;create;update;patch;delete

//+kubebuilder:rbac:groups=etcd.fuyu.io,resources=etcdclusters,verbs=get;list;watch;create;update;patch;delete

//+kubebuilder:rbac:groups=etcd.fuyu.io,resources=etcdclusters/status,verbs=get;update;patch

//+kubebuilder:rbac:groups=etcd.fuyu.io,resources=etcdclusters/finalizers,verbs=update

// Reconcile is part of the main kubernetes reconciliation loop which aims to

// move the current state of the cluster closer to the desired state.

// TODO(user): Modify the Reconcile function to compare the state specified by

// the EtcdCluster object against the actual cluster state, and then

// perform operations to make the cluster state reflect the state specified by

// the user.

//

// For more details, check Reconcile and its Result here:

// - https://pkg.go.dev/sigs.k8s.io/controller-runtime@v0.10.0/pkg/reconcile

func (r *EtcdClusterReconciler) Reconcile(ctx context.Context, req ctrl.Request) (ctrl.Result, error) {

log := r.Log.WithValues("etcdcluster", req.NamespacedName)

// First fetch the EtcdCluster instance.

var etcdCluster etcdv1alpha1.EtcdCluster

if err := r.Get(ctx, req.NamespacedName, &etcdCluster); err != nil {

// If the EtcdCluster has been deleted, ignore the not-found error.

return ctrl.Result{}, client.IgnoreNotFound(err)

}

// The EtcdCluster instance has been fetched.

// Create or update the matching StatefulSet and Service; CreateOrUpdate does the reconciliation.

// CreateOrUpdate Service

var svc corev1.Service

svc.Name = etcdCluster.Name

svc.Namespace = etcdCluster.Namespace

op, err := ctrl.CreateOrUpdate(ctx, r.Client, &svc, func() error {

// The mutate function must set the desired state here; in practice it assembles the Service.

MutateHeadlessSvc(&etcdCluster, &svc)

return controllerutil.SetControllerReference(&etcdCluster, &svc, r.Scheme)

})

if err != nil {

return ctrl.Result{}, err

}

log.Info("CreateOrUpdate Result", "Service", op)

// CreateOrUpdate StatefulSet

var sts appsv1.StatefulSet

sts.Name = etcdCluster.Name

sts.Namespace = etcdCluster.Namespace

op, err = ctrl.CreateOrUpdate(ctx, r.Client, &sts, func() error {

// The mutate function must set the desired state here; in practice it assembles the StatefulSet.

MutateStatefulSet(&etcdCluster, &sts)

return controllerutil.SetControllerReference(&etcdCluster, &sts, r.Scheme)

})

if err != nil {

return ctrl.Result{}, err

}

log.Info("CreateOrUpdate Result", "StatefulSet", op)

return ctrl.Result{}, nil

}

// SetupWithManager sets up the controller with the Manager.

func (r *EtcdClusterReconciler) SetupWithManager(mgr ctrl.Manager) error {

return ctrl.NewControllerManagedBy(mgr).

For(&etcdv1alpha1.EtcdCluster{}).

Owns(&appsv1.StatefulSet{}).

Owns(&corev1.Service{}).

Complete(r)

}
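The create-or-update pattern used in Reconcile can be illustrated without a cluster. The following is a simplified in-memory model, not controller-runtime's implementation: the object and store types and the createOrUpdate function are hypothetical stand-ins, and only the result strings "created", "updated" and "unchanged" are borrowed from controllerutil's OperationResult values.

```go
package main

import "fmt"

// object is a stand-in for a Kubernetes resource with just enough fields.
type object struct {
	Name     string
	Replicas int32
	Image    string
}

// store is a hypothetical in-memory replacement for the API server.
type store map[string]object

// createOrUpdate loads the current object (if any) into obj, runs mutate to
// apply the desired state, and writes back only when something changed.
func createOrUpdate(s store, obj *object, mutate func() error) (string, error) {
	existing, found := s[obj.Name]
	if !found {
		if err := mutate(); err != nil {
			return "unchanged", err
		}
		s[obj.Name] = *obj
		return "created", nil
	}
	*obj = existing // start from the live state, as a real Get would
	if err := mutate(); err != nil {
		return "unchanged", err
	}
	if existing == *obj {
		return "unchanged", nil
	}
	s[obj.Name] = *obj
	return "updated", nil
}

func main() {
	s := store{}
	desired := int32(3)
	reconcile := func() string {
		sts := object{Name: "etcdcluster-sample"}
		op, err := createOrUpdate(s, &sts, func() error {
			sts.Replicas = desired
			sts.Image = "cnych/etcd:v3.4.13"
			return nil
		})
		if err != nil {
			panic(err)
		}
		return op
	}
	fmt.Println(reconcile()) // first pass: nothing stored yet
	fmt.Println(reconcile()) // second pass: desired state already matches
	desired = 5
	fmt.Println(reconcile()) // desired state changed
}
```

Because the mutate function only states the desired result, running the same reconcile repeatedly is harmless, which is what lets the controller be triggered again whenever the owned Service or StatefulSet changes.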

3.2 Core code

3.2.1 Edit controllers/resources.go

Resource-specific logic: implement the MutateHeadlessSvc and MutateStatefulSet functions.

package controllers

import (

"gitee.com/fym89/etcd-operator/api/v1alpha1"

appsv1 "k8s.io/api/apps/v1"

corev1 "k8s.io/api/core/v1"

"k8s.io/apimachinery/pkg/api/resource"

metav1 "k8s.io/apimachinery/pkg/apis/meta/v1"

"strconv"

)

var (

EtcdClusterLabelKey = "etcd.fuyu.io/cluster"

EtcdClusterCommonLabelKey = "app"

EtcdDataDirName = "datadir"

)

func MutateHeadlessSvc(cluster *v1alpha1.EtcdCluster, svc *corev1.Service) {

svc.Labels = map[string]string{

EtcdClusterCommonLabelKey: "etcd",

}

svc.Spec = corev1.ServiceSpec{

ClusterIP: corev1.ClusterIPNone,

Selector: map[string]string{

EtcdClusterLabelKey: cluster.Name,

},

Ports: []corev1.ServicePort{corev1.ServicePort{

Name: "peer",

Port: 2380,

}, corev1.ServicePort{

Name: "client",

Port: 2379,

}},

}

}

func MutateStatefulSet(cluster *v1alpha1.EtcdCluster, sts *appsv1.StatefulSet) {

sts.Labels = map[string]string{

EtcdClusterCommonLabelKey: "etcd",

}

sts.Spec = appsv1.StatefulSetSpec{

Replicas: cluster.Spec.Size,

ServiceName: cluster.Name,

Selector: &metav1.LabelSelector{MatchLabels: map[string]string{

EtcdClusterLabelKey: cluster.Name,

}},

Template: corev1.PodTemplateSpec{

ObjectMeta: metav1.ObjectMeta{

Labels: map[string]string{

EtcdClusterLabelKey: cluster.Name,

EtcdClusterCommonLabelKey: "etcd",

},

},

Spec: corev1.PodSpec{

Containers: newContainers(cluster),

},

},

VolumeClaimTemplates: []corev1.PersistentVolumeClaim{

corev1.PersistentVolumeClaim{

ObjectMeta: metav1.ObjectMeta{

Name: EtcdDataDirName,

},

Spec: corev1.PersistentVolumeClaimSpec{

AccessModes: []corev1.PersistentVolumeAccessMode{

corev1.ReadWriteOnce,

},

Resources: corev1.ResourceRequirements{

Requests: corev1.ResourceList{

corev1.ResourceStorage: resource.MustParse("1Gi"),

},

},

},

},

},

}

}

func newContainers(cluster *v1alpha1.EtcdCluster) []corev1.Container {

return []corev1.Container{

corev1.Container{

Name: "etcd",

Image: cluster.Spec.Image,

ImagePullPolicy: corev1.PullIfNotPresent,

Ports: []corev1.ContainerPort{

corev1.ContainerPort{

Name: "peer",

ContainerPort: 2380,

},

corev1.ContainerPort{

Name: "client",

ContainerPort: 2379,

},

},

Env: []corev1.EnvVar{

corev1.EnvVar{

Name: "INITIAL_CLUSTER_SIZE",

Value: strconv.Itoa(int(*cluster.Spec.Size)),

},

corev1.EnvVar{

Name: "SET_NAME",

Value: cluster.Name,

},

corev1.EnvVar{

Name: "MY_NAMESPACE",

ValueFrom: &corev1.EnvVarSource{

FieldRef: &corev1.ObjectFieldSelector{

FieldPath: "metadata.namespace",

},

},

},

corev1.EnvVar{

Name: "POD_IP",

ValueFrom: &corev1.EnvVarSource{

FieldRef: &corev1.ObjectFieldSelector{

FieldPath: "status.podIP",

},

},

},

},

VolumeMounts: []corev1.VolumeMount{

corev1.VolumeMount{

Name: EtcdDataDirName,

MountPath: "/var/run/etcd",

},

},

Command: []string{

"/bin/sh", "-ec",

"HOSTNAME=$(hostname)\n\n ETCDCTL_API=3\n\n eps() {\n EPS=\"\"\n for i in $(seq 0 $((${INITIAL_CLUSTER_SIZE} - 1))); do\n EPS=\"${EPS}${EPS:+,}http://${SET_NAME}-${i}.${SET_NAME}.${MY_NAMESPACE}.svc.cluster.local:2379\"\n done\n echo ${EPS}\n }\n\n member_hash() {\n etcdctl member list | grep -w \"$HOSTNAME\" | awk '{ print $1}' | awk -F \",\" '{ print $1}'\n }\n\n initial_peers() {\n PEERS=\"\"\n for i in $(seq 0 $((${INITIAL_CLUSTER_SIZE} - 1))); do\n PEERS=\"${PEERS}${PEERS:+,}${SET_NAME}-${i}=http://${SET_NAME}-${i}.${SET_NAME}.${MY_NAMESPACE}.svc.cluster.local:2380\"\n done\n echo ${PEERS}\n }\n\n # etcd-SET_ID\n SET_ID=${HOSTNAME##*-}\n\n # adding a new member to existing cluster (assuming all initial pods are available)\n if [ \"${SET_ID}\" -ge ${INITIAL_CLUSTER_SIZE} ]; then\n # export ETCDCTL_ENDPOINTS=$(eps)\n # member already added?\n\n MEMBER_HASH=$(member_hash)\n if [ -n \"${MEMBER_HASH}\" ]; then\n # the member hash exists but for some reason etcd failed\n # as the datadir has not be created, we can remove the member\n # and retrieve new hash\n echo \"Remove member ${MEMBER_HASH}\"\n etcdctl --endpoints=$(eps) member remove ${MEMBER_HASH}\n fi\n\n echo \"Adding new member\"\n\n etcdctl member --endpoints=$(eps) add ${HOSTNAME} --peer-urls=http://${HOSTNAME}.${SET_NAME}.${MY_NAMESPACE}.svc.cluster.local:2380 | grep \"^ETCD_\" > /var/run/etcd/new_member_envs\n\n if [ $? -ne 0 ]; then\n echo \"member add ${HOSTNAME} error.\"\n rm -f /var/run/etcd/new_member_envs\n exit 1\n fi\n\n echo \"==> Loading env vars of existing cluster...\"\n sed -ie \"s/^/export /\" /var/run/etcd/new_member_envs\n cat /var/run/etcd/new_member_envs\n . /var/run/etcd/new_member_envs\n\n exec etcd --listen-peer-urls http://${POD_IP}:2380 \\\n --listen-client-urls http://${POD_IP}:2379,http://127.0.0.1:2379 \\\n --advertise-client-urls http://${HOSTNAME}.${SET_NAME}.${MY_NAMESPACE}.svc.cluster.local:2379 \\\n --data-dir /var/run/etcd/default.etcd\n fi\n\n for i in $(seq 0 $((${INITIAL_CLUSTER_SIZE} - 1))); do\n while true; do\n echo \"Waiting for ${SET_NAME}-${i}.${SET_NAME}.${MY_NAMESPACE}.svc.cluster.local to come up\"\n ping -W 1 -c 1 ${SET_NAME}-${i}.${SET_NAME}.${MY_NAMESPACE}.svc.cluster.local > /dev/null && break\n sleep 1s\n done\n done\n\n echo \"join member ${HOSTNAME}\"\n # join member\n exec etcd --name ${HOSTNAME} \\\n --initial-advertise-peer-urls http://${HOSTNAME}.${SET_NAME}.${MY_NAMESPACE}.svc.cluster.local:2380 \\\n --listen-peer-urls http://${POD_IP}:2380 \\\n --listen-client-urls http://${POD_IP}:2379,http://127.0.0.1:2379 \\\n --advertise-client-urls http://${HOSTNAME}.${SET_NAME}.${MY_NAMESPACE}.svc.cluster.local:2379 \\\n --initial-cluster-token etcd-cluster-1 \\\n --data-dir /var/run/etcd/default.etcd \\\n --initial-cluster $(initial_peers) \\\n --initial-cluster-state new",

},

Lifecycle: &corev1.Lifecycle{

PreStop: &corev1.Handler{

Exec: &corev1.ExecAction{

Command: []string{

"/bin/sh", "-ec",

"HOSTNAME=$(hostname)\n\n member_hash() {\n etcdctl member list | grep -w \"$HOSTNAME\" | awk '{ print $1}' | awk -F \",\" '{ print $1}'\n }\n\n eps() {\n EPS=\"\"\n for i in $(seq 0 $((${INITIAL_CLUSTER_SIZE} - 1))); do\n EPS=\"${EPS}${EPS:+,}http://${SET_NAME}-${i}.${SET_NAME}.${MY_NAMESPACE}.svc.cluster.local:2379\"\n done\n echo ${EPS}\n }\n\n export ETCDCTL_ENDPOINTS=$(eps)\n SET_ID=${HOSTNAME##*-}\n\n # Removing member from cluster\n if [ \"${SET_ID}\" -ge ${INITIAL_CLUSTER_SIZE} ]; then\n echo \"Removing ${HOSTNAME} from etcd cluster\"\n etcdctl member remove $(member_hash)\n if [ $? -eq 0 ]; then\n # Remove everything otherwise the cluster will no longer scale-up\n rm -rf /var/run/etcd/*\n fi\n fi",

},

},

},

},

},

}

}
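The startup script embedded in Command does two things that are easy to miss inside a long shell string: it builds the --initial-cluster peer list from SET_NAME, MY_NAMESPACE and INITIAL_CLUSTER_SIZE, and it uses the Pod's ordinal to decide whether to bootstrap a new cluster or join an existing one. The sketch below restates that logic in Go purely for illustration; the function names initialPeers, ordinal and joinsExisting are hypothetical and are not used by the operator.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// initialPeers builds the same string as the script's initial_peers():
// one "name=http://name.set.ns.svc.cluster.local:2380" entry per member.
func initialPeers(setName, namespace string, size int) string {
	peers := make([]string, 0, size)
	for i := 0; i < size; i++ {
		host := fmt.Sprintf("%s-%d", setName, i)
		peers = append(peers, fmt.Sprintf("%s=http://%s.%s.%s.svc.cluster.local:2380",
			host, host, setName, namespace))
	}
	return strings.Join(peers, ",")
}

// ordinal extracts the trailing index of a StatefulSet Pod name, which is
// what the shell expression ${HOSTNAME##*-} does.
func ordinal(hostname string) (int, error) {
	return strconv.Atoi(hostname[strings.LastIndex(hostname, "-")+1:])
}

// joinsExisting reports whether a Pod takes the "add member" branch: its
// ordinal is at or beyond the initial cluster size.
func joinsExisting(hostname string, initialSize int) (bool, error) {
	id, err := ordinal(hostname)
	if err != nil {
		return false, err
	}
	return id >= initialSize, nil
}

func main() {
	fmt.Println(initialPeers("etcdcluster-sample", "default", 3))
	for _, h := range []string{"etcdcluster-sample-2", "etcdcluster-sample-3"} {
		join, err := joinsExisting(h, 3)
		if err != nil {
			panic(err)
		}
		fmt.Printf("%s joins existing cluster: %v\n", h, join)
	}
}
```

With an initial size of 3, Pods 0 to 2 bootstrap the cluster together, while a Pod with ordinal 3 or higher is added to the running cluster as a new member.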

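4. Test

4.1 Upload the code

In GoLand, select the project directory, then go to Tools -> Deployment -> Upload to…

4.2 Build and install

4.3 Create an EtcdCluster resource to test

vim etcdOperator-crd.yaml

apiVersion: etcd.fuyu.io/v1alpha1

kind: EtcdCluster

metadata:

  name: etcdcluster-sample

spec:

  size: 3 # number of replicas (should be odd; a validation check can be added later)

  image: cnych/etcd:v3.4.13 # container image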

kubectl apply -f etcdOperator-crd.yaml

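4.4 Functional tests

4.4.1 Scale up and down

Run the scale commands below and check that the number of StatefulSet Pods grows and shrinks as expected. Scale up first, then down.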

kubectl scale sts etcdcluster-sample --replicas=5

kubectl scale sts etcdcluster-sample --replicas=3

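4.4.2 Automatic re-creation test

Manually delete a Pod and check whether it is re-created automatically;

Manually delete the Service and check whether it is re-created automatically;

Manually delete the StatefulSet and check whether it is re-created automatically;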

kubectl delete pod etcdcluster-sample-2

kubectl get pods

kubectl delete svc etcdcluster-sample

kubectl get svc

kubectl delete sts etcdcluster-sample

kubectl get sts
