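- 1. Set hostnames and the hosts file
- 2. Passwordless SSH across the cluster
- 3. Tune the cluster nodes
- 4. Upgrade the system kernel
- 5. Install Docker
- 6. Deploy the Master nodes
- 6.1 Deploy the etcd cluster
- 6.2 Issue the Master certificates
- 6.2.1 Node plan
- 6.2.2 Create the CA configuration
- 6.2.3 Create the root certificate signing request
- 6.2.4 Generate the CA certificate
- 6.2.5 Issue the kube-apiserver certificate
- 6.2.6 Generate the API server certificate
- 6.2.7 Create the kube-controller-manager certificate signing request
- 6.2.8 Generate the kube-controller-manager certificate
- 6.2.9 Create the kube-scheduler certificate signing request
- 6.2.10 Generate the kube-scheduler certificate
- 6.2.11 Create the kube-proxy certificate signing request
- 6.2.12 Generate the kube-proxy certificate
- 6.2.13 Issue the administrator user certificate
- 6.2.14 Generate the administrator certificate
- 6.2.15 Distribute the certificates
- 6.3 Deploy the Master nodes
- 6.4 Deploy the network plugin
- 6.5 Deploy cluster DNS
- 6.6 Test the cluster
- 7. Deploy the Worker nodes
- 8. Set cluster roles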

This guide deploys each Kubernetes component from binaries.

## 1. Set hostnames and the hosts file

Add the following entries to /etc/hosts on every node:

192.168.13.51 kubernetes-master-01 m1

192.168.13.52 kubernetes-master-02 m2

192.168.13.53 kubernetes-master-03 m3

192.168.13.54 kubernetes-node-01 n1

192.168.13.55 kubernetes-node-02 n2

192.168.13.56 kubernetes-node-03 n3

192.168.13.57 kubernetes-node-04 n4

192.168.13.58 kubernetes-node-05 n5

Then set the hostname on each node, running only the command that matches that node:

hostnamectl set-hostname kubernetes-master-01

hostnamectl set-hostname kubernetes-master-02

hostnamectl set-hostname kubernetes-master-03

hostnamectl set-hostname kubernetes-node-01

hostnamectl set-hostname kubernetes-node-02

hostnamectl set-hostname kubernetes-node-03

hostnamectl set-hostname kubernetes-node-04

hostnamectl set-hostname kubernetes-node-05
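Re-running a setup script should not duplicate hosts entries. The following is a minimal sketch of an idempotent append; `add_host` and `HOSTS_FILE` are hypothetical names introduced here, and the file defaults to a scratch file so the sketch can be run safely before pointing it at /etc/hosts.

```shell
#!/bin/sh
# Hypothetical helper: add a hosts entry only if its IP is not listed yet.
# HOSTS_FILE defaults to a scratch file; set HOSTS_FILE=/etc/hosts for real use.
HOSTS_FILE="${HOSTS_FILE:-$(mktemp)}"

add_host() {
    # $1 = IP address, $2 = full hostname, $3 = short alias
    touch "$HOSTS_FILE"
    if ! awk -v ip="$1" '$1 == ip { found = 1 } END { exit !found }' "$HOSTS_FILE"; then
        printf '%s %s %s\n' "$1" "$2" "$3" >> "$HOSTS_FILE"
    fi
}

add_host 192.168.13.51 kubernetes-master-01 m1
add_host 192.168.13.52 kubernetes-master-02 m2
add_host 192.168.13.53 kubernetes-master-03 m3
add_host 192.168.13.54 kubernetes-node-01 n1
add_host 192.168.13.55 kubernetes-node-02 n2
add_host 192.168.13.56 kubernetes-node-03 n3
add_host 192.168.13.57 kubernetes-node-04 n4
add_host 192.168.13.58 kubernetes-node-05 n5

cat "$HOSTS_FILE"
```

Calling `add_host` again with an IP that is already present leaves the file unchanged.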

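## 2. Passwordless SSH across the cluster

The loop below assumes root on kubernetes-master-01 can already log in to every other node with an SSH key (for example, a key created with ssh-keygen and distributed with ssh-copy-id). It then copies the hosts file to the remaining nodes.

[root@kubernetes-master-01 ~]# for i in m2 m3 n1 n2 n3 n4 n5; do scp /etc/hosts root@$i:/etc/hosts; done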
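The key distribution itself is not shown in this section. A possible sketch follows, assuming the short host aliases from the hosts file and the standard OpenSSH tools `ssh-keygen` and `ssh-copy-id`. The `run` wrapper, `DRY_RUN`, and `NODES` are hypothetical names; with `DRY_RUN=1` (the default here) the commands are only printed.

```shell
#!/bin/sh
# DRY_RUN=1 prints the commands instead of executing them (assumed default).
DRY_RUN="${DRY_RUN:-1}"
# Nodes to reach from the first master; adjust to your own inventory.
NODES="${NODES:-m2 m3 n1 n2 n3 n4 n5}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

setup_passwordless_ssh() {
    # Create a key pair once; -N '' means an empty passphrase.
    [ -f "$HOME/.ssh/id_rsa" ] || run ssh-keygen -t rsa -b 4096 -N '' -f "$HOME/.ssh/id_rsa"
    for node in $NODES; do
        # ssh-copy-id asks for the root password of each node once.
        run ssh-copy-id "root@$node"
        run scp /etc/hosts "root@$node:/etc/hosts"
    done
}

setup_passwordless_ssh
```

Run it with `DRY_RUN=0` once the printed commands look right.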

## 3. Tune the cluster nodes

[root@kubernetes-master-01 bash]# wget http://106.13.81.75/init.sh

# wget does not set the executable bit, so run the script through bash

[root@kubernetes-master-01 bash]# bash init.sh

## 4. Upgrade the system kernel

[root@kubernetes-master-01 bash]# curl -o kernel-lt-devel-4.4.233-1.el7.elrepo.x86_64.rpm http://106.13.81.75/kernel-lt-devel-4.4.233-1.el7.elrepo.x86_64.rpm

[root@kubernetes-master-01 bash]# curl -o kernel-lt-4.4.233-1.el7.elrepo.x86_64.rpm http://106.13.81.75/kernel-lt-4.4.233-1.el7.elrepo.x86_64.rpm

cd /opt

yum localinstall -y kernel-lt*

grub2-set-default 0 && grub2-mkconfig -o /etc/grub2.cfg

grubby --default-kernel

# Reboot into the new kernel

reboot

## 5. Install Docker

# Step 1: install the required system tools

sudo yum install -y yum-utils device-mapper-persistent-data lvm2

# Step 2: add the Docker CE repository

sudo yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# Step 3: point the repository at the Aliyun mirror

sudo sed -i 's+download.docker.com+mirrors.aliyun.com/docker-ce+' /etc/yum.repos.d/docker-ce.repo

# Step 4: refresh the yum cache

sudo yum makecache fast

# Step 5: install Docker

yum install docker-ce-19.03.12 -y

# Step 6: enable and start Docker

systemctl enable --now docker

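## 6. Deploy the Master nodes

A Master node runs etcd, the API server, the controller manager, the scheduler, kubelet, kubectl, and kube-proxy.

### 6.1 Deploy the etcd cluster

etcd serves as the database of the Kubernetes cluster.

- Install the certificate generation tool (cfssl)

```bash
# Download
wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64
wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

# Make the binaries executable
chmod +x cfssljson_linux-amd64
chmod +x cfssl_linux-amd64

# Rename them and move them to /usr/local/bin
mv cfssljson_linux-amd64 cfssljson
mv cfssl_linux-amd64 cfssl
mv cfssljson cfssl /usr/local/bin
```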


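#### 6.1.1 Create the cluster root certificate

> Architecturally, the most important parts of the cluster are etcd and the API server, so the certificates in the cluster are all issued for etcd and the API server.
>
> A root certificate is the basis of trust between the CA and its users: a user's digital certificate is valid only if it chains to a trusted root certificate. Technically, a certificate has three parts: the user's information, the user's public key, and the certificate signature. The CA handles approval, issuance, archiving, and revocation of certificates. Every certificate it issues carries the CA's digital signature, so nobody other than the CA can alter it undetected.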

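```bash
mkdir -p /opt/cert/ca

cat > /opt/cert/ca/ca-config.json <<EOF
{
  "signing": {
    "default": {
      "expiry": "8760h"
    },
    "profiles": {
      "kubernetes": {
        "usages": [
          "signing",
          "key encipherment",
          "server auth",
          "client auth"
        ],
        "expiry": "8760h"
      }
    }
  }
}
EOF
```

1. default is the default policy; it sets the default certificate lifetime to one year (8760h).

2. profiles defines usage scenarios. Only kubernetes is defined here, but several profiles can be declared, each with its own expiry, usages, and other parameters. A profile is selected later when a certificate is signed.

3. signing: the certificate may be used to sign other certificates, as the generated ca.pem does.

4. server auth: a client may use this CA to verify the certificate presented by a server.

5. client auth: a server may use this CA to verify the certificate presented by a client.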
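The expiry values are given in hours, which is easy to misread. The arithmetic below is a small sketch (the function name `hours_to_days` is made up for illustration) that converts the two values used in this guide, 8760h for the etcd CA profile and 87600h for the Kubernetes CA profile, into days.

```shell
#!/bin/sh
# Convert a cfssl "expiry" given in hours into whole days.
hours_to_days() {
    # Integer division is exact for the values below (multiples of 24).
    echo $(( $1 / 24 ))
}

echo "8760h  = $(hours_to_days 8760) days"    # one year
echo "87600h = $(hours_to_days 87600) days"   # ten years

# To inspect the lifetime of an issued certificate, openssl can print it:
#   openssl x509 -in etcd.pem -noout -dates
```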

#### 6.1.2 Create the root CA certificate signing request file

cat > /opt/cert/ca/ca-csr.json << EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names":[{

"C": "CN",

"ST": "ShangHai",

"L": "ShangHai"

}]

}

EOF

#### 6.1.3 Generate the CA certificate

[root@kubernetes-master-01 ca]# pwd

/opt/cert/ca

[root@kubernetes-master-01 ca]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

2022/03/25 11:35:09 [INFO] generating a new CA key and certificate from CSR

2022/03/25 11:35:09 [INFO] generate received request

2022/03/25 11:35:09 [INFO] received CSR

2022/03/25 11:35:09 [INFO] generating key: rsa-2048

2022/03/25 11:35:09 [INFO] encoded CSR

2022/03/25 11:35:09 [INFO] signed certificate with serial number 278279985013865953231953860064933218314938581534

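#### 6.1.4 Deploy the etcd cluster

etcd is a distributed key-value store built on Raft and developed by the CoreOS team. It is commonly used for service discovery, shared configuration, and concurrency control (leader election, distributed locks, and so on). Kubernetes stores its state and data in etcd.

##### 1. etcd cluster plan

| etcd node | IP |
|---|---|
| kubernetes-master-01 | 192.168.13.51 |
| kubernetes-master-02 | 192.168.13.52 |
| kubernetes-master-03 | 192.168.13.53 |

##### 2. Create the etcd certificate signing request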

mkdir -p /opt/cert/etcd

cd /opt/cert/etcd

cat > etcd-csr.json << EOF

{

"CN": "etcd",

"hosts": [

"127.0.0.1",

"192.168.13.51",

"192.168.13.52",

"192.168.13.53",

"192.168.13.54",

"192.168.13.55",

"192.168.13.56",

"192.168.13.57",

"192.168.13.58",

"192.168.13.59"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "ShangHai",

"L": "ShangHai"

}

]

}

EOF

##### 3. Generate the etcd certificate

[root@kubernetes-master-01 etcd]# cfssl gencert -ca=../ca/ca.pem -ca-key=../ca/ca-key.pem -config=../ca/ca-config.json -profile=kubernetes etcd-csr.json | cfssljson -bare etcd

2022/03/25 11:39:58 [INFO] generate received request

2022/03/25 11:39:58 [INFO] received CSR

2022/03/25 11:39:58 [INFO] generating key: rsa-2048

2022/03/25 11:39:59 [INFO] encoded CSR

2022/03/25 11:39:59 [INFO] signed certificate with serial number 32240788461966473615036737107650713841065904168

2022/03/25 11:39:59 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

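- Certificate command options

| Option | Description |
|---|---|
| gencert | Generate a new key and a signed certificate |
| -initca | Initialize a new CA |
| -ca | The CA certificate |
| -ca-key | The CA private key file |
| -config | The JSON file that holds the signing configuration (ca-config.json) |
| -profile | The profile in the -config file whose settings are used to generate the certificate |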
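Every non-CA certificate in this guide is produced with the same `cfssl gencert ... | cfssljson -bare` pattern, differing only in the component name and the CA directory. The sketch below only prints that command line so the pattern is visible; `gencert_cmd` and `CA_DIR` are hypothetical names, and the file naming (`<name>-csr.json`) follows the convention used in this guide.

```shell
#!/bin/sh
# Directory holding ca.pem, ca-key.pem, and ca-config.json (assumed layout).
CA_DIR="${CA_DIR:-.}"

gencert_cmd() {
    # $1 = component name; expects <name>-csr.json in the current directory.
    printf 'cfssl gencert -ca=%s/ca.pem -ca-key=%s/ca-key.pem -config=%s/ca-config.json -profile=kubernetes %s-csr.json | cfssljson -bare %s\n' \
        "$CA_DIR" "$CA_DIR" "$CA_DIR" "$1" "$1"
}

# Print the commands for the Kubernetes component certificates.
for name in server kube-controller-manager kube-scheduler kube-proxy admin; do
    gencert_cmd "$name"
done
```

Piping a printed line to `sh` runs it; printing first makes it easy to check the paths before any key is generated.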

##### 4. Distribute the certificates

[root@kubernetes-master-01 etcd]# for ip in m1 m2 m3; do ssh root@${ip} "mkdir -pv /etc/etcd/ssl"; scp ../ca/ca*.pem root@${ip}:/etc/etcd/ssl; scp ./etcd*.pem root@${ip}:/etc/etcd/ssl ;done

##### 5. Install etcd

[root@kubernetes-master-01 etcd]# mkdir /opt/data

[root@kubernetes-master-01 etcd]# cd /opt/data

# Download the etcd release

[root@kubernetes-master-01 data]# wget https://mirrors.huaweicloud.com/etcd/v3.3.24/etcd-v3.3.24-linux-amd64.tar.gz

# Unpack it

[root@kubernetes-master-01 data]# tar xf etcd-v3.3.24-linux-amd64.tar.gz

# Copy the binaries to every Master node

[root@kubernetes-master-01 data]# for i in m1 m2 m3; do scp ./etcd-v3.3.24-linux-amd64/etcd* root@$i:/usr/local/bin/; done

# Check that etcd is installed

[root@kubernetes-master-01 data]# etcd --version

etcd Version: 3.3.24

Git SHA: bdd57848d

Go Version: go1.12.17

Go OS/Arch: linux/amd64

##### 6. Register the etcd service

Run the following on each of the three Master nodes. ETCD_NAME and INTERNAL_IP are taken from the local host.

mkdir -pv /etc/kubernetes/conf/etcd
ETCD_NAME=$(hostname)
INTERNAL_IP=$(hostname -i)
INITIAL_CLUSTER=kubernetes-master-01=https://192.168.13.51:2380,kubernetes-master-02=https://192.168.13.52:2380,kubernetes-master-03=https://192.168.13.53:2380

cat << EOF | sudo tee /usr/lib/systemd/system/etcd.service
[Unit]
Description=etcd
Documentation=https://github.com/coreos

[Service]
ExecStart=/usr/local/bin/etcd \\
--name ${ETCD_NAME} \\
--cert-file=/etc/etcd/ssl/etcd.pem \\
--key-file=/etc/etcd/ssl/etcd-key.pem \\
--peer-cert-file=/etc/etcd/ssl/etcd.pem \\
--peer-key-file=/etc/etcd/ssl/etcd-key.pem \\
--trusted-ca-file=/etc/etcd/ssl/ca.pem \\
--peer-trusted-ca-file=/etc/etcd/ssl/ca.pem \\
--peer-client-cert-auth \\
--client-cert-auth \\
--initial-advertise-peer-urls https://${INTERNAL_IP}:2380 \\
--listen-peer-urls https://${INTERNAL_IP}:2380 \\
--listen-client-urls https://${INTERNAL_IP}:2379,https://127.0.0.1:2379 \\
--advertise-client-urls https://${INTERNAL_IP}:2379 \\
--initial-cluster-token etcd-cluster \\
--initial-cluster ${INITIAL_CLUSTER} \\
--initial-cluster-state new \\
--data-dir=/var/lib/etcd
Restart=on-failure
RestartSec=5

[Install]
WantedBy=multi-user.target
EOF

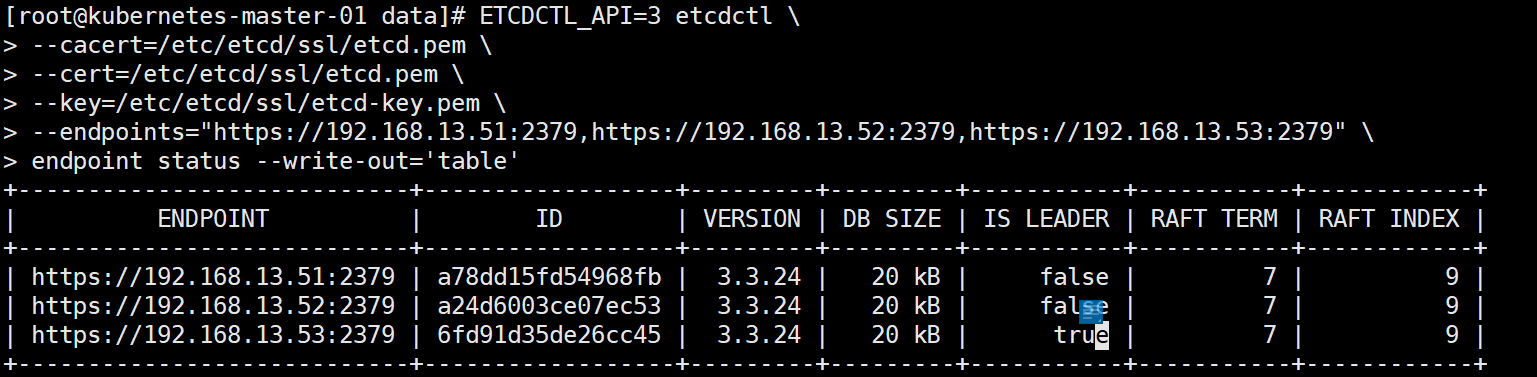
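The `--initial-cluster` value is a comma-separated list of `name=https://ip:2380` pairs and must be identical on all members. Typing it by hand invites mistakes, so the following sketch builds it from `name=ip` arguments. `build_initial_cluster` is a hypothetical helper, and the peer port 2380 is taken from the unit file above.

```shell
#!/bin/sh
# Build the etcd --initial-cluster string from "name=ip" arguments.
build_initial_cluster() {
    out=""
    for pair in "$@"; do
        name=${pair%%=*}   # text before the first "="
        ip=${pair#*=}      # text after the first "="
        # Prepend a comma only when something is already in the list.
        out="${out:+$out,}${name}=https://${ip}:2380"
    done
    printf '%s\n' "$out"
}

INITIAL_CLUSTER=$(build_initial_cluster \
    kubernetes-master-01=192.168.13.51 \
    kubernetes-master-02=192.168.13.52 \
    kubernetes-master-03=192.168.13.53)

echo "$INITIAL_CLUSTER"
```

Note that `hostname -i` depends on how the hostname resolves. If /etc/hosts maps it to a loopback address, set INTERNAL_IP explicitly instead.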

7、启动Etcd并测试

[root@kubernetes-master-01 data]# systemctl enable --now etcd

Created symlink from /etc/systemd/system/multi-user.target.wants/etcd.service to /usr/lib/systemd/system/etcd.service.

ETCDCTL_API=3 etcdctl \

--cacert=/etc/etcd/ssl/etcd.pem \

--cert=/etc/etcd/ssl/etcd.pem \

--key=/etc/etcd/ssl/etcd-key.pem \

--endpoints="https://192.168.13.51:2379,https://192.168.13.52:2379,https://192.168.13.53:2379" \

endpoint status --write-out='table'

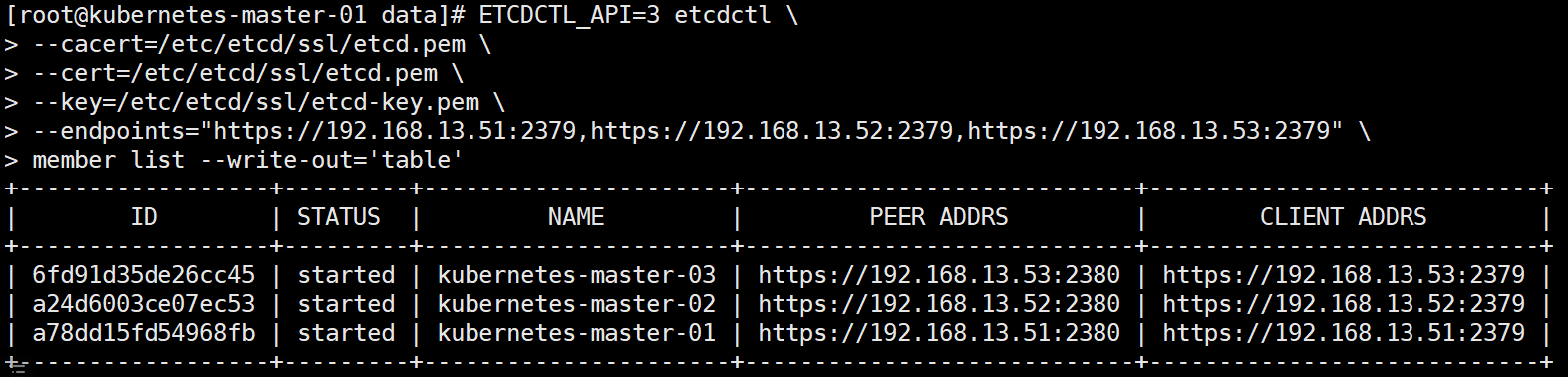

ETCDCTL_API=3 etcdctl \

--cacert=/etc/etcd/ssl/etcd.pem \

--cert=/etc/etcd/ssl/etcd.pem \

--key=/etc/etcd/ssl/etcd-key.pem \

--endpoints="https://192.168.13.51:2379,https://192.168.13.52:2379,https://192.168.13.53:2379" \

member list --write-out='table'

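### 6.2 Issue the Master certificates

The API server is the central control component of a Kubernetes cluster.

#### 6.2.1 Node plan

| Node | IP |
|---|---|
| kubernetes-master-01 | 192.168.13.51 |
| kubernetes-master-02 | 192.168.13.52 |
| kubernetes-master-03 | 192.168.13.53 |

#### 6.2.2 Create the CA configuration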

[root@kubernetes-master-01 data]# mkdir /opt/cert/k8s

[root@kubernetes-master-01 data]# cd /opt/cert/k8s

[root@kubernetes-master-01 k8s]# cat > ca-config.json << EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

#### 6.2.3 Create the root certificate signing request

cat > ca-csr.json << EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "ShangHai",

"ST": "ShangHai"

}

]

}

EOF

#### 6.2.4 Generate the CA certificate

[root@kubernetes-master-01 k8s]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

2022/03/25 11:59:00 [INFO] generating a new CA key and certificate from CSR

2022/03/25 11:59:00 [INFO] generate received request

2022/03/25 11:59:00 [INFO] received CSR

2022/03/25 11:59:00 [INFO] generating key: rsa-2048

2022/03/25 11:59:01 [INFO] encoded CSR

2022/03/25 11:59:01 [INFO] signed certificate with serial number 557033930062038497217823569026707631621389779840

#### 6.2.5 Issue the kube-apiserver certificate

cat > server-csr.json << EOF

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"192.168.13.51",

"192.168.13.52",

"192.168.13.53",

"192.168.13.59",

"10.96.0.1",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "ShangHai",

"ST": "ShangHai"

}

]

}

EOF

#### 6.2.6 Generate the API server certificate

[root@kubernetes-master-01 k8s]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

2022/03/25 12:00:58 [INFO] generate received request

2022/03/25 12:00:58 [INFO] received CSR

2022/03/25 12:00:58 [INFO] generating key: rsa-2048

2022/03/25 12:00:59 [INFO] encoded CSR

2022/03/25 12:00:59 [INFO] signed certificate with serial number 299244441748286486676641922424888451483508161336

2022/03/25 12:00:59 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

#### 6.2.7 Create the kube-controller-manager certificate signing request

cat > kube-controller-manager-csr.json << EOF

{

"CN": "system:kube-controller-manager",

"hosts": [

"127.0.0.1",

"192.168.13.51",

"192.168.13.52",

"192.168.13.53",

"192.168.13.59"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:kube-controller-manager",

"OU": "System"

}

]

}

EOF

#### 6.2.8 Generate the kube-controller-manager certificate

[root@kubernetes-master-01 k8s]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

2022/03/25 12:03:14 [INFO] generate received request

2022/03/25 12:03:14 [INFO] received CSR

2022/03/25 12:03:14 [INFO] generating key: rsa-2048

2022/03/25 12:03:15 [INFO] encoded CSR

2022/03/25 12:03:15 [INFO] signed certificate with serial number 701056850441385917317093134169378495228757679360

2022/03/25 12:03:15 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

#### 6.2.9 Create the kube-scheduler certificate signing request

cat > kube-scheduler-csr.json << EOF

{

"CN": "system:kube-scheduler",

"hosts": [

"127.0.0.1",

"192.168.13.51",

"192.168.13.52",

"192.168.13.53",

"192.168.13.59"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:kube-scheduler",

"OU": "System"

}

]

}

EOF

#### 6.2.10 Generate the kube-scheduler certificate

[root@kubernetes-master-01 k8s]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

2022/03/25 12:05:20 [INFO] generate received request

2022/03/25 12:05:20 [INFO] received CSR

2022/03/25 12:05:20 [INFO] generating key: rsa-2048

2022/03/25 12:05:21 [INFO] encoded CSR

2022/03/25 12:05:21 [INFO] signed certificate with serial number 671456219169171506748357027847459681207635107252

2022/03/25 12:05:21 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

#### 6.2.11 Create the kube-proxy certificate signing request

cat > kube-proxy-csr.json << EOF

{

"CN":"system:kube-proxy",

"hosts":[],

"key":{

"algo":"rsa",

"size":2048

},

"names":[

{

"C":"CN",

"L":"BeiJing",

"ST":"BeiJing",

"O":"system:kube-proxy",

"OU":"System"

}

]

}

EOF

#### 6.2.12 Generate the kube-proxy certificate

[root@kubernetes-master-01 k8s]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

2022/03/25 12:06:21 [INFO] generate received request

2022/03/25 12:06:21 [INFO] received CSR

2022/03/25 12:06:21 [INFO] generating key: rsa-2048

2022/03/25 12:06:22 [INFO] encoded CSR

2022/03/25 12:06:22 [INFO] signed certificate with serial number 552247360872836395161135503274741807724881078070

2022/03/25 12:06:22 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

#### 6.2.13 Issue the administrator user certificate

cat > admin-csr.json << EOF

{

"CN":"admin",

"key":{

"algo":"rsa",

"size":2048

},

"names":[

{

"C":"CN",

"L":"BeiJing",

"ST":"BeiJing",

"O":"system:masters",

"OU":"System"

}

]

}

EOF

#### 6.2.14 Generate the administrator certificate

[root@kubernetes-master-01 k8s]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

2022/03/25 12:07:53 [INFO] generate received request

2022/03/25 12:07:53 [INFO] received CSR

2022/03/25 12:07:53 [INFO] generating key: rsa-2048

2022/03/25 12:07:54 [INFO] encoded CSR

2022/03/25 12:07:54 [INFO] signed certificate with serial number 486942786622900385389032072667688322104491971804

2022/03/25 12:07:54 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

#### 6.2.15 Distribute the certificates

mkdir -pv /etc/kubernetes/ssl

cp -p ./{ca*pem,server*pem,kube-controller-manager*pem,kube-scheduler*.pem,kube-proxy*pem,admin*.pem} /etc/kubernetes/ssl

for i in m1 m2 m3; do

ssh root@$i "mkdir -pv /etc/kubernetes/ssl"

scp /etc/kubernetes/ssl/* root@$i:/etc/kubernetes/ssl

done

### 6.3 Deploy the Master nodes

Once all certificates are issued, deploy the Master nodes.

#### 6.3.1 Download the Master node package

[root@kubernetes-master-01 data]# wget https://dl.k8s.io/v1.18.8/kubernetes-server-linux-amd64.tar.gz

#### 6.3.2 Unpack and install

[root@kubernetes-master-01 data]# tar -xf kubernetes-server-linux-amd64.tar.gz

[root@kubernetes-master-01 data]# cd /opt/data/kubernetes/server/bin

[root@kubernetes-master-01 bin]# for i in m1 m2 m3; do scp kube-apiserver kube-controller-manager kube-scheduler kubectl kubelet kube-proxy root@$i:/usr/local/bin/; done

#### 6.3.3 Create the cluster configuration files

Each component needs a kubeconfig file that tells it which cluster to talk to and which certificate to present.

##### 1. Create the kube-controller-manager.kubeconfig file

[root@kubernetes-master-01 bin]# cd /opt/cert/k8s/

export KUBE_APISERVER="https://192.168.13.59:8443"

# Set the cluster parameters
kubectl config set-cluster kubernetes \
--certificate-authority=/etc/kubernetes/ssl/ca.pem \
--embed-certs=true \
--server=${KUBE_APISERVER} \
--kubeconfig=kube-controller-manager.kubeconfig

# Set the client credentials
kubectl config set-credentials "kube-controller-manager" \
--client-certificate=/etc/kubernetes/ssl/kube-controller-manager.pem \
--client-key=/etc/kubernetes/ssl/kube-controller-manager-key.pem \
--embed-certs=true \
--kubeconfig=kube-controller-manager.kubeconfig

# Set the context (the context ties the cluster and the user together)
kubectl config set-context default \
--cluster=kubernetes \
--user="kube-controller-manager" \
--kubeconfig=kube-controller-manager.kubeconfig

# Select the default context
kubectl config use-context default --kubeconfig=kube-controller-manager.kubeconfig

##### 2. Create the kube-scheduler.kubeconfig file

export KUBE_APISERVER="https://192.168.13.59:8443"

# Set the cluster parameters
kubectl config set-cluster kubernetes \
--certificate-authority=/etc/kubernetes/ssl/ca.pem \
--embed-certs=true \
--server=${KUBE_APISERVER} \
--kubeconfig=kube-scheduler.kubeconfig

# Set the client credentials
kubectl config set-credentials "kube-scheduler" \
--client-certificate=/etc/kubernetes/ssl/kube-scheduler.pem \
--client-key=/etc/kubernetes/ssl/kube-scheduler-key.pem \
--embed-certs=true \
--kubeconfig=kube-scheduler.kubeconfig

# Set the context (the context ties the cluster and the user together)
kubectl config set-context default \
--cluster=kubernetes \
--user="kube-scheduler" \
--kubeconfig=kube-scheduler.kubeconfig

# Select the default context
kubectl config use-context default --kubeconfig=kube-scheduler.kubeconfig

##### 3. Create the kube-proxy.kubeconfig file

export KUBE_APISERVER="https://192.168.13.59:8443"

# Set the cluster parameters
kubectl config set-cluster kubernetes \
--certificate-authority=/etc/kubernetes/ssl/ca.pem \
--embed-certs=true \
--server=${KUBE_APISERVER} \
--kubeconfig=kube-proxy.kubeconfig

# Set the client credentials
kubectl config set-credentials "kube-proxy" \
--client-certificate=/etc/kubernetes/ssl/kube-proxy.pem \
--client-key=/etc/kubernetes/ssl/kube-proxy-key.pem \
--embed-certs=true \
--kubeconfig=kube-proxy.kubeconfig

# Set the context (the context ties the cluster and the user together)
kubectl config set-context default \
--cluster=kubernetes \
--user="kube-proxy" \
--kubeconfig=kube-proxy.kubeconfig

# Select the default context
kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

##### 4. Create the admin.kubeconfig file

export KUBE_APISERVER="https://192.168.13.59:8443"

# Set the cluster parameters
kubectl config set-cluster kubernetes \
--certificate-authority=/etc/kubernetes/ssl/ca.pem \
--embed-certs=true \
--server=${KUBE_APISERVER} \
--kubeconfig=admin.kubeconfig

# Set the client credentials
kubectl config set-credentials "admin" \
--client-certificate=/etc/kubernetes/ssl/admin.pem \
--client-key=/etc/kubernetes/ssl/admin-key.pem \
--embed-certs=true \
--kubeconfig=admin.kubeconfig

# Set the context (the context ties the cluster and the user together)
kubectl config set-context default \
--cluster=kubernetes \
--user="admin" \
--kubeconfig=admin.kubeconfig

# Select the default context
kubectl config use-context default --kubeconfig=admin.kubeconfig

##### 5. Generate the bootstrap token

# Use a token generated on your own machine, not one copied from elsewhere

TLS_BOOTSTRAPPING_TOKEN=`head -c 16 /dev/urandom | od -An -t x | tr -d ' '`

cat > token.csv << EOF
${TLS_BOOTSTRAPPING_TOKEN},kubelet-bootstrap,10001,"system:kubelet-bootstrap"
EOF
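The token in token.csv and the token later written into kubelet-bootstrap.kubeconfig must be the same string. One way to avoid a copy-paste mismatch is to read the token back from the file. The sketch below assumes the CSV layout shown above (token in the first field); `gen_token`, `read_token`, and `TOKEN_FILE` are hypothetical names, and the file defaults to a temporary path so the sketch does not overwrite a real token.csv.

```shell
#!/bin/sh
# Generate 16 random bytes and render them as 32 lowercase hex characters.
gen_token() {
    head -c 16 /dev/urandom | od -An -tx1 | tr -d ' \n'
}

# Read the token (first comma-separated field) back from a token file.
read_token() {
    cut -d, -f1 "$1"
}

TOKEN_FILE="${TOKEN_FILE:-$(mktemp)}"
TLS_BOOTSTRAPPING_TOKEN=$(gen_token)

printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' \
    "$TLS_BOOTSTRAPPING_TOKEN" > "$TOKEN_FILE"

echo "token file written to $TOKEN_FILE"
# Later: kubectl config set-credentials ... --token="$(read_token "$TOKEN_FILE")"
```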

##### 6. Create the TLS bootstrapping kubeconfig file

export KUBE_APISERVER="https://192.168.13.59:8443"

# Set the cluster parameters
kubectl config set-cluster kubernetes \
--certificate-authority=/etc/kubernetes/ssl/ca.pem \
--embed-certs=true \
--server=${KUBE_APISERVER} \
--kubeconfig=kubelet-bootstrap.kubeconfig

# Set the client credentials; the token must be the one stored in token.csv above,
# so it is read from that file instead of being typed in
kubectl config set-credentials "kubelet-bootstrap" \
--token=$(cut -d, -f1 token.csv) \
--kubeconfig=kubelet-bootstrap.kubeconfig

# Set the context (the context ties the cluster and the user together)
kubectl config set-context default \
--cluster=kubernetes \
--user="kubelet-bootstrap" \
--kubeconfig=kubelet-bootstrap.kubeconfig

# Select the default context
kubectl config use-context default --kubeconfig=kubelet-bootstrap.kubeconfig

##### 7. Distribute the cluster configuration files

for i in m1 m2 m3;

do

ssh root@$i "mkdir -p /etc/kubernetes/cfg";

scp token.csv kube-scheduler.kubeconfig kube-controller-manager.kubeconfig admin.kubeconfig kube-proxy.kubeconfig kubelet-bootstrap.kubeconfig root@$i:/etc/kubernetes/cfg;

done

#### 6.3.4 Deploy the API server

Create the kube-apiserver configuration file. Run this on all three Master nodes rather than copying the file, because the API server IP differs on each node.

##### 1. Create the API server configuration file

KUBE_APISERVER_IP=`hostname -i`

cat > /etc/kubernetes/cfg/kube-apiserver.conf << EOF
KUBE_APISERVER_OPTS="--logtostderr=false \\
--v=2 \\
--log-dir=/var/log/kubernetes \\
--advertise-address=${KUBE_APISERVER_IP} \\
--default-not-ready-toleration-seconds=360 \\
--default-unreachable-toleration-seconds=360 \\
--max-mutating-requests-inflight=2000 \\
--max-requests-inflight=4000 \\
--default-watch-cache-size=200 \\
--delete-collection-workers=2 \\
--bind-address=0.0.0.0 \\
--secure-port=6443 \\
--allow-privileged=true \\
--service-cluster-ip-range=10.96.0.0/16 \\
--service-node-port-range=30000-52767 \\
--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \\
--authorization-mode=RBAC,Node \\
--enable-bootstrap-token-auth=true \\
--token-auth-file=/etc/kubernetes/cfg/token.csv \\
--kubelet-client-certificate=/etc/kubernetes/ssl/server.pem \\
--kubelet-client-key=/etc/kubernetes/ssl/server-key.pem \\
--tls-cert-file=/etc/kubernetes/ssl/server.pem \\
--tls-private-key-file=/etc/kubernetes/ssl/server-key.pem \\
--client-ca-file=/etc/kubernetes/ssl/ca.pem \\
--service-account-key-file=/etc/kubernetes/ssl/ca-key.pem \\
--audit-log-maxage=30 \\
--audit-log-maxbackup=3 \\
--audit-log-maxsize=100 \\
--audit-log-path=/var/log/kubernetes/k8s-audit.log \\
--etcd-servers=https://192.168.13.51:2379,https://192.168.13.52:2379,https://192.168.13.53:2379 \\
--etcd-cafile=/etc/etcd/ssl/ca.pem \\
--etcd-certfile=/etc/etcd/ssl/etcd.pem \\
--etcd-keyfile=/etc/etcd/ssl/etcd-key.pem"
EOF

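| Option | Description |
|---|---|
| --logtostderr=false | Write logs to files instead of standard error |
| --v=2 | Log verbosity level |
| --advertise-address | IP address on which the API server is advertised to members of the cluster |
| --etcd-servers | List of etcd servers to connect to |
| --etcd-cafile | SSL CA file used for etcd communication |
| --etcd-certfile | SSL certificate file used for etcd communication |
| --etcd-keyfile | SSL key file used for etcd communication |
| --service-cluster-ip-range | CIDR range from which Service IPs are allocated |
| --bind-address | IP address on which to listen for --secure-port; if blank, all interfaces are used (0.0.0.0) |
| --secure-port=6443 | Port that serves HTTPS with authentication and authorization; the default is 6443 |
| --allow-privileged | Whether privileged containers are allowed |
| --service-node-port-range | Port range available to NodePort Services |
| --default-not-ready-toleration-seconds | Toleration, in seconds, for the notReady condition |
| --default-unreachable-toleration-seconds | Toleration, in seconds, for the unreachable condition |
| --max-mutating-requests-inflight=2000 | Maximum number of mutating requests in flight at a given time; 0 means no limit (default 200) |
| --default-watch-cache-size=200 | Default watch cache size; 0 disables the watch cache for resources that have no default watch size set |
| --delete-collection-workers=2 | Number of workers for DeleteCollection calls, used to speed up namespace cleanup (default 1) |
| --enable-admission-plugins | Admission plugins to enable |
| --authorization-mode | Ordered, comma-separated list of plugins that authorize requests on the secure port |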
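Two addresses elsewhere in this guide are derived from `--service-cluster-ip-range`. Kubernetes assigns the first address of the range to the built-in `kubernetes` Service, which is why 10.96.0.1 appears in the `hosts` list of server-csr.json, and this guide uses the second address, 10.96.0.2, as `clusterDNS` in the kubelet configuration. The sketch below computes the n-th address of a range. `cidr_nth_ip` is a hypothetical helper that assumes an aligned IPv4 network address and does not check that the offset stays inside the prefix.

```shell
#!/bin/sh
# Print the address at a given offset from the network address of an IPv4 CIDR.
cidr_nth_ip() {
    # $1 = CIDR such as 10.96.0.0/16, $2 = offset from the network address
    base=${1%/*}
    IFS=. read -r a b c d <<EOF
$base
EOF
    # Pack the four octets into one integer, add the offset, and unpack again.
    n=$(( ($a << 24) + ($b << 16) + ($c << 8) + $d + $2 ))
    printf '%d.%d.%d.%d\n' \
        $(( (n >> 24) & 255 )) $(( (n >> 16) & 255 )) \
        $(( (n >> 8) & 255 )) $(( n & 255 ))
}

echo "kubernetes Service IP: $(cidr_nth_ip 10.96.0.0/16 1)"
echo "cluster DNS IP:        $(cidr_nth_ip 10.96.0.0/16 2)"
```

If the Service range is changed, the certificate `hosts` entry and the `clusterDNS` value need to change with it.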

##### 2. Register the API server service

cat > /usr/lib/systemd/system/kube-apiserver.service << EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

EnvironmentFile=/etc/kubernetes/cfg/kube-apiserver.conf

ExecStart=/usr/local/bin/kube-apiserver \$KUBE_APISERVER_OPTS

Restart=on-failure

RestartSec=10

Type=notify

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

##### 3. Start the API server

[root@kubernetes-master-01 k8s]# mkdir -p /var/log/kubernetes/

[root@kubernetes-master-01 k8s]# systemctl daemon-reload

[root@kubernetes-master-01 k8s]# systemctl enable --now kube-apiserver

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-apiserver.service to /usr/lib/systemd/system/kube-apiserver.service.

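#### 6.3.5 Deploy the high-availability software

keepalived and haproxy are commonly used together for high availability.

##### 1. Install the packages

yum install -y keepalived haproxy

##### 2. Configure haproxy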

cat > /etc/haproxy/haproxy.cfg <<EOF

global

maxconn 2000

ulimit-n 16384

log 127.0.0.1 local0 err

stats timeout 30s

defaults

log global

mode http

option httplog

timeout connect 5000

timeout client 50000

timeout server 50000

timeout http-request 15s

timeout http-keep-alive 15s

frontend monitor-in

bind *:33305

mode http

option httplog

monitor-uri /monitor

listen stats

bind *:8006

mode http

stats enable

stats hide-version

stats uri /stats

stats refresh 30s

stats realm Haproxy\ Statistics

stats auth admin:admin

frontend k8s-master

bind 0.0.0.0:8443

bind 127.0.0.1:8443

mode tcp

option tcplog

tcp-request inspect-delay 5s

default_backend k8s-master

backend k8s-master

mode tcp

option tcplog

option tcp-check

balance roundrobin

default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100

server kubernetes-master-01 192.168.13.51:6443 check inter 2000 fall 2 rise 2 weight 100

server kubernetes-master-02 192.168.13.52:6443 check inter 2000 fall 2 rise 2 weight 100

server kubernetes-master-03 192.168.13.53:6443 check inter 2000 fall 2 rise 2 weight 100

EOF

##### 3. Configure keepalived

Note: the IP (mcast_src_ip) and the priority differ on every node, so adjust them before applying the file.

mv /etc/keepalived/keepalived.conf /etc/keepalived/keepalived.conf_bak

cd /etc/keepalived

cat > /etc/keepalived/keepalived.conf <<EOF

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

}

vrrp_instance VI_1 {

state MASTER

interface eth0

mcast_src_ip 192.168.13.51

virtual_router_id 51

priority 100

advert_int 2

authentication {

auth_type PASS

auth_pass K8SHA_KA_AUTH

}

virtual_ipaddress {

192.168.13.59

}

}

EOF
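The per-node differences in keepalived.conf can be summarized as three lines. The sketch below prints them for each Master. The function name and the concrete values for the second and third node (state BACKUP, priorities 90 and 80) are assumptions made for illustration; the source only states that IP and priority differ per node.

```shell
#!/bin/sh
# Print the keepalived settings that differ per node.
keepalived_params() {
    # $1 = node index (1 is the preferred MASTER), $2 = node IP
    if [ "$1" -eq 1 ]; then
        state=MASTER
    else
        state=BACKUP   # assumption: the other nodes start as BACKUP
    fi
    # Assumption: priority drops by 10 per node (100, 90, 80).
    priority=$(( 100 - ($1 - 1) * 10 ))
    printf 'state %s\nmcast_src_ip %s\npriority %s\n' "$state" "$2" "$priority"
}

keepalived_params 1 192.168.13.51
keepalived_params 2 192.168.13.52
keepalived_params 3 192.168.13.53
```

The node with the highest priority holds the virtual IP 192.168.13.59 while it is healthy.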

##### 4. Start the high-availability services

systemctl enable --now keepalived haproxy

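#### 6.3.5 Deploy TLS bootstrapping

TLS bootstrapping is a mechanism that simplifies setting up mutually authenticated TLS between kubelet and the API server. Once TLS authentication is enabled, the kubelet on every node needs a valid certificate signed by the CA that the API server trusts before it can talk to the API server. Signing a certificate by hand for each of many nodes is tedious and error-prone, and mistakes can destabilize the cluster.

With TLS bootstrapping, the kubelet on a node first connects to the API server as a predefined low-privilege user and requests a certificate, which the control plane then signs and issues to the node. Certificate signing is thereby automated.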

[root@kubernetes-master-01 keepalived]# kubectl create clusterrolebinding kubelet-bootstrap \

> --clusterrole=system:node-bootstrapper \

> --user=kubelet-bootstrap

clusterrolebinding.rbac.authorization.k8s.io/kubelet-bootstrap created

#### 6.3.6 Deploy the kube-controller-manager service

The controller manager is the cluster's internal management and control center. It manages Nodes, Pod replicas, service Endpoints, Namespaces, ServiceAccounts, and ResourceQuotas. When a Node goes down unexpectedly, the controller manager detects it promptly and runs automated recovery so that the cluster stays in its desired state. If several controller managers were active at once they would conflict, so kube-controller-manager can only be made highly available in active-standby mode. Kubernetes implements leader election with a lease lock, which requires --leader-elect=true in the startup flags.

##### 1. Create the kube-controller-manager configuration file

cat > /etc/kubernetes/cfg/kube-controller-manager.conf << EOF
KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=false \\
--v=2 \\
--log-dir=/var/log/kubernetes \\
--leader-elect=true \\
--cluster-name=kubernetes \\
--bind-address=127.0.0.1 \\
--allocate-node-cidrs=true \\
--cluster-cidr=10.244.0.0/12 \\
--service-cluster-ip-range=10.96.0.0/16 \\
--cluster-signing-cert-file=/etc/kubernetes/ssl/ca.pem \\
--cluster-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \\
--root-ca-file=/etc/kubernetes/ssl/ca.pem \\
--service-account-private-key-file=/etc/kubernetes/ssl/ca-key.pem \\
--kubeconfig=/etc/kubernetes/cfg/kube-controller-manager.kubeconfig \\
--tls-cert-file=/etc/kubernetes/ssl/kube-controller-manager.pem \\
--tls-private-key-file=/etc/kubernetes/ssl/kube-controller-manager-key.pem \\
--experimental-cluster-signing-duration=87600h0m0s \\
--controllers=*,bootstrapsigner,tokencleaner \\
--use-service-account-credentials=true \\
--node-monitor-grace-period=10s \\
--horizontal-pod-autoscaler-use-rest-clients=true"
EOF

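| Option | Description |
|---|---|
| --leader-elect | Enable leader election when running in high availability |
| --master | Connect to the API server through the local insecure port 8080 |
| --bind-address | Address to listen on |
| --allocate-node-cidrs | Whether Pod CIDRs should be allocated and set on Nodes |
| --cluster-cidr | CIDR range for Pods in the cluster; per-node CIDRs are allocated from it so that nodes do not conflict |
| --service-cluster-ip-range | CIDR range for Services in the cluster |
| --cluster-signing-cert-file | Certificate file (the root certificate) used to issue cluster-scoped certificates |
| --cluster-signing-key-file | Private key used to sign those certificates |
| --root-ca-file | If set, this root CA is included in the token secret of each service account; must be a valid PEM-encoded CA bundle |
| --service-account-private-key-file | File containing the PEM-encoded RSA or ECDSA private key used to sign service account tokens |
| --experimental-cluster-signing-duration | Validity period of the signed certificates |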

##### 2. Register the kube-controller-manager service

cat > /usr/lib/systemd/system/kube-controller-manager.service << EOF

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

EnvironmentFile=/etc/kubernetes/cfg/kube-controller-manager.conf

ExecStart=/usr/local/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

##### 3. Start the service

systemctl daemon-reload

systemctl enable --now kube-controller-manager

systemctl status kube-controller-manager

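#### 6.3.7 Deploy the kube-scheduler service

kube-scheduler is the default scheduler of a Kubernetes cluster and part of the control plane. For every newly created or otherwise unscheduled Pod, kube-scheduler filters all Nodes and then picks the best one to run the Pod. The scheduler is policy-rich, topology-aware, and workload-specific, and it significantly affects availability, performance, and capacity. It has to take into account individual and collective resource requirements, quality-of-service requirements, hardware/software/policy constraints, affinity and anti-affinity specifications, data locality, inter-workload interference, deadlines, and so on. Workload-specific requirements are exposed through the API where necessary.

##### 1. Create the kube-scheduler configuration file

cat > /etc/kubernetes/cfg/kube-scheduler.conf << EOF
KUBE_SCHEDULER_OPTS="--logtostderr=false \\
--v=2 \\
--log-dir=/var/log/kubernetes \\
--kubeconfig=/etc/kubernetes/cfg/kube-scheduler.kubeconfig \\
--leader-elect=true \\
--master=http://127.0.0.1:8080 \\
--bind-address=127.0.0.1 "
EOF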

##### 2. Register the service

cat > /usr/lib/systemd/system/kube-scheduler.service << EOF

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

EnvironmentFile=/etc/kubernetes/cfg/kube-scheduler.conf

ExecStart=/usr/local/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

##### 3. Start the service

systemctl daemon-reload

systemctl enable --now kube-scheduler

systemctl status kube-scheduler

#### 6.3.8 Check the cluster status

[root@kubernetes-master-01 keepalived]# kubectl get cs

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-2 Healthy {"health":"true"}

etcd-0 Healthy {"health":"true"}

etcd-1 Healthy {"health":"true"}

#### 6.3.9 Deploy the kubelet service

kubelet manages the containers that run on each node.

##### 1. Create the kubelet configuration

KUBE_HOSTNAME=`hostname`

cat > /etc/kubernetes/cfg/kubelet.conf << EOF
KUBELET_OPTS="--logtostderr=false \\
--v=2 \\
--log-dir=/var/log/kubernetes \\
--hostname-override=${KUBE_HOSTNAME} \\
--container-runtime=docker \\
--kubeconfig=/etc/kubernetes/cfg/kubelet.kubeconfig \\
--bootstrap-kubeconfig=/etc/kubernetes/cfg/kubelet-bootstrap.kubeconfig \\
--config=/etc/kubernetes/cfg/kubelet-config.yml \\
--cert-dir=/etc/kubernetes/ssl \\
--image-pull-progress-deadline=15m \\
--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/k8sos/pause:3.2"
EOF

2、创建kubelet-config配置文件

KUBE_HOSTNAME_IP=`hostname -i`

cat > /etc/kubernetes/cfg/kubelet-config.yml << EOF

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: ${KUBE_HOSTNAME_IP}

port: 10250

readOnlyPort: 10255

cgroupDriver: cgroupfs

clusterDNS:

- 10.96.0.2

clusterDomain: cluster.local

failSwapOn: false

authentication:

  anonymous:

    enabled: false

  webhook:

    cacheTTL: 2m0s

    enabled: true

  x509:

    clientCAFile: /etc/kubernetes/ssl/ca.pem

authorization:

  mode: Webhook

  webhook:

    cacheAuthorizedTTL: 5m0s

    cacheUnauthorizedTTL: 30s

evictionHard:

  imagefs.available: 15%

  memory.available: 100Mi

  nodefs.available: 10%

  nodefs.inodesFree: 5%

maxOpenFiles: 1000000

maxPods: 110

EOF
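The `address` field above is filled from `hostname -i`, which may print several addresses, or a loopback address if /etc/hosts maps the hostname to 127.0.0.1. As a hedged sketch, a small filter can pick the first usable IPv4 address; `first_non_loopback_ipv4` is a hypothetical helper, not something the original procedure uses.

```shell
# Prints the first argument that looks like an IPv4 address and is not
# a loopback address; returns 1 if none is found.
first_non_loopback_ipv4() {
  for addr in "$@"; do
    case "${addr}" in
      127.*) continue ;;                              # loopback
      *:*) continue ;;                                # IPv6
      *.*.*.*) printf '%s\n' "${addr}"; return 0 ;;   # IPv4-looking
    esac
  done
  return 1
}

# Usage (word splitting of the command substitution is intentional):
#   KUBE_HOSTNAME_IP=$(first_non_loopback_ipv4 $(hostname -i))
```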

3. Create the kubelet systemd unit

cat > /usr/lib/systemd/system/kubelet.service << EOF

[Unit]

Description=Kubernetes Kubelet

After=docker.service

[Service]

EnvironmentFile=/etc/kubernetes/cfg/kubelet.conf

ExecStart=/usr/local/bin/kubelet \$KUBELET_OPTS

Restart=on-failure

RestartSec=10

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

4. Enable and start the service

systemctl daemon-reload

systemctl enable --now kubelet

systemctl status kubelet.service

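6.3.10. Deploy kube-proxy

kube-proxy is a core Kubernetes component that runs on every Node and implements the communication and load-balancing mechanism behind Kubernetes Services. It watches the apiserver for all Service information and, based on it, sets up the proxy rules that route and forward requests from a Service to its Pods, which gives the cluster its virtual forwarding network.

1. Create the kube-proxy configuration file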

cat > /etc/kubernetes/cfg/kube-proxy.conf << EOF

KUBE_PROXY_OPTS="--logtostderr=false \\

--v=2 \\

--log-dir=/var/log/kubernetes \\

--config=/etc/kubernetes/cfg/kube-proxy-config.yml"

EOF

2. Create the kube-proxy-config configuration file

KUBE_HOSTNAME=`hostname`

KUBE_HOSTNAME_IP=`hostname -i`

cat > /etc/kubernetes/cfg/kube-proxy-config.yml << EOF

kind: KubeProxyConfiguration

apiVersion: kubeproxy.config.k8s.io/v1alpha1

bindAddress: ${KUBE_HOSTNAME_IP}

healthzBindAddress: ${KUBE_HOSTNAME_IP}:10256

metricsBindAddress: ${KUBE_HOSTNAME_IP}:10249

clientConnection:

  burst: 200

  kubeconfig: /etc/kubernetes/cfg/kube-proxy.kubeconfig

  qps: 100

hostnameOverride: ${KUBE_HOSTNAME}

clusterCIDR: 10.96.0.0/16

enableProfiling: true

mode: "ipvs"

iptables:

  masqueradeAll: false

ipvs:

  scheduler: rr

  excludeCIDRs: []

EOF
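With `mode: "ipvs"`, kube-proxy depends on IPVS kernel modules being loaded; if they are missing it generally falls back to iptables mode. The sketch below lists which required modules are absent from an `lsmod`-style listing. The module names in the usage line are an assumption and depend on the kernel version (older kernels use `nf_conntrack_ipv4` instead of `nf_conntrack`); `missing_modules` is a hypothetical helper.

```shell
# Prints each required module (given as arguments) that does not appear
# in the lsmod-style listing read from stdin.
missing_modules() {
  # First column of every line after the header is the module name.
  loaded="$(awk 'NR > 1 { print $1 }')"
  for mod in "$@"; do
    printf '%s\n' "${loaded}" | grep -qxF "${mod}" || printf '%s\n' "${mod}"
  done
}

# Usage (module list is an assumption, adjust for your kernel):
#   lsmod | missing_modules ip_vs ip_vs_rr nf_conntrack_ipv4
```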

3. Create the kube-proxy systemd unit

cat > /usr/lib/systemd/system/kube-proxy.service << EOF

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=/etc/kubernetes/cfg/kube-proxy.conf

ExecStart=/usr/local/bin/kube-proxy \$KUBE_PROXY_OPTS

Restart=on-failure

RestartSec=10

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

4. Start the service

systemctl daemon-reload

systemctl enable --now kube-proxy

systemctl status kube-proxy

6.3.11. View the kubelet requests to join the cluster

[root@kubernetes-master-01 keepalived]# kubectl get csr

NAME AGE SIGNERNAME REQUESTOR CONDITION

node-csr-2UpZcGNblIH3oq0eHsWl4nAZywPTYMRLjSgSuefL-aw 8m12s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending

node-csr-TxTPkrin4PCmbbLrp_-4KNbtKKyO8zpiD_hK9mOOG1g 8m16s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending

node-csr-aIyiuvCj6OT1x84SIVXf_42uAmp9G0dQJGOUGovxztI 8m13s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending

6.3.12. Approve the join requests

kubectl certificate approve `kubectl get csr | grep "Pending" | awk '{print $1}'`
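The command above approves every request whose line contains "Pending". A slightly stricter variant, sketched below, selects only Pending requests whose REQUESTOR is `kubelet-bootstrap`. It assumes the five-column layout shown in the `kubectl get csr` output above; `pending_bootstrap_csrs` is a hypothetical helper name.

```shell
# Reads "kubectl get csr" output on stdin and prints the names of
# requests that are Pending and were submitted by kubelet-bootstrap.
pending_bootstrap_csrs() {
  awk 'NR > 1 && $4 == "kubelet-bootstrap" && $NF == "Pending" { print $1 }'
}

# Usage on a live cluster (requires kubectl and GNU xargs):
#   kubectl get csr | pending_bootstrap_csrs | xargs -r kubectl certificate approve
```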

6.3.14. List the cluster nodes

[root@kubernetes-master-01 keepalived]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

kubernetes-master-01 NotReady <none> 6s v1.18.8

kubernetes-master-02 NotReady <none> 6s v1.18.8

kubernetes-master-03 NotReady <none> 6s v1.18.8

6.3.15. Create the super-admin user

[root@kubernetes-master-01 keepalived]# kubectl create clusterrolebinding cluster-system-anonymous --clusterrole=cluster-admin --user=kubernetes

clusterrolebinding.rbac.authorization.k8s.io/cluster-system-anonymous created

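6.4. Deploy the network plugin

Kubernetes defines a network model but leaves its implementation to network plugins. The main job of a CNI network plugin is to let Pods communicate across hosts.

- Flannel: strong performance

- Calico: more complete feature set

6.4.1. Download the Flannel network plugin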

[root@kubernetes-master-01 ~]# wget https://github.com/coreos/flannel/releases/download/v0.13.1-rc1/flannel-v0.13.1-rc1-linux-amd64.tar.gz

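6.4.2. Write the Flannel network configuration into Etcd

Etcd must be version 3.3.24. Flannel's etcd backend reads this key through the etcd v2 API, and the etcdctl flags used here (--ca-file, --cert-file, --key-file, mk) are v2-style; etcd 3.4 and later disable the v2 API by default.

# Write to the cluster database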

etcdctl \

--ca-file=/etc/etcd/ssl/ca.pem \

--cert-file=/etc/etcd/ssl/etcd.pem \

--key-file=/etc/etcd/ssl/etcd-key.pem \

--endpoints="https://192.168.13.51:2379,https://192.168.13.52:2379,https://192.168.13.53:2379" \

mk /coreos.com/network/config '{"Network":"10.244.0.0/12", "SubnetLen": 21, "Backend": {"Type": "vxlan", "DirectRouting": true}}'

# Read it back from the cluster database

etcdctl \

--ca-file=/etc/etcd/ssl/ca.pem \

--cert-file=/etc/etcd/ssl/etcd.pem \

--key-file=/etc/etcd/ssl/etcd-key.pem \

--endpoints="https://192.168.13.51:2379,https://192.168.13.52:2379,https://192.168.13.53:2379" \

get /coreos.com/network/config
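The `Network` prefix length and `SubnetLen` written above determine how many per-host subnets Flannel can hand out and roughly how many addresses each host gets. The arithmetic can be sketched as follows; the function names are illustrative, and the results are upper bounds because Flannel and Docker reserve some addresses. Note also that 10.244.0.0 with a /12 mask lies inside the network 10.240.0.0/12.

```shell
# Number of per-host subnets: 2^(SubnetLen - network prefix length).
# $1 = network prefix length, $2 = SubnetLen
flannel_subnet_count() {
  echo $(( 1 << ($2 - $1) ))
}

# Addresses per host subnet, minus network and broadcast: 2^(32 - SubnetLen) - 2.
# $1 = SubnetLen
flannel_hosts_per_subnet() {
  echo $(( (1 << (32 - $1)) - 2 ))
}

flannel_subnet_count 12 21     # prints 512
flannel_hosts_per_subnet 21    # prints 2046
```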

6.4.3. Register the Flannel service

cat > /usr/lib/systemd/system/flanneld.service << EOF

[Unit]

Description=Flanneld address

After=network.target

After=network-online.target

Wants=network-online.target

After=etcd.service

Before=docker.service

[Service]

Type=notify

ExecStart=/usr/local/bin/flanneld \\

-etcd-cafile=/etc/etcd/ssl/ca.pem \\

-etcd-certfile=/etc/etcd/ssl/etcd.pem \\

-etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \\

-etcd-endpoints=https://192.168.13.51:2379,https://192.168.13.52:2379,https://192.168.13.53:2379 \\

-etcd-prefix=/coreos.com/network \\

-ip-masq

ExecStartPost=/usr/local/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=always

RestartSec=5

StartLimitInterval=0

[Install]

WantedBy=multi-user.target

RequiredBy=docker.service

EOF

6.4.4. Distribute the Flanneld configuration

# Distribute the Flannel systemd unit

[root@kubernetes-master-01 data]# for i in m1 m2 m3 n1 n2 n3 n4 n5; do scp /usr/lib/systemd/system/flanneld.service root@$i:/usr/lib/systemd/system/flanneld.service; done

# Distribute the binaries

[root@kubernetes-master-01 data]# tar -xf flannel-v0.13.1-rc1-linux-amd64.tar.gz

[root@kubernetes-master-01 data]# for i in m1 m2 m3 n1 n2 n3 n4 n5 ; do scp flanneld mk-docker-opts.sh root@$i:/usr/local/bin/; done

# Distribute the Etcd certificates

[root@kubernetes-master-01 ssl]# for i in n1 n2 n3 n4 n5; do ssh root@$i "mkdir -pv /etc/etcd/ssl/"; scp ca.pem etcd-key.pem etcd.pem root@$i:/etc/etcd/ssl/; done

6.4.5. Change Docker's default network

sed -i '/ExecStart/s/\(.*\)/#\1/' /usr/lib/systemd/system/docker.service

sed -i '/ExecReload/a ExecStart=/usr/bin/dockerd $DOCKER_NETWORK_OPTIONS -H fd:// --containerd=/run/containerd/containerd.sock' /usr/lib/systemd/system/docker.service

sed -i '/ExecReload/a EnvironmentFile=-/run/flannel/subnet.env' /usr/lib/systemd/system/docker.service

[root@kubernetes-master-01 ~]# for i in m2 m3 n1 n2 n3 n4 n5; do scp /usr/lib/systemd/system/docker.service root@$i:/usr/lib/systemd/system/docker.service; done
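The modified docker.service reads `DOCKER_NETWORK_OPTIONS` from /run/flannel/subnet.env, which mk-docker-opts.sh generates from the values flanneld writes there. The sketch below is a simplified approximation of that translation, not the real script; `docker_opts_from_flannel_env` is a hypothetical name, and the exact option string produced by mk-docker-opts.sh may differ.

```shell
# Approximates the docker options derived from a flannel env file ($1)
# that defines FLANNEL_SUBNET, FLANNEL_MTU and FLANNEL_IPMASQ.
docker_opts_from_flannel_env() {
  # Source the file in a subshell so FLANNEL_* do not leak into the caller.
  (
    . "$1"
    masq="true"
    # If flannel already masquerades, docker should not do it again.
    [ "${FLANNEL_IPMASQ}" = "true" ] && masq="false"
    printf 'DOCKER_NETWORK_OPTIONS=" --bip=%s --ip-masq=%s --mtu=%s"\n' \
      "${FLANNEL_SUBNET}" "${masq}" "${FLANNEL_MTU}"
  )
}

# Usage (pass an absolute path):
#   docker_opts_from_flannel_env /run/flannel/subnet.env
```

The `--bip` value is what moves docker0 into the subnet that Flannel leased for this host.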

6.4.6. Start docker and flannel

[root@kubernetes-master-01 ssl]# systemctl enable --now flanneld

Created symlink from /etc/systemd/system/multi-user.target.wants/flanneld.service to /usr/lib/systemd/system/flanneld.service.

Created symlink from /etc/systemd/system/docker.service.requires/flanneld.service to /usr/lib/systemd/system/flanneld.service.

[root@kubernetes-master-01 ssl]# systemctl restart docker

# Start flannel and docker on all the other nodes

[root@kubernetes-master-01 ~]# for i in m2 m3 n1 n2 n3 n4 n5; do ssh root@$i "systemctl enable --now flanneld && systemctl restart docker "; done

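6.5. Deploy the cluster DNS

CoreDNS resolves Service names for the Pods in the cluster; Kubernetes relies on CoreDNS for service discovery.

6.5.1. Download the CoreDNS deployment package

Download link: https://github.com/coredns/deployment

6.5.2. Unpack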

[root@kubernetes-master-01 ~]# unzip deployment-master.zip

6.5.3. Deploy CoreDNS

[root@kubernetes-master-01 kubernetes]# pwd

/opt/deployment-master/kubernetes

[root@kubernetes-master-01 kubernetes]# ./deploy.sh -i 10.96.0.2 -s | kubectl apply -f -
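The IP passed with `-i` has to match the `clusterDNS` entry in kubelet-config.yml (10.96.0.2 in this guide), otherwise Pods are pointed at a DNS address nobody serves. A minimal sketch for reading that value back from the file; `cluster_dns_ips` is a hypothetical helper and assumes the simple list layout used in this guide.

```shell
# Prints the addresses listed under "clusterDNS:" in the kubelet config
# file given as $1. Blank lines inside the list are tolerated.
cluster_dns_ips() {
  awk '
    /^clusterDNS:/ { in_list = 1; next }
    in_list && /^[[:space:]]*-[[:space:]]/ { print $2; next }
    in_list && NF > 0 { in_list = 0 }
  ' "$1"
}

# Usage:
#   cluster_dns_ips /etc/kubernetes/cfg/kubelet-config.yml
```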

6.5.4. Check the CoreDNS deployment result

[root@kubernetes-master-01 kubernetes]# kubectl get pods -n kube-system -w

NAME READY STATUS RESTARTS AGE

coredns-7c7c876d56-hscvc 1/1 Running 0 30s

6.6. Test the cluster

[root@kubernetes-master-01 kubernetes]# kubectl run --rm -it test --image=busybox:1.28.3

If you don't see a command prompt, try pressing enter.

/ # nslookup kubernetes

Server: 10.96.0.2

Address 1: 10.96.0.2 kube-dns.kube-system.svc.cluster.local

Name: kubernetes

Address 1: 10.96.0.1 kubernetes.default.svc.cluster.local

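7. Deploy the Worker nodes

Worker nodes are the nodes that run workloads; in production there can be many of them, depending on business needs.

7.1. Distribute the binaries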

[root@kubernetes-master-01 bin]# pwd

/opt/data/kubernetes/server/bin

[root@kubernetes-master-01 bin]# for i in n1 n2 n3 n4 n5 ; do scp kubelet kube-proxy root@$i:/usr/local/bin/; done

7.2. Distribute the certificates

[root@kubernetes-master-01 ssl]# pwd

/etc/kubernetes/ssl

[root@kubernetes-master-01 ssl]# for i in n1 n2 n3 n4 n5; do

ssh root@$i "mkdir -pv /etc/kubernetes/ssl";

scp -pr ./{ca*.pem,admin*pem,kube-proxy*pem} root@$i:/etc/kubernetes/ssl;

done

7.3. Configure the kubelet service

for ip in n1 n2 n3 n4 n5;do

ssh root@${ip} "mkdir -pv /var/log/kubernetes"

ssh root@${ip} "mkdir -pv /etc/kubernetes/cfg/"

scp /etc/kubernetes/cfg/{kubelet-config.yml,kubelet.conf} root@${ip}:/etc/kubernetes/cfg

scp /usr/lib/systemd/system/kubelet.service root@${ip}:/usr/lib/systemd/system

done

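# Run on each worker node, substituting that node's own hostname and IP (kubernetes-node-05 / 192.168.13.58 shown here)

sed -i 's#kubernetes-master-01#kubernetes-node-05#g' /etc/kubernetes/cfg/kubelet.conf

sed -i 's#192.168.13.51#192.168.13.58#g' /etc/kubernetes/cfg/kubelet-config.yml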
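Because the files are copied from kubernetes-master-01, they still carry that host's name and IP, and the two substitutions have to be repeated on every worker with that worker's values. A hedged sketch of a reusable helper follows; `localize_node_config` is a hypothetical name, and it writes through a temporary file rather than relying on `sed -i`.

```shell
# Replaces master-01's hostname and IP in a copied config file with the
# values of the node the file now lives on.
# $1 = file, $2 = node hostname, $3 = node IP
localize_node_config() {
  file="$1"; node_name="$2"; node_ip="$3"
  sed \
    -e "s#kubernetes-master-01#${node_name}#g" \
    -e "s#192\.168\.13\.51#${node_ip}#g" \
    "${file}" > "${file}.tmp" && mv "${file}.tmp" "${file}"
}

# Usage on kubernetes-node-05:
#   localize_node_config /etc/kubernetes/cfg/kubelet.conf kubernetes-node-05 192.168.13.58
#   localize_node_config /etc/kubernetes/cfg/kubelet-config.yml kubernetes-node-05 192.168.13.58
```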

7.4. Start the kubelet service

systemctl daemon-reload

systemctl enable --now kubelet

systemctl status kubelet.service

7.4. Configure the kube-proxy service

for ip in n1 n2 n3 n4 n5;do

scp /etc/kubernetes/cfg/{kube-proxy-config.yml,kube-proxy.conf} root@${ip}:/etc/kubernetes/cfg/

scp /usr/lib/systemd/system/kube-proxy.service root@${ip}:/usr/lib/systemd/system/

scp /etc/kubernetes/cfg/kube-proxy.kubeconfig root@${ip}:/etc/kubernetes/cfg/kube-proxy.kubeconfig

scp /etc/kubernetes/cfg/kubelet-bootstrap.kubeconfig root@${ip}:/etc/kubernetes/cfg/kubelet-bootstrap.kubeconfig

done

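# Run on each worker node; the copied file still carries master-01's values (kubernetes-node-04 / 192.168.13.57 shown here)

sed -i 's#192.168.13.51#192.168.13.57#g' /etc/kubernetes/cfg/kube-proxy-config.yml

sed -i 's#kubernetes-master-01#kubernetes-node-04#g' /etc/kubernetes/cfg/kube-proxy-config.yml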

7.5. Start the kube-proxy service

systemctl daemon-reload; systemctl enable --now kube-proxy; systemctl status kube-proxy

7.6. View the join requests

[root@kubernetes-master-01 cfg]# kubectl get csr

NAME AGE SIGNERNAME REQUESTOR CONDITION

node-csr-5ZwEAq3ujzq5YiCFhZp1enRXVr_NbtkzgLOWEGQeNY4 23s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending

node-csr-TLc-qSwJ-2ju9zotBNaj65qjpYcbeY4_dhlVFFAaFY4 19s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending

node-csr-VLGr0oc8-j8PsO_kj2uVcaUfzDWqb64jfkm7eLdeFrs 16s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending

node-csr-f7ij0cZNfHSUnmGXn3CtQfdWe-GX-W74glyCBLJFPes 14s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending

node-csr-xKPxjHqSa_ZkZrID8LuFX_oGi34uV7jkZXI7Q-3Te5Q 17s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending

7.7. Approve the join requests

[root@kubernetes-master-01 cfg]# kubectl certificate approve `kubectl get csr | grep "Pending" | awk '{print $1}'`

8. Set the cluster roles

A k8s cluster consists of Master nodes and Worker nodes.

8.1. Set the role for the Master nodes

kubectl label nodes kubernetes-master-01 node-role.kubernetes.io/master=kubernetes-master-01

kubectl label nodes kubernetes-master-02 node-role.kubernetes.io/master=kubernetes-master-02

kubectl label nodes kubernetes-master-03 node-role.kubernetes.io/master=kubernetes-master-03

8.2. Set the role for the Worker nodes

kubectl label nodes kubernetes-node-01 node-role.kubernetes.io/worker=kubernetes-node-01

kubectl label nodes kubernetes-node-02 node-role.kubernetes.io/worker=kubernetes-node-02

kubectl label nodes kubernetes-node-03 node-role.kubernetes.io/worker=kubernetes-node-03

kubectl label nodes kubernetes-node-04 node-role.kubernetes.io/worker=kubernetes-node-04

kubectl label nodes kubernetes-node-05 node-role.kubernetes.io/worker=kubernetes-node-05
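The eight label commands above follow one pattern, so they can also be generated in a loop. The sketch below only prints the commands for review and keeps the same label values as above; `label_commands` is a hypothetical helper name.

```shell
# Prints one "kubectl label" command per node.
# $1 = role ("master" or "worker"), remaining arguments = node names
label_commands() {
  role="$1"; shift
  for node in "$@"; do
    printf 'kubectl label nodes %s node-role.kubernetes.io/%s=%s\n' \
      "${node}" "${role}" "${node}"
  done
}

label_commands master kubernetes-master-01 kubernetes-master-02 kubernetes-master-03
label_commands worker kubernetes-node-01 kubernetes-node-02 kubernetes-node-03 \
  kubernetes-node-04 kubernetes-node-05
```

Piping the output to `sh` would execute the commands once they look right.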

This completes the binary deployment of k8s.