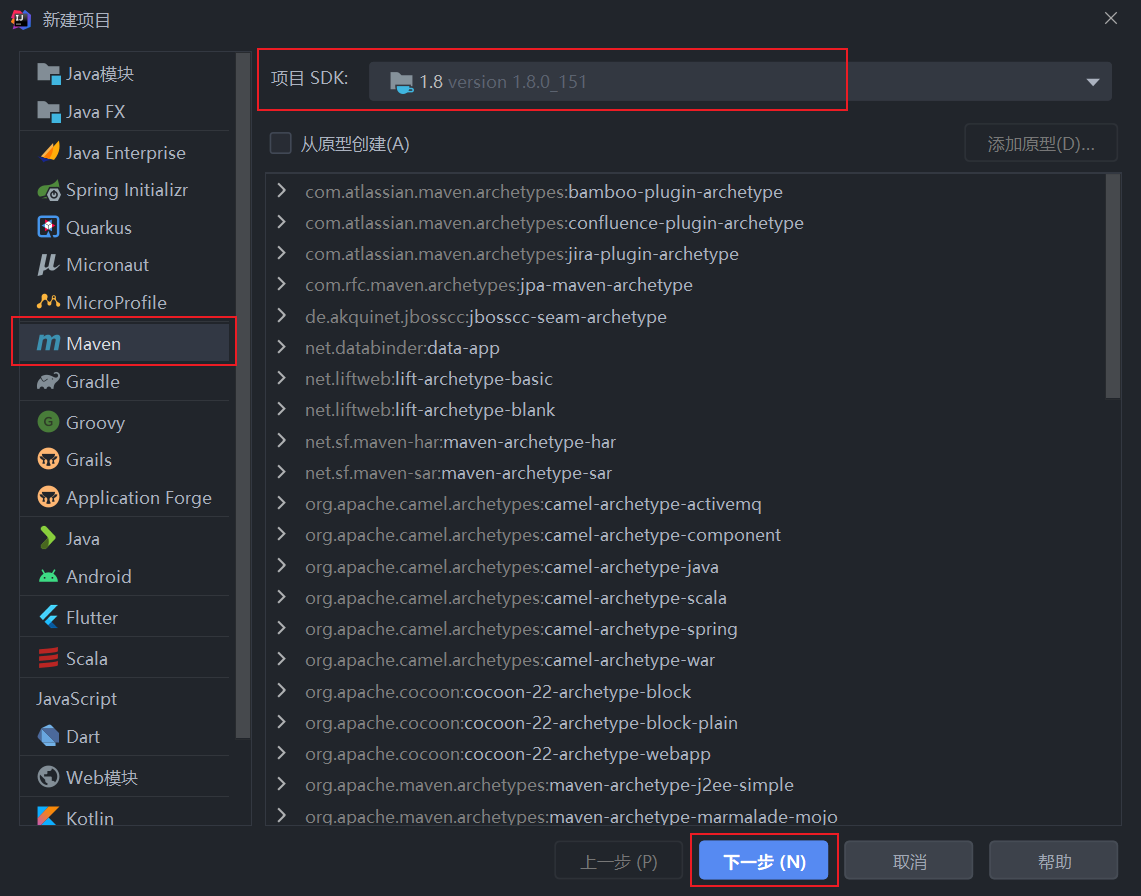

## 1. Create a Maven project

## 2. Configure Maven

Right-click pom.xml and choose Maven -> Open 'settings.xml'.

Change the configuration to:

    <settings xmlns="http://maven.apache.org/SETTINGS/1.0.0"
              xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
              xsi:schemaLocation="http://maven.apache.org/SETTINGS/1.0.0 http://maven.apache.org/xsd/settings-1.0.0.xsd">
      <localRepository/>
      <interactiveMode/>
      <usePluginRegistry/>
      <offline/>
      <pluginGroups/>
      <servers/>
      <mirrors>
        <mirror>
          <id>aliyunmaven</id>
          <mirrorOf>central</mirrorOf>
          <name>Aliyun public repository</name>
          <url>https://maven.aliyun.com/repository/central</url>
        </mirror>
        <mirror>
          <id>repo1</id>
          <mirrorOf>central</mirrorOf>
          <name>central repo</name>
          <!-- Maven Central only accepts HTTPS requests -->
          <url>https://repo1.maven.org/maven2/</url>
        </mirror>
        <mirror>
          <id>aliyunmaven</id>
          <mirrorOf>apache snapshots</mirrorOf>
          <name>Aliyun Apache snapshots repository</name>
          <url>https://maven.aliyun.com/repository/apache-snapshots</url>
        </mirror>
      </mirrors>
      <proxies/>
      <activeProfiles/>
      <profiles>
        <profile>
          <repositories>
            <repository>
              <id>aliyunmaven</id>
              <name>aliyunmaven</name>
              <url>https://maven.aliyun.com/repository/public</url>
              <layout>default</layout>
              <releases>
                <enabled>true</enabled>
              </releases>
              <snapshots>
                <enabled>true</enabled>
              </snapshots>
            </repository>
            <repository>
              <id>MavenCentral</id>
              <url>https://repo1.maven.org/maven2/</url>
            </repository>
            <repository>
              <id>aliyunmavenApache</id>
              <url>https://maven.aliyun.com/repository/apache-snapshots</url>
            </repository>
          </repositories>
        </profile>
      </profiles>
    </settings>

## 3. Configure pom.xml

    <?xml version="1.0" encoding="UTF-8"?>
    <project xmlns="http://maven.apache.org/POM/4.0.0"
             xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
             xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
      <modelVersion>4.0.0</modelVersion>

      <groupId>org.example</groupId>
      <artifactId>Demo3</artifactId>
      <version>1.0-SNAPSHOT</version>

      <properties>
        <maven.compiler.source>8</maven.compiler.source>
        <maven.compiler.target>8</maven.compiler.target>
        <scala.version>2.12.10</scala.version>
        <spark.version>3.1.1</spark.version>
      </properties>

      <dependencies>
        <!--scala-->
        <dependency>
          <groupId>org.scala-lang</groupId>
          <artifactId>scala-library</artifactId>
          <version>${scala.version}</version>
        </dependency>
        <!--spark-->
        <dependency>
          <groupId>org.apache.spark</groupId>
          <artifactId>spark-core_2.12</artifactId>
          <version>${spark.version}</version>
        </dependency>
        <!--hadoop-->
        <dependency>
          <groupId>org.apache.hadoop</groupId>
          <artifactId>hadoop-client</artifactId>
          <version>2.7.0</version>
        </dependency>
      </dependencies>
    </project>

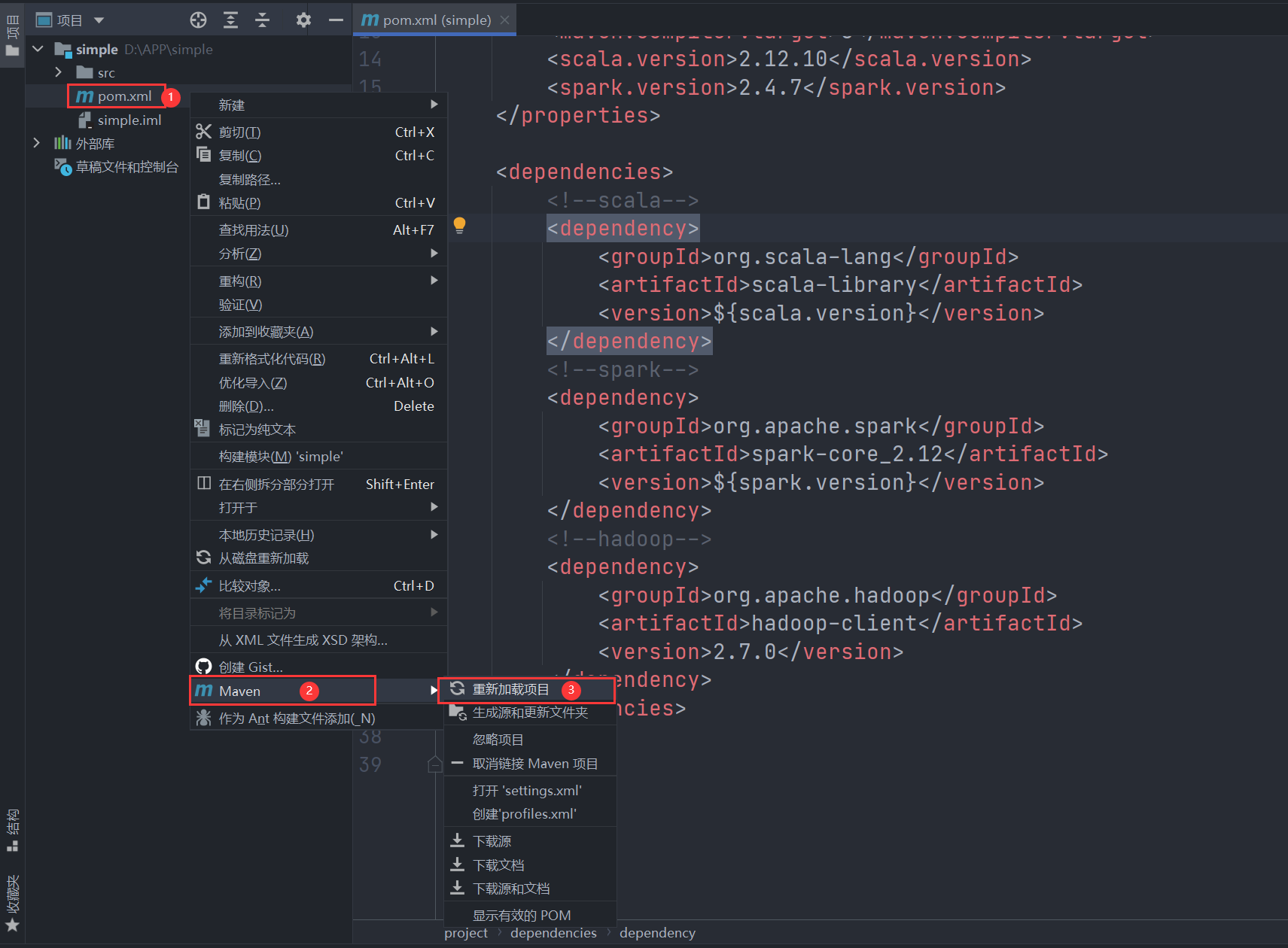

Right-click pom.xml and choose Maven -> Reload project.

## 4. Write the Spark program

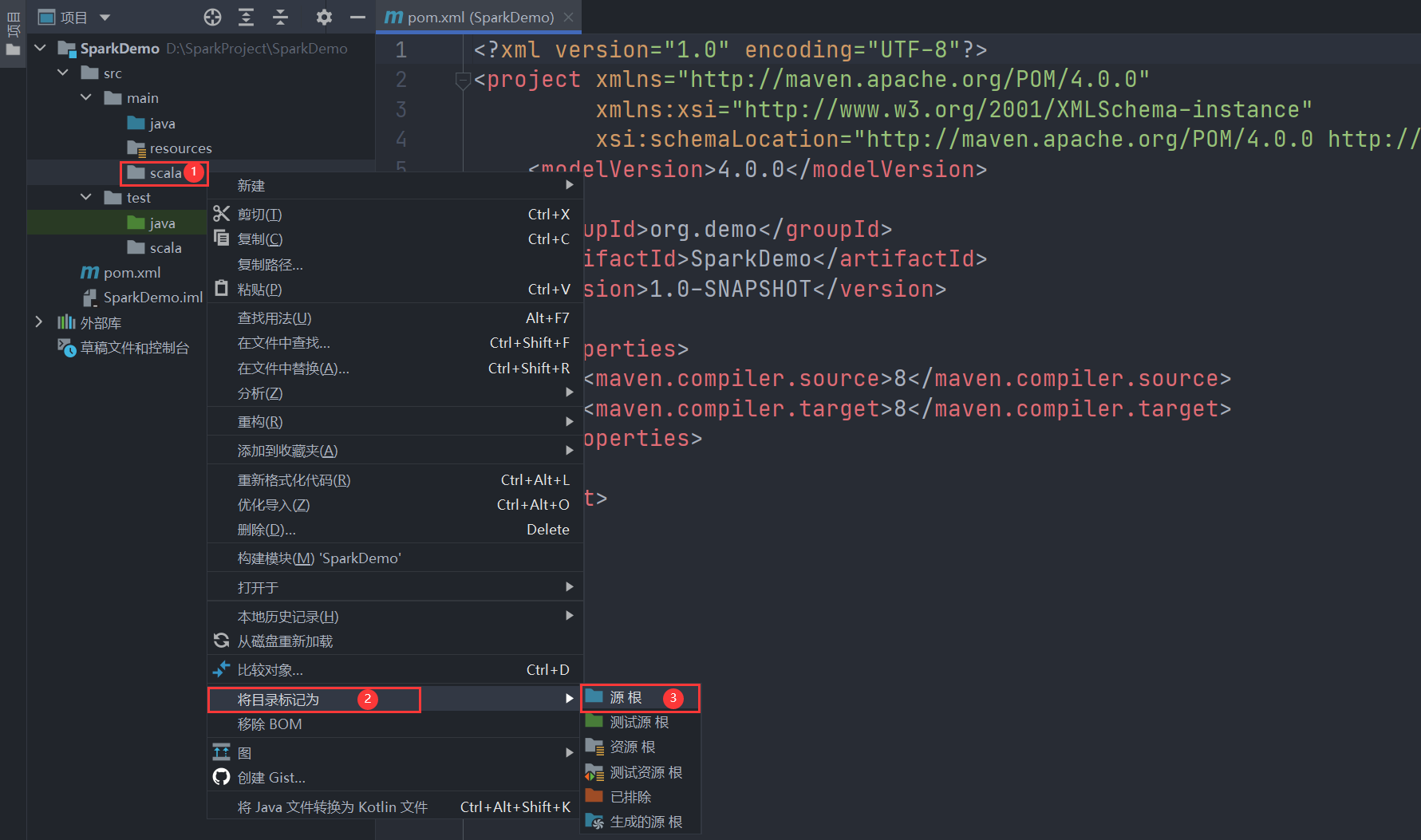

- Under the main directory, create a scala directory and mark it as Sources Root

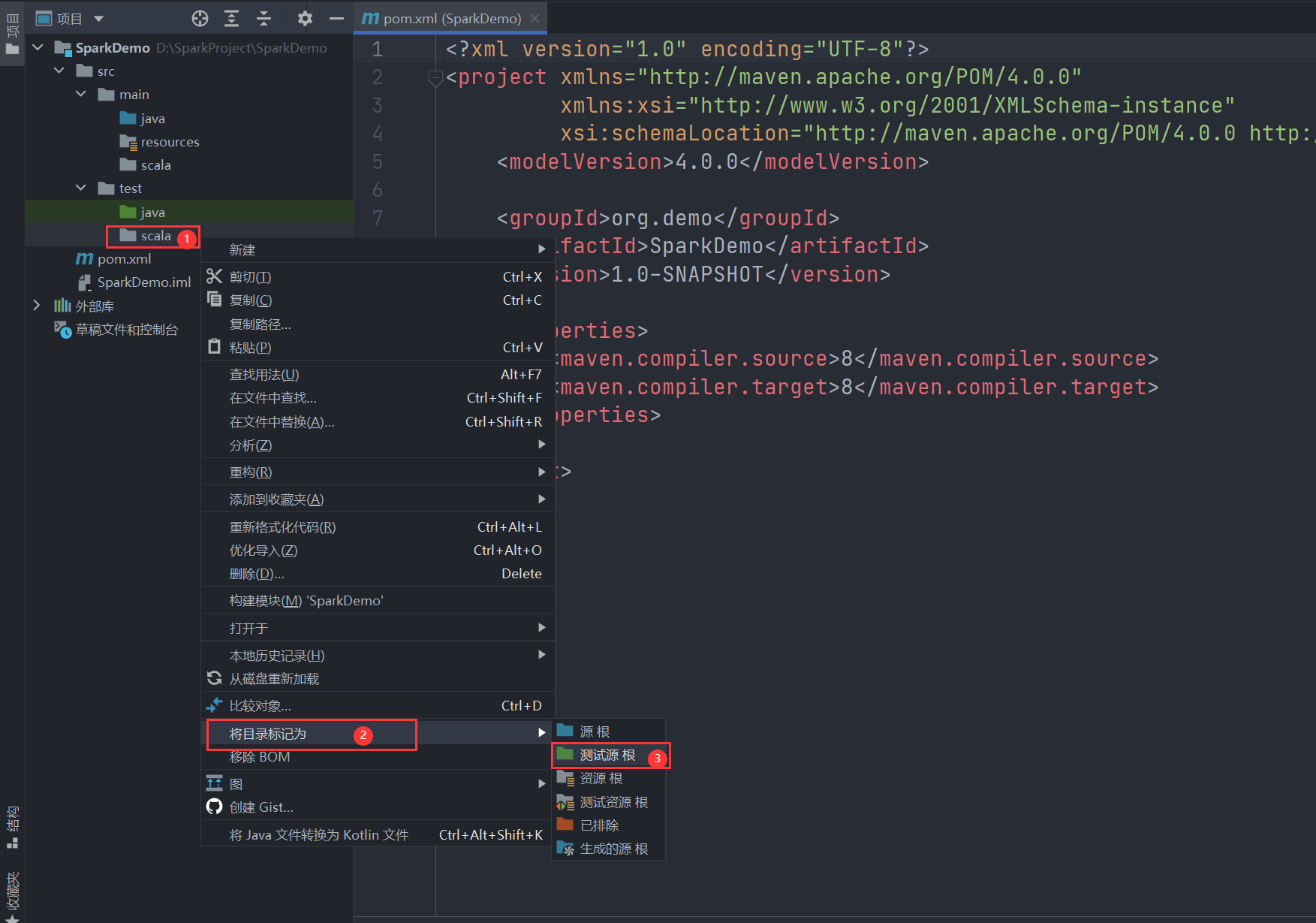

- Under the test directory, create a scala directory and mark it as Test Sources Root

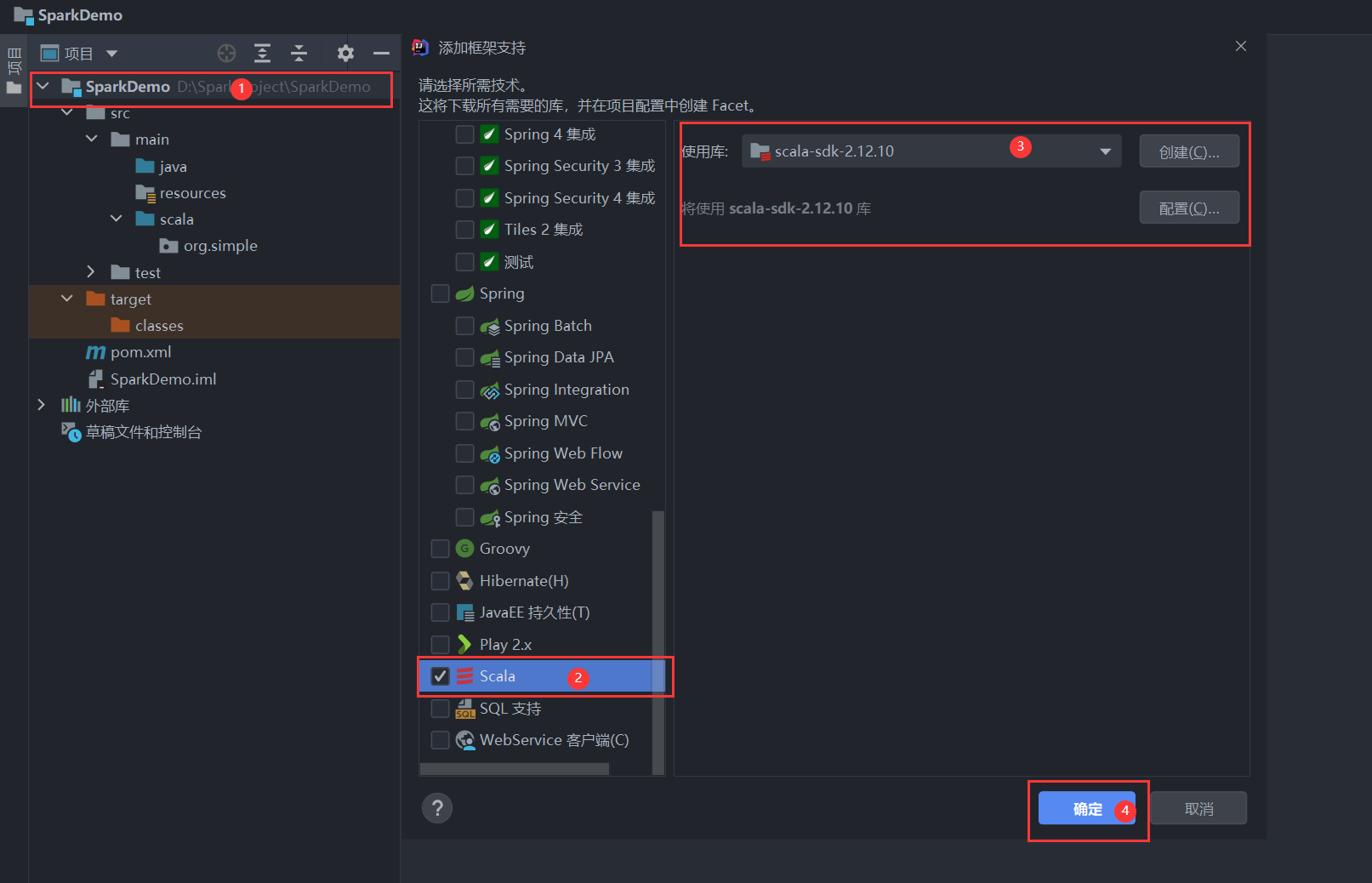

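- Add Scala support to the project

Right-click the project name and choose Add Framework Support.

- Under main/scala, add a package, then create a Scala class of kind Object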

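- Word count demo

```scala
package org.simple

import org.apache.log4j.{Level, Logger}
import org.apache.spark.{SparkConf, SparkContext}

object Demo {
  def main(args: Array[String]): Unit = {
    // Only show errors from loggers under "org" (this includes Spark's own logging)
    Logger.getLogger("org").setLevel(Level.ERROR)

    // Note: a master set in code takes precedence over spark-submit's --master flag
    val sparkConf = new SparkConf().setAppName("WordCount").setMaster("local")
    val sc = new SparkContext(sparkConf)

    // Word count: split each line on spaces, pair each word with 1, then sum per word
    val dataRDD = sc.textFile("D:\\SparkProject\\data\\test.txt")
      .flatMap(_.split(" "))
      .map(x => (x, 1))
      .reduceByKey((a, b) => a + b)

    dataRDD.foreach(println)

    // Release Spark resources once the job is done
    sc.stop()
  }
}
```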
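To sanity-check what the pipeline above should produce without starting Spark, the same steps can be mirrored on an in-memory collection. This is only a sketch: the object name `LocalWordCount` and the sample lines are made up for illustration, and `groupBy` followed by a sum stands in for `reduceByKey`.

```scala
// Plain-Scala mirror of the RDD pipeline; hypothetical helper, not part of the demo.
object LocalWordCount {
  def count(lines: Seq[String]): Map[String, Int] =
    lines
      .flatMap(_.split(" "))  // same tokenization as the RDD version
      .map(word => (word, 1)) // pair each word with a count of 1
      .groupBy(_._1)          // group the pairs by word
      .map { case (word, pairs) => (word, pairs.map(_._2).sum) } // add up the 1s per word

  def main(args: Array[String]): Unit = {
    val sample = Seq("spark hadoop spark", "scala spark") // made-up input lines
    count(sample).foreach(println)
  }
}
```

Comparing these counts with the Spark output for the same small input is a quick way to confirm that the job reads and splits the file as intended. The order of the printed pairs is not guaranteed in either version.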

<a name="DB4Bp"></a>

## 5. Run the program

1. Run directly

Right-click the program and run it directly.

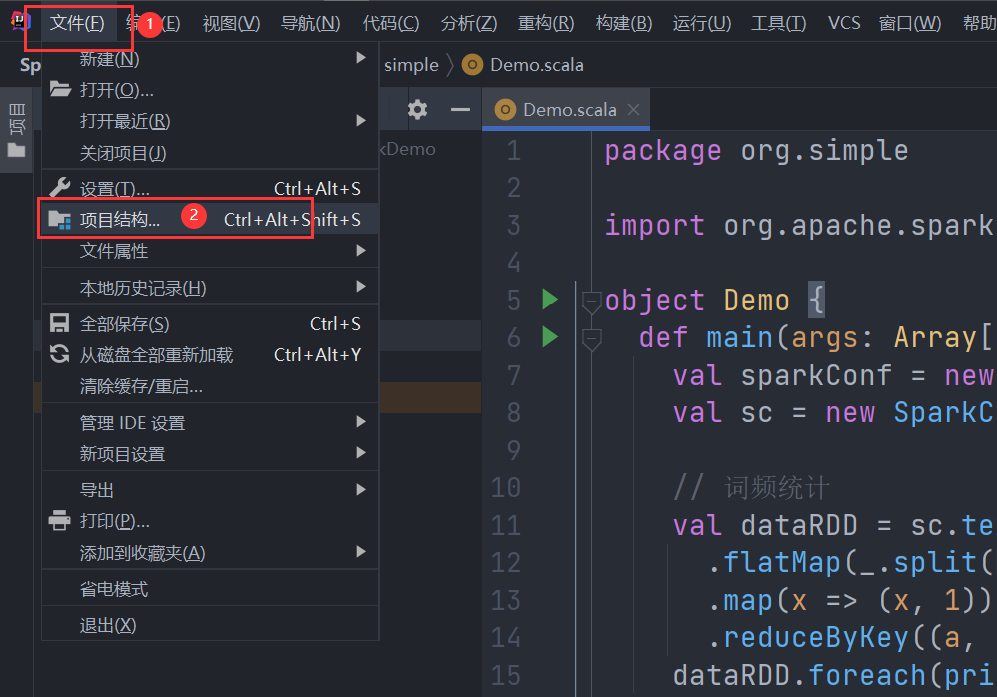
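Running from the IDE works because the demo sets the master to local in code. Spark gives properties set directly on `SparkConf` precedence over flags passed to `spark-submit`, so the same hard-coded value would also win over `--master` when the JAR is submitted to a cluster. One possible way around this is to set a local master only when none was supplied from outside. The following is a minimal sketch, assuming Spark 3.1.1 on the classpath as declared in pom.xml; the object name `DemoConf` is hypothetical and not part of the original project.

```scala
import org.apache.spark.SparkConf

// Hypothetical helper, not part of the original demo.
object DemoConf {
  // Keep a master that was supplied from outside (for example by spark-submit --master)
  // and fall back to a local master only when none was given.
  def build(base: SparkConf = new SparkConf()): SparkConf =
    base.setAppName("WordCount").setIfMissing("spark.master", "local[*]")
}
```

With a helper like this, `new SparkContext(DemoConf.build())` would behave the same in the IDE, while a submitted job would use the cluster master given on the command line.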

2. Package and run

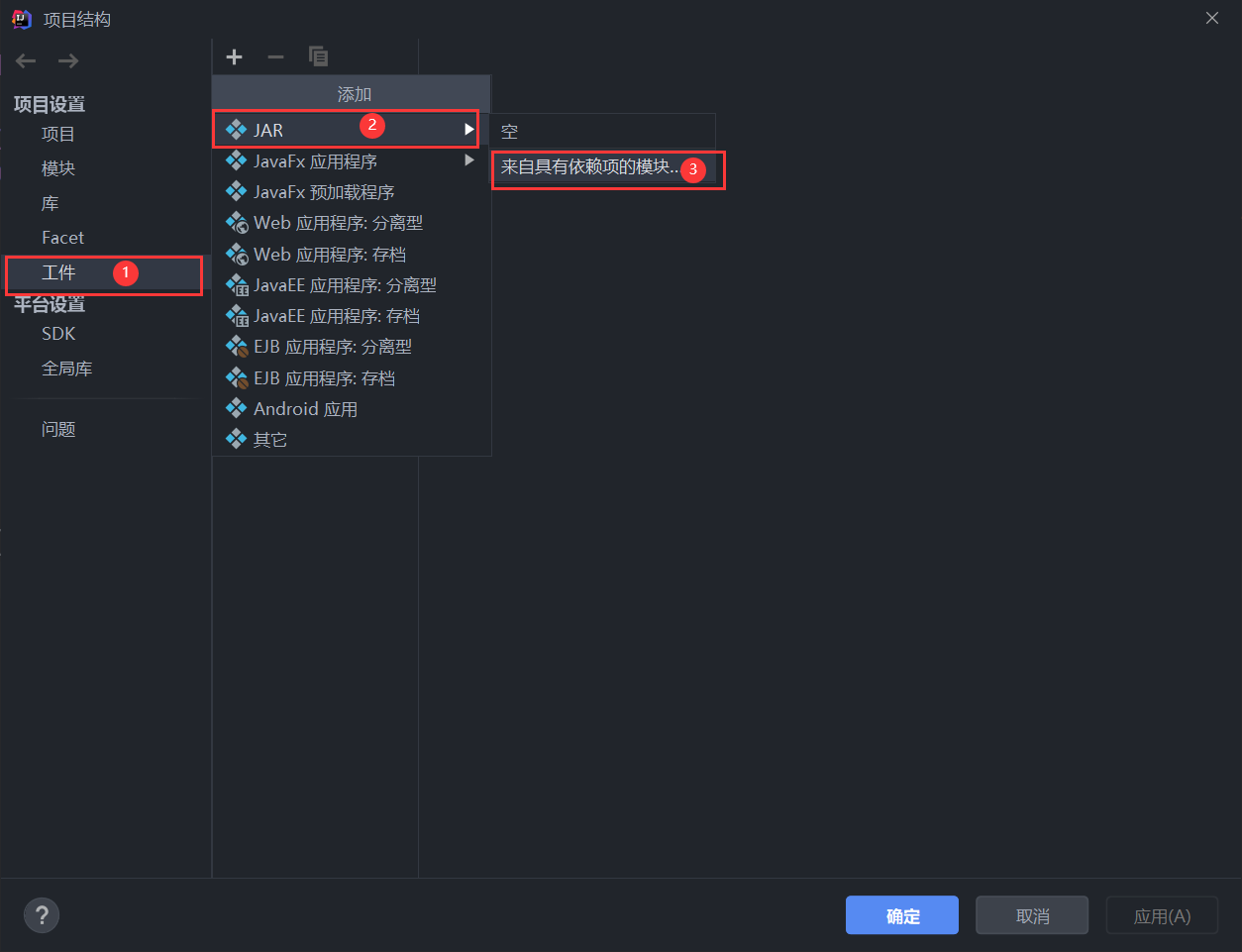

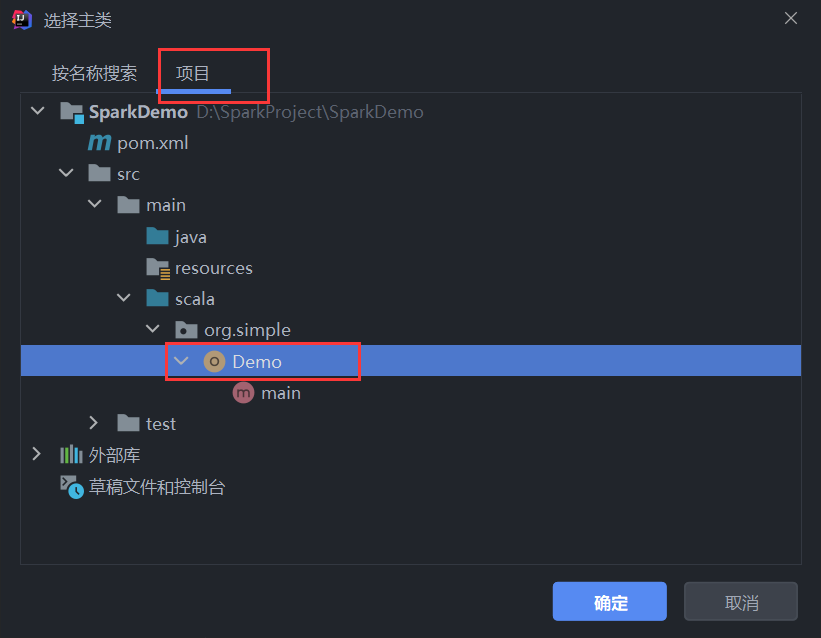

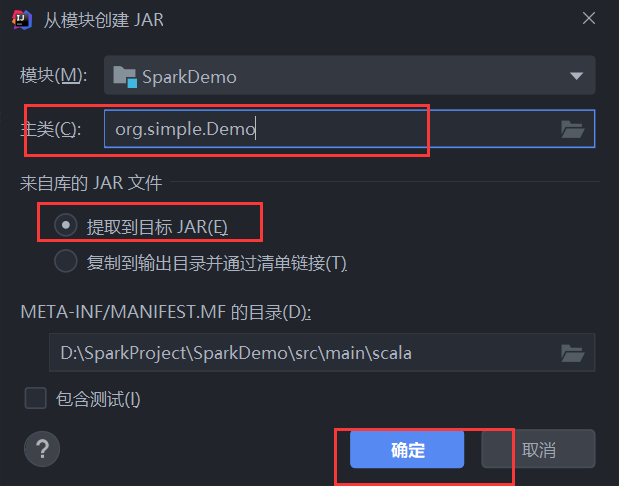

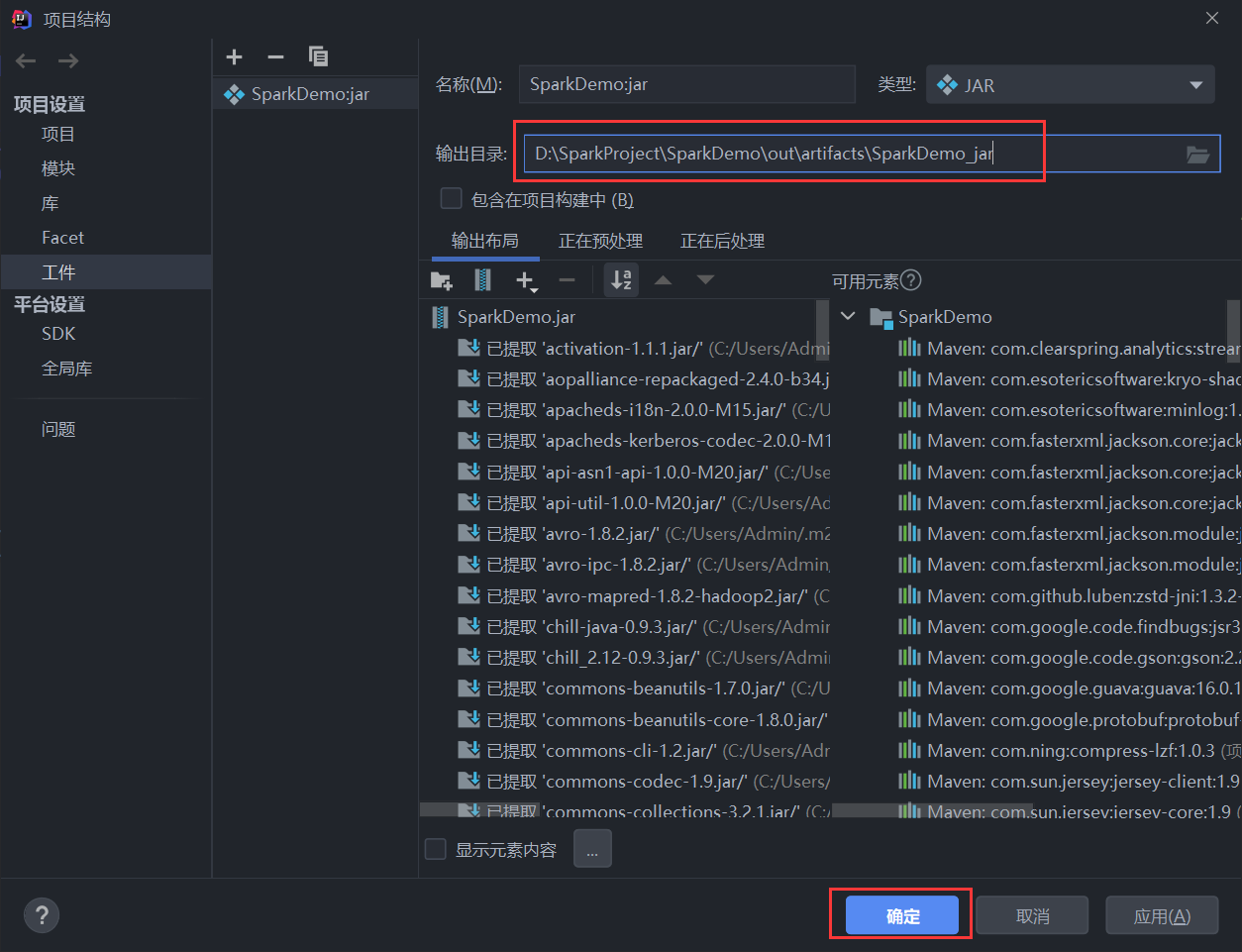

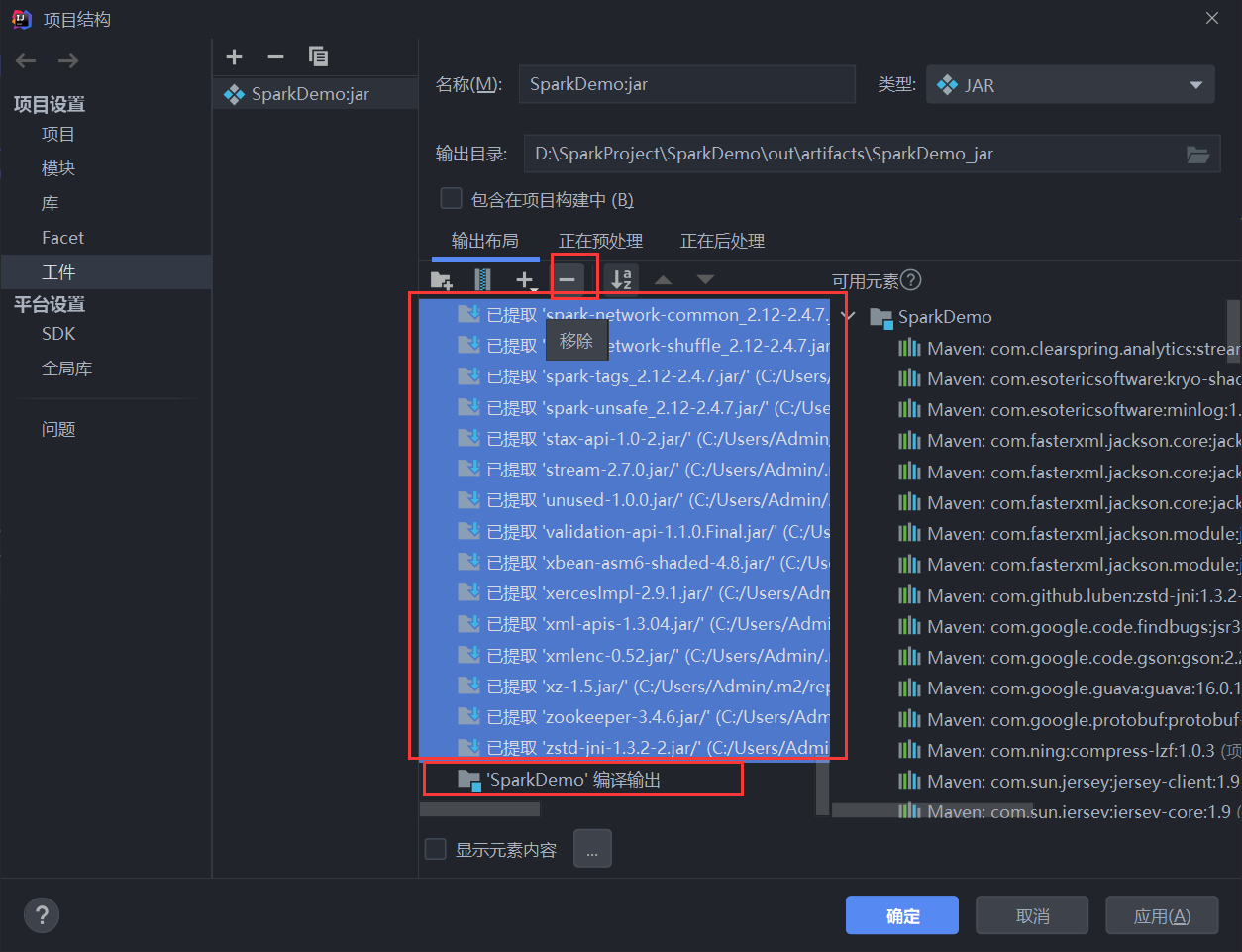

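Choose File -> Project Structure, then follow the steps shown in the screenshots:

- Select the main class
- Remove the dependency JARs that are not needed, keeping only the last entry
- Build the JAR

Upload the JAR to the Spark cluster and run it: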

```bash

spark-submit --class org.simple.Demo --master spark://spark0:7077 ./SparkDemo.jar