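ReplicaSet: managing replicas

The manifest below starts three nginx Pods. Whenever one of them terminates, the ReplicaSet immediately starts a replacement, so three Pods are always running.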

    apiVersion: apps/v1
    kind: ReplicaSet
    metadata:
      name: nginx
    spec:
      replicas: 3
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
          - name: nginx
            image: nginx
            ports:
            - containerPort: 80

In practice you rarely create a ReplicaSet directly; use a Deployment instead.

Deployment: managing ReplicaSets

The Deployment controller provides declarative updates for Pods and ReplicaSets.

A Deployment manages ReplicaSets, and each ReplicaSet in turn manages Pods.

Create a Deployment that runs 2 Pods:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx
      namespace: default
      labels:
        app: nginx
    spec:
      selector:
        matchLabels:
          app: nginx
      replicas: 2
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
          - name: nginx
            image: nginx
            ports:
            - containerPort: 80

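After the Deployment is created, you can see that it automatically creates a ReplicaSet.

Changing replicas in the Deployment YAML file and re-applying it scales the Pods horizontally.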
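As a rough illustration of scaling by editing the manifest, the Deployment from this section could be changed as follows. Only replicas differs from the original, and the value 4 is an arbitrary choice for this sketch.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx
  namespace: default
  labels:
    app: nginx
spec:
  selector:
    matchLabels:
      app: nginx
  replicas: 4            # was 2; 4 is an arbitrary example value
  template:              # Pod template is unchanged, so no new rollout is triggered
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: nginx
        ports:
        - containerPort: 80
```

Re-applying the file (for example with kubectl apply -f, using whatever file name the manifest was saved under) should leave the two existing Pods running and add two more.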

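StatefulSet: stateful applications

StatefulSet is the workload API object used to manage stateful applications.

It fits applications whose data lives on disk and that should not suddenly drift to another node without that data.

Each Pod in a StatefulSet mounts its own independent storage. If a Pod fails, a Pod with the same name is started (possibly on another node) and reattaches the original Pod's storage, so it keeps serving with the same state.

Limitations

- The storage for a given Pod must be provisioned by a PersistentVolume provisioner based on the requested storage class, or pre-provisioned by an administrator.

- Deleting or scaling down a StatefulSet does not delete the volumes associated with it.

- A StatefulSet currently requires a headless Service, which is responsible for the network identity of its Pods.

A headless Service sets clusterIP: None in its manifest, so it is not assigned a cluster IP and DNS lookups resolve directly to the Pods.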
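To make "DNS resolves directly to the Pods" concrete, a throwaway Pod can look up the per-Pod DNS name. This is only a sketch: it assumes the headless Service nginx and the StatefulSet web from the example in this section are already deployed in the default namespace. The Pod name dns-check and the busybox:1.28 image are choices made for this illustration, not part of the original setup.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: dns-check                  # hypothetical name for this sketch
  namespace: default
spec:
  restartPolicy: Never             # run the lookup once and stop
  containers:
  - name: dns-check
    image: busybox:1.28            # assumption: any image that ships nslookup would do
    # Per-Pod name format: <pod-name>.<service-name>.<namespace>.svc.cluster.local
    command: ['nslookup', 'web-0.nginx.default.svc.cluster.local']
```

The Pod's log should then show the IP address of Pod web-0 itself rather than a Service virtual IP. The cluster.local suffix is the default cluster domain and may differ in a customized cluster.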

Example

Create a headless Service, then create the StatefulSet, configure the persistent storage it mounts, and define the volume claim template:

    apiVersion: v1
    kind: Service
    metadata:
      name: nginx
      labels:
        app: nginx
    spec:
      selector:
        app: nginx
      clusterIP: None
      ports:
      - name: web
        port: 80
    ---
    apiVersion: apps/v1
    kind: StatefulSet
    metadata:
      name: web
    spec:
      selector:
        matchLabels:
          app: nginx
      serviceName: nginx
      replicas: 3
      template:
        metadata:
          labels:
            app: nginx
        spec:
          terminationGracePeriodSeconds: 10
          containers:
          - name: nginx
            image: nginx
            ports:
            - containerPort: 80
              name: web
            volumeMounts:
            - name: www
              mountPath: /usr/share/nginx/html
      volumeClaimTemplates:
      - metadata:
          name: www
        spec:
          accessModes: [ "ReadWriteOnce" ]
          storageClassName: "my-storage-class"
          resources:
            requests:
              storage: 1Gi

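storageClassName must refer to a StorageClass that already exists in the cluster. Each Pod then gets its own volume (allocated on the virtual machine in this setup) that holds /usr/share/nginx/html.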
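For reference, a StorageClass with the name used in the StatefulSet might look like the sketch below. The provisioner is an assumption: kubernetes.io/no-provisioner means volumes are not created dynamically, so matching PersistentVolumes would have to be created by hand. A cluster with a dynamic provisioner would name that provisioner here instead.

```yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: my-storage-class                      # must match storageClassName in the volume claim template
provisioner: kubernetes.io/no-provisioner     # assumption: statically provisioned volumes
volumeBindingMode: WaitForFirstConsumer       # delay binding until a Pod using the claim is scheduled
```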

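DaemonSet: background tasks

A DaemonSet ensures that every node runs one copy of a Pod. When a node joins the cluster, a Pod is added for it; when a node is removed from the cluster, its Pod is garbage-collected. Deleting a DaemonSet deletes all the Pods it created.

Some typical uses

- Running a storage daemon on every node, such as glusterd or ceph

- Running a log collection daemon on every node, such as logstash

- Running a monitoring daemon on every node

Example

Collect logs from each node. The host's /var/log directory is mounted into the container at /tmp/log; the nginx image stands in for a real log collector: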

    apiVersion: apps/v1
    kind: DaemonSet
    metadata:
      name: ds-test
      labels:
        app: filebeat
    spec:
      selector:
        matchLabels:
          app: filebeat
      template:
        metadata:
          labels:
            app: filebeat
        spec:
          containers:
          - name: logs
            image: nginx
            ports:
            - containerPort: 80
            volumeMounts:
            - name: varlog
              mountPath: /tmp/log
          volumes:
          - name: varlog
            hostPath:
              path: /var/log

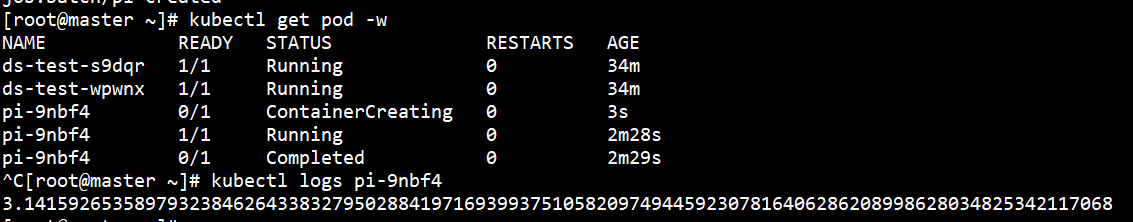
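One caveat to "every node": nodes that carry taints, such as control-plane nodes in many clusters, are skipped unless the Pod template tolerates the taint. The fragment below is a sketch of what could be merged into the ds-test DaemonSet to cover them. Whether a cluster uses this exact taint key is an assumption; older clusters may use node-role.kubernetes.io/master instead.

```yaml
# Fragment to merge into the Pod template of the DaemonSet ds-test.
spec:
  template:
    spec:
      tolerations:
      - key: node-role.kubernetes.io/control-plane   # assumption: taint key set by the cluster installer
        operator: Exists                             # tolerate the taint regardless of its value
        effect: NoSchedule
```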

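Job: tasks

A Job creates one or more Pods and ensures that a specified number of them terminate successfully. As Pods complete successfully, the Job tracks the number of successful completions; when that number reaches the specified threshold, the Job is complete. Deleting a Job cleans up all the Pods it created.

One-off tasks

Create a Job that computes the first 100 digits of π: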

    apiVersion: batch/v1
    kind: Job
    metadata:
      name: pi
    spec:
      template:
        spec:
          containers:
          - name: pi
            image: registry.cn-beijing.aliyuncs.com/google_registry/perl:5.26
            command: ['perl', '-Mbignum=bpi', '-wle', 'print bpi(100)']
          restartPolicy: Never
      backoffLimit: 4
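The Job above needs only one successful Pod, which is the default. The "specified number of successful completions" mentioned earlier is set with completions, and parallelism caps how many Pods run at once. A minimal sketch, where the name pi-batch and the values 3 and 2 are arbitrary choices for illustration:

```yaml
apiVersion: batch/v1
kind: Job
metadata:
  name: pi-batch          # hypothetical name for this sketch
spec:
  completions: 3          # the Job is complete after 3 Pods have succeeded
  parallelism: 2          # run at most 2 Pods at the same time
  backoffLimit: 4         # give up after 4 retries of failed Pods
  template:
    spec:
      containers:
      - name: pi
        image: registry.cn-beijing.aliyuncs.com/google_registry/perl:5.26
        command: ['perl', '-Mbignum=bpi', '-wle', 'print bpi(100)']
      restartPolicy: Never
```

With these values the Job would typically start two Pods, then a third once one of the first two has finished.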

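Cron tasks

The schedule uses the standard five-field cron format; unlike Quartz-style expressions, it does not need a trailing ?.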

    # batch/v1beta1 CronJob was removed in Kubernetes 1.25; batch/v1 is available from 1.21
    apiVersion: batch/v1
    kind: CronJob
    metadata:
      name: hello
      namespace: default
    spec:
      schedule: "*/5 * * * *"    # every 5 minutes
      jobTemplate:
        spec:
          template:
            spec:
              containers:
              - name: hello
                image: busybox
                args: ['/bin/sh', '-c', 'date; echo Hello from the Kubernetes cluster']
              restartPolicy: OnFailure
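As a quick reference for the five schedule fields, the fragment below lists a few alternative values. The two commented-out schedules are illustrative examples, not taken from the original setup; */5 is the conventional way to write a five-minute step.

```yaml
# Field order: minute  hour  day-of-month  month  day-of-week
spec:
  schedule: "*/5 * * * *"      # every 5 minutes
  # schedule: "0 * * * *"      # at minute 0 of every hour
  # schedule: "30 2 * * 1"     # at 02:30 every Monday
```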