Kubernetes architecture diagram

Installing k8s with kubeadm

master & node

Hardware configuration

Linux configuration

    # Set a unique hostname on each machine
    hostnamectl set-hostname xxxx

    # Set SELinux to permissive mode (effectively disabling it)
    sudo setenforce 0
    sudo sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' /etc/selinux/config

    # Turn off swap now, and comment out swap entries so it stays off after reboot
    swapoff -a
    sed -ri 's/.*swap.*/#&/' /etc/fstab

    # Allow iptables to see bridged traffic
    cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf
    br_netfilter
    EOF

    cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf
    net.bridge.bridge-nf-call-ip6tables = 1
    net.bridge.bridge-nf-call-iptables = 1
    EOF

    sudo sysctl --system
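The sed command above comments out every fstab line that mentions swap, so it is worth confirming that no active swap entry is left before moving on. The following is a minimal sketch of such a check; the function names `active_swap_entries` and `swap_disabled_in_fstab` are hypothetical helpers and are not part of any Kubernetes tooling.

```shell
#!/bin/sh
# Hypothetical helpers (assumptions for illustration only).

# Print every non-comment fstab line whose filesystem type (3rd field) is "swap".
# $1 is the file to inspect; it defaults to /etc/fstab.
active_swap_entries() {
  awk '$1 !~ /^#/ && $3 == "swap"' "${1:-/etc/fstab}"
}

# Return 0 when no active swap entry remains in the file, 1 otherwise.
swap_disabled_in_fstab() {
  [ -z "$(active_swap_entries "$1")" ]
}
```

Running `swap_disabled_in_fstab && echo "swap stays off after reboot"` on each machine gives a quick confirmation. If it fails, `active_swap_entries` shows the lines that still need to be commented out.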

Install Docker

    # Remove any existing Docker installation
    sudo yum remove docker \
        docker-client \
        docker-client-latest \
        docker-common \
        docker-latest \
        docker-latest-logrotate \
        docker-logrotate \
        docker-engine

    # Install the yum utilities
    yum install -y yum-utils

    # Point the Docker repo at a mirror in China
    yum-config-manager \
        --add-repo \
        https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

    # Refresh the yum index
    yum makecache fast

    # Install docker-ce, the community edition (ee is the paid enterprise edition)
    yum install docker-ce docker-ce-cli containerd.io

    # Start Docker
    systemctl enable docker
    systemctl start docker

    # Run docker version to check that the installation succeeded
    [root@VM-8-9-centos ~]# docker version
    Client: Docker Engine - Community
     Version:           20.10.9
     API version:       1.41
     Go version:        go1.16.8
     Git commit:        c2ea9bc
     Built:             Mon Oct  4 16:08:25 2021
     OS/Arch:           linux/amd64
     Context:           default
     Experimental:      true

    Server: Docker Engine - Community
     Engine:
      Version:          20.10.9
      API version:      1.41 (minimum version 1.12)
      Go version:       go1.16.8
      Git commit:       79ea9d3
      Built:            Mon Oct  4 16:06:48 2021
      OS/Arch:          linux/amd64
      Experimental:     false
     containerd:
      Version:          1.4.11
      GitCommit:        5b46e404f6b9f661a205e28d59c982d3634148f8
     runc:
      Version:          1.0.2
      GitCommit:        v1.0.2-0-g52b36a2
     docker-init:
      Version:          0.19.0
      GitCommit:        de40ad0

Download the images that may be needed

    sudo tee ./images.sh <<-'EOF'
    #!/bin/bash
    images=(
    kube-apiserver:v1.20.9
    kube-proxy:v1.20.9
    kube-controller-manager:v1.20.9
    kube-scheduler:v1.20.9
    coredns:1.7.0
    etcd:3.4.13-0
    pause:3.2
    )
    for imageName in "${images[@]}" ; do
    docker pull registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/$imageName
    done
    EOF

    chmod +x ./images.sh && ./images.sh

Configure the master node's domain name

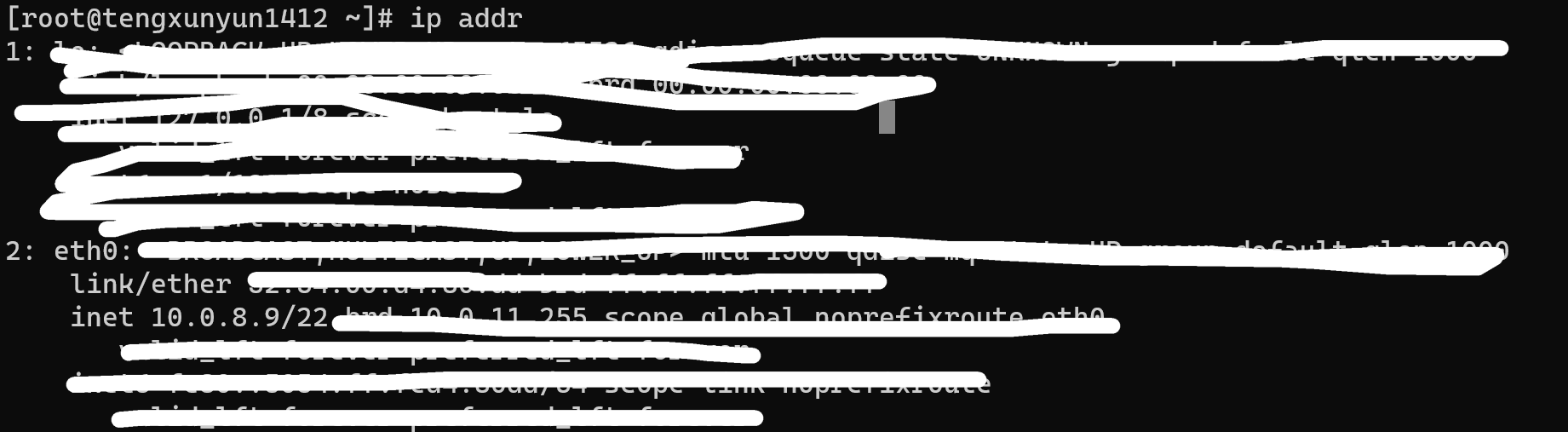

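Pick one machine as the master node and use the others as worker nodes, then look up the master node's IP address.

Write this address into the hosts file on the master node and on every worker node, replacing xx.xx.xx.xx with the master node's IP.

    echo "xx.xx.xx.xx cluster-endpoint" >> /etc/hosts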
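Because `>>` appends unconditionally, running the command above twice leaves two entries for `cluster-endpoint`. A minimal sketch of an idempotent variant follows; `add_host_entry` is a hypothetical helper name, and the IP address in the usage note is only a placeholder.

```shell
#!/bin/sh
# Hypothetical helper (an assumption, not part of the original steps).
# Usage: add_host_entry IP NAME [FILE]   (FILE defaults to /etc/hosts)
add_host_entry() (
  ip=$1 name=$2 file=${3:-/etc/hosts}
  # awk exits 0 if NAME appears as a hostname field on a non-comment line.
  if awk -v n="$name" '
       $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
       END { exit !found }' "$file"; then
    echo "$name is already mapped in $file"
  else
    echo "$ip $name" >> "$file"
  fi
)
```

For example, `add_host_entry 10.0.0.5 cluster-endpoint` (with your master's real IP in place of 10.0.0.5) can be run on every machine any number of times without duplicating the line.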

Install kubelet, kubeadm, and kubectl

    cat <<EOF | sudo tee /etc/yum.repos.d/kubernetes.repo
    [kubernetes]
    name=Kubernetes
    baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
    enabled=1
    gpgcheck=0
    repo_gpgcheck=0
    gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
       http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
    exclude=kubelet kubeadm kubectl
    EOF

    sudo yum install -y kubelet-1.20.9 kubeadm-1.20.9 kubectl-1.20.9 --disableexcludes=kubernetes

    sudo systemctl enable --now kubelet

master

Install the control plane

Install the control plane on the master node. When doing so, make sure the network ranges of service-cidr, pod-network-cidr, docker0, and eth0 do not overlap.

    # Replace xx.xx.xx.xx with the master node's IP
    kubeadm init \
        --apiserver-advertise-address=xx.xx.xx.xx \
        --control-plane-endpoint=cluster-endpoint \
        --image-repository registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images \
        --kubernetes-version v1.20.9 \
        --service-cidr=10.96.0.0/16 \
        --pod-network-cidr=192.168.0.0/16
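The non-overlap requirement can be checked before running `kubeadm init`. The sketch below is a plain shell illustration for IPv4 CIDRs only; the function names `ip_to_int`, `cidr_range`, and `cidrs_overlap` are hypothetical helpers, not kubeadm features. Two ranges overlap when each one starts no later than the other ends.

```shell
#!/bin/sh
# Hypothetical helpers (assumptions for illustration); IPv4 only.

# Convert a dotted-quad address such as 10.96.0.0 to a 32-bit integer.
ip_to_int() (
  IFS=.
  set -- $1          # split the address into four octets
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
)

# Print "first last" (as integers) for a CIDR such as 10.96.0.0/16.
cidr_range() (
  addr=$(ip_to_int "${1%/*}")
  prefix=${1#*/}
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  first=$(( addr & mask ))
  last=$(( first | (~mask & 0xFFFFFFFF) ))
  echo "$first $last"
)

# Return 0 if the two CIDRs share at least one address, 1 otherwise.
cidrs_overlap() (
  # After set: $1=first1 $2=last1 $3=first2 $4=last2
  set -- $(cidr_range "$1") $(cidr_range "$2")
  [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
)
```

With the values used above, `cidrs_overlap 10.96.0.0/16 192.168.0.0/16` returns non-zero, meaning the service and pod ranges are disjoint. The same check can be repeated against the subnets shown by `ip addr` for docker0 and eth0 on your machines.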

Once installation finishes, output like the following appears.

    Your Kubernetes control-plane has initialized successfully!

    To start using your cluster, you need to run the following as a regular user:

      mkdir -p $HOME/.kube
      sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
      sudo chown $(id -u):$(id -g) $HOME/.kube/config

    Alternatively, if you are the root user, you can run:

      export KUBECONFIG=/etc/kubernetes/admin.conf

    You should now deploy a pod network to the cluster.
    Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
      https://kubernetes.io/docs/concepts/cluster-administration/addons/

    You can now join any number of control-plane nodes by copying certificate authorities
    and service account keys on each node and then running the following as root:

      kubeadm join cluster-endpoint:6443 --token hums8f.vyx71prsg74ofce7 \
        --discovery-token-ca-cert-hash sha256:a394d059dd51d68bb007a532a037d0a477131480ae95f75840c461e85e2c6ae3 \
        --control-plane

    Then you can join any number of worker nodes by running the following on each as root:

      kubeadm join cluster-endpoint:6443 --token hums8f.vyx71prsg74ofce7 \
        --discovery-token-ca-cert-hash sha256:a394d059dd51d68bb007a532a037d0a477131480ae95f75840c461e85e2c6ae3

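Set up the kubeconfig file

As the output above says, a regular user runs:

    mkdir -p $HOME/.kube
    sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
    sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, the root user can run:

    export KUBECONFIG=/etc/kubernetes/admin.conf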

Install the network add-on

    curl https://docs.projectcalico.org/manifests/calico.yaml -O
    kubectl apply -f calico.yaml

node

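Join the cluster

The command below is the last part of the output printed after the control plane is installed. Copy it to each worker node and run it there.

    kubeadm join cluster-endpoint:6443 --token hums8f.vyx71prsg74ofce7 \
        --discovery-token-ca-cert-hash sha256:a394d059dd51d68bb007a532a037d0a477131480ae95f75840c461e85e2c6ae3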

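Get a new token

The token printed after the control plane is installed is valid only for a limited time (24 hours by default). If it has expired and another node needs to join the cluster, run the following on the master node to print a fresh join command:

    [root@k8s-master ~]# kubeadm token create --print-join-command
    kubeadm join cluster-endpoint:6443 --token 0d4c8q.tjsk2ge3u95070ll --discovery-token-ca-cert-hash sha256:5f7659f39b097ed9312bb5b266d629551d93aaaa087c40076b9cba03988f50f9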

Check cluster status

List the cluster's nodes

    [root@k8s-master ~]# kubectl get nodes
    NAME         STATUS   ROLES                  AGE   VERSION
    k8s-master   Ready    control-plane,master   44h   v1.20.9
    k8s-node1    Ready    <none>                 44h   v1.20.9
    k8s-node2    Ready    <none>                 44h   v1.20.9
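When there are more than a handful of nodes, reading the STATUS column by eye becomes error-prone. As a rough sketch, the filter below reads `kubectl get nodes` output on stdin and prints only the nodes that are not plainly `Ready`. The function name `nodes_not_ready` is a hypothetical helper, and it assumes the default column layout shown above.

```shell
#!/bin/sh
# Hypothetical helper (an assumption for illustration).
# Reads `kubectl get nodes` output on stdin and prints the NAME of every node
# whose STATUS column is not exactly "Ready". A cordoned node shows
# "Ready,SchedulingDisabled" and is therefore reported too.
nodes_not_ready() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}
```

Usage: `kubectl get nodes | nodes_not_ready`. Empty output means every node reports `Ready`.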

Check whether the cluster is running normally

    [root@k8s-master ~]# kubectl get pods -A
    NAMESPACE     NAME                                       READY   STATUS    RESTARTS   AGE
    kube-system   calico-kube-controllers-659bd7879c-7r9cg   1/1     Running   2          44h
    kube-system   calico-node-c5bcv                          1/1     Running   2          44h
    kube-system   calico-node-plbqz                          1/1     Running   2          44h
    kube-system   calico-node-v9lrm                          1/1     Running   2          44h
    kube-system   coredns-5897cd56c4-lb5zl                   1/1     Running   2          44h
    kube-system   coredns-5897cd56c4-x9sc9                   1/1     Running   2          44h
    kube-system   etcd-k8s-master                            1/1     Running   2          44h
    kube-system   kube-apiserver-k8s-master                  1/1     Running   3          44h
    kube-system   kube-controller-manager-k8s-master         1/1     Running   2          44h
    kube-system   kube-proxy-58mnj                           1/1     Running   2          44h
    kube-system   kube-proxy-9wxjw                           1/1     Running   2          44h
    kube-system   kube-proxy-jzv5s                           1/1     Running   2          44h
    kube-system   kube-scheduler-k8s-master                  1/1     Running   2          44h

On a newly created cluster, if the command above shows every pod as READY 1/1 with STATUS Running, the cluster is running normally.
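The "all pods READY" check can be sketched as a small filter over the same command's output. The function name `pods_not_ready` is a hypothetical helper. It assumes the six-column layout that `kubectl get pods -A` prints, and it treats any STATUS other than `Running` as not ready, which is reasonable for the system pods of a fresh cluster but would also flag completed Job pods.

```shell
#!/bin/sh
# Hypothetical helper (an assumption for illustration).
# Reads `kubectl get pods -A` output on stdin and prints "namespace/name" for
# every pod whose READY column is not n/n or whose STATUS is not "Running".
pods_not_ready() {
  awk 'NR > 1 {
         split($3, ready, "/")          # READY looks like "1/1"
         if (ready[1] != ready[2] || $4 != "Running")
           print $1 "/" $2
       }'
}
```

Usage: `kubectl get pods -A | pods_not_ready`. No output means every listed pod is fully ready. Right after installing the network add-on it may take a few minutes before the list becomes empty.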