- Chapter 3. Common Tasks
- 3.1 Understanding and Creating Layers
- 3.2. Customizing Images
- 3.3 Writing a New Recipe
- 3.3.1 Overview
- 3.3.2 Manually or Automatically Creating a Base Recipe
- 3.3.3 Storing and Naming the Recipe
- 3.3.4 Running a Build on the Recipe
- 3.3.5. Fetching Code
- 3.3.6 Unpacking Code
- 3.3.7 Patching Code
- 3.3.8 Licensing
- 3.3.9 Dependencies
- 3.3.10 Configuring the Recipe
- 3.3.11 Using Headers to Interface with Devices
- 3.3.12 Compilation
- 3.3.13 Installing
- 3.3.14 Enabling System Services
- 3.3.15 Packaging
- 3.3.16 Sharing Files Between Recipes
- 3.3.17 Using Virtual Providers
- 3.3.18 Properly Versioning Pre-Release Recipes
- 3.3.19 Post-Installation Scripts
- 3.3.20 Testing
- 3.3.21 Examples
- 3.3.21.1 Single .c File Package (Hello World!)
- 3.3.21.2 Autotooled Package
- 3.3.21.5 Packaging Externally Produced Binaries
- 3.3.22 Following Recipe Style Guidelines
- 3.3.23 Recipe Syntax
- 3.4 Adding a New Machine
- 3.5 Upgrading Recipes
- 3.6 Finding Temporary Source Code
- 3.7 Using Quilt in Your Workflow
- 3.8 Using devshell
- 3.9 Using devpyshell
- 3.10 Building
- 3.11 Speeding Up a Build
- 3.12 Working With Libraries
- 3.13. Using x32 psABI
- 3.14 Enabling GObject Introspection Support
- 3.15 Optionally Using an External Toolchain
- 3.16 Creating Partitioned Images Using Wic
- 3.17 Flashing Images Using bmaptool
- 3.18 Making Images More Secure
- 3.19. Creating Your Own Distribution
- 3.20. Creating a Custom Template Configuration Directory
- 3.21. Conserving Disk Space During Builds
- 3.22. Working with Packages
- 3.23. Efficiently Fetching Source Files During a Build
- 3.24. Selecting an Initialization Manager
- 3.25. Selecting a Device Manager
- 3.26. Using an External SCM
- 3.27. Creating a Read-Only Root Filesystem
- 3.28. Maintaining Build Output Quality
- 3.29. Performing Automated Runtime Testing
- 3.30. Debugging Tools and Techniques
- 3.30.1. Viewing Logs from Failed Tasks
- 3.30.2. Viewing Variable Values
- 3.30.3. Viewing Package Information with oe-pkgdata-util
- 3.30.4. Viewing Dependencies Between Recipes and Tasks
- 3.30.5. Viewing Task Variable Dependencies
- 3.30.6. Viewing Metadata Used to Create the Input Signature of a Shared State Task
- 3.30.7. Invalidating Shared State to Force a Task to Run
- 3.30.8. Running Specific Tasks
- 3.30.9. General BitBake Problems
- 3.30.10. Building with No Dependencies
- 3.30.11. Recipe Logging Mechanisms
- 3.30.12. Debugging Parallel Make Races
- 3.30.13. Debugging With the GNU Project Debugger (GDB) Remotely
- 3.30.14. Debugging with the GNU Project Debugger (GDB) on the Target
- 3.30.15. Other Debugging Tips
- 3.31. Making Changes to the Yocto Project
- 3.32. Working With Licenses
- 3.33. Using the Error Reporting Tool
- 3.34. Using Wayland and Weston

Chapter 3. Common Tasks


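This chapter describes tasks you will perform regularly when using the Yocto Project, such as creating layers, adding new software packages, extending or customizing images, and porting work to new hardware.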

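3.1 Understanding and Creating Layers

The OpenEmbedded build system (translator's note: abbreviated as "OE" below) supports organizing Metadata into multiple layers. Layers allow you to isolate different types of customizations from each other. For introductory information on the Layer Model, see the "The Yocto Project Layer Model" section in the Yocto Project Overview and Concepts Manual.

3.1.1 Creating Your Own Layer

It is easy to create your own layers to use with the OE build system, and the Yocto Project ships with tools that make creating layers even faster. To help you understand what a layer is, this section walks through creating one step by step. For more on the layer-creation tools, see the "Creating a New BSP Layer Using the bitbake-layers Script" section in the Yocto Project Board Support Package (BSP) Developer's Guide and the "Creating a Layer Using the bitbake-layers Script" section in this manual.

Follow these steps to create a layer without the aid of tools:

- Check Existing Layers: Before creating a new layer, make sure someone else has not already created a layer containing the Metadata you need. The OpenEmbedded Metadata Index lists layers from the OE community that can be used in the Yocto Project. You may find a layer that is identical or close to what you need.

- Create a Directory: Create the directory for your layer. Make sure the directory is not associated with the Yocto Project Source Directory (e.g. the cloned poky repository).

  While not strictly required, prepend the name of the directory with the string "meta-". For example:

      meta-mylayer
      meta-GUI_xyz
      meta-mymachine

  With rare exceptions, a layer's name follows this form:

      meta-root_name

  Following this naming convention saves you trouble later when tools, components, or variables assume your layer name begins with "meta-". A notable example is in configuration files, as shown in the following step, where the layer name without the "meta-" string is appended to the names of several variables used in the configuration.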

- Create a Layer Configuration File: Inside your new layer folder, you need to create a conf/layer.conf file. The easiest way is to copy an existing layer configuration file into your layer's conf directory and then modify it as needed. The meta-yocto-bsp/conf/layer.conf file in the Yocto Project Source Repositories demonstrates the required syntax. In your own file, replace "yoctobsp" with a unique identifier for your layer (e.g. "machinexyz" for a layer named "meta-machinexyz"):

      # We have a conf and classes directory, add to BBPATH
      BBPATH .= ":${LAYERDIR}"

      # We have recipes-* directories, add to BBFILES
      BBFILES += "${LAYERDIR}/recipes-*/*/*.bb \
                  ${LAYERDIR}/recipes-*/*/*.bbappend"

      BBFILE_COLLECTIONS += "yoctobsp"
      BBFILE_PATTERN_yoctobsp = "^${LAYERDIR}/"
      BBFILE_PRIORITY_yoctobsp = "5"
      LAYERVERSION_yoctobsp = "4"
      LAYERSERIES_COMPAT_yoctobsp = "warrior"

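  Following is an explanation of the layer configuration file:

  - BBPATH: Adds the layer's root directory to BitBake's search path. Through the BBPATH variable, BitBake locates class files (.bbclass), configuration files, and included files. BitBake uses the first file that matches the name found in BBPATH, similar to the way the PATH variable is used for binaries. For this reason, use unique class and configuration filenames in your layer.

  - BBFILES: Defines the location of the recipes in the layer.

  - BBFILE_COLLECTIONS: Establishes a unique identifier that the OE build system uses to refer to the layer. In this example, the identifier "yoctobsp" represents the layer named "meta-yocto-bsp".

  - BBFILE_PATTERN: Expands during parsing to provide the directory of the layer.

  - BBFILE_PRIORITY: Establishes the priority to use for recipes in the layer when the OE build system finds recipes of the same name in different layers.

  - LAYERVERSION: Establishes a version number for the layer. You can use this version number to specify this exact version of the layer as a dependency when using the LAYERDEPENDS variable.

  - LAYERSERIES_COMPAT: Lists the Yocto Project releases with which the current version of the layer is compatible. This variable is a good way to indicate whether your layer is current.

- Add Content: Depending on the type of layer, add the content. If the layer adds support for a machine, add the machine configuration in a file under conf/machine/. If the layer adds distro policy, add the distro configuration in a file under conf/distro/. If the layer introduces new recipes, put them in subdirectories named with the recipes-* prefix.

  Note

  For an explanation of a layer hierarchy that is compliant with the Yocto Project, see the Yocto Project Board Support Package (BSP) Developer's Guide.

- Optionally Test for Compatibility: If you want permission to use the Yocto Project Compatibility Logo with your layer or with an application that uses your layer, see the "Making Sure Your Layer is Compatible With Yocto Project" section (3.1.3) for more information.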
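The manual steps above can be sketched as a short shell script. This is only an illustration under stated assumptions: the layer name meta-mylayer, the identifier mylayer, and the priority and version values are placeholders, and the script works in a temporary directory so that it does not touch a real Source Directory.

```shell
#!/bin/sh
# Sketch: create a minimal layer skeleton by hand (no bitbake tools needed).
# "meta-mylayer" and the priority/version values are placeholders.
set -eu

LAYER_NAME="meta-mylayer"
LAYER_ID="${LAYER_NAME#meta-}"     # identifier without the "meta-" prefix
WORK="$(mktemp -d)"                # keep the layer outside any poky checkout
LAYER_DIR="$WORK/$LAYER_NAME"

mkdir -p "$LAYER_DIR/conf" "$LAYER_DIR/recipes-example/example"

# Unquoted heredoc: $LAYER_ID is expanded by the shell, while \${LAYERDIR}
# is escaped so that BitBake sees the literal ${LAYERDIR}.
cat > "$LAYER_DIR/conf/layer.conf" <<EOF
# We have a conf and classes directory, add to BBPATH
BBPATH .= ":\${LAYERDIR}"

# We have recipes-* directories, add to BBFILES
BBFILES += "\${LAYERDIR}/recipes-*/*/*.bb \${LAYERDIR}/recipes-*/*/*.bbappend"

BBFILE_COLLECTIONS += "$LAYER_ID"
BBFILE_PATTERN_$LAYER_ID = "^\${LAYERDIR}/"
BBFILE_PRIORITY_$LAYER_ID = "6"
LAYERVERSION_$LAYER_ID = "1"
LAYERSERIES_COMPAT_$LAYER_ID = "warrior"
EOF

printf 'Placeholder README for %s\n' "$LAYER_NAME" > "$LAYER_DIR/README"

echo "Created layer skeleton in $LAYER_DIR"
```

The layer still has to be enabled in bblayers.conf before the build system uses it.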

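3.1.2 Following Best Practices When Creating Layers

To create layers that are easy to maintain and that do not affect builds for other machines, consider the following recommendations:

- Avoid "Overlaying" Entire Recipes from Other Layers in Your Configuration: In other words, do not copy an entire recipe into your layer and then modify it. Instead, use a .bbappend file to override only the parts you need to change.

- Avoid Duplicating Include Files: Use .bbappend files for each recipe that uses an include file. Or, if you are introducing a new recipe that requires the include file, refer to it with a path relative to the original layer directory. For example, use require recipes-core/package/file.inc instead of require file.inc. If you find you have to overlay the include file, that may indicate a deficiency in the include file itself. In that case, address the deficiency rather than overlaying the file. For example, you could ask the maintainer to add a variable or variables that make the relevant parts easy to override.

- Structure Your Layers: Proper use of overrides within append files and placement of machine-specific files within your layer ensure that a build does not use the wrong Metadata and negatively affect a build for a different machine. Following are some examples: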

  - Modify Variables to Support a Different Machine: Suppose you have a layer named meta-one that adds support for building machine "one". You create an append file named base-files.bbappend and add a dependency on "foo" by altering the DEPENDS variable:

        DEPENDS = "foo"

    The dependency is created during any build that includes the meta-one layer. However, you might not want this dependency for all machines. For example, suppose you are building for machine "two" but your bblayers.conf file includes the meta-one layer. During the build, base-files for machine "two" will also depend on foo.

    To make sure your change applies only when building machine "one", use a machine override with the DEPENDS statement:

        DEPENDS_one = "foo"

    Follow the same strategy when using _append and _prepend operations:

        DEPENDS_append_one = " foo"
        DEPENDS_prepend_one = "foo "

    As a real example, the following line adds a dependency on "argp-standalone" when gnutls is built with the musl C library:

        DEPENDS_append_libc-musl = " argp-standalone"

    Note

    Avoid "+=" and "=+" here. Use the machine-specific _append and _prepend operations instead.

  - Place Machine-Specific Files in Machine-Specific Locations: When you have a base recipe, such as base-files.bb, that contains a SRC_URI statement to a file, you can use an append file to cause the build to use your own version of the file. For example, an append file in your layer at meta-one/recipes-core/base-files/base-files.bbappend could extend FILESPATH using FILESEXTRAPATHS as follows:

        FILESEXTRAPATHS_prepend := "${THISDIR}/${BPN}:"

    The build for machine "one" will pick up your machine-specific file as long as you have the file in meta-one/recipes-core/base-files/base-files/. However, if you are building for a different machine and the bblayers.conf file includes the meta-one layer and the location of your machine-specific file is the first location where that file is found according to FILESPATH, builds for all machines will also use that machine-specific file.

    You can make sure that a machine-specific file is used for a particular machine by putting the file in a subdirectory specific to the machine. For example, rather than placing the file in meta-one/recipes-core/base-files/base-files/ as shown above, put it in meta-one/recipes-core/base-files/base-files/one/. Not only does this make sure the file is used only when building for machine "one", but the build process locates the file more quickly.

    In summary, you need to place all files referenced from SRC_URI in a machine-specific subdirectory within the layer in order to restrict those files to machine-specific builds.

- Perform Steps to Apply for Yocto Project Compatibility: If you want permission to use the Yocto Project Compatibility logo with your layer or application that uses your layer, perform the steps to apply for compatibility. See the "Making Sure Your Layer is Compatible With Yocto Project" section (3.1.3) for more information.

- Follow the Layer Naming Convention: Store custom layers in a Git repository whose name uses the meta-layer_name format.

- Group Your Layers Locally: Clone your repository alongside other cloned meta directories from the Source Directory.
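As a rough illustration of the machine-specific practices in this list, the following shell sketch lays out an append file and a machine-specific file for machine "one". The append file name base-files_%.bbappend (using BitBake's "%" version wildcard), the fstab file, and the temporary working directory are assumptions made for the example, not something this manual prescribes.

```shell
#!/bin/sh
# Sketch: restrict a change to machine "one" inside a layer named meta-one.
# The "fstab" file and the temporary location are illustrative only.
set -eu

WORK="$(mktemp -d)"
RECIPE_DIR="$WORK/meta-one/recipes-core/base-files"

# Machine-specific files live in a subdirectory named after the machine,
# so they are only picked up when building for machine "one".
mkdir -p "$RECIPE_DIR/base-files/one"
printf '# fstab used only for machine "one"\n' > "$RECIPE_DIR/base-files/one/fstab"

# Quoted heredoc delimiter: ${THISDIR} and ${BPN} must reach BitBake unexpanded.
cat > "$RECIPE_DIR/base-files_%.bbappend" <<'EOF'
# Let the build find files that sit next to this append file.
FILESEXTRAPATHS_prepend := "${THISDIR}/${BPN}:"

# Machine override: the dependency applies only when building machine "one".
# The leading space matters because _append does not insert one.
DEPENDS_append_one = " foo"
EOF

find "$WORK/meta-one" -type f | sort
```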

3.1.3 Making Sure Your Layer is Compatible With Yocto Project

When you create a layer used with the Yocto Project, it is advantageous to make sure that the layer interacts well with existing Yocto Project layers (i.e. the layer is compatible with the Yocto Project). Ensuring compatibility makes the layer easy to be consumed by others in the Yocto Project community and could allow you permission to use the Yocto Project Compatible Logo.

Note

Only Yocto Project member organizations are permitted to use the Yocto Project Compatible Logo. The logo is not available for general use. For information on how to become a Yocto Project member organization, see the Yocto Project Website.

The Yocto Project Compatibility Program consists of a layer application process that requests permission to use the Yocto Project Compatibility Logo for your layer and application. The process consists of two parts:

- Successfully passing a script (yocto-check-layer) that, when run against your layer, tests it against constraints based on experiences of how layers have worked in the real world and where pitfalls have been found. Getting a "PASS" result from the script is required for successful compatibility registration.

- Completion of an application acceptance form, which you can find at https://www.yoctoproject.org/webform/yocto-project-compatible-registration.

To be granted permission to use the logo, you need to satisfy the following:

Be able to check the box indicating that you got a “PASS” when running the script against your layer.

Answer “Yes” to the questions on the form or have an acceptable explanation for any questions answered “No”.

Be a Yocto Project Member Organization.

The remainder of this section presents information on the registration form and on the yocto-check-layer script.

3.1.3.1 Yocto Project Compatible Program Application

Use the form to apply for your layer’s approval. Upon successful application, you can use the Yocto Project Compatibility Logo with your layer and the application that uses your layer.

To access the form, use this link: https://www.yoctoproject.org/webform/yocto-project-compatible-registration. Follow the instructions on the form to complete your application.

The application consists of the following sections:

Contact Information: Provide your contact information as the fields require. Along with your information, provide the released versions of the Yocto Project for which your layer is compatible.

Acceptance Criteria: Provide "Yes" or "No" answers for each of the items in the checklist. Space exists at the bottom of the form for any explanations for items for which you answered "No".

Recommendations: Provide answers for the questions regarding Linux kernel use and build success.

3.1.3.2 yocto-check-layer Script

The yocto-check-layer script provides you a way to assess how compatible your layer is with the Yocto Project. You should run this script prior to using the form to apply for compatibility as described in the previous section. You need to achieve a “PASS” result in order to have your application form successfully processed.

The script divides tests into three areas: COMMON, BSP, and DISTRO. For example, given a distribution layer (DISTRO), the layer must pass both the COMMON and DISTRO related tests. Furthermore, if your layer is a BSP layer, the layer must pass the COMMON and BSP set of tests.

To execute the script, enter the following commands from your build directory:

    $ source oe-init-build-env
    $ yocto-check-layer your_layer_directory

Be sure to provide the actual directory for your layer as part of the command.

Entering the command causes the script to determine the type of layer and then to execute a set of specific tests against the layer. The following list overviews the test:

- common.test_readme: Tests if a README file exists in the layer and the file is not empty.
- common.test_parse: Tests to make sure that BitBake can parse the files without error (i.e. bitbake -p).
- common.test_show_environment: Tests that the global or per-recipe environment is in order without errors (i.e. bitbake -e).
- common.test_signatures: Tests to be sure that BSP and DISTRO layers do not come with recipes that change signatures.
- bsp.test_bsp_defines_machines: Tests if a BSP layer has machine configurations.
- bsp.test_bsp_no_set_machine: Tests to ensure a BSP layer does not set the machine when the layer is added.
- distro.test_distro_defines_distros: Tests if a DISTRO layer has distro configurations.
- distro.test_distro_no_set_distro: Tests to ensure a DISTRO layer does not set the distribution when the layer is added.

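3.1.4 Enabling Your Layer

Before the OE build system can use your new layer, you need to enable it. To do so, add the layer's path to the BBLAYERS variable in the conf/bblayers.conf file found in the Build Directory. The following example enables a layer named meta-mylayer:

    # POKY_BBLAYERS_CONF_VERSION is increased each time build/conf/bblayers.conf
    # changes incompatibly
    POKY_BBLAYERS_CONF_VERSION = "2"

    BBPATH = "${TOPDIR}"
    BBFILES ?= ""

    BBLAYERS ?= " \
      /home/user/poky/meta \
      /home/user/poky/meta-poky \
      /home/user/poky/meta-yocto-bsp \
      /home/user/poky/meta-mylayer \
      "

BitBake parses each conf/layer.conf file from the top down as specified in the BBLAYERS variable within the conf/bblayers.conf file. While processing each conf/layer.conf file, BitBake adds the recipes, classes, and configuration files contained in that layer to the source directory.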
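One possible way to script the step above is to append a BBLAYERS += line rather than edit the existing assignment in place. The sketch below does this against a stand-in bblayers.conf in a temporary directory. The helper name enable_layer and all paths are hypothetical, and the check is a plain substring match, so a path that is a prefix of another listed path would be reported as already enabled.

```shell
#!/bin/sh
# Sketch: enable a layer by appending its path to BBLAYERS, but only once.
# Paths are placeholders; a real bblayers.conf lives in <build dir>/conf/.
set -eu

WORK="$(mktemp -d)"
CONF="$WORK/bblayers.conf"
NEW_LAYER="/home/user/poky/meta-mylayer"

# Stand-in for an existing bblayers.conf.
cat > "$CONF" <<'EOF'
BBPATH = "${TOPDIR}"
BBFILES ?= ""
BBLAYERS ?= " \
  /home/user/poky/meta \
  /home/user/poky/meta-poky \
  /home/user/poky/meta-yocto-bsp \
  "
EOF

enable_layer() {
    # $1 = bblayers.conf, $2 = absolute layer path
    # Note: substring match only; good enough for a sketch.
    if grep -qF "$2" "$1"; then
        echo "already enabled: $2"
    else
        printf 'BBLAYERS += "%s"\n' "$2" >> "$1"
        echo "enabled: $2"
    fi
}

enable_layer "$CONF" "$NEW_LAYER"
enable_layer "$CONF" "$NEW_LAYER"   # second call changes nothing
```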

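3.1.5 Using .bbappend Files in Your Layer

A recipe that appends Metadata to another recipe is called a BitBake append file. A BitBake append file uses the .bbappend file type suffix, while the recipe to which Metadata is being appended uses the .bb file type suffix.

With a .bbappend file, you can add to or change the content of another layer's recipe without copying that recipe into your layer. The .bbappend file resides in your layer, while the .bb recipe file it appends to resides in a different layer.

Appending not only avoids duplication but also means that changes made to the recipe in the other layer are picked up automatically. If you copied the recipe instead, you would have to merge changes manually as they occur.

When you create an append file, you must use the same root name as the corresponding recipe file. For example, the append file someapp_2.7.bbappend must apply to someapp_2.7.bb, which means the two file names are version-specific. If the corresponding recipe is renamed for a newer version, you must rename (and possibly update) the .bbappend file as well. If BitBake detects a .bbappend file that does not have a matching recipe, it displays an error. See the BB_DANGLINGAPPENDS_WARNONLY variable for information on how to handle this error.

As an example, consider the formfactor recipe and its append file from the Source Directory. Here is the main recipe, formfactor_0.0.bb, which resides in the "meta" layer at meta/recipes-bsp/formfactor: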

    SUMMARY = "Device formfactor information"
    SECTION = "base"
    LICENSE = "MIT"
    LIC_FILES_CHKSUM = "file://${COREBASE}/meta/COPYING.MIT;md5=3da9cfbcb788c80a0384361b4de20420"
    PR = "r45"

    SRC_URI = "file://config file://machconfig"
    S = "${WORKDIR}"

    PACKAGE_ARCH = "${MACHINE_ARCH}"
    INHIBIT_DEFAULT_DEPS = "1"

    do_install() {
        # Install file only if it has contents
        install -d ${D}${sysconfdir}/formfactor/
        install -m 0644 ${S}/config ${D}${sysconfdir}/formfactor/
        if [ -s "${S}/machconfig" ]; then
            install -m 0644 ${S}/machconfig ${D}${sysconfdir}/formfactor/
        fi
    }

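In the main recipe, note the SRC_URI variable, which tells the OE build system where to find files during the build.

Following is the append file, named formfactor_0.0.bbappend, from the Raspberry Pi BSP layer at recipes-bsp/formfactor:

    FILESEXTRAPATHS_prepend := "${THISDIR}/${PN}:"

By default, the build system uses the FILESPATH variable to locate files. This append file extends the search locations by setting the FILESEXTRAPATHS variable. Setting this variable in the .bbappend file is the most reliable and recommended method for adding directories to the search path the build system uses to find files.

The statement in this example extends the directories to include ${THISDIR}/${PN}, which resolves to a directory named formfactor in the same directory in which the append file resides (i.e. meta-raspberrypi/recipes-bsp/formfactor). This implies that you must have the supporting directory structure set up that will contain any files or patches you will be including from the layer.

Using the immediate expansion assignment operator := is important because of the reference to THISDIR. The trailing colon is also important because it ensures that items in the list remain colon-separated.

Note

BitBake automatically defines the THISDIR variable. You should never set this variable yourself. Using "_prepend" ensures your path is searched before the other paths in the final list. Also, not all append files add extra files. Many append files exist only to add build options (e.g. systemd). In those cases, your append file does not even need the FILESEXTRAPATHS statement.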
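The naming rule for append files can be checked before BitBake complains about it. The following shell sketch looks for .bbappend files that have no recipe with the same root name. It is a simplified stand-in for BitBake's own check: the function name find_dangling_appends and the sample files are made up, and append names that use the "%" version wildcard are not handled.

```shell
#!/bin/sh
# Sketch: report .bbappend files that have no recipe with the same root name.
# Simplification: the "%" version wildcard in append names is not handled.
set -eu

WORK="$(mktemp -d)"
mkdir -p "$WORK/meta/recipes" "$WORK/meta-mylayer/recipes"

# Hypothetical layer contents for the demonstration.
touch "$WORK/meta/recipes/someapp_2.7.bb"
touch "$WORK/meta-mylayer/recipes/someapp_2.7.bbappend"   # matches someapp_2.7.bb
touch "$WORK/meta-mylayer/recipes/someapp_2.6.bbappend"   # recipe was upgraded

find_dangling_appends() {
    # $1 = directory tree to scan for both recipes and append files
    find "$1" -name '*.bbappend' | sort | while read -r append; do
        root="$(basename "$append" .bbappend)"
        if [ -z "$(find "$1" -name "$root.bb")" ]; then
            echo "dangling: $(basename "$append")"
        fi
    done
}

DANGLING="$(find_dangling_appends "$WORK")"
echo "$DANGLING"
```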

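3.1.6 Prioritizing Your Layer

Each layer is assigned a priority value. When recipe files with the same name exist in multiple layers, the priority values determine which layer takes precedence: the recipe from the layer with the higher priority number wins. Priority values also affect the order in which multiple .bbappend files for the same recipe are applied. You can either specify the priority manually or let the build system calculate it based on the layer's dependencies.

To specify the layer's priority manually, use the BBFILE_PRIORITY variable with the layer's name appended:

    BBFILE_PRIORITY_mylayer = "1"

Note

It is possible for a recipe with a lower version number (PV) in a layer that has a higher priority to take precedence. Also, layer priority does not currently affect the precedence order of .conf or .bbclass files. Future versions of BitBake might address this.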

3.1.7 Managing Layers

In a multi-layer project, you can use the BitBake layer management tool bitbake-layers to view the structure of recipes. The tool can report the configured layers with their paths and priorities, as well as .bbappend files and the recipes they apply to, which helps reveal potential problems.

Use the following command to get help on the BitBake layer management tool:

    $ bitbake-layers --help
    NOTE: Starting bitbake server...
    usage: bitbake-layers [-d] [-q] [-F] [--color COLOR] [-h] <subcommand> ...

    BitBake layers utility

    optional arguments:
      -d, --debug           Enable debug output
      -q, --quiet           Print only errors
      -F, --force           Force add without recipe parse verification
      --color COLOR         Colorize output (where COLOR is auto, always, never)
      -h, --help            show this help message and exit

    subcommands:
      <subcommand>
        show-layers         show current configured layers.
        show-overlayed      list overlayed recipes (where the same recipe exists
                            in another layer)
        show-recipes        list available recipes, showing the layer they are
                            provided by
        show-appends        list bbappend files and recipe files they apply to
        show-cross-depends  Show dependencies between recipes that cross layer
                            boundaries.
        add-layer           Add one or more layers to bblayers.conf.
        remove-layer        Remove one or more layers from bblayers.conf.
        flatten             flatten layer configuration into a separate output
                            directory.
        layerindex-fetch    Fetches a layer from a layer index along with its
                            dependent layers, and adds them to conf/bblayers.conf.
        layerindex-show-depends
                            Find layer dependencies from layer index.
        create-layer        Create a basic layer

    Use bitbake-layers <subcommand> --help to get help on a specific command

The following list describes the available commands:

- help: Displays general help or help on a specified command.

- show-layers: Shows the currently configured layers.

- show-overlayed: Lists overlayed recipes. A recipe is overlayed when a recipe with the same name exists in another layer that has a higher layer priority.

- show-recipes: Lists available recipes and the layers that provide them.

- show-appends: Lists .bbappend files and the recipe files to which they apply.

- show-cross-depends: Lists dependencies between recipes that cross layer boundaries.

- add-layer: Adds a layer to bblayers.conf.

- remove-layer: Removes a layer from bblayers.conf.

- flatten: Flattens the layer configuration into a separate output directory. Flattening your layer configuration builds a "flattened" directory that contains the contents of all layers, with any overlayed recipes removed and any .bbappend files appended to the corresponding recipes. You might have to perform some manual cleanup of the flattened layer as follows:

  - Non-recipe files (such as patches) are overwritten. The flatten command shows a warning for these files.

  - Anything beyond the normal layer setup has been added to the layer.conf file. Only the lowest priority layer's layer.conf is used.

  - Overridden and appended items from .bbappend files need to be cleaned up. The contents of each .bbappend end up in the flattened recipe. However, if there are appended or changed variable values, you need to tidy these up yourself. Consider the following example. Here, the bitbake-layers command adds the line #### bbappended ... so that you know where the following lines originate:

        ...
        DESCRIPTION = "A useful utility"
        ...
        EXTRA_OECONF = "--enable-something"
        ...

        #### bbappended from meta-anotherlayer ####

        DESCRIPTION = "Customized utility"
        EXTRA_OECONF += "--enable-somethingelse"

    Ideally, you would tidy up these utilities as follows:

        ...
        DESCRIPTION = "Customized utility"
        ...
        EXTRA_OECONF = "--enable-something --enable-somethingelse"
        ...

- layerindex-fetch: Fetches a layer from a layer index, along with its dependent layers, and adds the layers to the conf/bblayers.conf file.

- layerindex-show-depends: Finds layer dependencies from the layer index.

- create-layer: Creates a basic layer.

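3.1.8 Creating a Layer Using the bitbake-layers Script

The bitbake-layers script with the create-layer subcommand makes it simple to create a new layer.

Note

- For information on BSP layers, see the "BSP Layers" section in the Yocto Project Board Support Package (BSP) Developer's Guide.

- To use a layer with the OE build system, you need to add it to your bblayers.conf configuration file. See the "Adding a Layer Using the bitbake-layers Script" section (3.1.9) for more information.

By default, the command creates a layer with the following:

- A layer priority of 6.

- A conf subdirectory that contains a layer.conf file.

- A recipes-example subdirectory that contains a further subdirectory named example, which contains an example.bb recipe file.

- A COPYING.MIT file, which is the license statement for the layer. The script assumes you want the MIT license, which most layers use.

- A README file that describes the contents of the layer.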

In its simplest form, the following command creates a layer named your_layer_name in the current directory:

    $ bitbake-layers create-layer your_layer_name

As an example, the following commands create a layer named meta-scottrif in your home directory:

    $ cd /usr/home
    $ bitbake-layers create-layer meta-scottrif
    NOTE: Starting bitbake server...
    Add your new layer with 'bitbake-layers add-layer meta-scottrif'

If you want a priority other than the default, you can either use the --priority option or edit the BBFILE_PRIORITY value in conf/layer.conf after the script creates the layer. You can also give the example recipe file a different name by using the --example-recipe-name option.

The easiest way to see how the bitbake-layers create-layer command works is to experiment with it. You can also read the usage information by entering the following:

    $ bitbake-layers create-layer --help
    NOTE: Starting bitbake server...
    usage: bitbake-layers create-layer [-h] [--priority PRIORITY]
                                       [--example-recipe-name EXAMPLERECIPE]
                                       layerdir

    Create a basic layer

    positional arguments:
      layerdir              Layer directory to create

    optional arguments:
      -h, --help            show this help message and exit
      --priority PRIORITY, -p PRIORITY
                            Layer directory to create
      --example-recipe-name EXAMPLERECIPE, -e EXAMPLERECIPE
                            Filename of the example recipe

3.1.9 Adding a Layer Using the bitbake-layers Script

Once you create your layer, you must add it to your bblayers.conf file. Adding the layer to this configuration file makes the OE build system aware of the layer so that it can search it for Metadata.

Add your layer by using the bitbake-layers add-layer command:

    $ bitbake-layers add-layer your_layer_name

Here is an example that adds a layer named meta-scottrif. The command that follows it lists the layers that are in your bblayers.conf file:

    $ bitbake-layers add-layer meta-scottrif
    NOTE: Starting bitbake server...
    Parsing recipes: 100% |##########################################################| Time: 0:00:49
    Parsing of 1441 .bb files complete (0 cached, 1441 parsed). 2055 targets, 56 skipped, 0 masked, 0 errors.
    $ bitbake-layers show-layers
    NOTE: Starting bitbake server...
    layer                 path                                      priority
    ==========================================================================
    meta                  /home/scottrif/poky/meta                  5
    meta-poky             /home/scottrif/poky/meta-poky             5
    meta-yocto-bsp        /home/scottrif/poky/meta-yocto-bsp        5
    workspace             /home/scottrif/poky/build/workspace       99
    meta-scottrif         /home/scottrif/poky/build/meta-scottrif   6

Adding the layer to this file enables the build system to locate the layer during the build.

Note

During a build, the OE build system looks in the layers from the top of the list down to the bottom in that order.

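3.2. Customizing Images

You can customize images to satisfy particular requirements. This section describes several methods and provides guidelines for each.

3.2.1 Customizing Images Using local.conf

Probably the easiest way to customize an image is to add a package through the local.conf configuration file. Because it is limited to local use, this method only lets you add packages and is not as flexible as creating your own customized image. When you add packages through local variables, the changes are in effect for every build and consequently affect all images, which might not be what you want.

To add a package using the local configuration file, use the IMAGE_INSTALL variable with the _append operator:

    IMAGE_INSTALL_append = " strace"

The exact syntax matters, specifically the space between the quote and the package name, which is strace in this example. The space is required because the _append operator does not add one.

Furthermore, you must use _append instead of the += operator if you want to avoid ordering issues. _append unconditionally appends to the variable, which avoids ordering problems caused by the variable being set in image recipes and .bbclass files with operators such as ?=. Using _append ensures the operation takes effect.

As shown above, IMAGE_INSTALL_append affects all images. You can extend the syntax so that the variable applies to a specific image only:

    IMAGE_INSTALL_append_pn-core-image-minimal = " strace"

This example adds strace to the core-image-minimal image only.

You can also add packages through the CORE_IMAGE_EXTRA_INSTALL variable. If you use this variable, only core-image-* images are affected.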
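The effect of the leading space can be imitated with plain string concatenation. The following shell sketch is not BitBake code; it only mimics how _append joins its value verbatim to whatever the variable already holds. The starting package list and the helper name append_verbatim are made up for the illustration.

```shell
#!/bin/sh
# Sketch: why the leading space in IMAGE_INSTALL_append = " strace" matters.
# _append joins strings verbatim; plain shell concatenation imitates that here.
# The starting package list is made up for illustration.
set -eu

IMAGE_INSTALL="packagegroup-core-boot dropbear"

append_verbatim() {
    # Mimics the _append operator: no separator is inserted.
    printf '%s%s' "$1" "$2"
}

WITHOUT_SPACE="$(append_verbatim "$IMAGE_INSTALL" "strace")"
WITH_SPACE="$(append_verbatim "$IMAGE_INSTALL" " strace")"

echo "without space: $WITHOUT_SPACE"   # last two names run together
echo "with space:    $WITH_SPACE"
```

Without the space, the last two names merge into one word, which the build system would treat as a single, nonexistent package.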

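3.2.2 Customizing Images Using Custom IMAGE_FEATURES and EXTRA_IMAGE_FEATURES

Another way to customize an image is to enable or disable high-level image features by using the IMAGE_FEATURES and EXTRA_IMAGE_FEATURES variables. Although the two variables do nearly the same thing, best practice is to use IMAGE_FEATURES from within a recipe and EXTRA_IMAGE_FEATURES from within the local.conf file in the Build Directory.

To understand how these features work, the best reference is meta/classes/core-image.bbclass. This class lists the available IMAGE_FEATURES. Most of them map to package groups, while some, such as debug-tweaks and read-only-rootfs, resolve to general configuration settings.

In summary, the file looks at the contents of the IMAGE_FEATURES variable and then maps or configures each feature accordingly. Based on this information, the build system automatically adds the appropriate packages or configurations to the IMAGE_INSTALL variable. In effect, you enable extra features by extending the class or by creating a custom class.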

Use the EXTRA_IMAGE_FEATURES variable from within your local configuration file. Using a separate area from which to enable features with this variable helps you avoid overwriting the features in the image recipe that are enabled with IMAGE_FEATURES. The value of EXTRA_IMAGE_FEATURES is added to IMAGE_FEATURES within meta/conf/bitbake.conf.

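To illustrate how you can use these variables to modify your image, consider an example that selects the SSH server. The Yocto Project ships with two SSH servers you can use with your images: Dropbear and OpenSSH. Dropbear is a minimal SSH server appropriate for resource-constrained environments, while OpenSSH is a well-known standard SSH server implementation. By default, the core-image-sato image is configured to use Dropbear, the core-image-full-cmdline and core-image-lsb images both include OpenSSH, and the core-image-minimal image does not contain an SSH server.

You can customize your image and change these defaults. Edit the IMAGE_FEATURES variable in your recipe or use EXTRA_IMAGE_FEATURES in your local.conf file so that the image includes ssh-server-dropbear or ssh-server-openssh.

Note

See the "Images" section in the Yocto Project Reference Manual for a complete list of image features that ship with the Yocto Project.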

3.2.3 Customizing Images Using Custom .bb Files

You can also customize an image by creating a custom recipe that defines additional software as part of the image. The following example shows the form of the two lines you need:

    IMAGE_INSTALL = "packagegroup-core-x11-base package1 package2"
    inherit core-image

Defining the software using a custom recipe gives you total control over the contents of the image. It is important to use the correct names of packages in the IMAGE_INSTALL variable. You must use the OpenEmbedded notation and not the Debian notation for the names (e.g. glibc-dev instead of libc6-dev).

The other method is to base your image on an existing image. For example, if you want an image based on core-image-sato with the additional package strace, copy the contents of meta/recipes-sato/images/core-image-sato.bb to a new .bb file and add the following line to the end of the copy:

    IMAGE_INSTALL += "strace"

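3.2.4 Customizing Images Using Custom Package Groups

For complex custom images, the best approach is to create a custom package group recipe that is used to build the image or images. A good example of a package group recipe is meta/recipes-core/packagegroups/packagegroup-base.bb.

If you examine that recipe, you see that the PACKAGES variable lists the package group packages to produce. The inherit packagegroup statement sets appropriate default values and automatically adds -dev, -dbg, and -ptest complementary packages for each package specified in the PACKAGES statement.

Note

The inherit packagegroup line should be located near the top of the recipe, and certainly before the PACKAGES statement.

For each package you specify in PACKAGES, you can use RDEPENDS and RRECOMMENDS entries to provide a list of packages the parent task package should contain. You can see examples of these further down in the packagegroup-base.bb recipe.

Here is a short example:

    DESCRIPTION = "My Custom Package Groups"

    inherit packagegroup

    PACKAGES = "\
        packagegroup-custom-apps \
        packagegroup-custom-tools \
        "

    RDEPENDS_packagegroup-custom-apps = "\
        dropbear \
        portmap \
        psplash"

    RDEPENDS_packagegroup-custom-tools = "\
        oprofile \
        oprofileui-server \
        lttng-tools"

    RRECOMMENDS_packagegroup-custom-tools = "\
        kernel-module-oprofile"

In this example, two package group packages are created, packagegroup-custom-apps and packagegroup-custom-tools, along with their dependencies and recommended package dependencies. To build an image using these package group packages, add packagegroup-custom-apps and/or packagegroup-custom-tools to IMAGE_INSTALL. For other forms of image dependencies, see the other parts of this section.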
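To connect the package groups above to an image, one option is a small image recipe that lists them in IMAGE_INSTALL. The shell sketch below writes such a recipe into a temporary directory. The recipe name custom-image.bb, the layer path, and the choice of packagegroup-core-boot as the base are assumptions made for illustration.

```shell
#!/bin/sh
# Sketch: write an image recipe that installs the two package groups above.
# The layer path and the recipe name "custom-image.bb" are hypothetical.
set -eu

WORK="$(mktemp -d)"
IMAGE_DIR="$WORK/meta-mylayer/recipes-core/images"
mkdir -p "$IMAGE_DIR"

# Quoted heredoc delimiter keeps the trailing backslashes literal.
cat > "$IMAGE_DIR/custom-image.bb" <<'EOF'
SUMMARY = "Image built from custom package groups"
LICENSE = "MIT"

# Package names use OpenEmbedded notation.
IMAGE_INSTALL = "packagegroup-core-boot \
                 packagegroup-custom-apps \
                 packagegroup-custom-tools"

inherit core-image
EOF

cat "$IMAGE_DIR/custom-image.bb"
```

In a real build environment the image would then be built with bitbake custom-image, provided the layer is enabled.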

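3.2.5 Customizing an Image Hostname

By default, the configured hostname (i.e. /etc/hostname) in an image is the same as the machine name. For example, if MACHINE is set to "qemux86", the hostname written to /etc/hostname is "qemux86".

You can customize this name by changing the value of the "hostname" variable in the base-files recipe, using either an append file or a configuration file. Use the following in an append file:

    hostname="myhostname"

Use the following in a configuration file:

    hostname_pn-base-files = "myhostname"

Changing the default value of "hostname" can be useful in certain situations. For example, suppose you need to do extensive testing on an image and want to easily tell the image under test apart from images that use the default hostname. You could change the default hostname to "testme", so that the image uses the name "testme". Once testing is complete and you no longer need to rebuild the image for testing, you can reset the default hostname.

Another point of interest is that if you unset the variable, the image will have no default hostname in the filesystem. Here is an example that unsets the variable in a configuration file:

    hostname_pn-base-files = ""

Having no default hostname in the filesystem is suitable for environments that use dynamic hostnames, such as virtual machines.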

3.3 编写新Recipe

Recipe(.bb文件)是Yocto Project环境基本组件,每一个OE构建系统构建的软件组件都需要recipe定义这个组件。本节描述如何创建,编写,测试一个新Recipe。

注释

更多recipe有用的变量和recipe命名问题,请阅读《Yocto Project Reference Manual》“Required”章节

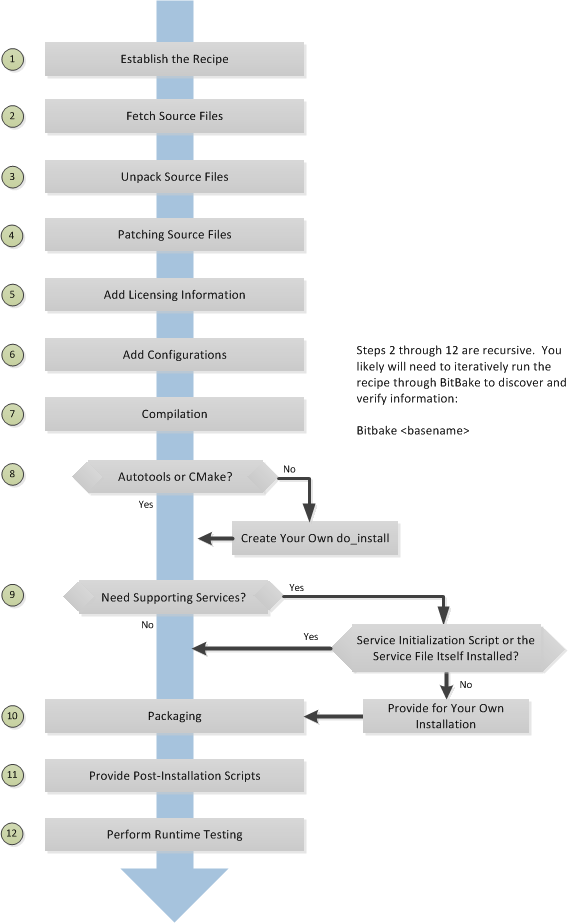

3.3.1 概述

下图展示了创建新recipe的基本过程,后面的内容会详细介绍这些步骤。

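3.3.2 Manually or Automatically Creating a Base Recipe

You can always write a recipe from scratch. However, the following choices can help you get started on a new recipe quickly:

- devtool add: A command that assists in creating a recipe and an environment conducive to development.

- recipetool create: A command provided by the Yocto Project that automates creation of a base recipe based on the source files.

- Existing Recipes: Locating and modifying an existing recipe that is similar in function to the recipe you need.

Note

For information on recipe syntax, see the "Recipe Syntax" section (3.3.23).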

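3.3.2.1 Creating the Base Recipe Using devtool add

The devtool add command uses the same logic for auto-creating the recipe as recipetool create. In addition, devtool add sets up an environment that makes it easy to patch the source and to change the recipe, as is often necessary when adding a recipe for a new piece of software to a build.

You can find a complete description of the devtool add command in the "A Closer Look at devtool add" section of the Yocto Project Application Development and the Extensible Software Development Kit (eSDK) manual.

3.3.2.2 Creating the Base Recipe Using recipetool create

recipetool create automates creation of a base recipe given a set of source code files. As long as you can extract or point to the source files, the tool constructs a recipe and automatically configures all pre-build information into it. For example, suppose you have an application that builds using Autotools. Creating the base recipe using recipetool results in a recipe that has the pre-build dependencies, license requirements, and checksums configured.

To run the tool, you need to be in your Build Directory and have sourced the build environment setup script (i.e. oe-init-build-env). To get help on the tool, use the following command:

    $ recipetool -h
    NOTE: Starting bitbake server...
    usage: recipetool [-d] [-q] [--color COLOR] [-h] <subcommand> ...

    OpenEmbedded recipe tool

    options:
      -d, --debug     Enable debug output
      -q, --quiet     Print only errors
      --color COLOR   Colorize output (where COLOR is auto, always, never)
      -h, --help      show this help message and exit

    subcommands:
      create          Create a new recipe
      newappend       Create a bbappend for the specified target in the specified
                      layer
      setvar          Set a variable within a recipe
      appendfile      Create/update a bbappend to replace a target file
      appendsrcfiles  Create/update a bbappend to add or replace source files
      appendsrcfile   Create/update a bbappend to add or replace a source file
    Use recipetool <subcommand> --help to get help on a specific command

Running recipetool create -o OUTFILE creates the base recipe in the layer that contains your source files. Following are some syntax examples:

Use this syntax to generate a recipe based on source. Once generated, the recipe resides in the existing source code layer:

    recipetool create -o OUTFILE source

Use this syntax to generate a recipe using code that you extract from source. The location of the extracted code is given by EXTERNALSRC:

    recipetool create -o OUTFILE -x EXTERNALSRC source

Use this syntax to generate a recipe based on source. The -d option directs recipetool to generate debugging information. Once generated, the recipe resides in the existing source code layer:

    recipetool create -d -o OUTFILE source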

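3.3.2.3 Locating and Using a Similar Recipe

Before writing a recipe from scratch, it is often useful to find out whether a recipe already exists that meets (or comes close to meeting) your needs. The Yocto Project and OpenEmbedded communities maintain many recipes that might be candidates for what you are doing. You can find an index of these recipes in the OpenEmbedded Layer Index.

Working from an existing recipe or a skeleton recipe is the best way to get started. Here are some points on both methods:

- Locate and modify a recipe that is close to what you want to do: This method works well when you are familiar with the current recipe space. It does not work as well for those new to the Yocto Project or to writing recipes.

  The risks of this method include using a recipe that has areas totally unrelated to what you are trying to accomplish, not recognizing areas that you still have to write from scratch, and so forth. All these risks stem from unfamiliarity with the existing recipe space.

- Use and modify the following skeleton recipe: If for some reason you do not want to use recipetool and you cannot find an existing recipe that is close to meeting your needs, you can start from the following basic structure:

      DESCRIPTION = ""
      HOMEPAGE = ""
      LICENSE = ""
      SECTION = ""
      DEPENDS = ""
      LIC_FILES_CHKSUM = ""

      SRC_URI = ""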

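3.3.3 Storing and Naming the Recipe

Once you have your base recipe, you should put it in your own layer and name it appropriately. Locating it correctly ensures that the OE build system can find it when you use BitBake to process the recipe.

- Storing Your Recipe: The OE build system locates your recipe through the BBFILES variable in the layer's conf/layer.conf file. Here is the typical use:

      BBFILES += "${LAYERDIR}/recipes-*/*/*.bb \
                  ${LAYERDIR}/recipes-*/*/*.bbappend"

  Consequently, you need to place your new recipe inside your layer in a location that matches this pattern.

  You can find more information on how layers are structured in the "Understanding and Creating Layers" section (3.1).

- Naming Your Recipe: When you name your recipe, follow this naming convention:

      basename_version.bb

  Use lower-cased characters and do not include the reserved suffixes -native, -cross, -initial, or -dev casually (i.e. do not use them as part of your recipe name unless the string applies). Here are some examples:

      cups_1.7.0.bb
      gawk_4.0.2.bb
      irssi_0.8.16-rc1.bb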
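The basename_version.bb convention can be made concrete with a small shell sketch that splits a recipe filename at its last underscore. The function name split_recipe_name is illustrative and not part of any Yocto tool, and BitBake's own parsing of recipe names may differ in corner cases.

```shell
#!/bin/sh
# Sketch: split a recipe filename of the form basename_version.bb.
# The function name is illustrative and not part of any Yocto tool.
set -eu

split_recipe_name() {
    # $1 = recipe filename, e.g. cups_1.7.0.bb
    stem="${1%.bb}"            # drop the .bb suffix
    case "$stem" in
        *_*) ;;
        *) echo "no version in: $1" >&2; return 1 ;;
    esac
    name="${stem%_*}"          # text before the last underscore
    version="${stem##*_}"      # text after the last underscore
    printf '%s %s\n' "$name" "$version"
}

for recipe in cups_1.7.0.bb gawk_4.0.2.bb irssi_0.8.16-rc1.bb; do
    split_recipe_name "$recipe"
done
```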

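3.3.4 Running a Build on the Recipe

Creating a new recipe is usually an iterative process that requires using BitBake to process the recipe multiple times in order to progressively discover and add information to the recipe file.

Assuming you have sourced the build environment setup script (i.e. oe-init-build-env) and you are in the Build Directory, use BitBake to process your recipe. All you need to provide is the basename of the recipe as described in the previous section:

    $ bitbake basename

During the build, the OE build system creates a temporary work directory for each recipe (${WORKDIR}) where it keeps extracted source files, log files, intermediate compilation and packaging files, and so forth.

The path to the per-recipe temporary work directory depends on the context in which the recipe is being built. The quickest way to find this path is to have BitBake return it:

    $ bitbake -e basename | grep ^WORKDIR=

As an example, assume a Source Directory top-level folder named poky, a default Build Directory at poky/build, and a qemux86-poky-linux machine target system. Furthermore, suppose your recipe is named foo_1.3.0.bb. In this case, the work directory the build system uses is:

    poky/build/tmp/work/qemux86-poky-linux/foo/1.3.0-r0

Inside this directory you can find sub-directories such as image, packages-split, and temp. After the build, you can examine these to determine how well the build went.

Note

You can find log files for each task in the recipe's temp directory (e.g. poky/build/tmp/work/qemux86-poky-linux/foo/1.3.0-r0/temp). Log files are named log.taskname (e.g. log.do_configure, log.do_fetch, and log.do_compile).

You can find more information about the build process in "The Yocto Project Development Environment" chapter of the Yocto Project Overview and Concepts Manual.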
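The WORKDIR lookup and the log file layout described above can be combined in a short script. So that the sketch runs without a build environment, it uses a canned stand-in (SAMPLE_ENV) for the output of bitbake -e and a fake work directory under a temporary path. Both are assumptions for the demonstration only.

```shell
#!/bin/sh
# Sketch: pull WORKDIR out of "bitbake -e" style output and list task logs.
# SAMPLE_ENV stands in for real "bitbake -e foo" output so the script runs
# without a build environment; the paths are created under a temp directory.
set -eu

ROOT="$(mktemp -d)"
FAKE_WORKDIR="$ROOT/poky/build/tmp/work/qemux86-poky-linux/foo/1.3.0-r0"
mkdir -p "$FAKE_WORKDIR/temp"
touch "$FAKE_WORKDIR/temp/log.do_fetch" "$FAKE_WORKDIR/temp/log.do_compile"

SAMPLE_ENV="PN=\"foo\"
WORKDIR=\"$FAKE_WORKDIR\"
S=\"$FAKE_WORKDIR/foo-1.3.0\""

# Same filter idea as "grep ^WORKDIR=", but strip the name and the quotes.
WORKDIR_VALUE="$(printf '%s\n' "$SAMPLE_ENV" | sed -n 's/^WORKDIR="\(.*\)"$/\1/p')"
echo "WORKDIR is $WORKDIR_VALUE"

# Task logs are named log.<taskname> inside the temp subdirectory.
ls "$WORKDIR_VALUE/temp" | grep '^log\.do_' | sort
```

With a real build, the printf of SAMPLE_ENV would be replaced by the actual bitbake -e command for the recipe.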

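3.3.5. Fetching Code

The first thing your recipe must do is specify how to fetch the source files. Fetching is controlled mainly through the SRC_URI variable. Your recipe must have a SRC_URI variable that points to where the source is located. For a graphical representation of source locations, see the "Sources" section in the Yocto Project Overview and Concepts Manual.

The do_fetch task uses the prefix of each entry in the SRC_URI variable value to determine which fetcher to use to get your source files. It is the SRC_URI variable that triggers the fetcher. The do_patch task uses the variable after source is fetched to apply patches. The OpenEmbedded build system uses FILESOVERRIDES for scanning directory locations for local files in SRC_URI.

The SRC_URI variable in your recipe must define each unique location for your source files. It is good practice to not hard-code version numbers in a URL used in SRC_URI. Rather than hard-code these values, use ${PV}, which causes the fetch process to use the version specified in the recipe filename. Specifying the version in this manner means that upgrading the recipe to a future version is as simple as renaming the recipe to match the new version.

Here is a simple example from the meta/recipes-devtools/cdrtools/cdrtools-native_3.01a20.bb recipe where the source comes from a single tarball. Notice the use of the PV variable:

SRC_URI = "ftp://ftp.berlios.de/pub/cdrecord/alpha/cdrtools-${PV}.tar.bz2"

Files mentioned in SRC_URI whose names end in a typical archive extension (e.g. .tar, .tar.gz, .tar.bz2, .zip, and so forth) are automatically extracted during the do_unpack task. For another example that specifies these types of files, see section 3.3.21.2, "Autotooled Package".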

Another way of specifying source is from an SCM (source control management) system. For Git repositories, you must specify SRCREV and you should specify PV to include the revision with SRCPV. Here is an example from the recipe meta/recipes-kernel/blktrace/blktrace_git.bb:

SRCREV = "d6918c8832793b4205ed3bfede78c2f915c23385"

PR = "r6"
PV = "1.0.5+git${SRCPV}"

SRC_URI = "git://git.kernel.dk/blktrace.git \
           file://ldflags.patch"

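If your SRC_URI statement includes URLs pointing to individual files fetched from a remote server other than a version control system, BitBake attempts to verify the files against checksums defined in your recipe to ensure they have not been tampered with or otherwise modified since the recipe was written. Two checksums are used: SRC_URI[md5sum] and SRC_URI[sha256sum].

If your SRC_URI variable points to more than a single URL (excluding SCM URLs), you need to provide the md5 and sha256 checksums for each URL. For these cases, you provide a name for each URL as part of the SRC_URI and then reference that name in the subsequent checksum statements. Here is an example:

SRC_URI = "${DEBIAN_MIRROR}/main/a/apmd/apmd_3.2.2.orig.tar.gz;name=tarball \
           ${DEBIAN_MIRROR}/main/a/apmd/apmd_${PV}.diff.gz;name=patch"

SRC_URI[tarball.md5sum] = "b1e6309e8331e0f4e6efd311c2d97fa8"
SRC_URI[tarball.sha256sum] = "7f7d9f60b7766b852881d40b8ff91d8e39fccb0d1d913102a5c75a2dbb52332d"

SRC_URI[patch.md5sum] = "57e1b689264ea80f78353519eece0c92"
SRC_URI[patch.sha256sum] = "7905ff96be93d725544d0040e425c42f9c05580db3c272f11cff75b9aa89d430"

Proper values for md5 and sha256 checksums might be available with other signatures on the download page for the upstream source (e.g. md5, sha1, sha256, GPG, and so forth). Because the OpenEmbedded build system only deals with sha256sum and md5sum, you should verify all the signatures you find by hand.

If no SRC_URI checksums are specified when you attempt to build the recipe, or you provide an incorrect checksum, the build produces an error for each missing or incorrect checksum. As part of the error message, the build system provides the checksum string corresponding to the fetched file. Once you have the correct checksums, you can copy and paste them into your recipe and then run the build again to continue.

Note

As mentioned, if the upstream source provides signatures for verifying the downloaded source code, you should verify those manually before setting the checksum values in the recipe and continuing with the build.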

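This final example is a bit more complicated and is from the meta/recipes-sato/rxvt-unicode/rxvt-unicode_9.20.bb recipe. Its SRC_URI specifies several files as the source for the recipe: a tarball, a patch file, a desktop file, and an icon.

SRC_URI = "http://dist.schmorp.de/rxvt-unicode/Attic/rxvt-unicode-${PV}.tar.bz2 \
           file://xwc.patch \
           file://rxvt.desktop \
           file://rxvt.png"

When you specify local files using the file:// URI protocol, the build system fetches files from the local machine. The path is relative to the FILESPATH variable and searches specific directories in a certain order: ${BP}, ${BPN}, and files. The directories are assumed to be subdirectories of the directory in which the recipe or append file resides. For another example that specifies these types of files, see section 3.3.21.1, "Single .c File Package (Hello World!)".

The previous example also specifies a patch file. Patch files are files whose names usually end in .patch or .diff but can end with compressed suffixes such as diff.gz and patch.bz2, for example. The build system automatically applies patches as described in section 3.3.7, "Patching Code".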

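3.3.6 Unpacking Code

During the build, the do_unpack task unpacks the source, with ${S} pointing to where it is unpacked.

If you are fetching your source files from an upstream source archived tarball and the tarball's internal structure matches the common convention of a top-level subdirectory named ${BPN}-${PV}, then you do not need to set S. However, if SRC_URI specifies to fetch source from an archive that does not use this convention, or from an SCM like Git or Subversion, your recipe needs to define S.

If processing your recipe using BitBake successfully unpacks the source files, you need to be sure that the directory pointed to by ${S} matches the structure of the source.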
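As a minimal sketch of the two cases that require S, consider the fragment below. The repository URL, the revision, and the archive directory name are placeholders invented for illustration, not values from a real recipe.

```bitbake
# Case 1: source fetched from Git. The checkout lands in ${WORKDIR}/git,
# so S has to point there.
SRC_URI = "git://git.example.com/foo.git"
# Placeholder revision; a real recipe pins an actual commit hash.
SRCREV = "0123456789abcdef0123456789abcdef01234567"
S = "${WORKDIR}/git"

# Case 2 (a different recipe): a tarball whose top-level directory is
# named Foo_1.3.0 instead of the conventional foo-1.3.0.
# S = "${WORKDIR}/Foo_${PV}"
```

After do_unpack has run, listing ${WORKDIR} shows whether the directory assigned to S really exists.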

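3.3.7 Patching Code

Sometimes it is necessary to patch code after it has been fetched. Any files mentioned in SRC_URI whose names end in .patch or .diff, or compressed versions of these suffixes (e.g. diff.gz), are treated as patches. The do_patch task automatically applies these patches.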

The build system should be able to apply patches with the “-p1” option (i.e. one directory level in the path will be stripped off). If your patch needs to have more directory levels stripped off, specify the number of levels using the “striplevel” option in the SRC_URI entry for the patch. Alternatively, if your patch needs to be applied in a specific subdirectory that is not specified in the patch file, use the “patchdir” option in the entry.

As with all local files referenced in SRC_URI using file://, you should place patch files in a directory next to the recipe either named the same as the base name of the recipe (BP and BPN) or “files”.
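The striplevel and patchdir options mentioned above are given as parameters on the individual SRC_URI entry. The following is a sketch only; the tarball URL and the patch file names are hypothetical.

```bitbake
# 0001: applied with the default -p1.
# 0002: its paths carry two leading directory levels, so strip both.
# 0003: its paths are relative to the docs/ subdirectory of ${S}.
SRC_URI = "http://example.com/releases/foo-${PV}.tar.gz \
           file://0001-fix-build.patch \
           file://0002-nested-paths.patch;striplevel=2 \
           file://0003-docs-only.patch;patchdir=docs \
          "
```

The three patch files would sit next to the recipe in a directory named after the recipe base name or in "files", as described above.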

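3.3.8 Licensing

Your recipe needs to have both the LICENSE and LIC_FILES_CHKSUM variables:

- LICENSE: This variable specifies the license for the software. If you do not know the license under which the software you are building is distributed, you should go to the source code and look for that information. Typical files containing this information include COPYING, LICENSE, and README files. You could also find the information near the top of a source file. For example, given a piece of software licensed under the GNU General Public License version 2, you would set LICENSE as follows:

LICENSE = "GPLv2"

The licenses you specify within LICENSE can have any name as long as you do not use spaces, since spaces are used as separators between license names. For standard licenses, use the names of the files in meta/files/common-licenses/ or the SPDXLICENSEMAP flag names defined in meta/conf/licenses.conf.

- LIC_FILES_CHKSUM: The OpenEmbedded build system uses this variable to make sure the license text has not changed. If it has, the build produces an error and gives you the chance to investigate and correct the problem.

You need to specify all applicable licensing files for the software. At the end of the configuration step, the build process compares the checksums of the files to be sure the text has not changed. For more explanation and examples of LIC_FILES_CHKSUM, see section 3.32.1, "Tracking License Changes".

To determine the correct checksum string, you can list the appropriate files in the LIC_FILES_CHKSUM variable with incorrect md5 strings, attempt to build the software, and then note the resulting error messages that report the correct md5 strings. See section 3.3.5, "Fetching Code", for additional information.

Here is an example that assumes the software has a COPYING file:

LIC_FILES_CHKSUM = "file://COPYING;md5=xxx"

When you try to build the software, the build system produces an error and gives you the correct string, which you can substitute into the recipe file for a subsequent build.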

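3.3.9 Dependencies

Most software packages have a short list of other packages that they require, which are called dependencies. These dependencies fall into two main categories: build-time dependencies, which are required when the software is built; and runtime dependencies, which are required to be installed on the target in order for the software to run.

Within a recipe, you specify build-time dependencies using the DEPENDS variable. Although nuances exist, items specified in DEPENDS should be names of other recipes. It is important that you specify all build-time dependencies explicitly. If you do not, due to the parallel nature of BitBake's execution, you can end up with a race condition where the dependency is present for one task of a recipe (e.g. do_configure) and then gone when the next task runs (e.g. do_compile).

Another consideration is that configure scripts might automatically check for optional dependencies and enable corresponding functionality if those dependencies are found. This behavior means that to ensure deterministic results and thus avoid more race conditions, you need to either explicitly specify these dependencies as well, or tell the configure script explicitly to disable the functionality. If you wish to make a recipe that is more generally useful (e.g. publish the recipe in a layer for others to use), instead of hard-disabling the functionality, you can use the PACKAGECONFIG variable to allow functionality and the corresponding dependencies to be enabled and disabled easily by other users of the recipe.

Similar to build-time dependencies, you specify runtime dependencies through a variable, RDEPENDS, which is package-specific. All variables that are package-specific need to have the name of the package added to the end as an override. Since the main package for a recipe has the same name as the recipe, and the recipe's name can be found through the ${PN} variable, you specify the dependencies for the main package by setting RDEPENDS_${PN}. If the package were named ${PN}-tools, then you would set RDEPENDS_${PN}-tools, and so forth.

Some runtime dependencies are set automatically at packaging time. These dependencies include any shared library dependencies (i.e. if a package "example" contains "libexample" and another package "mypackage" contains a binary that links to "libexample", then the OpenEmbedded build system automatically adds a runtime dependency to "mypackage" on "example"). See the "Automatically Added Runtime Dependencies" section in the Yocto Project Overview and Concepts Manual for further details.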
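The three kinds of declarations discussed in this section might look as follows in one recipe. This is a sketch under stated assumptions: the "ssl" feature, its configure switches, and the chosen dependency names are illustrative and depend entirely on the software being packaged.

```bitbake
# Build-time dependencies: names of other recipes.
DEPENDS = "zlib"

# Optional feature, off by default. Format of the flag value:
#   "args-if-enabled,args-if-disabled,build-deps,runtime-deps"
PACKAGECONFIG ??= ""
PACKAGECONFIG[ssl] = "--with-ssl,--without-ssl,openssl"

# Runtime dependencies are per package, so the package name is appended
# as an override.
RDEPENDS_${PN} = "bash"
RDEPENDS_${PN}-tools = "perl"
```

A user of the recipe can then switch the feature on from a .bbappend or configuration file by adding "ssl" to PACKAGECONFIG, which pulls in the openssl build dependency at the same time.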

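3.3.10 Configuring the Recipe

Most software provides some means of setting build-time configuration options before compilation. Typically, setting these options is accomplished by running a configure script with options, or by modifying a build configuration file.

Note

As of Yocto Project Release 1.7, some of the core recipes that package binary configuration scripts now disable the scripts due to the scripts previously requiring error-prone path substitution. The OpenEmbedded build system uses pkg-config now, which is much more robust. You can find a list of the *-config scripts that are disabled in the "Binary Configuration Scripts Disabled" section in the Yocto Project Reference Manual.

A major part of build-time configuration is about checking for build-time dependencies and possibly enabling optional functionality as a result. You need to specify any build-time dependencies for the software you are building in your recipe's DEPENDS value, in terms of other recipes that satisfy those dependencies. You can often find build-time or runtime dependencies described in the software's documentation.

The following list provides configuration items of note based on how your software is built:

- Autotools: If your source files have a configure.ac file, then your software is built using Autotools. If this is the case, you just need to worry about modifying the configuration.

When using Autotools, your recipe needs to inherit the autotools class and does not have to contain a do_configure task. However, you might still want to make some adjustments. For example, you can set EXTRA_OECONF or PACKAGECONFIG_CONFARGS to pass any needed configure options that are specific to the recipe.

- CMake: If your source files have a CMakeLists.txt file, then your software is built using CMake. If this is the case, you just need to worry about modifying the configuration.

When you use CMake, your recipe needs to inherit the cmake class and does not have to contain a do_configure task. You can make some adjustments by setting EXTRA_OECMAKE to pass any needed configure options that are specific to the recipe.

- Other: If your source files do not have a configure.ac or CMakeLists.txt file, then your software is built using some method other than Autotools or CMake. If this is the case, you normally need to provide a do_configure task in your recipe unless, of course, there is nothing to configure.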

Even if your software is not being built by Autotools or CMake, you still might not need to deal with any configuration issues. You need to determine if configuration is even a required step. You might need to modify a Makefile or some configuration file used for the build to specify necessary build options. Or, perhaps you might need to run a provided, custom configure script with the appropriate options.

For the case involving a custom configure script, you would run ./configure --help and look for the options you need to set.

Once configuration succeeds, it is always good practice to look at the log.do_configure file to ensure that the appropriate options have been enabled and no additional build-time dependencies need to be added to DEPENDS. For example, if the configure script reports that it found something not mentioned in DEPENDS, or that it did not find something that it needed for some desired optional functionality, then you would need to add those to DEPENDS. Looking at the log might also reveal items being checked for, enabled, or both that you do not want, or items not being found that are in DEPENDS, in which case you would need to look at passing extra options to the configure script as needed. For reference information on configure options specific to the software you are building, you can consult the output of the ./configure --help command within ${S} or consult the software’s upstream documentation.
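The Autotools and CMake cases from the list above can be sketched as follows. The option names (--enable-foo, --without-bar, BUILD_TESTING) are illustrative assumptions; the real ones come from the ./configure --help output or the CMakeLists.txt of the software being built.

```bitbake
# Recipe A: Autotools-based software (source tree has configure.ac).
inherit autotools
# Extra arguments passed to the configure script.
EXTRA_OECONF = "--enable-foo --without-bar"

# Recipe B (a separate recipe): CMake-based software (has CMakeLists.txt).
# inherit cmake
# EXTRA_OECMAKE = "-DBUILD_TESTING=OFF"
```

In both cases the class supplies do_configure, so the recipe only carries the options, and log.do_configure shows whether they had the intended effect.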

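3.3.11 Using Headers to Interface with Devices

If your recipe builds an application that needs to communicate with some device or needs an API into a custom kernel, you need to provide appropriate header files. Under no circumstances should you ever modify the existing meta/recipes-kernel/linux-libc-headers/linux-libc-headers.inc file. These headers are used to build libc and must not be compromised with custom or machine-specific header information. If you customize libc through modified headers, all other applications that use libc are affected.

Warning

Never copy and customize the libc header file (i.e. meta/recipes-kernel/linux-libc-headers/linux-libc-headers.inc).

The correct way to interface to a device or custom kernel is to use a separate package that provides the additional headers for the driver or other unique interfaces. When doing so, your application also becomes responsible for creating a dependency on that specific provider.

Consider the following:

- Never modify linux-libc-headers.inc. Consider that file to be part of the libc system, and not something you use to access the kernel directly. You should access libc through specific libc calls.

- Applications that must talk directly to devices should either provide necessary headers themselves, or establish a dependency on a special headers package that is specific to that driver.

For example, suppose you want to modify an existing header that adds I/O control or network support. If the modifications are used by a small number of programs, providing a unique version of a header is easy and has little impact. When doing so, bear in mind the guidelines in the previous list.

Note

If for some reason your changes need to modify the behavior of the libc, and subsequently all other applications on the system, use a .bbappend to modify the linux-kernel-headers.inc file. However, take care to not make the changes machine specific.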

Consider a case where your kernel is older and you need an older libc ABI. The headers installed by your recipe should still be a standard mainline kernel, not your own custom one.

When you use custom kernel headers you need to get them from STAGING_KERNEL_DIR, which is the directory with kernel headers that are required to build out-of-tree modules. Your recipe will also need the following:

do_configure[depends] += "virtual/kernel:do_shared_workdir"

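3.3.12 Compilation

During a build, the do_compile task happens after source is fetched, unpacked, and configured. If the recipe passes through do_compile successfully, nothing needs to be done.

However, if the compile step fails, you need to diagnose the failure. Here are some common issues that cause failures:

Note

For cases where improper paths are detected for configuration files or for when libraries/headers cannot be found, be sure you are using the more robust pkg-config. See section 3.3.10, "Configuring the Recipe", for additional information.

- Parallel build failures: These failures manifest themselves as intermittent errors, or errors reporting that a file or directory that should be created by some other part of the build process could not be found. This type of failure can occur even if, upon inspection, the file or directory does exist after the build has failed, because that part of the build process happened in the wrong order.

To fix the problem, you need to either satisfy the missing dependency in the Makefile or whatever script produced the Makefile, or (as a workaround) set PARALLEL_MAKE to an empty string:

PARALLEL_MAKE = ""

For information on parallel Makefile issues, see section 3.30.12, "Debugging Parallel Make Races".

- Improper host path usage: This failure applies to recipes building for the target or nativesdk only. The failure occurs when the compilation process uses improper headers, libraries, or other files from the host system when cross-compiling for the target.

To fix the problem, examine the log.do_compile file to identify the host paths being used (e.g. /usr/include, /usr/lib, and so forth) and then either add configure options, apply a patch, or do both.

- Failure to find required libraries/header files: If a build-time dependency is missing because it has not been declared in DEPENDS, or because the dependency exists but the path used by the build process to find the file is incorrect and the configure step did not detect it, the compilation process could fail. For either of these failures, the compilation process notes that files could not be found. In these cases, you need to go back and add additional options to the configure script as well as possibly add additional build-time dependencies to DEPENDS.

Occasionally, it is necessary to apply a patch to the source to ensure the correct paths are used. If you need to specify paths to find files staged into the sysroot from other recipes, use the variables that the OpenEmbedded build system provides (e.g. STAGING_BINDIR, STAGING_INCDIR, STAGING_DATADIR, and so forth).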
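As a rough illustration of the last point, assume a Makefile that accepts its header and library locations through two make variables. The variable names FOO_INCDIR and FOO_LIBDIR and the "foo" recipe are hypothetical; an actual Makefile may need a patch before it honors anything of the kind.

```bitbake
# The library and headers come from another recipe, so declare it.
DEPENDS += "foo"

# Point the Makefile at the files staged into the sysroot instead of the
# host's /usr/include and /usr/lib.
EXTRA_OEMAKE += "'FOO_INCDIR=${STAGING_INCDIR}/foo' 'FOO_LIBDIR=${STAGING_LIBDIR}'"
```

After rebuilding, log.do_compile should no longer show host paths on the compiler command lines.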

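3.3.13 Installing

During do_install, the task copies the built files along with their hierarchy to locations that mirror their locations on the target device. The installation process copies files from the ${S}, ${B}, and ${WORKDIR} directories to the ${D} directory to create the structure as it should appear on the target system.

How your software is built affects what you must do to be sure your software is installed correctly. The following list describes what you must do for installation depending on the type of build system used by the software being built:

- Autotools and CMake: If the software your recipe is building uses Autotools or CMake, the OpenEmbedded build system understands how to install the software. Consequently, you do not have to have a do_install task as part of your recipe. You just need to make sure the install portion of the build completes with no issues. However, if you wish to install additional files not already being installed by make install, you should do this using a do_install_append function using the install command as described in the "Manual" bulleted item later in this list.

- Other (using make install): You need to define a do_install function in your recipe. The function should call oe_runmake install and will likely need to pass in the destination directory as well. How you pass that path depends on how the Makefile being run is written (e.g. DESTDIR=${D}, PREFIX=${D}, INSTALLROOT=${D}, and so forth).

For an example recipe using make install, see section 3.3.21.3, "Makefile-Based Package".

- Manual: You need to define a do_install function in your recipe. The function must first use install -d to create the directories under ${D}. Once the directories exist, your function can use install to manually install the built software into the directories.

You can find more information on install at http://www.gnu.org/software/coreutils/manual/html_node/install-invocation.html.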

For the scenarios that do not use Autotools or CMake, you need to track the installation and diagnose and fix any issues until everything installs correctly. You need to look in the default location of ${D}, which is ${WORKDIR}/image, to be sure your files have been installed correctly.
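A manual installation along the lines described above might look like the following sketch. The file names myapp and myapp.conf are hypothetical, and the example assumes the binary was produced in ${B} and the configuration file was fetched into ${WORKDIR}.

```bitbake
# Manual installation: create each directory under ${D} first, then copy.
do_install() {
    install -d ${D}${bindir}
    install -m 0755 ${B}/myapp ${D}${bindir}/myapp

    install -d ${D}${sysconfdir}
    install -m 0644 ${WORKDIR}/myapp.conf ${D}${sysconfdir}/myapp.conf
}

# For an Autotools or CMake recipe (a separate recipe), keep the class's
# do_install and only add what "make install" leaves out:
# do_install_append() {
#     install -d ${D}${sysconfdir}
#     install -m 0644 ${WORKDIR}/myapp.conf ${D}${sysconfdir}/myapp.conf
# }
```

Using ${bindir} and ${sysconfdir} rather than literal /usr/bin and /etc keeps the recipe correct if a distribution changes those locations.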

Note

- During the installation process, you might need to modify some of the installed files to suit the target layout. For example, you might need to replace hard-coded paths in an initscript with values of variables provided by the build system, such as replacing /usr/bin/ with ${bindir}. If you do perform such modifications during do_install, be sure to modify the destination file after copying rather than before copying. Modifying after copying ensures that the build system can re-execute do_install if needed.

- oe_runmake install, which can be run directly or can be run indirectly by the autotools and cmake classes, runs make install in parallel. Sometimes, a Makefile can have missing dependencies between targets that can result in race conditions. If you experience intermittent failures during do_install, you might be able to work around them by disabling parallel Makefile installs by adding the following to the recipe:

PARALLEL_MAKEINST = ""

See PARALLEL_MAKEINST for additional information.

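3.3.14 Enabling System Services

If you want to install a service, which is a process that usually starts on boot and runs in the background, then you must include some additional definitions in your recipe.

If you are adding services and the service initialization script or the service file itself is not installed, you must provide for that installation in your recipe using a do_install_append function. If your recipe already has a do_install function, update the function near its end rather than adding an additional do_install_append function.

When you create the installation for your services, you need to accomplish what is normally done by make install. In other words, make sure your installation arranges the output similar to how it is arranged on the target system.

The OpenEmbedded build system provides support for starting services two different ways:

- SysVinit: SysVinit is a system and service manager that manages the init system used to control the very basic functions of your system. The init program is the first program started by the Linux kernel when the system boots. Init then controls the startup, running, and shutdown of all other programs.

To enable a service using SysVinit, your recipe needs to inherit the update-rc.d class. The class helps facilitate safely installing the package on the target.

You need to set the INITSCRIPT_PACKAGES, INITSCRIPT_NAME, and INITSCRIPT_PARAMS variables within your recipe.

- systemd: System Management Daemon (systemd) was designed to replace SysVinit and to provide enhanced management of services. For more information on systemd, see the systemd homepage.

To enable a service using systemd, your recipe needs to inherit the systemd class. See the systemd.bbclass file located in your Source Directory for more information.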
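The two approaches can be sketched as follows. The service name "myservice", the file names, and the start/stop priorities are assumptions made for illustration; both variants expect the script or unit file to be listed in SRC_URI so that it is available in ${WORKDIR}.

```bitbake
# Variant 1: SysVinit
inherit update-rc.d
INITSCRIPT_PACKAGES = "${PN}"
INITSCRIPT_NAME = "myservice"
# Arguments handed to update-rc.d on the target.
INITSCRIPT_PARAMS = "defaults 90 10"

do_install_append() {
    install -d ${D}${sysconfdir}/init.d
    install -m 0755 ${WORKDIR}/myservice.init ${D}${sysconfdir}/init.d/myservice
}

# Variant 2 (a separate recipe): systemd
# inherit systemd
# SYSTEMD_SERVICE_${PN} = "myservice.service"
#
# do_install_append() {
#     install -d ${D}${systemd_system_unitdir}
#     install -m 0644 ${WORKDIR}/myservice.service ${D}${systemd_system_unitdir}/
# }
```

In both variants the class generates the enable and disable steps for the package; the recipe only has to install the file and name it.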

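3.3.15 Packaging

Successful packaging is a combination of automated processes performed by the OpenEmbedded build system and some specific steps you need to take. The following list describes the process:

- Splitting Files: The do_package task splits the files produced by the recipe into logical components. Even software that produces a single binary might still have debug symbols, documentation, and other logical components that should be split out. The do_package task ensures that files are split up and packaged correctly.

- Running QA Checks: The insane class adds a step to the package generation process so that output quality assurance checks are generated by the OpenEmbedded build system. This step performs a range of checks to be sure the build's output is free of common problems that show up during runtime. For information on these checks, see the insane class and the "QA Error and Warning Messages" chapter in the Yocto Project Reference Manual.

- Hand-Checking Your Packages: After you build your software, you need to be sure your packages are correct. Examine the ${WORKDIR}/packages-split directory and make sure files are where you expect them to be. If you discover problems, you can set PACKAGES, FILES, do_install(_append), and so forth as needed.

- Splitting an Application into Multiple Packages: If you need to split an application into several packages, see section 3.3.21.4, "Splitting an Application into Multiple Packages", for an example.

- Installing a Post-Installation Script: For an example showing how to install a post-installation script, see section 3.3.19, "Post-Installation Scripts".

- Marking Package Architecture: Depending on what your recipe is building and how it is configured, it might be important to mark the packages produced as being specific to a particular machine, or to mark them as not being specific to a particular machine or architecture at all.

By default, packages apply to any machine with the same architecture as the target machine. When a recipe produces packages that are machine-specific (e.g. the MACHINE value is passed into the configure script or a patch is applied only for a particular machine), you should mark them as such by adding the following to the recipe:

PACKAGE_ARCH = "${MACHINE_ARCH}"

On the other hand, if the recipe produces packages that do not contain anything specific to the target machine or architecture at all (e.g. recipes that simply package script files or configuration files), you should use the allarch class to do this for you by adding this to your recipe:

inherit allarch

Ensuring that the package architecture is correct is not critical while you are doing the first few builds of your recipe. However, it is important in order to ensure that your recipe rebuilds (or does not rebuild) appropriately in response to changes in configuration, and to ensure that you get the appropriate packages installed on the target machine, particularly if you run separate builds for more than one target machine.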

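3.3.16 Sharing Files Between Recipes

Recipes often need to use files provided by other recipes on the build host. For example, an application linking to a common library needs access to the library itself and its associated headers. The way this access is accomplished is by populating a sysroot with files. Each recipe has two sysroots in its work directory, one for target files (recipe-sysroot) and one for files that are native to the build host (recipe-sysroot-native).

Note

You could find the term "staging" used within the Yocto Project regarding files populating sysroots (e.g. the STAGING_DIR variable).

Recipes should never populate the sysroot directly (i.e. write files into sysroot). Instead, files should be installed into standard locations during the do_install task within the ${D} directory. The reason for this limitation is that almost all files that populate the sysroot are cataloged in manifests in order to ensure the files can be removed later when a recipe is either modified or removed. Thus, the sysroot is able to remain free from stale files.

A subset of the files installed by the do_install task are used by the do_populate_sysroot task as defined by the SYSROOT_DIRS variable to automatically populate the sysroot. It is possible to modify the list of directories that populate the sysroot. The following example shows how you could add the /opt directory to the list of directories within a recipe: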

SYSROOT_DIRS += "/opt"

For a more complete description of the do_populate_sysroot task and its associated functions, see the staging class.

3.3.17 Using Virtual Providers

Prior to a build, if you know that several different recipes provide the same functionality, you can use a virtual provider (i.e. virtual/*) as a placeholder for the actual provider. The actual provider is determined at build-time.

A common scenario where a virtual provider is used would be for the kernel recipe. Suppose you have three kernel recipes whose PN values map to kernel-big, kernel-mid, and kernel-small. Furthermore, each of these recipes in some way uses a PROVIDES statement that essentially identifies itself as being able to provide virtual/kernel. Here is one way through the kernel class:

PROVIDES += "${@ "virtual/kernel" if (d.getVar("KERNEL_PACKAGE_NAME") == "kernel") else "" }"

Any recipe that inherits the kernel class is going to utilize a PROVIDES statement that identifies that recipe as being able to provide the virtual/kernel item.

Now comes the time to actually build an image and you need a kernel recipe, but which one? You can configure your build to call out the kernel recipe you want by using the PREFERRED_PROVIDER variable. As an example, consider the x86-base.inc include file, which is a machine (i.e. MACHINE) configuration file. This include file is the reason all x86-based machines use the linux-yocto kernel. Here are the relevant lines from the include file:

PREFERRED_PROVIDER_virtual/kernel ??= "linux-yocto"
PREFERRED_VERSION_linux-yocto ??= "4.15%"

When you use a virtual provider, you do not have to “hard code” a recipe name as a build dependency. You can use the DEPENDS variable to state the build is dependent on virtual/kernel for example:

DEPENDS = "virtual/kernel"

During the build, the OpenEmbedded build system picks the correct recipe needed for the virtual/kernel dependency based on the PREFERRED_PROVIDER variable. If you want to use the small kernel mentioned at the beginning of this section, configure your build as follows:

PREFERRED_PROVIDER_virtual/kernel ??= "kernel-small"

Note

Any recipe that PROVIDES a virtual/* item that is ultimately not selected through PREFERRED_PROVIDER does not get built. Preventing these recipes from building is usually the desired behavior since this mechanism's purpose is to select between mutually exclusive alternative providers.

The following lists specific examples of virtual providers:

- virtual/kernel: Provides the name of the kernel recipe to use when building a kernel image.
- virtual/bootloader: Provides the name of the bootloader to use when building an image.
- virtual/mesa: Provides gbm.pc.
- virtual/egl: Provides egl.pc and possibly wayland-egl.pc.
- virtual/libgl: Provides gl.pc (i.e. libGL).
- virtual/libgles1: Provides glesv1_cm.pc (i.e. libGLESv1_CM).
- virtual/libgles2: Provides glesv2.pc (i.e. libGLESv2).

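3.3.18 Properly Versioning Pre-Release Recipes

Sometimes the name of a recipe can lead to versioning problems when the recipe is upgraded to a final release. For example, consider the irssi_0.8.16-rc1.bb recipe file in the list of example recipes in section 3.3.3, "Storing and Naming the Recipe". This recipe is at a release candidate stage (i.e. "rc1"). When the recipe is released, the recipe filename becomes irssi_0.8.16.bb. The version change from 0.8.16-rc1 to 0.8.16 is seen as a decrease by the build system and package managers, so the resulting packages do not correctly trigger an upgrade.

In order to ensure the versions compare properly, the recommended convention is to set PV within the recipe to "previous_version+current_version". You can use an additional variable so that you can use the current version elsewhere. Here is an example:

REALPV = "0.8.16-rc1"
PV = "0.8.15+${REALPV}"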

3.3.19 Post-Installation Scripts

Post-installation scripts run immediately after installing a package on the target or during image creation when a package is included in an image. To add a post-installation script to a package, add a pkg_postinst_PACKAGENAME() function to the recipe file (.bb) and replace PACKAGENAME with the name of the package you want to attach to the postinst script. To apply the post-installation script to the main package for the recipe, which is usually what is required, specify ${PN} in place of PACKAGENAME.

A post-installation function has the following structure:

pkg_postinst_PACKAGENAME() {
    # Commands to carry out
}

The script defined in the post-installation function is called when the root filesystem is created. If the script succeeds, the package is marked as installed. If the script fails, the package is marked as unpacked and the script is executed when the image boots again.

Note

Any RPM post-installation script that runs on the target should return a 0 exit code. RPM does not allow non-zero exit codes for these scripts, and the RPM package manager will cause the package to fail installation on the target.

Sometimes it is necessary for the execution of a post-installation script to be delayed until the first boot. For example, the script might need to be executed on the device itself. To delay script execution until boot time, you must explicitly mark post installs to defer to the target. You can use pkg_postinst_ontarget() or call postinst-intercepts defer_to_first_boot from pkg_postinst(). Any failure of a pkg_postinst() script (including exit 1) triggers an error during the do_rootfs task.

If you have recipes that use pkg_postinst function and they require the use of non-standard native tools that have dependencies during rootfs construction, you need to use the PACKAGE_WRITE_DEPS variable in your recipe to list these tools. If you do not use this variable, the tools might be missing and execution of the post-installation script is deferred until first boot. Deferring the script to first boot is undesirable and for read-only rootfs impossible.
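The pieces described above could be combined as in the sketch below. The choice of glib-compile-schemas and glib-2.0-native, as well as the myapp paths, are illustrative assumptions and not taken from a particular recipe.

```bitbake
# Native tool that the image-creation-time script calls; listing it here
# makes sure it is available when the root filesystem is assembled.
PACKAGE_WRITE_DEPS += "glib-2.0-native"

# Runs when the package is installed. During image creation $D holds the
# path of the root filesystem being assembled; on the target it is empty.
pkg_postinst_${PN}() {
    glib-compile-schemas $D${datadir}/glib-2.0/schemas
}

# Explicitly deferred: runs on the device itself at first boot.
pkg_postinst_ontarget_${PN}() {
    mkdir -p ${localstatedir}/lib/myapp
    date > ${localstatedir}/lib/myapp/first-boot
}
```

Prefixing paths with $D is what lets the same script body work both at image creation and on a running target.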

Note

Equivalent support for pre-install, pre-uninstall, and post-uninstall scripts exists by way of pkg_preinst, pkg_prerm, and pkg_postrm, respectively. These scripts work in exactly the same way as does pkg_postinst with the exception that they run at different times. Also, because of when they run, they are not applicable to being run at image creation time like pkg_postinst.

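3.3.20 Testing

The final step for completing your recipe is to be sure that the software you built runs correctly. To accomplish runtime testing, add the build's output packages to your image and test them on the target.

For information on how to customize your image by adding specific packages, see section 3.2, "Customizing Images".

3.3.21 Examples

To help summarize how to write a recipe, this section provides some examples given various scenarios:

- Recipes that use local files
- Using an Autotooled package
- Using a Makefile-based package
- Splitting an application into multiple packages
- Adding binaries to an image

3.3.21.1 Single .c File Package (Hello World!)

Building an application from a single file that is stored locally (e.g. under files) requires a recipe that has the file listed in the SRC_URI variable. Additionally, you need to manually write the do_compile and do_install tasks. The S variable defines the directory containing the source code, which is set to WORKDIR in this case, the directory BitBake uses for the build.

SUMMARY = "Simple helloworld application"
SECTION = "examples"
LICENSE = "MIT"
LIC_FILES_CHKSUM = "file://${COMMON_LICENSE_DIR}/MIT;md5=0835ade698e0bcf8506ecda2f7b4f302"

SRC_URI = "file://helloworld.c"

S = "${WORKDIR}"

do_compile() {
    ${CC} helloworld.c -o helloworld
}

do_install() {
    install -d ${D}${bindir}
    install -m 0755 helloworld ${D}${bindir}
}

By default, the helloworld, helloworld-dbg, and helloworld-dev packages are built. For information on how to customize the packaging process, see section 3.3.21.4, "Splitting an Application into Multiple Packages".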

3.3.21.2 Autotooled Package

Applications that use Autotools such as autoconf and automake require a recipe that has a source archive listed in SRC_URI and also inherit the autotools class, which contains the definitions of all the steps needed to build an Autotool-based application. The result of the build is automatically packaged. And, if the application uses NLS for localization, packages with local information are generated (one package per language). Following is one example: (hello_2.3.bb)

SUMMARY = "GNU Helloworld application"
SECTION = "examples"
LICENSE = "GPLv2+"
LIC_FILES_CHKSUM = "file://COPYING;md5=751419260aa954499f7abaabaa882bbe"

SRC_URI = "${GNU_MIRROR}/hello/hello-${PV}.tar.gz"

inherit autotools gettext

The variable LIC_FILES_CHKSUM is used to track source license changes as described in the “Tracking License Changes” section in the Yocto Project Overview and Concepts Manual. You can quickly create Autotool-based recipes in a manner similar to the previous example.

3.3.21.3 Makefile-Based Package

Applications that use GNU make also require a recipe that has the source archive listed in SRC_URI. You do not need to add a do_compile step since by default BitBake starts the make command to compile the application. If you need additional make options, you should store them in the EXTRA_OEMAKE or PACKAGECONFIG_CONFARGS variables. BitBake passes these options into the GNU make invocation. Note that a do_install task is still required. Otherwise, BitBake runs an empty do_install task by default.

Some applications might require extra parameters to be passed to the compiler. For example, the application might need an additional header path. You can accomplish this by adding to the CFLAGS variable. The following example shows this:

CFLAGS_prepend = "-I ${S}/include "

In the following example, mtd-utils is a makefile-based package:

SUMMARY = "Tools for managing memory technology devices"
SECTION = "base"
DEPENDS = "zlib lzo e2fsprogs util-linux"
HOMEPAGE = "http://www.linux-mtd.infradead.org/"
LICENSE = "GPLv2+"
LIC_FILES_CHKSUM = "file://COPYING;md5=0636e73ff0215e8d672dc4c32c317bb3 \
                    file://include/common.h;beginline=1;endline=17;md5=ba05b07912a44ea2bf81ce409380049c"

# Use the latest version at 26 Oct, 2013
SRCREV = "9f107132a6a073cce37434ca9cda6917dd8d866b"
SRC_URI = "git://git.infradead.org/mtd-utils.git \
           file://add-exclusion-to-mkfs-jffs2-git-2.patch \
          "

PV = "1.5.1+git${SRCPV}"

S = "${WORKDIR}/git"

EXTRA_OEMAKE = "'CC=${CC}' 'RANLIB=${RANLIB}' 'AR=${AR}' 'CFLAGS=${CFLAGS} -I${S}/include -DWITHOUT_XATTR' 'BUILDDIR=${S}'"

do_install () {
    oe_runmake install DESTDIR=${D} SBINDIR=${sbindir} MANDIR=${mandir} INCLUDEDIR=${includedir}
}

PACKAGES =+ "mtd-utils-jffs2 mtd-utils-ubifs mtd-utils-misc"

FILES_mtd-utils-jffs2 = "${sbindir}/mkfs.jffs2 ${sbindir}/jffs2dump ${sbindir}/jffs2reader ${sbindir}/sumtool"
FILES_mtd-utils-ubifs = "${sbindir}/mkfs.ubifs ${sbindir}/ubi*"
FILES_mtd-utils-misc = "${sbindir}/nftl* ${sbindir}/ftl* ${sbindir}/rfd* ${sbindir}/doc* ${sbindir}/serve_image ${sbindir}/recv_image"

PARALLEL_MAKE = ""

BBCLASSEXTEND = "native"

3.3.21.4 Splitting an Application into Multiple Packages

You can use the variables PACKAGES and FILES to split an application into multiple packages.

Following is an example that uses the libxpm recipe. By default, this recipe generates a single package that contains the library along with a few binaries. You can modify the recipe to split the binaries into separate packages:

require xorg-lib-common.inc

SUMMARY = "Xpm: X Pixmap extension library"
LICENSE = "BSD"
LIC_FILES_CHKSUM = "file://COPYING;md5=51f4270b012ecd4ab1a164f5f4ed6cf7"
DEPENDS += "libxext libsm libxt"
PE = "1"

XORG_PN = "libXpm"

PACKAGES =+ "sxpm cxpm"
FILES_cxpm = "${bindir}/cxpm"
FILES_sxpm = "${bindir}/sxpm"

In the previous example, we want to ship the sxpm and cxpm binaries in separate packages. Since bindir would be packaged into the main PN package by default, we prepend the PACKAGES variable so additional package names are added to the start of list. This results in the extra FILES_* variables then containing information that define which files and directories go into which packages. Files included by earlier packages are skipped by latter packages. Thus, the main PN package does not include the above listed files.

3.3.21.5 Packaging Externally Produced Binaries

Sometimes, you need to add pre-compiled binaries to an image. For example, suppose that binaries for proprietary code exist, which are created by a particular division of a company. Your part of the company needs to use those binaries as part of an image that you are building using the OpenEmbedded build system. Since you only have the binaries and not the source code, you cannot use a typical recipe that expects to fetch the source specified in SRC_URI and then compile it.

One method is to package the binaries and then install them as part of the image. Generally, it is not a good idea to package binaries since, among other things, it can hinder the ability to reproduce builds and could lead to compatibility problems with ABI in the future. However, sometimes you have no choice.

The easiest solution is to create a recipe that uses the bin_package class and to be sure that you are using default locations for build artifacts. In most cases, the bin_package class handles "skipping" the configure and compile steps as well as sets things up to grab packages from the appropriate area. In particular, this class sets noexec on both the do_configure and do_compile tasks, sets FILES_${PN} to "/" so that it picks up all files, and sets up a do_install task, which effectively copies all files from ${S} to ${D}. The bin_package class works well when the files extracted into ${S} are already laid out in the way they should be laid out on the target. For more information on these variables, see the FILES, PN, S, and D variables in the Yocto Project Reference Manual's variable glossary.

Notes

- Using DEPENDS is a good idea even for components distributed in binary form, and is often necessary for shared libraries. For a shared library, listing the library dependencies in DEPENDS makes sure that the libraries are available in the staging sysroot when other recipes link against the library, which might be necessary for successful linking.

- Using DEPENDS also allows runtime dependencies between packages to be added automatically. See the "Automatically Added Runtime Dependencies" section in the Yocto Project Overview and Concepts Manual for more information.

If you cannot use the bin_package class, you need to be sure you are doing the following:

- Create a recipe where the do_configure and do_compile tasks do nothing: It is usually sufficient to just not define these tasks in the recipe, because the default implementations do nothing unless a Makefile is found in ${S}.

If ${S} might contain a Makefile, or if you inherit some class that replaces do_configure and do_compile with custom versions, then you can use the [noexec] flag to turn the tasks into no-ops, as follows:

do_configure[noexec] = "1"
do_compile[noexec] = "1"

Unlike deleting the tasks, using the flag preserves the dependency chain from the do_fetch, do_unpack, and do_patch tasks to the do_install task.

- Make sure your do_install task installs the binaries appropriately.

- Ensure that you set up FILES (usually FILES_${PN}) to point to the files you have installed, which of course depends on where you have installed them and whether those files are in different locations than the defaults.
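Put together, a recipe that follows these three points without the bin_package class might look like this sketch. The tool name, the archive name, and its internal layout are hypothetical, and LICENSE = "CLOSED" is used only to keep the example short.

```bitbake
SUMMARY = "Prebuilt vendor tool (hypothetical example)"
LICENSE = "CLOSED"

# Local archive containing bin/vendortool; it is unpacked by do_unpack.
SRC_URI = "file://vendortool-${PV}.tar.gz"
S = "${WORKDIR}/vendortool-${PV}"

# Nothing to configure or compile, but keep the task chain intact.
do_configure[noexec] = "1"
do_compile[noexec] = "1"

do_install() {
    install -d ${D}${bindir}
    install -m 0755 ${S}/bin/vendortool ${D}${bindir}/vendortool
}

FILES_${PN} = "${bindir}/vendortool"
```

If the binary links against shared libraries, listing the recipes that provide them in DEPENDS follows the advice in the notes above.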

3.3.22 Following Recipe Style Guidelines

When writing recipes, it is good to conform to existing style guidelines. The OpenEmbedded Styleguide wiki page provides rough guidelines for preferred recipe style.

It is common for existing recipes to deviate a bit from this style. However, aiming for at least a consistent style is a good idea. Some practices, such as omitting spaces around = operators in assignments or ordering recipe components in an erratic way, are widely seen as poor style.

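3.3.23 Recipe Syntax

Understanding recipe file syntax is important for writing recipes. The following list overviews the basic items that make up a BitBake recipe file. For more complete BitBake syntax descriptions, see the "Syntax and Operators" chapter of the BitBake User Manual.

- Variable Assignments and Manipulations: Variable assignments allow a value to be assigned to a variable. The assignment can be static text or might include the contents of other variables. In addition to the assignment, appending and prepending operations are also supported.

The following example shows some of the ways you can use variables in recipes:

S = "${WORKDIR}/postfix-${PV}"
CFLAGS += "-DNO_ASM"
SRC_URI_append = " file://fixup.patch"

- Functions: Functions provide a series of actions to be performed. You usually use functions to override the default implementation of a task function or to complement a default function (i.e. append or prepend to an existing function). Standard functions use sh shell syntax, although access to OpenEmbedded variables and internal methods is also available.

The following is an example function from the sed recipe:

do_install () {
    autotools_do_install
    install -d ${D}${base_bindir}
    mv ${D}${bindir}/sed ${D}${base_bindir}/sed
    rmdir ${D}${bindir}/
}

It is also possible to implement new functions that are called between existing tasks as long as the new functions are not replacing or complementing the default functions. You can implement functions in Python instead of shell. Both of these options are not seen in the majority of recipes.

- Keywords: BitBake recipes use only a few keywords. You use keywords to include common functions (inherit), load parts of a recipe from other files (include and require), and export variables to the environment (export).

The following example shows the use of some of these keywords:

export POSTCONF = "${STAGING_BINDIR}/postconf"
inherit autoconf
require otherfile.inc

- Comments (#): Any lines that begin with the hash character (#) are treated as comment lines and are ignored:

# This is a comment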

This next list summarizes the most important and most commonly used parts of the recipe syntax. For more information on these parts of the syntax, you can reference the "Syntax and Operators" chapter in the BitBake User Manual.

- Line Continuation (\): Use the backward slash (\) character to split a statement over multiple lines. Place the slash character at the end of the line that is to be continued on the next line:

VAR = "A really long \
       line"

Note

You cannot have any characters, including spaces or tabs, after the slash character.

- Using Variables (${VARNAME}): Use the ${VARNAME} syntax to access the contents of a variable:

SRC_URI = "${SOURCEFORGE_MIRROR}/libpng/zlib-${PV}.tar.gz"

Note

It is important to understand that the value of a variable expressed in this form does not get substituted automatically. The expansion of these expressions happens on-demand later (e.g. usually when a function that makes reference to the variable executes). This behavior ensures that the values are most appropriate for the context in which they are finally used. On the rare occasion that you do need the variable expression to be expanded immediately, you can use the := operator instead of = when you make the assignment, but this is not generally needed.

- Quote All Assignments ("value"): Use double quotes around values in all variable assignments (e.g. "value"). Following is an example:

VAR1 = "${OTHERVAR}"
VAR2 = "The version is ${PV}"

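- Conditional Assignment (?=): Conditional assignment is used to assign a value to a variable, but only when the variable is currently unset. Use the question mark followed by the equal sign (?=) to make a "soft" assignment used for conditional assignment. Typically, "soft" assignments are used in the local.conf file for variables that are allowed to come through from the external environment.

Here is an example where VAR1 is set to "New value" if it is currently empty. However, if VAR1 has already been set, it remains unchanged:

VAR1 ?= "New value"

In this next example, VAR1 is left with the value "Original value":

VAR1 = "Original value"
VAR1 ?= "New value"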

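- Appending (+=): Use the plus character followed by the equals sign (+=) to append values to existing variables.

Note

This operator adds a space between the existing content of the variable and the new content.

Here is an example:

SRC_URI += "file://fix-makefile.patch"

- Prepending (=+): Use the equals sign followed by the plus character (=+) to prepend values to existing variables.

Note

This operator adds a space between the new content and the existing content of the variable.

Here is an example:

VAR =+ "Starts"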

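- Appending (_append): Use the _append operator to append values to existing variables. This operator does not add any additional space. Also, the operator is applied after all the += and =+ operators have been applied and after all = assignments have occurred.

The following example shows the space being explicitly added to the start to ensure the appended value is not merged with the existing value:

SRC_URI_append = " file://fix-makefile.patch"

You can also use the _append operator with overrides, which results in the actions only being performed for the specified target or machine:

SRC_URI_append_sh4 = " file://fix-makefile.patch"

- Prepending (_prepend): Use the _prepend operator to prepend values to existing variables. This operator does not add any additional space. Also, the operator is applied after all the += and =+ operators have been applied and after all = assignments have occurred.

The following example shows the space being explicitly added to the end to ensure the prepended value is not merged with the existing value:

CFLAGS_prepend = "-I${S}/myincludes "

You can also use the _prepend operator with overrides, which results in the actions only being performed for the specified target or machine:

CFLAGS_prepend_sh4 = "-I${S}/myincludes "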

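- Overrides: You can use overrides to set a value conditionally, typically based on how the recipe is being built. For example, to set the KBRANCH variable to "standard/base" for any target machine except qemuarm, where it should be set to "standard/arm-versatile-926ejs", you would do the following:

    KBRANCH = "standard/base"
    KBRANCH_qemuarm = "standard/arm-versatile-926ejs"

Overrides are also used to separate alternate values of a variable in other situations. For example, when setting variables such as FILES and RDEPENDS that are specific to individual packages produced by a recipe, you should always use an override that specifies the name of the package.

- Indentation: Use spaces for indentation rather than tabs. For shell functions, both currently work. However, it is a policy decision of the Yocto Project to use tabs in shell functions. Be aware that some layers have a policy to use spaces for all indentation.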

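- Using Python for Complex Operations: For more advanced processing, it is possible to use Python code during variable assignments (e.g. search and replacement on a variable).

You indicate Python code using the ${@python_code} syntax for the variable assignment:

    SRC_URI = "ftp://ftp.info-zip.org/pub/infozip/src/zip${@d.getVar('PV',1).replace('.', '')}.tgz"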

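- Shell Function Syntax: Write shell functions as if you were writing a shell script when you describe a list of actions to take. You should ensure that your script works with a generic sh and that it does not require any bash or other shell-specific functionality. The same considerations apply to various system utilities (e.g. sed, grep, awk, and so forth) that you might wish to use. If in doubt, you should check with multiple implementations, including those from BusyBox.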
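Returning to the assignment operators described earlier in this list, the following Python sketch is a toy model of the order in which they take effect for a single variable. It is not BitBake code and it ignores overrides and other operators; the function name `evaluate` and the statement format are assumptions made only for illustration.

```python
def evaluate(statements):
    """Return the final value of one variable.

    `statements` is a list of (operator, value) pairs in the order the
    parser would see them. Toy model only, not BitBake's implementation.
    """
    value = None      # None means "not set yet"
    appends = []      # values queued by _append, applied at the end
    prepends = []     # values queued by _prepend, applied at the end

    for op, text in statements:
        if op == "=":
            value = text
        elif op == "?=":
            # Soft assignment: only takes effect if nothing is set yet.
            if value is None:
                value = text
        elif op == "+=":
            # Appends immediately and inserts a single space.
            value = f"{value or ''} {text}"
        elif op == "=+":
            # Prepends immediately and inserts a single space.
            value = f"{text} {value or ''}"
        elif op == "_append":
            appends.append(text)
        elif op == "_prepend":
            prepends.append(text)
        else:
            raise ValueError(f"unsupported operator: {op}")

    # Deferred operators run after every =, ?=, += and =+ and add no space.
    result = value or ""
    for text in prepends:
        result = text + result
    for text in appends:
        result = result + text
    return result


if __name__ == "__main__":
    # _append appears first in the file but is still applied last.
    print(evaluate([("=", "a"), ("_append", " z"), ("+=", "b")]))
```

The deferred lists are what make the explicit leading or trailing space necessary: `_append` and `_prepend` concatenate the strings exactly as written.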

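3.4 Adding a New Machine

Adding a new machine to the Yocto Project is a straightforward process. This section describes how to add machines that are similar to those that the Yocto Project already supports.

Note

Although well within the capabilities of the Yocto Project, adding a totally new architecture might require changes to gcc/glibc and to the site information, which is beyond the scope of this manual.

For a complete example that shows how to add a new machine, see the "Creating a New BSP Layer Using the bitbake-layers Script" section in the Yocto Project Board Support Package (BSP) Developer's Guide.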

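3.4.1 Adding the Machine Configuration File

To add a new machine, you need to add a new machine configuration file to the layer's conf/machine directory. This configuration file provides details about the device you are adding.

The OpenEmbedded build system uses the root name of the machine configuration file to reference the new machine. For example, given a machine configuration file named crownbay.conf, the build system recognizes the machine as "crownbay".

The most important variables you must set in your machine configuration file, or include from a lower-level configuration file, are as follows:

- TARGET_ARCH (e.g. "arm")

- PREFERRED_PROVIDER_virtual/kernel

- MACHINE_FEATURES (e.g. "apm screen wifi")

You might also need these variables:

- SERIAL_CONSOLES (e.g. "115200;ttyS0 115200;ttyS1")

- KERNEL_IMAGETYPE (e.g. "zImage")

- IMAGE_FSTYPES (e.g. "tar.gz jffs2")

You can find full details on these variables in the reference section. You can also use the existing machine .conf files in meta-yocto-bsp/conf/machine/ as a starting point.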
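As a rough illustration of the points above, the following Python sketch derives the machine name from a configuration file path and renders the listed variables as assignment lines. The helper names are hypothetical, the values come from the examples in the text, and the kernel provider value "linux-yocto" is only a placeholder assumption; a real machine file often pulls some of these settings in from included files instead.

```python
from pathlib import Path


def machine_name(conf_path):
    """The machine name is the root name of the configuration file."""
    return Path(conf_path).stem


def render_machine_conf(settings):
    """Render one VAR = "value" line per setting, in the order given."""
    return "\n".join(f'{var} = "{value}"' for var, value in settings.items()) + "\n"


# Example values from the text; the kernel provider is a hypothetical placeholder.
EXAMPLE_SETTINGS = {
    "TARGET_ARCH": "arm",
    "PREFERRED_PROVIDER_virtual/kernel": "linux-yocto",  # assumption
    "MACHINE_FEATURES": "apm screen wifi",
    "SERIAL_CONSOLES": "115200;ttyS0 115200;ttyS1",
    "KERNEL_IMAGETYPE": "zImage",
    "IMAGE_FSTYPES": "tar.gz jffs2",
}

if __name__ == "__main__":
    print(machine_name("conf/machine/crownbay.conf"))
    print(render_machine_conf(EXAMPLE_SETTINGS), end="")
```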

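3.4.2 Adding a Kernel for the Machine

The OpenEmbedded build system needs to be able to build a kernel for the machine. You need to either create a new kernel recipe for this machine or extend an existing kernel recipe. You can find several kernel recipe examples in the meta/recipes-kernel/linux directory that you can use as references.

If you are creating a new kernel recipe, the normal recipe-writing rules apply for setting up SRC_URI. Thus, you need to specify any necessary patches and set S to point at the source code. You need to create a do_configure task that configures the unpacked kernel with a defconfig file. You can do this by using a make defconfig command or, more commonly, by copying in a suitable defconfig file and then running make oldconfig. By making use of inherit kernel and potentially some of the linux-*.inc files, most other functionality is centralized, and the defaults of the class normally work well.

If you are extending an existing kernel recipe, it is usually a matter of adding a suitable defconfig file. The file needs to be added in a location similar to the defconfig files used for other machines in that kernel recipe. A possible way to do this is by listing the file in SRC_URI and adding the machine to the expression in COMPATIBLE_MACHINE:

    COMPATIBLE_MACHINE = '(qemux86|qemumips)'

For more information on defconfig files, see the "Changing the Configuration" section in the Yocto Project Linux Kernel Development Manual.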
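The COMPATIBLE_MACHINE value shown in this section is a regular expression. The Python sketch below shows how such an expression can be tested against a machine name. It assumes the pattern is matched from the start of the name, as Python's `re.match` does; the function name is hypothetical and the real check in the build system may consider more than the bare machine name.

```python
import re


def is_compatible(compatible_machine, machine):
    """Return True if `machine` satisfies the COMPATIBLE_MACHINE pattern.

    An empty or missing pattern is treated as "no restriction".
    Assumption: the pattern is anchored at the start only (re.match).
    """
    if not compatible_machine:
        return True
    return re.match(compatible_machine, machine) is not None


if __name__ == "__main__":
    pattern = "(qemux86|qemumips)"
    for name in ("qemux86", "qemumips", "qemuarm"):
        print(name, is_compatible(pattern, name))
```

Under this assumption a name such as "qemux86-64" would also match the pattern above, because only the beginning of the name is compared. Adding a trailing `$` to the expression would make the match exact.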

3.4.3 Adding a Formfactor Configuration File

A formfactor configuration file provides information about the target hardware for which the image is being built and information that the build system cannot obtain from other sources such as the kernel. Some examples of information contained in a formfactor configuration file include framebuffer orientation, whether or not the system has a keyboard, the positioning of the keyboard in relation to the screen, and the screen resolution.

The build system uses reasonable defaults in most cases. However, if customization is necessary, you need to create a machconfig file in the meta/recipes-bsp/formfactor/files directory. This directory contains directories for specific machines such as qemuarm and qemux86. For information about the settings available and the defaults, see the meta/recipes-bsp/formfactor/files/config file found in the same area.

Following is an example for “qemuarm” machine:

    HAVE_TOUCHSCREEN=1
    HAVE_KEYBOARD=1
    DISPLAY_CAN_ROTATE=0
    DISPLAY_ORIENTATION=0
    #DISPLAY_WIDTH_PIXELS=640
    #DISPLAY_HEIGHT_PIXELS=480
    #DISPLAY_BPP=16
    DISPLAY_DPI=150
    DISPLAY_SUBPIXEL_ORDER=vrgb
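When reviewing a machconfig file, it can help to see which settings are active and which are commented out. The following Python sketch parses the qemuarm example into a dictionary. It assumes the file contains only simple KEY=VALUE lines and # comments; the parser is an illustration and is not part of the build system.

```python
def parse_machconfig(text):
    """Parse KEY=VALUE lines, skipping blank lines and # comments."""
    settings = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if not sep:
            raise ValueError(f"not a KEY=VALUE line: {raw!r}")
        settings[key.strip()] = value.strip()
    return settings


# The qemuarm example from the text.
QEMUARM_MACHCONFIG = """\
HAVE_TOUCHSCREEN=1
HAVE_KEYBOARD=1
DISPLAY_CAN_ROTATE=0
DISPLAY_ORIENTATION=0
#DISPLAY_WIDTH_PIXELS=640
#DISPLAY_HEIGHT_PIXELS=480
#DISPLAY_BPP=16
DISPLAY_DPI=150
DISPLAY_SUBPIXEL_ORDER=vrgb
"""

if __name__ == "__main__":
    for key, value in parse_machconfig(QEMUARM_MACHCONFIG).items():
        print(f"{key} -> {value}")
```

The commented-out entries do not appear in the result, so for those settings the build system falls back to its defaults.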

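3.5 Upgrading Recipes

Over time, upstream developers publish new versions of the software built by layer recipes. It is recommended that you keep recipes up to date with upstream version releases.

While several methods exist for upgrading a recipe, you might consider checking the upgrade status of a recipe first. You can do so using the devtool check-upgrade-status command. See the "Checking on the Upgrade Status of a Recipe" section in the Yocto Project Reference Manual for more information.

The remainder of this section describes three ways to upgrade a recipe. You can use the Automated Upgrade Helper (AUH) to set up automatic version upgrades. Alternatively, you can use devtool upgrade to set up semi-automatic version upgrades. Finally, you can manually upgrade a recipe by editing the recipe itself.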

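3.5.1 Using the Auto Upgrade Helper (AUH)

The AUH utility works in conjunction with the OpenEmbedded build system to automatically generate upgrades for recipes based on new versions being published upstream. Use AUH when you want to create a service that performs the upgrades automatically and optionally sends you an email with the results.

AUH allows you to update several recipes with a single use. You can also optionally perform build and integration tests using images, with emails of the results optionally sent to recipe maintainers. Finally, AUH creates Git commits with appropriate commit messages for the changes made to recipes.

Note

Conditions do exist when you should not use AUH to upgrade recipes and should instead use either devtool upgrade or upgrade your recipes manually:

- When AUH cannot complete the upgrade sequence. This situation usually results because custom patches carried by the recipe cannot be automatically rebased to the new version. In this case, devtool upgrade allows you to manually resolve conflicts.

- When, for any reason, you want fuller control over the upgrade process. For example, when you want special arrangements for testing.

The following steps describe how to set up the AUH utility: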

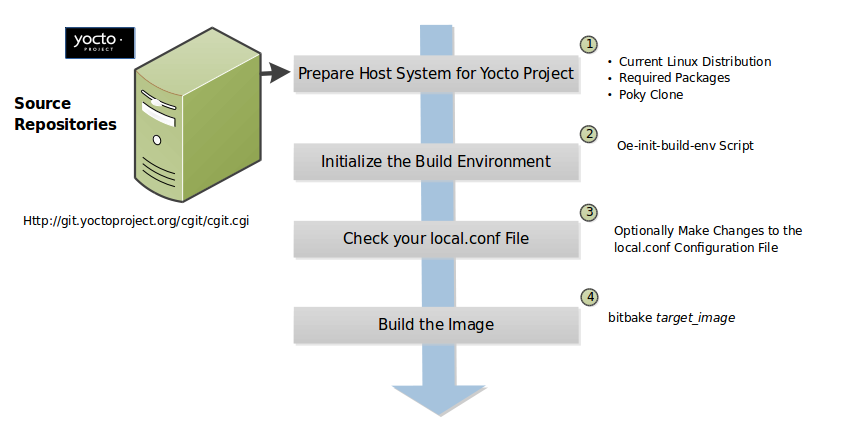

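Be Sure the Development Host is Set Up: You need to be sure that your development host is set up to use the Yocto Project. For information on how to set up your host, see the "Preparing the Build Host" section.

Make Sure Git is Configured: AUH uses Git to save upgrades, so you must have a Git user name and email configured. The following command shows your configuration:

    $ git config --list

If you do not have the user name and email configured, you can use the following commands to do so:

    $ git config --global user.name some_name
    $ git config --global user.email username@domain.com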

Clone the AUH Repository: The following command uses Git to create a local copy of the repository on your system:

    $ git clone git://git.yoctoproject.org/auto-upgrade-helper
    Cloning into 'auto-upgrade-helper'...
    remote: Counting objects: 768, done.
    remote: Compressing objects: 100% (300/300), done.
    remote: Total 768 (delta 499), reused 703 (delta 434)
    Receiving objects: 100% (768/768), 191.47 KiB | 98.00 KiB/s, done.
    Resolving deltas: 100% (499/499), done.
    Checking connectivity... done.

AUH is not part of the OpenEmbedded-Core (OE-Core) or Poky repositories.

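Create a Dedicated Build Directory: Run the oe-init-build-env script to create a fresh build directory that you use exclusively for running the AUH utility:

    $ cd ~/poky
    $ source oe-init-build-env your_AUH_build_directory

Re-using an existing build directory is not recommended, because its existing settings could cause AUH to fail or behave in unexpected ways.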

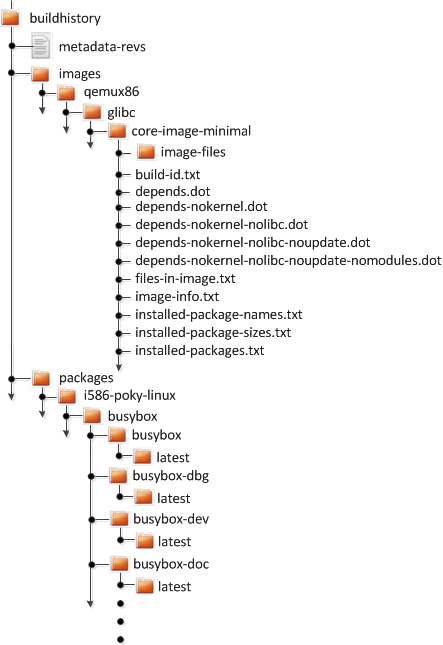

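Make Configurations in Your Local Configuration File: Modify the local.conf file in the build directory you just created for AUH:

- If you want to enable Build History, which is optional, you need the following lines in the conf/local.conf file:

    INHERIT =+ "buildhistory"
    BUILDHISTORY_COMMIT = "1"

With this configuration and a successful upgrade, a build history "diff" file appears in the upgrade-helper/work/recipe/buildhistory-diff.txt file found in your build directory.

- If you want to enable testing through the testimage class, which is optional, you need the following line in your conf/local.conf file:

    INHERIT += "testimage"

Note

If your distro does not enable ptest by default, which Poky does, you need the following in your local.conf file:

    DISTRO_FEATURES_append = " ptest"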

Optionally Start a vncserver: If you are running on a server without an X11 session, you need to start a vncserver:

    $ vncserver :1
    $ export DISPLAY=:1

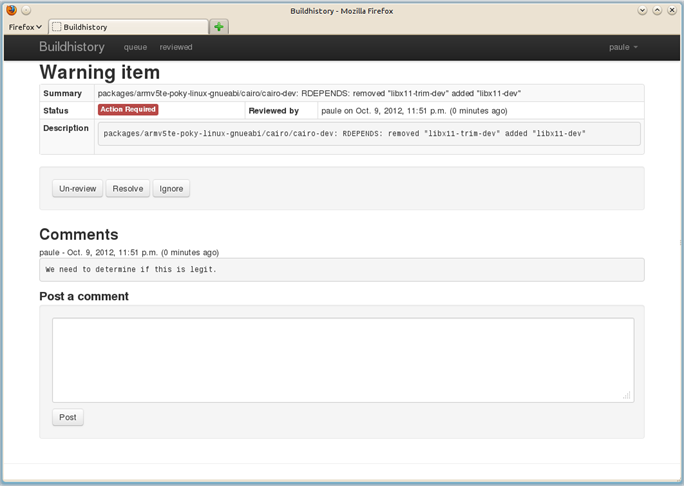

Create and Edit an AUH Configuration File: You need to have the upgrade-helper/upgrade-helper.conf configuration file in your build directory. You can find a sample configuration file in the AUH source repository.

Read through the sample file and make configurations as needed. For example, if you enabled build history in your local.conf as described earlier, you must enable it in upgrade-helper.conf.

Also, if you are using the default maintainers.inc file supplied with Poky and located in meta-yocto, and you do not set a "maintainers_whitelist" or "global_maintainer_override" in the upgrade-helper.conf configuration, and you specify "-e all" on the AUH command line, the utility automatically sends out emails to all the default maintainers. Please avoid this.

This next set of examples describes how to use the AUH:

Upgrading a Specific Recipe: To upgrade a specific recipe, use the following form:

$ upgrade-helper.py recipe_name

For example, this command upgrades the xmodmap recipe:

$ upgrade-helper.py xmodmap

Upgrading a Specific Recipe to a Particular Version: To upgrade a specific recipe to a particular version, use the following form:

$ upgrade-helper.py recipe_name -t version

For example, this command upgrades the xmodmap recipe to version 1.2.3:

$ upgrade-helper.py xmodmap -t 1.2.3

Upgrading All Recipes to the Latest Versions and Suppressing Email Notifications: To upgrade all recipes to their most recent versions and suppress the email notifications, use the following command:

$ upgrade-helper.py all

Upgrading All Recipes to the Latest Versions and Sending Email Notifications: To upgrade all recipes to their most recent versions and send email messages to maintainers for each attempted recipe as well as a status email, use the following command:

$ upgrade-helper.py -e all

Once you have run the AUH utility, you can find the results in the AUH build directory:

${BUILDDIR}/upgrade-helper/timestamp

The AUH utility also creates recipe update commits from successful upgrade attempts in the layer tree.

You can easily set up to run the AUH utility on a regular basis by using a cron job. See the weeklyjob.sh file distributed with the utility for an example.
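The four invocation forms above differ only in their arguments, which the following Python sketch makes explicit by building the argument list for each case. The function name and parameters are hypothetical; only the `upgrade-helper.py` script name and the `-t`, `-e` and `all` arguments come from the examples in this section. The sketch builds commands but does not run them.

```python
import shlex


def auh_command(target, to_version=None, send_email=False):
    """Build the argument list for one upgrade-helper.py invocation.

    target      recipe name, or "all" for every recipe
    to_version  optional version passed with -t
    send_email  add -e so that maintainers are emailed
    """
    argv = ["upgrade-helper.py"]
    if send_email:
        argv.append("-e")
    argv.append(target)
    if to_version is not None:
        argv += ["-t", to_version]
    return argv


if __name__ == "__main__":
    examples = [
        auh_command("xmodmap"),
        auh_command("xmodmap", to_version="1.2.3"),
        auh_command("all"),
        auh_command("all", send_email=True),
    ]
    for argv in examples:
        print(shlex.join(argv))
```

Keeping `send_email` off by default mirrors the earlier warning about unintentionally emailing all the default maintainers.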

3.5.2 Using devtool upgrade

As mentioned earlier, an alternative method for upgrading recipes to newer versions is to use devtool upgrade. You can read about devtool upgrade in general in the “Use devtool upgrade to Create a Version of the Recipe that Supports a Newer Version of the Software” section in the Yocto Project Application Development and the Extensible Software Development Kit (eSDK) Manual.

To see all the command-line options available with devtool upgrade, use the following help command:

$ devtool upgrade -h

If you want to find out what version a recipe is currently at upstream without any attempt to upgrade your local version of the recipe, you can use the following command:

$ devtool latest-version recipe_name

As mentioned in the previous section describing AUH, devtool upgrade works in a less-automated manner than AUH. Specifically, devtool upgrade only works on a single recipe that you name on the command line, cannot perform build and integration testing using images, and does not automatically generate commits for changes in the source tree. Despite all these “limitations”, devtool upgrade updates the recipe file to the new upstream version and attempts to rebase custom patches contained by the recipe as needed.

Note

AUH uses much of devtool upgrade behind the scenes, making AUH somewhat of a "wrapper" application for devtool upgrade.

A typical scenario involves having used Git to clone an upstream repository that you use during build operations. Because you have built the recipe in the past, the layer is likely already added to your configuration. If for some reason the layer is not added, you can add it easily using the bitbake-layers script. For example, suppose you use the nano.bb recipe from the meta-oe layer in the meta-openembedded repository. For this example, assume that the layer has been cloned into the following area:

/home/scottrif/meta-openembedded

The following command from your Build Directory adds the layer to your build configuration (i.e. ${BUILDDIR}/conf/bblayers.conf):

    $ bitbake-layers add-layer /home/scottrif/meta-openembedded/meta-oe
    NOTE: Starting bitbake server...
    Parsing recipes: 100% |##########################################| Time: 0:00:55
    Parsing of 1431 .bb files complete (0 cached, 1431 parsed). 2040 targets, 56 skipped, 0 masked, 0 errors.
    Removing 12 recipes from the x86_64 sysroot: 100% |##############| Time: 0:00:00
    Removing 1 recipes from the x86_64_i586 sysroot: 100% |##########| Time: 0:00:00
    Removing 5 recipes from the i586 sysroot: 100% |#################| Time: 0:00:00
    Removing 5 recipes from the qemux86 sysroot: 100% |##############| Time: 0:00:00

For this example, assume that the nano.bb recipe that is upstream has a 2.9.3 version number. However, the version in the local repository is 2.7.4. The following command from your build directory automatically upgrades the recipe for you:

Note

Using the -V option is not necessary. Omitting the version number causes devtool upgrade to upgrade the recipe to the most recent version.

    $ devtool upgrade nano -V 2.9.3
    NOTE: Starting bitbake server...
    NOTE: Creating workspace layer in /home/scottrif/poky/build/workspace
    Parsing recipes: 100% |##########################################| Time: 0:00:46
    Parsing of 1431 .bb files complete (0 cached, 1431 parsed). 2040 targets, 56 skipped, 0 masked, 0 errors.
    NOTE: Extracting current version source...
    NOTE: Resolving any missing task queue dependencies...
    NOTE: Executing SetScene Tasks
    NOTE: Executing RunQueue Tasks
    NOTE: Tasks Summary: Attempted 74 tasks of which 72 didn't need to be rerun and all succeeded.
    Adding changed files: 100% |#####################################| Time: 0:00:00
    NOTE: Upgraded source extracted to /home/scottrif/poky/build/workspace/sources/nano
    NOTE: New recipe is /home/scottrif/poky/build/workspace/recipes/nano/nano_2.9.3.bb
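The decision behind this example (local version 2.7.4, upstream version 2.9.3) can be sketched in Python as follows. The helper names are hypothetical, and the comparison is deliberately naive: it assumes purely numeric, dot-separated versions and is not how devtool itself compares versions. The sketch only builds the command line and does not execute it.

```python
def parse_version(text):
    """Turn "2.9.3" into (2, 9, 3). Assumes numeric dotted versions only."""
    return tuple(int(part) for part in text.split("."))


def upgrade_command(recipe, local, upstream):
    """Return the devtool argument list, or None if no upgrade is needed."""
    if parse_version(upstream) <= parse_version(local):
        return None
    return ["devtool", "upgrade", recipe, "-V", upstream]


if __name__ == "__main__":
    print(upgrade_command("nano", "2.7.4", "2.9.3"))
    print(upgrade_command("nano", "2.9.3", "2.9.3"))
```

Comparing tuples of integers rather than raw strings matters for versions such as 2.10.0, which sorts before 2.9.3 as text but after it numerically.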

Continuing with this example, you can use devtool build to build the newly upgraded recipe: