Environment

```
kubectl version
Client Version: version.Info{Major:"1", Minor:"16", GitVersion:"v1.16.9", GitCommit:"a17149e1a189050796ced469dbd78d380f2ed5ef", GitTreeState:"clean", BuildDate:"2020-04-16T11:44:51Z", GoVersion:"go1.13.9", Compiler:"gc", Platform:"linux/amd64"}
Server Version: version.Info{Major:"1", Minor:"16", GitVersion:"v1.16.9", GitCommit:"a17149e1a189050796ced469dbd78d380f2ed5ef", GitTreeState:"clean", BuildDate:"2020-04-16T11:36:15Z", GoVersion:"go1.13.9", Compiler:"gc", Platform:"linux/amd64"}
```

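Terminology

- Endpoint: the address Prometheus actually requests when scraping a target. It is composed of `__scheme__`, `__address__` and `__metrics_path__`, i.e. `<__scheme__>://<__address__><__metrics_path__>`. Most label rewrites therefore rewrite one or all of these labels; `__address__` and `__metrics_path__` are the most frequently rewritten.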
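As an illustration, the way these labels combine into the URL that is actually requested can be sketched in Python. `scrape_url` is a hypothetical helper, not a Prometheus API; it assumes Prometheus' defaults (`http` and `/metrics`) when a label is absent and turns `__param_<name>` labels into query parameters.

```python
from urllib.parse import urlencode


def scrape_url(labels: dict) -> str:
    """Assemble the scrape URL from a target's labels after relabeling (sketch)."""
    scheme = labels.get("__scheme__", "http")          # Prometheus default scheme
    address = labels["__address__"]                    # host:port
    path = labels.get("__metrics_path__", "/metrics")  # Prometheus default path
    # __param_<name> labels become URL query parameters.
    params = {k[len("__param_"):]: v for k, v in labels.items() if k.startswith("__param_")}
    url = f"{scheme}://{address}{path}"
    if params:
        url += "?" + urlencode(params)
    return url


print(scrape_url({"__scheme__": "https",
                  "__address__": "172.23.16.106:2379",
                  "__metrics_path__": "/metrics"}))
# https://172.23.16.106:2379/metrics
```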

How it works

Each cluster component exposes its own metrics at a /metrics endpoint.

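Preparing for monitoring

In a Kubernetes cluster set up with kubeadm, etcd runs as a static Pod, so by default there is no Service or matching Endpoints object through which Prometheus inside the cluster can reach it. The first step is therefore to create a Service (and, through it, an Endpoints object) that gives Prometheus an entry point.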

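Understanding static Pods

Static Pods are managed directly by the kubelet daemon on a specific node, without the API Server observing them. Unlike Pods managed by the control plane (for example, through a Deployment), static Pods are watched by the kubelet, which restarts them if they fail.

A static Pod is always bound to the kubelet on one specific node. The kubelet automatically tries to create a mirror Pod on the API Server for each static Pod, so the static Pod is visible on the API Server but cannot be managed from there.

See the official Kubernetes documentation for details.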
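Because the API Server only holds a read-only mirror, it can be useful to tell mirror Pods apart from ordinary ones. A minimal Python sketch, assuming the Pod object is available as a dict (for example via `json.loads` on the output of `kubectl get pod <name> -n kube-system -o json`): the kubelet marks each mirror Pod with the `kubernetes.io/config.mirror` annotation. The sample Pod objects below are hypothetical and heavily trimmed.

```python
MIRROR_ANNOTATION = "kubernetes.io/config.mirror"


def is_mirror_pod(pod: dict) -> bool:
    """True if a Pod object (as returned by the API Server) mirrors a static Pod."""
    annotations = (pod.get("metadata") or {}).get("annotations") or {}
    return MIRROR_ANNOTATION in annotations


# Hypothetical, trimmed-down Pod objects for illustration only.
etcd_mirror = {"metadata": {"name": "etcd-172.23.16.106", "namespace": "kube-system",
                            "annotations": {MIRROR_ANNOTATION: "<hash>"}}}
regular_pod = {"metadata": {"name": "coredns-abc", "namespace": "kube-system"}}

print(is_mirror_pod(etcd_mirror))  # True
print(is_mirror_pod(regular_pod))  # False
```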

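Static Pods can be created in two ways:

- Local manifest files: the kubelet's `--pod-manifest-path` flag (or `staticPodPath` in its config file) names a directory (in kubeadm clusters, /etc/kubernetes/manifests). The kubelet scans this directory periodically and creates or updates Pods from the .yaml or .json files it finds there:

  - If you put a Pod's YAML manifest into this directory, the kubelet creates the Pod on this node once it picks up the file;
  - If you change the YAML file, the kubelet detects the change and updates the Pod;
  - If you delete the YAML file, the kubelet deletes the Pod;
  - Because the API Server cannot manage static Pods directly, they cannot be updated or deleted with kubectl; you edit or delete the manifest file instead.

- HTTP manifest URL: with `--manifest-url`, the kubelet periodically downloads the manifest from the URL; everything else works as in the first method.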
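The local-file behavior listed above (create, update, delete driven by the directory contents) can be modeled as a diff between two directory scans. This is an illustrative Python sketch, not the kubelet's actual code: `snapshot` and `plan` are hypothetical names, content hashes stand in for however the kubelet really detects changes, and only .yaml and .json files are considered, as described above.

```python
import hashlib
from pathlib import Path
from typing import Dict, List


def snapshot(manifest_dir: str) -> Dict[str, str]:
    """Map each .yaml/.json file in the directory to a hash of its content."""
    result = {}
    for path in sorted(Path(manifest_dir).iterdir()):
        if path.is_file() and path.suffix in (".yaml", ".json"):
            result[path.name] = hashlib.sha256(path.read_bytes()).hexdigest()
    return result


def plan(old: Dict[str, str], new: Dict[str, str]) -> Dict[str, List[str]]:
    """Compare two scans and decide which static Pods to create, update or delete."""
    return {
        "create": sorted(set(new) - set(old)),                                 # file added
        "update": sorted(n for n in set(new) & set(old) if new[n] != old[n]),  # file changed
        "delete": sorted(set(old) - set(new)),                                 # file removed
    }

# Usage idea: call snapshot("/etc/kubernetes/manifests") periodically and pass
# consecutive results to plan().
```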

Local manifest files in practice

Check the flags of the kubelet process:

```
ps -ef | grep kubelet
root 561 1 6 Nov26 ? 10:05:57 /usr/bin/kubelet --bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.conf --kubeconfig=/etc/kubernetes/kubelet.conf --config=/var/lib/kubelet/config.yaml --cgroup-driver=systemd --hostname-override=172.23.16.106 --network-plugin=cni --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.2 --root-dir=/var/lib/kubelet
```

Look at the file passed with `--config=/var/lib/kubelet/config.yaml`:

```
more /var/lib/kubelet/config.yaml
---
...
staticPodPath: /etc/kubernetes/manifests
streamingConnectionIdleTimeout: 4h0m0s
syncFrequency: 1m0s
tlsCertFile: /var/lib/kubelet/pki/kubelet.crt
tlsPrivateKeyFile: /var/lib/kubelet/pki/kubelet.key
volumeStatsAggPeriod: 1m0s
```

List the existing static Pod manifests:

```
ll /etc/kubernetes/manifests
total 20
-rw-r--r-- 1 root root 2354 Nov  5 18:01 etcd-external.yaml
-rw------- 1 root root 3059 Nov  5 18:03 kube-apiserver.yaml
-rw------- 1 root root 2997 Nov  5 18:03 kube-controller-manager.yaml
-rw------- 1 root root 1325 Nov  5 18:03 kube-scheduler.yaml
-rw-r--r-- 1 root root 1157 Nov  5 18:00 lb-kube-apiserver.yaml
```
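The two lookups above (read `staticPodPath` from the kubelet config, then list that directory) can be scripted. A rough Python sketch: `static_pod_path` and `static_pod_manifests` are hypothetical names, and the config is scanned line by line rather than parsed as YAML, which assumes `staticPodPath` appears unindented on its own line, as in the file shown above.

```python
from pathlib import Path
from typing import List, Optional


def static_pod_path(config_text: str) -> Optional[str]:
    """Return the top-level staticPodPath value from kubelet config text.

    Naive line scan, not a YAML parser.
    """
    for line in config_text.splitlines():
        if line.startswith("staticPodPath:"):
            value = line.split(":", 1)[1].strip()
            return value or None
    return None


def static_pod_manifests(config_file: str = "/var/lib/kubelet/config.yaml") -> List[str]:
    """List the .yaml/.json manifests in the directory named by staticPodPath."""
    directory = static_pod_path(Path(config_file).read_text())
    if directory is None:
        return []
    return sorted(p.name for p in Path(directory).iterdir()
                  if p.is_file() and p.suffix in (".yaml", ".json"))
```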

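How are they exposed? Except for kube-apiserver (Kubernetes automatically creates the Service `kubernetes` in the default namespace, which points at the API Server), every static Pod needs a manually created Service. Take etcd-external.yaml as an example: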

```
cat etcd-external.yaml
---
apiVersion: v1
kind: Pod
metadata:
  creationTimestamp: null
  labels:
    component: etcd
    tier: control-plane
  name: etcd
  namespace: kube-system
spec:
  containers:
  - command:
    - etcd
    - --name=etcd-172.23.16.106
    - --advertise-client-urls=https://172.23.16.106:2379
    - --trusted-ca-file=/etc/kubernetes/pki/etcd/ca.crt
    - --cert-file=/etc/kubernetes/pki/etcd/server.crt
    - --key-file=/etc/kubernetes/pki/etcd/server.key
    - --peer-trusted-ca-file=/etc/kubernetes/pki/etcd/ca.crt
    - --peer-cert-file=/etc/kubernetes/pki/etcd/peer.crt
    - --peer-key-file=/etc/kubernetes/pki/etcd/peer.key
    - --peer-client-cert-auth=true
    - --listen-peer-urls=https://172.23.16.106:2380
    - --listen-metrics-urls=http://127.0.0.1:2381
    - --listen-client-urls=https://127.0.0.1:2379,https://172.23.16.106:2379
    - --initial-cluster-state=existing
    - --initial-advertise-peer-urls=https://172.23.16.106:2380
    - --initial-cluster=etcd-172.23.16.106=https://172.23.16.106:2380
    - --initial-cluster-token=etcd-cluster-token
    - --client-cert-auth=true
    - --snapshot-count=10000
    - --data-dir=/var/lib/etcd
    # Compacting once per hour is recommended; it greatly helps keep the cluster stable
    - --auto-compaction-retention=1
    # Maximum size in bytes of an etcd Raft request; the official recommendation is 10M
    - --max-request-bytes=10485760
    # etcd backend database size quota; the default is 2G, the official recommendation is 8G
    - --quota-backend-bytes=8589934592
    image: registry.aliyuncs.com/google_containers/etcd:3.4.3-0
    imagePullPolicy: IfNotPresent
    livenessProbe:
      httpGet:
        host: 127.0.0.1
        path: /health
        port: 2381
        scheme: HTTP
      failureThreshold: 8
      initialDelaySeconds: 15
      timeoutSeconds: 15
    name: etcd
    resources: {}
    volumeMounts:
    - mountPath: /var/lib/etcd
      name: etcd-data
    - mountPath: /etc/kubernetes/pki/etcd
      name: etcd-certs
    - mountPath: /etc/localtime
      name: localtime
      readOnly: true
  hostNetwork: true
  priorityClassName: system-cluster-critical
  volumes:
  - hostPath:
      path: /var/lib/etcd
      type: DirectoryOrCreate
    name: etcd-data
  - hostPath:
      path: /etc/kubernetes/pki/etcd
      type: DirectoryOrCreate
    name: etcd-certs
  - hostPath:
      path: /etc/localtime
      type: File
    name: localtime
status: {}
```

The manifest shows the Pod's labels (`component: etcd`, `tier: control-plane`), which a Service can select on.
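Service selectors are equality-based: every key/value pair in the selector must be present in the Pod's labels. A small Python sketch of that rule (`selector_matches` is a hypothetical helper); the etcd labels come from the manifest above, while the kube-scheduler labels are an assumption based on kubeadm's usual `component`/`tier` pair. It shows why selecting on `component` rather than `tier` keeps the Service etcd-only.

```python
def selector_matches(selector: dict, pod_labels: dict) -> bool:
    """Equality-based Service selector check.

    Every key/value pair in the selector must appear in the Pod's labels;
    extra Pod labels are ignored. An empty selector is treated as matching
    nothing, since a Service without a selector gets no automatically
    managed Endpoints.
    """
    if not selector:
        return False
    return all(pod_labels.get(key) == value for key, value in selector.items())


etcd = {"component": "etcd", "tier": "control-plane"}
scheduler = {"component": "kube-scheduler", "tier": "control-plane"}  # assumed labels

print(selector_matches({"component": "etcd"}, etcd))           # True
print(selector_matches({"component": "etcd"}, scheduler))      # False
print(selector_matches({"tier": "control-plane"}, scheduler))  # True: too broad for etcd only
```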

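Note also that etcd requires mutual TLS. On the client port 2379 this follows from `--client-cert-auth=true` together with `--trusted-ca-file`; the `--peer-*` flags (including `--peer-client-cert-auth=true`) impose the same requirement on peer traffic on port 2380:

```
- --trusted-ca-file=/etc/kubernetes/pki/etcd/ca.crt
- --cert-file=/etc/kubernetes/pki/etcd/server.crt
- --key-file=/etc/kubernetes/pki/etcd/server.key
- --client-cert-auth=true
- --peer-trusted-ca-file=/etc/kubernetes/pki/etcd/ca.crt
- --peer-cert-file=/etc/kubernetes/pki/etcd/peer.crt
- --peer-key-file=/etc/kubernetes/pki/etcd/peer.key
- --peer-client-cert-auth=true
```

A request to the endpoint must therefore present a client certificate and key signed by the etcd CA. This article uses the peer pair, i.e. `--peer-cert-file=/etc/kubernetes/pki/etcd/peer.crt` and `--peer-key-file=/etc/kubernetes/pki/etcd/peer.key`. (The plain-HTTP metrics listener `--listen-metrics-urls=http://127.0.0.1:2381` is bound to 127.0.0.1, so it cannot be scraped from other hosts; the Service below therefore targets port 2379.)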

Creating the Service

- Commands:

```shell
mkdir -p /u01/repo/exporter
cd /u01/repo/exporter
vim etcd.yaml
kubectl apply -f etcd.yaml
```

- YAML manifest (etcd.yaml):

```yaml
apiVersion: v1
kind: Service
metadata:
  namespace: kube-system
  name: etcd
  labels:
    component: etcd
  annotations:
    prometheus.io/scrape: "true"
spec:
  selector:
    component: etcd
  type: ClusterIP
  clusterIP: None
  ports:
  - name: http-metrics
    port: 2379
    targetPort: 2379
    protocol: TCP
```
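Prometheus' `endpoints` role turns each address/port pair behind this Service into a target and attaches meta labels such as `__meta_kubernetes_endpoint_port_name`, `__meta_kubernetes_service_label_<name>` and `__meta_kubernetes_service_annotation_<name>` (invalid characters replaced by `_`). The Python model below is a simplified sketch under stated assumptions: the Service and Pod are plain dicts, Pods are matched directly instead of reading the Endpoints object, readiness and Pod-level meta labels are ignored, and `discovered_targets` is a hypothetical name. Because etcd runs with `hostNetwork: true`, the Pod IP equals the node IP.

```python
import re


def sanitize(name: str) -> str:
    """Replace characters that are invalid in Prometheus label names with '_'."""
    return re.sub(r"[^a-zA-Z0-9_]", "_", name)


def discovered_targets(service: dict, pods: list) -> list:
    """Simplified model of the label sets the endpoints role yields for one Service."""
    meta, spec = service["metadata"], service["spec"]
    selector = spec.get("selector") or {}
    targets = []
    for pod in pods:
        labels = pod["metadata"].get("labels", {})
        if not selector or any(labels.get(k) != v for k, v in selector.items()):
            continue  # Pod not selected by the Service
        for port in spec["ports"]:
            target = {
                "__address__": f'{pod["status"]["podIP"]}:{port["targetPort"]}',
                "__meta_kubernetes_namespace": meta["namespace"],
                "__meta_kubernetes_service_name": meta["name"],
                "__meta_kubernetes_endpoint_port_name": port.get("name", ""),
            }
            for key, value in meta.get("labels", {}).items():
                target["__meta_kubernetes_service_label_" + sanitize(key)] = value
            for key, value in meta.get("annotations", {}).items():
                target["__meta_kubernetes_service_annotation_" + sanitize(key)] = value
            targets.append(target)
    return targets


service = {
    "metadata": {"namespace": "kube-system", "name": "etcd",
                 "labels": {"component": "etcd"},
                 "annotations": {"prometheus.io/scrape": "true"}},
    "spec": {"selector": {"component": "etcd"},
             "ports": [{"name": "http-metrics", "port": 2379, "targetPort": 2379}]},
}
# hostNetwork: true, so the mirror Pod's IP is the node IP.
etcd_pod = {"metadata": {"labels": {"component": "etcd", "tier": "control-plane"}},
            "status": {"podIP": "172.23.16.106"}}

for t in discovered_targets(service, [etcd_pod]):
    print(t["__address__"], t["__meta_kubernetes_service_annotation_prometheus_io_scrape"])
# 172.23.16.106:2379 true
```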

Verifying access

```shell
cd /etc/kubernetes/pki/etcd
# $Token holds the bearer token of the hops-admin Service Account (literal value omitted)

## Method 1: -k skips verification of the server certificate
curl -k --cert ./peer.crt --key ./peer.key https://172.23.16.106:2379/metrics --header "Authorization: Bearer $Token"

## Method 2: --cacert specifies the CA certificate used to verify the server
curl --cacert ./ca.crt --cert ./peer.crt --key ./peer.key https://172.23.16.106:2379/metrics --header "Authorization: Bearer $Token"

## Method 3: tested to work even without the token
curl --cacert ./ca.crt --cert ./peer.crt --key ./peer.key https://172.23.16.106:2379/metrics
```

Output (truncated):

```
...
# HELP etcd_cluster_version Which version is running. 1 for 'cluster_version' label with current cluster version
# TYPE etcd_cluster_version gauge
etcd_cluster_version{cluster_version="3.4"} 1
# HELP etcd_debugging_disk_backend_commit_rebalance_duration_seconds The latency distributions of commit.rebalance called by bboltdb backend.
# TYPE etcd_debugging_disk_backend_commit_rebalance_duration_seconds histogram
etcd_debugging_disk_backend_commit_rebalance_duration_seconds_bucket{le="0.001"} 247054
etcd_debugging_disk_backend_commit_rebalance_duration_seconds_bucket{le="0.002"} 247065
etcd_debugging_disk_backend_commit_rebalance_duration_seconds_bucket{le="0.004"} 247071
etcd_debugging_disk_backend_commit_rebalance_duration_seconds_bucket{le="0.008"} 247072
etcd_debugging_disk_backend_commit_rebalance_duration_seconds_bucket{le="0.016"} 247075
```
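To check such output in a script rather than by eye, the sample lines of the Prometheus text format can be parsed with a couple of regular expressions. This is a simplified Python sketch, not a complete implementation of the format: `parse_samples` is a hypothetical name, `# HELP`/`# TYPE` comments and unparseable lines (such as the `...` elision) are skipped, an optional trailing timestamp is accepted but discarded, and escaped characters in label values are not unescaped.

```python
import re
from typing import Dict, List, Tuple

# metric name, optional {labels}, value, optional timestamp
_SAMPLE = re.compile(r'^([a-zA-Z_:][a-zA-Z0-9_:]*)(?:\{(.*)\})?\s+(\S+)(?:\s+(-?\d+))?$')
_LABEL = re.compile(r'([a-zA-Z_][a-zA-Z0-9_]*)="((?:[^"\\]|\\.)*)"')


def parse_samples(text: str) -> List[Tuple[str, Dict[str, str], float]]:
    """Return (name, labels, value) for each sample line in the text."""
    samples = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # blank line or HELP/TYPE comment
        match = _SAMPLE.match(line)
        if match is None:
            continue
        name, raw_labels, value, _timestamp = match.groups()
        try:
            number = float(value)  # also handles +Inf, -Inf, NaN
        except ValueError:
            continue
        samples.append((name, dict(_LABEL.findall(raw_labels or "")), number))
    return samples
```

Fed the curl output above (saved to a file, for instance), the first entry would be `("etcd_cluster_version", {"cluster_version": "3.4"}, 1.0)`.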

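Note: all curl options are listed by `curl --help`.

- `-k`: skip verification of the server certificate (`-k, --insecure  Allow connections to SSL sites without certs (H)`)
- `--cacert ./ca.crt`: the root certificate used to verify the server certificate (`--cacert FILE  CA certificate to verify peer against (SSL)`)
- `--cert ./peer.crt`: the client certificate (`-E, --cert CERT[:PASSWD]  Client certificate file and password (SSL)`)
- `--key ./peer.key`: the client certificate's private key (`--key KEY  Private key file name (SSL/SSH)`)
- `--header "Authorization: Bearer $Token"`: the Service Account authentication token. Normally its permissions would follow the principle of least privilege, but in this example the final test shows it can be omitted altogether: etcd authenticates clients by certificate and does not check Kubernetes Service Account tokens. If a token is needed and you are unsure which minimal permission set to grant, binding the cluster-admin role to the Service Account (hops-admin in this example) works.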
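The same four TLS choices can be reproduced with Python's standard `ssl` module, for example for a scripted check from a host that holds the certificates. A sketch only: `etcd_ssl_context` and `fetch_metrics` are hypothetical helper names, and no Authorization header is sent, since the test above showed the token is not required.

```python
import ssl
import urllib.request
from typing import Optional


def etcd_ssl_context(cafile: Optional[str] = None, certfile: Optional[str] = None,
                     keyfile: Optional[str] = None, insecure: bool = False) -> ssl.SSLContext:
    """Build a client-side SSL context mirroring the curl flags:

    insecure=True      ~ -k / --insecure (skip server certificate checks)
    cafile             ~ --cacert (CA used to verify the server certificate)
    certfile, keyfile  ~ --cert / --key (client certificate for mutual TLS)
    """
    ctx = ssl.create_default_context(cafile=cafile)
    if insecure:
        ctx.check_hostname = False  # must be turned off before CERT_NONE is allowed
        ctx.verify_mode = ssl.CERT_NONE
    if certfile:
        ctx.load_cert_chain(certfile=certfile, keyfile=keyfile)
    return ctx


def fetch_metrics(url: str, ctx: ssl.SSLContext, timeout: float = 10.0) -> str:
    """GET the metrics page over HTTPS using the given context."""
    with urllib.request.urlopen(url, context=ctx, timeout=timeout) as resp:
        return resp.read().decode("utf-8")

# Usage on a host with the etcd certificates (paths and address from the curl example):
# ctx = etcd_ssl_context(cafile="/etc/kubernetes/pki/etcd/ca.crt",
#                        certfile="/etc/kubernetes/pki/etcd/peer.crt",
#                        keyfile="/etc/kubernetes/pki/etcd/peer.key")
# print(fetch_metrics("https://172.23.16.106:2379/metrics", ctx)[:500])
```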

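Core configuration items

Scrape job configuration

| Job name | Metrics path | Scheme | Request parameters | Authentication and TLS | Notes |
|---|---|---|---|---|---|
| K8S-ETCD | /metrics | https | - | Token authentication; CA certificate, client certificate and client key (mutual TLS); server certificate verification disabled | The actual scrape address must be accessed over HTTPS |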

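Kubernetes service discovery configuration

| Role | API Server address | Namespaces | Authentication and TLS | Notes |
|---|---|---|---|---|
| endpoints | https://172.23.16.106:8443 | default, kube-system | Token authentication; server certificate verification disabled | The API Server address here is the load balancer address. Namespaces would normally be left empty, meaning all namespaces |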

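Relabeling configuration

K8S-ETCD

The discovered `__address__` is already the etcd endpoint's IP and port: the etcd static Pod runs with `hostNetwork: true`, so its Pod IP is the node IP. No rewrite is needed here; rewriting the target to go through apiserver proxy URLs may in fact make it unreachable.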

| No. | Action | Source labels | Separator | Regex | Hash modulus | Target label | Replacement |
|---|---|---|---|---|---|---|---|
| 1 | keep | [`__meta_kubernetes_namespace`, `__meta_kubernetes_service_name`, `__meta_kubernetes_endpoint_port_name`] | ; | kube-system;etcd;http-metrics | | | |
| 2 | labelmap | | | `__meta_kubernetes_service_label_(.+)` | | | $1 |
| 3 | replace | [`__meta_kubernetes_namespace`] | ; | (.*) | | kubernetes_namespace | $1 |
| 4 | replace | [`__meta_kubernetes_service_name`] | ; | (.*) | | kubernetes_name | $1 |
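The effect of these four rules on the etcd target can be traced with a simplified Python model of Prometheus relabeling. It is a sketch, not Prometheus' implementation: it covers only the `keep`, `labelmap` and `replace` actions, fully anchors each regex, joins source values with the separator, deletes the target label when a `replace` yields an empty string, and, as Prometheus does after relabeling, defaults `instance` to `__address__` and drops labels starting with `__` (the `job` label is not modeled). `relabel`, `finalize` and the discovered label set are hypothetical. The constant `PersonNum` and `_tenant_id` rules in the final configuration are plain `replace` rules without source labels and behave the same way here.

```python
import re
from typing import Dict, List, Optional

_REF = re.compile(r"\$(?:\{(\w+)\}|(\d+))")


def _expand(template: str, match) -> str:
    """Expand $1 / ${1} / ${name}; unknown or unmatched groups become ""."""
    def sub(ref):
        key = ref.group(1) or ref.group(2)
        try:
            return match.group(int(key) if key.isdigit() else key) or ""
        except IndexError:
            return ""
    return _REF.sub(sub, template)


def relabel(labels: Dict[str, str], rules: List[dict]) -> Optional[Dict[str, str]]:
    """Apply keep/labelmap/replace rules; return None if the target is dropped."""
    labels = dict(labels)
    for rule in rules:
        action = rule.get("action", "replace")
        regex = re.compile(rule.get("regex", "(.*)"))
        replacement = rule.get("replacement", "$1")
        if action == "labelmap":
            # Copy every label whose *name* fully matches to the expanded name.
            for name, value in list(labels.items()):
                m = regex.fullmatch(name)
                if m:
                    labels[_expand(replacement, m)] = value
            continue
        joined = rule.get("separator", ";").join(
            labels.get(s, "") for s in rule.get("source_labels", []))
        m = regex.fullmatch(joined)
        if action == "keep":
            if m is None:
                return None
        elif action == "replace":
            if m is not None:
                value = _expand(replacement, m)
                if value:
                    labels[rule["target_label"]] = value
                else:
                    labels.pop(rule["target_label"], None)
        else:
            raise ValueError(f"action not modelled in this sketch: {action}")
    return labels


def finalize(labels: Dict[str, str]) -> Dict[str, str]:
    """instance defaults to __address__; labels starting with '__' are dropped."""
    out = {k: v for k, v in labels.items() if not k.startswith("__")}
    out.setdefault("instance", labels["__address__"])
    return out


RULES = [
    {"action": "keep",
     "source_labels": ["__meta_kubernetes_namespace", "__meta_kubernetes_service_name",
                       "__meta_kubernetes_endpoint_port_name"],
     "regex": "kube-system;etcd;http-metrics"},
    {"action": "labelmap", "regex": "__meta_kubernetes_service_label_(.+)"},
    {"action": "replace", "source_labels": ["__meta_kubernetes_namespace"],
     "target_label": "kubernetes_namespace"},
    {"action": "replace", "source_labels": ["__meta_kubernetes_service_name"],
     "target_label": "kubernetes_name"},
]

# Hypothetical discovered label set for the etcd Service's endpoint.
discovered = {
    "__address__": "172.23.16.106:2379",
    "__meta_kubernetes_namespace": "kube-system",
    "__meta_kubernetes_service_name": "etcd",
    "__meta_kubernetes_endpoint_port_name": "http-metrics",
    "__meta_kubernetes_service_label_component": "etcd",
}
print(finalize(relabel(discovered, RULES)))
# {'component': 'etcd', 'kubernetes_namespace': 'kube-system', 'kubernetes_name': 'etcd', 'instance': '172.23.16.106:2379'}
```

Note that `__address__` passes through untouched, so the scrape goes straight to the node IP on port 2379.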

Final scrape job configuration

```yaml
- job_name: K8S-ETCD
  honor_timestamps: true
  scrape_interval: 1m
  scrape_timeout: 10s
  metrics_path: /metrics
  scheme: https
  file_sd_configs:
  - files:
    - /u01/prometheus/target/nodes/K8S-ETCD_targets_hosts.json
    refresh_interval: 5m
  kubernetes_sd_configs:
  - api_server: https://172.23.16.106:8443
    role: endpoints
    bearer_token: <secret>
    tls_config:
      insecure_skip_verify: true
    namespaces:
      names:
      - default
      - kube-system
  bearer_token: <secret>
  tls_config:
    ca_file: /u01/prometheus/tls/ca/4cb22d45db4d490c85a56a2257211a3c.crt
    cert_file: /u01/prometheus/tls/cert/e5dff40c73a34587b8708b63e43965f8.crt
    key_file: /u01/prometheus/tls/key/c43bd44814114b30bb1662d98d74199f.key
    insecure_skip_verify: true
  relabel_configs:
  - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
    separator: ;
    regex: kube-system;etcd;http-metrics
    replacement: $1
    action: keep
  - separator: ;
    regex: __meta_kubernetes_service_label_(.+)
    replacement: $1
    action: labelmap
  - source_labels: [__meta_kubernetes_namespace]
    separator: ;
    regex: (.*)
    target_label: kubernetes_namespace
    replacement: $1
    action: replace
  - source_labels: [__meta_kubernetes_service_name]
    separator: ;
    regex: (.*)
    target_label: kubernetes_name
    replacement: $1
    action: replace
  - separator: ;
    regex: (.*)
    target_label: PersonNum
    replacement: hops
    action: replace
  - separator: ;
    regex: (.*)
    target_label: _tenant_id
    replacement: "0"
    action: replace
```

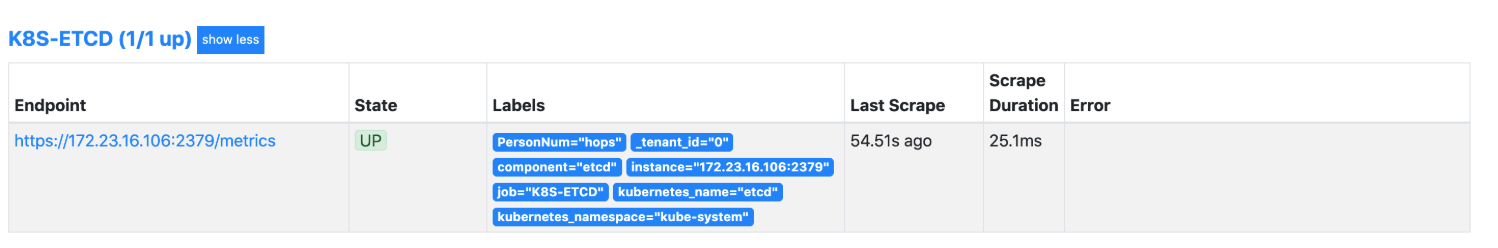

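Verification preview

Conclusion

When etcd endpoints are identified through service discovery:

- The CA certificate, client certificate and client key must be configured at the scrape-job level, because etcd requires mutual TLS (client certificate) authentication. The authentication and TLS settings at the scrape-job level are the ones used for the actual scrape; those under the Kubernetes service discovery configuration are only used to reach the API Server for service discovery.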